A little quiz: who spoke the following lines, and on what occasion?

…no plausible claim to intellectuality can possibly be made in the near future without an intimate dependence upon XXX. Those intellectuals who persist in their indifference, not to say snobbery, will find themselves stranded in a quaint museum of the intellect, forced to live petulantly, and rather irrelevantly, on the charity of those who understand the real dimensions of the revolution and can deal with the new world it will bring about.

Of course, the answer depends on what one substitutes for “XXX.” Readers who filled in, say, “national socialism” could have supposed that these words were spoken as a warning to German intellectuals who had not yet appreciated the glory of the Nazi revolution, by Josef Goebbels on the occasion of the book burning in Berlin on May 10, 1933. Readers could substitute “the ideas of the great leader and teacher” for “XXX” and leave open what particular revolution is being talked about. Leaders who come to mind, and whose names would render the quoted paragraph plausible, are, to name just a few: Karl Marx, General Pinochet, Stalin. The Germans, by the way, had a word for what intellectuals are here being warned to do: Gleichschaltung, which is translated as “bringing into line” or “coordination.”

But, implausible as it may seem at first glance, “XXX” in the quoted passage stands for “this new instrument,” meaning the computer. The authors of The Fifth Generation maintain that intellectuality, the creative use of the mind engaged in study and in reflection, will soon become inevitably and necessarily dependent on the computer. They are astounded that American intellectuals aren’t rushing to enlist in their revolution. They would expect, they say,

that American intellectuals (in particular those who still talk so reverently about the values of a liberal education, the sharing of the common culture, and so on, and so on) are eager to mold this new technology to serve the best human ends it possibly can.

Unfortunately, they’re not. Most of them haven’t the faintest idea what’s happening…they live in a dream world, irresponsible and whimsical, served by faithful old retainers (in the form of periodicals that are high in brow, even higher in self-importance, but low in circulation) that shamelessly pander to their illusions.

In this book Edward Feigenbaum, a professor of computer science at Stanford University and a cofounder of two commercial companies that market artificial intelligence software systems, and Pamela McCorduck, a science writer, give the reader an idea of what’s happening in the world of computers. They make the following claims:

First, certain American computer scientists have discovered that if computers are expected to intervene in some activity in the real world, then it would help, to say the least, if they had some knowledge of the domain of the activity in question. For example, computer systems designed to help make medical diagnoses had better know about diseases and their signs and symptoms.

Second, other American computer scientists have described designs of computers, “computer architectures,” that depart radically from the industry’s traditional design principles originally laid down by the pioneer computer scientist, John von Neumann. In orthodox, so-called von Neumann, machines, long chains of computations are organized as sequences of very small computational steps which are then executed serially, that is, one step after another. The new architectures allow computational chains to be decomposed into steps which can be executed as soon as the data for executing them is ready. They then don’t have to wait their turn, so to speak. Indeed, many steps can be executed simultaneously. Computation time is in a sense “folded” in such machines, which are consequently very much faster than their orthodox predecessors.

Third, still other computer scientists, mainly French and British, have created a computer language which they believe to be well suited for representing knowledge in computers in a form that lends itself to powerful logical manipulation. The Japanese hope that the conjunction of this computer language with the new architecture will allow ultrarapid computation of “inferences” from masses of stored knowledge. Fourth, these developments have taken place at a time of continuing dramatic progress in making computers physically smaller, functionally faster, and with increasing storage capacities. Computer hardware becomes constantly cheaper.

Finally, the Japanese, who already dominate the world market in consumer electronics, have seized on the resulting opportunity and decided to create entirely new and enormously powerful computer systems, the “fifth generation,” based on the developments described above. These systems, as the book jacket puts it, will be “artificially intelligent machines that can reason, draw conclusions, make judgments, and even understand the written and spoken word.”
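The second claim above, that steps can be executed as soon as their data is ready and that many can run at once, can be made concrete with a small sketch. It is only a toy simulation written for illustration, not a description of any actual fifth-generation design; the function name `run_dataflow`, the graph layout, and the sample numbers are all assumptions introduced here. Each "wave" lists the steps that no longer have to wait their turn and could, on suitable hardware, fire simultaneously.

```python
def run_dataflow(nodes, inputs):
    """Toy data-driven scheduler (hypothetical, for illustration only).

    nodes  : dict mapping a step name to (function, list of argument names)
    inputs : dict of values that are available from the start
    Returns (values, waves); each wave is the list of steps whose data
    was ready at the same moment and which could therefore run in parallel.
    """
    values = dict(inputs)
    pending = dict(nodes)
    waves = []
    while pending:
        # A step is ready once every one of its arguments has a value.
        ready = sorted(
            name for name, (_, args) in pending.items()
            if all(arg in values for arg in args)
        )
        if not ready:
            raise ValueError("no step is ready: cycle or missing input")
        # Compute the whole wave from the values known before the wave began.
        results = {
            name: pending[name][0](*(values[arg] for arg in pending[name][1]))
            for name in ready
        }
        values.update(results)
        for name in ready:
            del pending[name]
        waves.append(ready)
    return values, waves


# (a + b) * (c - d): the sum and the difference do not depend on each other.
graph = {
    "sum": (lambda a, b: a + b, ["a", "b"]),
    "diff": (lambda c, d: c - d, ["c", "d"]),
    "product": (lambda s, t: s * t, ["sum", "diff"]),
}
values, waves = run_dataflow(graph, {"a": 2, "b": 5, "c": 9, "d": 4})
print(waves)              # steps grouped by the moment they became ready
print(values["product"])  # final result of the chain
```

A serial machine would take three steps here, one after another; in the sketch the sum and the difference fall into the same wave, so the chain is "folded" into two.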

This appears to be a very ambitious claim. But not to seasoned observers of the computer scene who have long since learned to penetrate the foggy language of the computer enthusiasts. What have they boasted of before and how were such boasts justified in reality? One example will do: In 1958, a quarter of a century ago, Herbert Simon and Allen Newell, both pioneer computer scientists and founding members of the Artificial Intelligence (AI) movement within computer science, wrote that “there are now in the world machines that think, that learn and that create. Moreover, their ability to do these things is going to increase rapidly until—in the visible future—the range of problems they can handle will be coextensive with the range to which the human mind has been applied.”1

In other words, the most recent ambitions of the Japanese were already close to being realized according to leaders of the American artificial intelligence community a quarter of a century ago! All that remained to be done—and it would be done within the “visible future”—was to extend the range of the problems such machines would solve to the whole range of the problems to which the human mind has been applied.

That ambition remains as absurd today as it was twenty-five years ago. In the meanwhile, however, much progress has been made in getting computers to “understand” the written word and even some words spoken in very highly controlled contexts. Is the Japanese project then really not very ambitious? The answer depends on the standards of intelligent computer performance one adopts: by those Simon and Newell evidently held twenty-five years ago, not much remains to be done. If, on the other hand, words like “judgment,” “reason,” and “understanding” are to be comprehended in their usual meanings, then the prospects for anything like full success for the Japanese project are very dim. I believe the Japanese will build some remarkable hardware in the coming decade, but nothing radically in advance of American designs. However, in order to do all they intend to do in a single decade, the Japanese have organized a huge effort involving the close cooperation of Japan’s Ministry of International Trade and Industry (MITI) and the major Japanese firms in the electronics industry.

That, briefly, is “what’s happening.” But Feigenbaum and McCorduck do more than merely deliver the latest bulletins from the high-technology front. They also present their own vision of the world that is within our grasp, if only we would reach for it. Here are just a few of their speculative glimpses into that future:

Loneliness will have been done away with—at least for old people. There will be friendly and helpful robots to keep them company.

The geriatric robot is wonderful. It isn’t hanging about in the hopes [sic] of inheriting your money—nor of course will it slip you a little something to speed the inevitable. It isn’t hanging about because it can’t find work elsewhere. It’s there because it’s yours. It doesn’t just bathe you and feed you and wheel you out into the sun when you crave fresh air and a change of scene, though of course it does all those things. The very best thing about the geriatric robot is that it listens [emphasis in the original]. “Tell me again,” it says, “about how wonderful/dreadful your children are to you. Tell me again that fascinating tale of the coup of ’63. Tell me again…” And it means it. It never gets tired of hearing those stories, just as you never get tired of telling them. It knows your favorites, and those are its favorites too. Never mind that this all ought to be done by human caretakers; humans grow bored, get greedy, want variety.

Then there will be the “mechanical” doctor. But

if the idea of a mechanical doctor repels you, consider that not everyone feels that way. Studies in England showed that many humans were much more comfortable (and candid) with an examination by a computer terminal than with a human physician, whom they perceived as somehow disapproving of them.

Another helpful device that awaits us is the

intelligent newspaper [which] will know the way you feel and behave accordingly.

It will know because you have trained it yourself. In a none-too-arduous process, you will have informed your intelligent newsgathering system about the topics that are of special interest to you. Editorial decisions will be made by you, and your system will be able to act upon them thereafter…. It will understand (because you have told it) which news sources you trust most, which dissenting opinions you wish to be exposed to, and when not to bother you at all.

You could let your intelligent system infer your interests indirectly by watching you as you browse. What makes you laugh? It will remember and gather bits of fantasia to amuse you. What makes you steam? It may gather information about that, too, and then give you names of groups that are organized for or against that particular outrage.

We may suppose, by the way, such a system would be just as ready to help other agencies, say the police, gather information and obtain names of groups that may be for or against “outrages” that interest them.

But, much more importantly, what Feigenbaum and McCorduck describe here is a world in which it will hardly be necessary for people to meet one another directly. Not that this is a consequence they hadn’t foreseen and from which they would recoil. They have seen that aspect of the new world and they greet it as a welcome advance for mankind:

Despite the gray warnings about how the computer would inevitably dehumanize us, it has not. We are just as obstreperously human as ever, seizing this new medium to do better one of the things we’ve always liked to do best, which is to create, pursue, and exchange knowledge with our fellow creatures. Now we are allowed to do it with greater ease—faster, better, more engagingly, and without the prejudices that often attend face-to-face interaction [emphasis added].

A geriatric robot that frees old people from the murderous instincts of their children and is programmed to lie to them systematically, telling them that it understands their petty stories and enjoys “listening” to them. Mechanical doctors we can be utterly candid with and which won’t disapprove of us as human doctors often do. Technical devices in our own homes that gather information about us and determine to what group we ought to belong and which ones we should hate. Technical systems that permit us to exchange knowledge “engagingly” with our fellow creatures while avoiding the horror of having to look at them or be looked at let alone touched. This is the world Feigenbaum and McCorduck are recommending.

In fact, they haven’t told us the whole story. Professor Tohru Moto-oka of Tokyo University and titular head of the Japanese Fifth Generation project promises even more:

…first [Fifth Generation computers] will take the place of man in the area of physical labor, and, through the intellectualization of these advanced computers, totally new applied fields will be developed, social productivity will be increased, and distortions in values will be eliminated [emphasis added].

In existing totalitarian societies, “distortions in values” are eliminated by very unpleasant methods indeed. Professor Moto-oka promises a future in which computers will do that without anyone’s noticing, let alone feeling pain. This would be truly an advance for human civilization. Can it be done? It is a sign of the times that people will think this is a question about the technical power of computing systems. It is, however, a question of the willingness of populations to surrender themselves to the “conveniences” offered by technical devices of all sorts, particularly by information-handling machines. Will they be eager to make the Faustian bargain by which they will have the things and entertainments they want in exchange for their right and responsibility to determine their own values? Does that exchange sound so preposterous? Think of the enthusiasm with which millions of parents turn their children over to the television set in order to escape having to bother with them. Nor do most such parents exhibit any worries about what values their children might learn while watching hundreds of murders annually.

We are well on our way to the kind of world sketched here. Already, we are told, and it is undoubtedly true, that many people prefer to “interact” with computers. Schoolchildren prefer them to teachers, and many patients to doctors. No one seems to ask what it may be about today’s doctors and teachers, or with the situations in which they work, that causes them to come off second best in competition with computers. Perhaps it would help to learn in what way and why such interpersonal encounters fail, and then remedy whatever difficulties come to light. Perhaps the computer ought not to be wheeled into the classroom as a solution before the problems plaguing the schools, not merely their manifestations, have been identified.

The computer has long been a solution looking for problems—the ultimate technological fix which insulates us from having to look at problems. Our schools, for example, tend to produce students with mediocre abilities to read, write, and reason; the main thing we are doing about that is to sit kids down at computer consoles in the classrooms. Perhaps they’ll manage to become “computer literate”—whatever that means—even if, in their mother tongue, they remain functionally illiterate. We now have factories so highly computerized that they can operate virtually unmanned. Devices that shield us from having to come in contact with fellow human beings are rapidly taking over much of our daily lives. Voices synthesized by computers tell us what to do next when we place calls on the telephone.

The same voices thank us when we have done what they ask. But what does “the same voices” mean in this context? Individuality, identity, everything that has to do with the uniqueness of persons—or of anything else!—simply disappears. No wonder the architects of, and apologists for, worlds in which work becomes better and more engaging to the extent that it can be carried out without face-to-face interaction, and in which people prefer machines that listen to them to people, come to the conclusion that intellectuals are irrelevant figures. There is no place in their scheme of things for creative minds, for independent study and reflection, for independent anything.

These people see the technical apparatus underlying Orwell’s 1984 and, like children on seeing the beach, they run for it. I wish it were their private excursion, but they demand that we all come along. Indeed, they tell us we have no choice, unless, that is, we are prepared to witness “the end, the wimpish end, of the American century,” the conversion of the United States of America to an agrarian nation. Perhaps we ought to consider that and other alternatives to the Faustian bargain I mentioned. Increasing computerization may well allow us to increase the productivity of labor indefinitely—but to produce what? More video games and fancier television sets along with “smarter” weapons? And with people’s right to feed their families and themselves largely conditional on their “working,” how do we provide for those whose work has been taken from them by machines? The vision of production with hardly any human effort, of the consumption of every product imaginable, may excite the greed of a society whose appetites are fixed on things. It may be good that, in our part of the globe, people need no longer sort bank checks or mail by hand, or retype articles like this one. But how far ought we to extrapolate such “good” things? At what price? Who stands to gain and who must finally pay? Such considerations ought at least to be part of a debate. Are there really no choices other than that “we” win or lose?

Aside from whatever advantages are to be gained by living our lives in an electronic isolation ward, and aside also from the loss to “them” of a market “we” now dominate, what good reasons are there for mounting our own fifth generation project on a scale, as Feigenbaum and McCorduck recommend, of the program that landed our man on the moon? “If you can think of a good defense application,” Feigenbaum and McCorduck quote “one Pentagon official” as saying, “we’ll fund an American Fifth Generation Project.” “But,” Feigenbaum and McCorduck insist, “there are other compelling reasons for doing it, too.” Forty pages of text, not to mention appendices, indexes, and so on follow this remark, but no “other” reasons are given—other, that is, than “defense” reasons.

One chapter of the book is devoted to “AI and the National Defense.” However, the chapter is only six pages long, and is mainly a song of praise—perhaps gratitude is a more apt word—for the Pentagon’s “enlightened scientific leadership.” Laid on thick, the praise tends to betray its own absurdity:

Since the Pentagon is often perceived as the national villain, especially by intellectuals, it’s a pleasure to report that in one enlightened corner of it, human beings were betting taxpayers’ money on projects that would have major benefits for the whole human race.

Notwithstanding the characteristic swipe at intellectuals, the principal author of this book is a university professor. The corner mentioned is the Defense Department’s Advanced Research Projects Agency, DARPA, also often called just ARPA. Well, there is reason for workers in AI to be grateful to this agency. It has spent on the order of $500 million on computer research, and that certainly benefited the AI community—the artificial intelligentsia—enormously. But “major benefits for the whole human race”?

The military, however, has good reason to continue, just as generously as ever, to provide funds for work on the fifth generation, as Feigenbaum and McCorduck make clear:

The so-called smart weapons of 1982, for all their sophisticated modern electronics, are really just extremely complex wind-up toys compared to the weapons systems that will be possible in a decade if intelligent information processing systems are applied to the defense problems of the 1990s.

The authors make five points: first, they believe we should look “with awe at the peculiar nature of modern electronic warfare,” particularly at the fact that the Israelis recently shot down seventy-nine Syrian airplanes with no losses to themselves. “This amazing result was achieved,” they write, “largely by intelligent human electronic battle management. In the future, it can and will be done by computer.”

Second, we cannot afford to allow the “technology of the intelligent computer systems of the future…to slip away to the Japanese or to anyone else…. Japan, as a nation, has a longstanding casual attitude toward secrecy when it comes to technological matters.” Third, with the ever-increasing cost of military hardware, “the economic impact of an intelligent armaments system that can strike targets with extreme precision should be apparent…—fewer weapons used selectively for maximum strike capability.” Fourth, “it is essential that the newest technological developments be made available to the Defense Department.” Finally, “the Defense Department needs the ability to shape technology to conform to its needs in military systems.” Here, perhaps more than in any other argument of the book, we are close to what it’s “all about.”

A much shorter, and better, account of the Japanese Fifth Generation project appeared recently as a cover story of Newsweek magazine (July 4, 1983). The authors of that story reached pretty much the same conclusions about the reasons the United States should invest heavily in “these technologies”:

Once they are in place, these technologies will make possible an astonishing new breed of weapons and military hardware. Smart robot weapons—drone aircraft, unmanned submarines and land vehicles—that combine artificial intelligence and high powered computing can be sent off to do jobs that now involve human risk. [To the people on our side. It is the intention, of course, to expose the others to considerable risk.] “This is a very sexy area to the military, because you can imagine all kinds of neat, interesting things you could send off on their own little missions around the world or even in local combat,” says [the head of ARPA’s information-processing research office]. The Pentagon will also use the technologies to create artificial intelligence machines that can be used as battlefield advisors and superintelligent computers to coordinate complex weapons systems. An intelligent missile guidance system would have to bring together different technologies—real-time signal processing, numerical calculations and symbolic processing, all at unimaginably high speeds—in order to make decisions and give advice to human commanders.

People generally should know the end use of their labor. Students coming to study at the artificial intelligence laboratories of MIT, my university, or Stanford, Edward Feigenbaum’s, or the other such laboratories in the United States, should decide what they want to do with their talents without being befuddled by euphemisms. They should be clear that, upon graduation, most of the companies they will work for, and especially those that will recruit them most energetically, are the most deeply engaged in feverish activity to find still faster, more reliable ways to kill ever more people—Feigenbaum and McCorduck speak of the objective of creating smart weapons systems with “zero probability of error” (their emphasis). Whatever euphemisms are used to describe students’ AI laboratory projects, the probability is overwhelming that the end use of their research will serve this or similar military objectives.

The tone of the book, moreover, is as doubtful as its approach to the machines, projects, and people it seeks to promote. The authors, for example, speak of each other in the third person. If the first-person singular were substituted, some of the things they say would be absurd. For example: “‘I know,’ Feigenbaum said affably….” Suppose they had written, “‘I know,’ I said affably.” Nor is this a trivial point. The device is used in the service of reconstructing history. Even moderately well-informed computer professionals could be forgiven if, after reading this book, they came to a number of conclusions that are implausible to say the least: that Feigenbaum had single-handedly brought about a revolution in computer science; that the whole of the Japanese Fifth Generation program was designed by Japanese scientists informed largely by visits to Feigenbaum’s laboratory; and that IBM missed its chance to mount a similar, but American, project because they didn’t listen to Feigenbaum when, at their invitation, he told them the way things were.

Such effects are produced through promotional rhetoric. McCorduck, for example, remembers a lecture Feigenbaum had given at Carnegie-Mellon University. Herbert Simon, who had directed his thesis, was there, and

beside Simon was Allen Newell, another artificial intelligence great, and scattered about the room were some of the best and brightest in computer science in general and artificial intelligence in particular. But the Carnegie mood was skeptical….

Feigenbaum…threw out a challenge. “You people are working on toy problems,” he said…. “Get out into the real world and solve real-world problems.”

…It is sound science strategy to choose a simplified problem and explore it in depth to grasp principles and mechanisms that are otherwise obscured by details that don’t really matter. But Feigenbaum was arguing to the contrary, that here the details not only mattered; they make all the difference [emphasis in the original].

There were murmurings among the graduate students. Maybe Feigenbaum was right…. And not immediately, but later, Carnegie-Mellon came around….

Could that have been written with “I” substituted for “Feigenbaum”? Not without making the reader giggle. As it is, the account suggests the young Newton lecturing the Royal Society whose members are a little slow, but eventually come around—an impression conveyed throughout the book. Early on, the authors talk of the insight that

“computer” implies only counting and calculating, whereas [the computer] was, in principle, capable of manipulating any sort of symbol [emphasis in the original].

Though younger men eagerly pointed it out to them, that insight was simply unacceptable to many computer pioneers. John von Neumann, for example, who is widely acknowledged as a giant in computing, left as his last piece of published writing a long argument that computers would never exhibit intelligence.

In fact, John von Neumann was one of the first—if not the first—to recognize that commands to computers are merely symbols that could be manipulated by computers in the same way that numbers—or any other symbols—could be manipulated. He is responsible for a computer architecture, based on that insight, which is still a worldwide industry standard. But here von Neumann is cited as “an example” of a computer pioneer who didn’t accept his own insight when “young men,” if we are to believe the authors, “pointed it out” to him. As for von Neumann having written “a long argument that computers would never exhibit intelligence,” no such published document exists so far as I know. Von Neumann’s last published work is, in von Neumann’s own words, “an approach toward the understanding of the nervous system from a mathematician’s point of view.” It simply has nothing to do with “computers exhibiting intelligence.”2

Actually von Neumann had a standard answer for anyone who asked him whether computers could think, or be intelligent, and so on. He argued that if his questioner were to present him with a precise description of what he wanted the computer to do, someone could program the computer to behave in the required manner. Whether he thought there were some things in the human experience that could not satisfy his criterion, I simply don’t know. The position that every aspect of nature, most importantly of human existence, must be precisely describable, and its corollary that all human knowledge is sayable in words, is central to the credo to which all true believers in the limitless scope of artificial intelligence must hold.

As a computer scientist, I agree with Ionesco, who wrote, “Not everything is unsayable in words, only the living truth.” The Japanese, if one is to believe this book, appear to be true believers; von Neumann, perhaps, was not. It is unfortunate that Feigenbaum and McCorduck don’t supply a reference for their claim about him. Indeed, whenever criticism of one of Feigenbaum’s beliefs is alluded to, it remains anonymous. We are told, for example, that arguments against machine intelligence fall into four broad categories. These are briefly sketched, without mention, let alone identification, of serious proponents of such arguments or any hint where they might be found. Von Neumann’s “long argument” must, I suppose, fall into at least one of the four mentioned categories. Which one? We would suppose that an argument by such a giant in the discipline in question might well be decisive—or that Feigenbaum has developed a powerful counterargument. If he has, it remains well hidden.

It is as if someone trying to build a vehicle that is to travel at twice the speed of light categorizes the arguments against the possibility of success—but only in a caricature that makes them appear ridiculous on their face. And then remarks casually that Albert Einstein had a “long argument” for the impossibility of the proposed project, but gives no hint of the nature of the argument or where it may be found, and fails to supply the refutation that someone investing money in the project—say the taxpayer—might wish for.

Henry Kissinger, commenting on the Watergate scandal, once spoke of “the awfulness of events and the tragedy that has befallen so many people.” The use of the passive voice here renders invisible the various actors in the drama and their degrees of responsibility; it denies the role of human will in human affairs. Feigenbaum and McCorduck use similar devices to shield themselves from having to quote their potential critics or to give citations that interested readers might follow up. “Cogent arguments have been made that…. Others have argued that….” Such phrases recur throughout the book. The reader has no way of knowing the authors’ own standards of cogency. If the arguments matter, they ought to be stated, or we should be told where to find them.

To underline the importance of the computer as the “main artifact of the age of information,” Feigenbaum and McCorduck instruct us just wherein the importance of the computer lies:

[The computer’s] purpose is certainly to process information—to transform, amplify, distribute, and otherwise modify it. But more important, the computer produces information. The essence of the computer revolution is that the burden of producing the future knowledge of the world will be transferred from human heads to machine artifacts [emphasis in the original].

How are we to understand the assertion that the computer “produces information”? In the same way, presumably, as the statement that a coal-fired electric power station produces energy. But that would be a simple and naive falsehood. Coal-fired power stations transform energy, they do not produce it. Computers similarly transform information, generally using information-losing operations. For example, when a computer executes an instruction to add 2 and 5, it computes 7. But one cannot infer from “7” that it was the result of an addition, let alone what two numbers were involved. That information, though it may have been preserved elsewhere, is lost in the performance of the computation. Perhaps a more generous interpretation of what the authors are trying to say is that a computer produces information in the way a Polaroid camera produces—what? Information or a picture? But how much information is there in a photograph that wasn’t already in the world to be photographed? To be sure, the photographer selects from the world and composes aspects of it in order to create what will ultimately be encoded on film. But it is the photographer, the artist, who produces the art. The camera transforms images, but only those that were placed in front of it. I think Professor Feigenbaum has to explain just what information a computer produces and how.
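The point about the addition of 2 and 5 can be checked in a few lines. What follows is a minimal sketch, not anything taken from the book; the helper `preimages` and the search limit of 10 are assumptions chosen only to keep the example small. It lists every pair of small non-negative integers that a machine could have added to arrive at 7.

```python
def add(x, y):
    """The operation discussed above: two numbers go in, one comes out."""
    return x + y


def preimages(total, limit=10):
    """Hypothetical helper: every pair (x, y) with 0 <= x, y <= limit
    whose sum equals total. The limit only keeps the search finite."""
    return [
        (x, y)
        for x in range(limit + 1)
        for y in range(limit + 1)
        if add(x, y) == total
    ]


pairs = preimages(7)
print(pairs)       # all candidate inputs that yield 7
print(len(pairs))  # how many there are
```

The pair (2, 5) is among the candidates, but nothing in the result singles it out. The output alone does not even reveal that an addition took place, since 7 is equally the value of 7 times 1 or of 9 minus 2.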

But more important: how can the authors’ jump from the idea of producing information—never mind whether that is nonsense or not—to that of producing knowledge, indeed the “future knowledge of the world,” be justified? The knowledge that appears to be least well understood by Edward Feigenbaum and Pamela McCorduck is that of the differences between information, knowledge, and wisdom, between calculating, reasoning, and thinking, and finally of the differences between a society centered on human beings and one centered on machines.

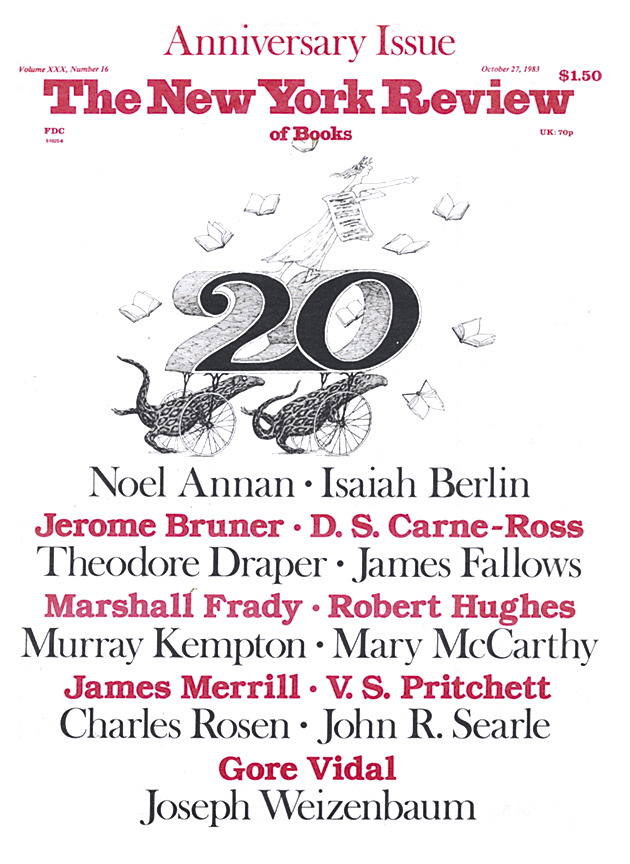

This Issue

October 27, 1983