It is so hard to make important decisions that we have a great urge to reduce them to rules. Every moral teacher or spiritual adviser gives injunctions about how to live wisely and well. But life is so complicated and full of uncertainty that rules seldom tell us quite what to do. Even the more analytically minded philosophers leave us in quandaries. Utilitarians start with Jeremy Bentham’s maxim, that we should strive for the greatest happiness of the greatest number of people. How do we achieve that? Kant taught us that we should follow just those rules of conduct that we would want everybody to follow. Few find this generalization of the golden rule a great help. It may seem that there is one kind of person or group that has no problem: the entirely selfish. Utilitarians find selfish people more interesting than one might expect, for it has been argued that the way to “maximize everyone’s utility” is to have a free market in which everyone acts in his own interests.

Even if you are entirely selfish and know what you want, you still live in a world full of uncertainties and won’t know for sure how to achieve what you want. The problem is compounded if you are interacting with another selfish person with desires counter to yours. Long ago Leibniz said we should model such situations on competitive games. Only after World War II was the idea fully exploited by John von Neumann. The result is called game theory, which quickly won a firm foothold in business schools and among strategic planners. In a recently reprinted essay, “What is Game Theory?” Thomas Schelling engagingly reminds us that we reason “game-theoretically” all the time.1 He starts with an example of getting on the train, hoping to sit beside a friend, or to evade a bore. Given some constraints about reserved seats and uncertainties about what the other person will do, should one go, he asks, to the dining car or the buffet car? You’ll see by the example that this is a vintage piece from 1967, when people were a little more optimistic about game theory than they are now.

The simplest games have two players, you and your friend, perhaps. Each has some strategies; go, for example, to the dining car or to the buffet car. Each has some beliefs about the world, about seating, reservations, and so forth, and each has some uncertainties. Each has some preferences—company over isolation, and dinner with wine, perhaps, over sandwich with beer. The “rational” player tries to maximize his utility by calculating the most effective way to get what he wants. Schelling’s first game is not even competitive; it becomes so when one is trying to avoid the bore.

Game theory is quite good for those games in which winners take everything that is staked, and losers lose all that they stake. The solution—the optimum strategy—is crisply defined for some classes of games. Unfortunately things begin to go awry for more interesting cases. Game theory has not been so good for games in which all parties stand to gain by collaboration. Indeed one of the advantages of moral philosophy over game theory is that moralists give sensible advice to moral agents while game theory can give stupid advice to game theorists.

By now the classic example of this is a little puzzle, invented in 1950: the prisoners’ dilemma. It is an irritating puzzle because problems taking the same general form seem to recur, time and again, in daily life. Here is the classic, abstract, and impossible tale that gives the dilemma its name. Two thieves in cahoots have been caught and await trial. They are locked in isolated cells. The prosecutor goes to each offering a deal. “Confess and implicate the other: if he is meanwhile maintaining innocence, we shall set you free and imprison him for five years. If neither of you confesses, you will both get two years for lack of better evidence. If you both confess, we have both of you, and will give each of you a slightly mitigated four-year term.”

Thief A reasons thus: if thief B does not confess, I go free by confessing. If thief B does confess but I do not, I get five years in jail. So whatever B does, I had better confess and implicate B. Thief B reasons identically. So both confess, and both get four years. Had both remained silent, each would have got only two years in jail. Game theory teaches each thief to act in his own worst interests.
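Thief A's reasoning can be checked mechanically. The sketch below is only an illustration: the table encodes the prosecutor's offer as years in jail, and the names `YEARS` and `best_reply` are invented here, not taken from any library.

```python
# Years in jail for (my_move, other_move), taken from the prosecutor's offer.
# All names here are illustrative assumptions.
CONFESS, SILENT = "confess", "silent"

YEARS = {
    (CONFESS, SILENT): 0,   # I implicate him, he keeps quiet: I go free
    (SILENT, CONFESS): 5,   # he implicates me, I keep quiet: five years
    (SILENT, SILENT): 2,    # neither confesses: two years each
    (CONFESS, CONFESS): 4,  # both confess: four years each
}

def best_reply(other_move):
    """Return the move that minimizes my own sentence, given the other's move."""
    return min((CONFESS, SILENT), key=lambda mine: YEARS[(mine, other_move)])

# Confessing is the best reply whatever the other thief does ...
replies = {other: best_reply(other) for other in (CONFESS, SILENT)}
print(replies)

# ... yet two confessors serve longer than two silent thieves would.
print(YEARS[(CONFESS, CONFESS)], "years each, against", YEARS[(SILENT, SILENT)])
```

Because `best_reply` returns the same move for either choice by the other thief, confessing is what game theorists call a dominant strategy, and that is the whole of the trap.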

Perhaps the two thieves will take an ethics course during their four years in prison. If they read Bentham, they may still be in doubt how to act so as to maximize the greatest happiness of the greatest number. Since utilitarians are the ancestors of game theorists, they may be in a dilemma, but at first sight maximizing both players’ happiness means not confessing. The golden rule is more transparent: A should act as he hopes B would act; if B follows the same rule, neither confesses and they get a two-year sentence. In their ethics course the thieves will also learn Kant’s categorical imperative: follow only those rules that you can sincerely wish every agent to follow. Kant thought that defined the very essence of the rational moral agent. Certainly it looks rational, while game theory looks irrational. Game theory advises the thieves to spend four years in jail, while the categorical imperative recommends two years.


Kant and Bentham act on fundamentally different moral principles, but had Kant and Bentham, per impossibile, been the two thieves, they would have had the shorter sentence. You may wonder why the business schools teach game theory rather than ethics. The answer is plain. Kant does not know that Bentham is on the other side of the wall. If it is not Bentham but a game theorist, the villain will double-cross and go scot free, while Kant gets what, in the game theoretic jargon, is called the “sucker’s payoff,” namely five years in jail. Not that “sucker” is my choice of words for a philosopher who spends five years in jail for acting as a rational human being.

Decision problems in the form of the prisoners’ dilemma are common enough. They require that two agents—human beings, nations, corporations, or bacteria—can benefit from cooperation. However one of the two could profit more from “defecting”—not cooperating—when the other is trying to cooperate. If one succumbs to this temptation, he profits while the other loses a lot. But if both succumb, both incur substantial losses (or at any rate gain less than they would have through mutual cooperation).

One chapter of Robert Axelrod’s The Evolution of Cooperation uses a historical example based on a recent book by Tony Ashworth, which describes the trench warfare in which German battalions were pitted against French or British ones.2 The opposed units are in a prisoners’ dilemma. The soldiers themselves would rather be alive, unharmed and free, than dead, maimed, or captured. They also prefer victory to stalemate, but stalemate is better than death or defeat.

Now if neither side shoots there will be a stalemate, but everyone will be alive and well. But if one side holds its fire while the other shoots, the side that holds its fire will be dead or ignominiously vanquished, while the other will win. If both sides shoot, most everyone will be dead, and in trench warfare, no one will win.

What to do? Were the soldiers on both sides to follow the insane motto of my maternal clan, Buaidh no bas (“Victory or death”), there would be much death and no victory. Game theorists on both sides would also, in one encounter, act like a demented highland regiment, and be dead. Ashworth’s book proves, however, what has long been rumored. Private soldiers (as opposed to staff officers) behaved like rational human beings. For substantial periods of the Great War, much cooperative nonshooting arose spontaneously, even though both sides of the line were, presumably, acting selfishly with no serious concern for the other side.

How were soldiers able to act in the best interests of all? German Kantians pitted against British Benthamites? Not at all. Axelrod’s answer is that trench warfare is not a one-shot affair. Every morning you are supposed to get up and shell the other side. Every day there dawns a new prisoners’ dilemma. This is a repeated or “iterated” prisoners’ dilemma, about which game theorists have a curious story to tell.

In Ashworth’s history there was seldom any outright palaver between opposed battalions. Protocols emerged slowly, starting, for example, with neither side firing a shot at dinner time. This silence spread through days and months: effective silence, not actual silence, for the infantrymen and artillery took care to simulate action so they would not be court-martialed and shot by their own side. According to Ashworth, staff officers, worried at the loss in morale caused by no one being killed, finally invented raids and sorties which, according to Axelrod, destroyed the logical structure of the prisoners’ dilemma.

How could such well-understood rational conventions about not shooting arise, when opposed sides did not arrange any explicit treaty? The emergence of such cooperation is one topic of Axelrod’s book. The secret is iterating the prisoners’ dilemma: players are caught in the same situation over and over again, day in, day out, facing roughly the same opponent on each occasion. Repetition makes a difference to people trapped in repeated dilemmas of the same form.


The difference is not that pure game theory sees a way out of its dilemma thanks to repeated plays. There is a rather abstract argument that two game theorists, equipped with a complete strategy for all possible ways in which a sequence of games might go, are still bound to defect on every single game. It arises this way. Suppose both players know that exactly 200 games will be played. Then they reason on the final, 200th, game, as they would on a one-shot affair: both defect. But then on the 199th game, both know that at the next game both will defect, so both defect at 199 as well—and so on. Knowing that there are just 200 games, they defect at every game. The same argument works for any finite number of games (so long as we do not “discount” very distant games overmuch). Hence no matter what finite number of games are played, the game theorists “ought” to defect at every game. In technical jargon, “defect always” is the equilibrium solution to the class of finitely repeated prisoners’ dilemmas.
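The backward argument can be traced step by step. What follows is a rough sketch, not a general solver: it reuses the jail terms from the thieves' story (silence counts as cooperation, confession as defection), and the function names are invented for the illustration.

```python
C, D = "cooperate", "defect"

# Years in jail for (my_move, other_move); lower is better.
YEARS = {(C, C): 2, (C, D): 5, (D, C): 0, (D, D): 4}

def stage_best_reply(other_move):
    """Best move in a single game, given what the other player does."""
    return min((C, D), key=lambda mine: YEARS[(mine, other_move)])

def backward_induction(n_games):
    """Solve one player's plan from the last game back to the first."""
    plan = {}
    settled_years = 0  # years already fixed by the games after the current one
    for game in range(n_games, 0, -1):
        # Play in all later games is already settled, so no move made now
        # can change settled_years: only the present game is at stake.
        replies = {stage_best_reply(other) for other in (C, D)}
        assert len(replies) == 1  # the same reply to either move: dominant
        plan[game] = replies.pop()
        # The other player reasons identically and makes the same move.
        settled_years += YEARS[(plan[game], plan[game])]
    return plan, settled_years

plan, total_years = backward_induction(200)
print(set(plan.values()), total_years)
```

The loop never finds a game at which cooperation pays, because at every step the future has already been written off.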

Whatever one thinks of this argument, it is entirely remote from what real people do. In a one-shot game many people cooperate at once. There are, in most experiments, at least as many initial cooperators as initial exploiters. There is also a fairly regular pattern when people are asked to play prisoners’ dilemmas against each other many times. People do act differently, but typically there is a fairly high chance of cooperation at the start, which declines as one tries to exploit another, and mutual distrust develops. But after a while people realize this is stupid, and on average they slowly begin to cooperate again. There is now an enormous literature on the psychology of the dilemma, whose results do not always cohere, but that gives a rough-and-ready summary of many psychological investigations.

People do not enter a game with a total strategy any more than chess players have a total strategy. We might ask: could there be a best simple-minded strategy for use in any circumstance? No, for just as in chess, it depends who you are playing. If your opponent is a villain who has decided always to defect, you would do best if you, like him, never cooperate. If your opponent is a Kantian who cooperates so long as you cooperate, then you should cooperate too. But we can at least ask another question profitably: in an environment of human beings, each with his different manageable strategy, could some strategies fare better than others? Axelrod made the interesting discovery that there is a very clear affirmative answer to this question.

To measure the quality of different strategies we need a system of scoring. Give each player three points if both cooperate and one point each if both defect. If one player defects while the other cooperates, the exploiter gets five points and the exploited gets zero. Now let us try to see what happens in real life. In order to simulate what could happen, game theorists tend to inject random elements into their strategies (on the ground that an opponent cannot outwit the witless) so strategies will have to be run against one another in many different sequences in order to even out the chance effects of good and bad luck. A few years ago a serious analysis would have been impracticable, but nowadays computer simulation makes it easy.

Axelrod composed a first tournament by asking experts to tell him how they would play the iterated prisoners’ dilemma, if they were to use one set strategy against a number of other experts, each with his own pet strategy. Fourteen professional game theorists responded. Their systems were matched against one another 200 times. The clear winner was the simplest. It had been sent in by Anatol Rapoport, who had studied it in psychological work on the prisoners’ dilemma twenty years ago.3 In confronting another player, his system cooperates on the first game. On each later game, it simply mimics what the other player did on the last game. If the other just cooperated, cooperate. If he just defected, defect. You see why Rapoport called this strategy “tit-for-tat.” When he plays a villain who always defects, he defects after the first game. When Rapoport plays a fellow tit-for-tatter, they both cooperate forever.
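Tit-for-tat is short enough to write out in full. The following is a minimal sketch, assuming that a match consists of 200 games scored with the three, one, five, and zero points given earlier; the function names and the `play_match` harness are invented here and are not Axelrod's tournament code.

```python
C, D = "C", "D"

# (points for first player, points for second player) for each pair of moves.
POINTS = {(C, C): (3, 3), (D, D): (1, 1), (D, C): (5, 0), (C, D): (0, 5)}

def tit_for_tat(other_history):
    """Cooperate on the first game, then copy the other player's last move."""
    return other_history[-1] if other_history else C

def always_defect(other_history):
    """The villain: defect whatever has happened."""
    return D

def play_match(rule_a, rule_b, rounds=200):
    """Run one match and return the two total scores."""
    seen_by_a, seen_by_b = [], []  # what each player has seen the other do
    score_a = score_b = 0
    for _ in range(rounds):
        move_a, move_b = rule_a(seen_by_a), rule_b(seen_by_b)
        gain_a, gain_b = POINTS[(move_a, move_b)]
        score_a += gain_a
        score_b += gain_b
        seen_by_a.append(move_b)
        seen_by_b.append(move_a)
    return score_a, score_b

print(play_match(tit_for_tat, always_defect))  # (199, 204)
print(play_match(tit_for_tat, tit_for_tat))    # (600, 600)
```

Against the villain, tit-for-tat is exploited exactly once and then trades defections for the remaining 199 games; against its own kind it collects three points at every game.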

Rapoport won by not beating anyone in particular. Indeed he can never get more points than his opponent. You might think that a born nonwinner is a born loser. Not so. The point is that less generous systems may be mutually destructive. Say only three players are in the field: Rapoport, A, and B. Against Rapoport, the more evil A and B do slightly better, perhaps, but against each other both set up a pattern of mutual defection which brings down both their scores. Thus Rapoport’s score over many matches against each of A and B is greater than A’s total score (points against Rapoport plus points against B). Tit-for-tat loses games but wins the day.
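The three-player field can be played out in a few lines. This is a sketch under loud assumptions: the "more evil" rule given to A and B (`sneaky`, which opens and closes every match with a defection and otherwise copies the other player) is a hypothetical strategy made up for the illustration, and a match is taken to be 200 games at three, one, five, and zero points.

```python
from itertools import combinations

C, D = "C", "D"
ROUNDS = 200
POINTS = {(C, C): (3, 3), (D, D): (1, 1), (D, C): (5, 0), (C, D): (0, 5)}

def tit_for_tat(other_history):
    """Cooperate first, then copy the other player's last move."""
    return other_history[-1] if other_history else C

def sneaky(other_history):
    """Hypothetical 'more evil' rule: defect on the first and last games,
    otherwise copy the other player's last move."""
    if not other_history or len(other_history) == ROUNDS - 1:
        return D
    return other_history[-1]

def play_match(rule_a, rule_b):
    """Run one match of ROUNDS games and return the two total scores."""
    seen_by_a, seen_by_b = [], []
    score_a = score_b = 0
    for _ in range(ROUNDS):
        move_a, move_b = rule_a(seen_by_a), rule_b(seen_by_b)
        gain_a, gain_b = POINTS[(move_a, move_b)]
        score_a += gain_a
        score_b += gain_b
        seen_by_a.append(move_b)
        seen_by_b.append(move_a)
    return score_a, score_b

field = {"Rapoport": tit_for_tat, "A": sneaky, "B": sneaky}
totals = dict.fromkeys(field, 0)
for x, y in combinations(field, 2):  # every pair meets once
    score_x, score_y = play_match(field[x], field[y])
    print(f"{x} {score_x} : {score_y} {y}")
    totals[x] += score_x
    totals[y] += score_y
print(totals)  # {'Rapoport': 992, 'A': 701, 'B': 701}
```

Rapoport loses both of his matches, 496 to 501, and still finishes well ahead, because A and B earn only 200 points each from their match with one another.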

Axelrod noticed that some programs that had not been submitted would have done better than any that were sent in, better even than tit-for-tat. He organized a larger tournament, advertising both for experts and also in popular computer magazines. He got sixty-three entries, including one from a boy aged ten. All competitors were given Axelrod’s analysis of which programs did best in the first tournament and which might have done better. A couple of players sent in the programs that would have beaten tit-for-tat in the first tournament. Rapoport sent in tit-for-tat again. He won, even against those programs that would have beaten him last time. (This reminds us that success of a system depends on the other systems in the tournament.)

A few technicalities should be mentioned. It is assumed that a program can recognize which player it is playing in a given game. This recognition can be very limited: tit-for-tat has a tiny memory which recalls only what the other player did at the last game. Secondly, economists considering a long series of games will commonly discount the future: a bird in the hand is worth two in the bush. But for the iterated prisoners’ dilemma it is important that the discount not be too big. A 100 percent discount means you attend only to the present game, and are back with the disastrous one-shot prisoners’ dilemma. In scoring the tournament it was important to have a sensible discount factor. In Axelrod’s theorems, the discount factor often appears as a key factor.

That said, we can see what did best in the tournaments. Of the top fifteen players in tournament 2, only one was ever the first to defect. Of the bottom fifteen, only one was never the first to defect. Axelrod calls systems that do not defect first the “nice” ones. Nice ones do best. Moreover the winners don’t hold grudges. Once an opponent quits defecting, successful systems start cooperating. However they do react quickly to defection, soon defecting themselves. Finally, successful systems are fairly simple: you can see quite soon what they are doing.

Notice that both Kant and Bentham commend rules like these. Bentham wants to maximize the greatest happiness of the greatest number of players. That is exactly what tit-for-tat does. Kant wants a maxim that he would like everyone to follow. In a one-shot prisoners’ dilemma he cooperates, but the situation is more complicated for repeated games. He is not committed to blind cooperation in the face of persistent defection. Even someone aspiring to sanctity, who personally chooses to turn the other cheek, need not will on others, who live in a world with villains, such painful virtue. Punishment has a well-known place in Kant’s scheme (although unlike Bentham he did not actually design penitentiaries). Kant will urge a maxim that punishes villains, but only enough to get them to quit villainy as soon as they can. Tit-for-tat is a strategy of this sort. So also is tit-for-two-tats—which defects only after two defections by the other player. Tit-for-two-tats is one rule that would have beaten tit-for-tat in tournament 1, but it lost to tit-for-tat in tournament 2. There is no Kantian answer (so far as I can see) for which of the two rules is preferred. Nor was there an empirical answer in Axelrod’s experiments; nor was there much to choose between them anyway.

Axelrod’s papers published in advance of the book under review have attracted wide admiration. Many commentators express astonishment at the fact that the simplest system won both tournaments. I am not astonished that a system (or family of systems) mandated by several thousand years of moral philosophy should outwit a band of game theorists and computer enthusiasts. One surprise is that the ten-year-old did not win, but perhaps innocence is nowadays lost before the age of ten. We can take solace in the fact that the winner, Anatol Rapoport, is now a professor of peace studies.

Of course saying that Kant and Bentham might have chosen tit-for-tat in this entirely artificial tournament does not mean it fits real life that well. Nor would a student of war and peace be too enthusiastic about traditional morality. Even the golden rule desperately needs to take into account Bernard Shaw’s entry in his Maxims for Revolutionists: “Do not do unto others as you would they should do unto you. Their tastes may not be the same.”

Axelrod’s tournaments and the story of the trenches illustrate the emergence of cooperation. His title speaks of evolution. To see why, let us imagine a lot of players who follow the same rule: call them natives. Suppose a new player enters, or an old one changes rules (mutation). The new rule is said to invade the other if a player using it can get a higher score with a native than the natives themselves can when they are playing natives.

Natives are collectively stable if they cannot be invaded by a player with another system. A society of total defectors is collectively stable. It lives in misery, but cannot be invaded by another system because it brings any rival down to its level of worthless existence, or worse. On the other hand, a tit-for-tat society is also collectively stable, so long as it does not discount the future too much.
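How much discounting is too much can be explored numerically. The sketch below rests on assumptions of its own: each game is taken to count for a fraction `w` of the one before it (so `w = 0` is the 100 percent discount), the series is treated as unending, and only two simple would-be invaders are tried against a native population of tit-for-tatters. It is an illustration, not a proof of stability.

```python
# Tournament points: reward, punishment, temptation, sucker's payoff.
R, P, T, S = 3, 1, 5, 0

def native_score(w):
    """Tit-for-tat meeting tit-for-tat: 3 points at every game."""
    return R / (1 - w)

def always_defect_score(w):
    """Against tit-for-tat: exploit once (5), then mutual defection (1)."""
    return T + w * P / (1 - w)

def alternator_score(w):
    """Defect, cooperate, defect, ... against tit-for-tat: 5, 0, 5, 0, ..."""
    return (T + w * S) / (1 - w * w)

def can_invade(w):
    """True if either newcomer outscores natives playing natives."""
    best_newcomer = max(always_defect_score(w), alternator_score(w))
    return best_newcomer > native_score(w)

for w in (0.9, 0.7, 0.5):
    print(w, can_invade(w))
```

With these points the two newcomers fail at `w = 0.9` and `w = 0.7`, but the alternator gets through at `w = 0.5`, where the future counts for too little to make retaliation bite.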

One tit-for-tatter cannot invade a society of villainous defectors, but a group of tit-for-tatters can. This is because although tit-for-tatters do slightly worse than villains when playing villains, they do better, in encounters with each other, than villains meeting villains. Hence their total score is greater, and the invasion can go forward.
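The arithmetic of invasion by a group is simple enough to set out. This is a sketch under stated assumptions: matches of 200 games at three, one, five, and zero points, newcomers so few that natives almost always meet natives, and a hypothetical parameter `p` for the fraction of a newcomer's matches played against fellow newcomers.

```python
ROUNDS = 200

# Match totals over 200 games with the 3/1/5/0 scoring.
TFT_VS_TFT = 3 * ROUNDS                  # 600: cooperation throughout
TFT_VS_DEFECTOR = 0 + 1 * (ROUNDS - 1)   # 199: exploited once, then 1 per game
DEFECTOR_VS_DEFECTOR = 1 * ROUNDS        # 200: mutual defection throughout

def newcomer_average(p):
    """Average match score of a tit-for-tatter who meets fellow
    newcomers in a fraction p of its matches and villains otherwise."""
    return p * TFT_VS_TFT + (1 - p) * TFT_VS_DEFECTOR

def cluster_invades(p):
    """Newcomers invade if they outscore natives playing natives."""
    return newcomer_average(p) > DEFECTOR_VS_DEFECTOR

print(cluster_invades(0.0))   # a lone tit-for-tatter: False
print(cluster_invades(0.05))  # one match in twenty among friends: True
```

On these numbers the break-even point is `p = 1/401`, so the newcomers need only a very small share of their encounters to be with one another.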

That is one key to The Evolution of Cooperation. The evolutionary story continues if we think of a long sequence of tournaments. We put into round 3 all the systems used in round 2, but in numbers proportional to the scores of those systems in round 2. Thus a high score in 2 gives more offspring of the system in the next generation. As this process is continued, the less successful systems become rare and then extinct. Cooperation evolves dramatically. Recall the one nasty system that finished in the top fifteen of tournament 2. It gets good scores, as it happens, by exploiting other nasty systems. But as those other systems gradually drop out, there are fewer to exploit, and its own score goes down. Thus after a couple of hundred generations even the most successful noncooperator becomes an endangered species.
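The succession of generations can be imitated with a toy population. The sketch below is not Axelrod's simulation: the three rules, the equal starting shares, and the updating step (each rule's share multiplied by its average score against the current mix, its own kind included) are assumptions chosen for brevity.

```python
C, D = "C", "D"
POINTS = {(C, C): (3, 3), (D, D): (1, 1), (D, C): (5, 0), (C, D): (0, 5)}

# Each rule sees only the other player's past moves.
RULES = {
    "tit_for_tat": lambda seen: seen[-1] if seen else C,
    "always_defect": lambda seen: D,
    "always_cooperate": lambda seen: C,
}

def match_score(rule_a, rule_b, rounds=200):
    """Points earned by rule_a over one match against rule_b."""
    seen_by_a, seen_by_b, score_a = [], [], 0
    for _ in range(rounds):
        move_a, move_b = rule_a(seen_by_a), rule_b(seen_by_b)
        score_a += POINTS[(move_a, move_b)][0]
        seen_by_a.append(move_b)
        seen_by_b.append(move_a)
    return score_a

# SCORE[a][b] is what rule a earns in a match against rule b.
SCORE = {a: {b: match_score(RULES[a], RULES[b]) for b in RULES} for a in RULES}

def next_generation(shares):
    """Offspring in proportion to each rule's average score this round."""
    fitness = {a: sum(shares[b] * SCORE[a][b] for b in RULES) for a in RULES}
    total = sum(shares[a] * fitness[a] for a in RULES)
    return {a: shares[a] * fitness[a] / total for a in RULES}

shares = {name: 1 / len(RULES) for name in RULES}
for generation in range(200):
    shares = next_generation(shares)
print({name: round(share, 3) for name, share in shares.items()})
```

In this toy world the defectors prosper at first by feeding on the unconditional cooperators, then dwindle as their prey grows scarce, while tit-for-tat ends up as the largest group.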

One chapter in Axelrod’s book, written jointly with W.D. Hamilton, abounds in speculative applications to biology. It is especially tempting to study parasites and symbiosis in this way, and certainly there are many attractive modelings of cooperative behavior which more traditional evolutionary theory has difficulty in explaining. Bacteria that are benign until the host gets sick work like this: when the host is healthy, the discount rate on future interactions with the host is low—live and let live. But when the host gets sick, future interactions are heavily discounted, and the bacteria defect, finishing off the host for good.

Should you be skeptical of bacteria using game theory, remember that, as Axelrod and Hamilton say, “an organism does not need a brain to employ a strategy.” A connected point has been forcibly expressed by John Maynard Smith, whose applications of game theory to evolutionary biology are fundamental:

It has turned out that game theory is more readily applied to biology than to the field of economic behavior. There are two reasons for this. [One is that “utility” makes more sense in biology than economics.] More importantly, in seeking the solution of a game, the concept of human rationality is replaced by that of evolutionary stability. The advantage here is that there are good theoretical reasons to expect populations to evolve to stable states, whereas there are grounds for doubting whether human beings always behave rationally.4

“And if bacteria can play games,” says Axelrod, “so can people and nations.” Shall we then amend Aristotle to say that the bacterium, not man, is the rational animal?

Maybe “man” is part of the problem. Game theory has the quality of locker-room conversation, with its “sucker’s payoff,” its “invasions,” and so forth. Axelrod’s volunteer players in both his tournaments were, with one exception, men. He takes as his examples men in trenches, as mentioned, and congressmen, evolving cooperation from the early days of the republic until 1900.

I do not mean to imply that women play repeated prisoners’ dilemma games better than men. There has been substantial confirmation of Rapoport’s findings of twenty years ago, that within the confines of an iterated prisoners’ dilemma, women in certain ways play less well than men: that is, they cooperate less. The form of the experiment is simple. We can have men play against men, women against women, mixed singles, people against computers, people against people, neither knowing the sex of the other, and so forth. There is no substantial difference between the ways in which the sexes play a one-shot dilemma. In iterated dilemmas M-M and F-F interactions have roughly the same curves: on average, there is an initial decline in cooperation, followed by an increase in trust. Naturally the sooner trust is regained, the greater the score of both players. Males against males apparently resume trust much more quickly than females against females.

We should jump to no conclusions from this. For example suppose we do not tell the players the initial formal specifications of the game (the “matrix” of payoffs). Although these can quickly be guessed at, male/male performance drops to female/female performance, which is unaffected. So it may be suggested that inattention to formal specifications makes the difference. Another conclusion to jump at is that women simply get bored playing this stupid game, while men enjoy it—so long as there is a tidy artificial format in which to play it.

That accords with the remarks of Carol Gilligan and others, who have noticed for example how girls describe their relations to others very differently from boys. Their solutions to problems take up the whole web of human life. In one life-and-death story, eleven-year-old Jake “discerns the logical priority of life and uses that logic to justify his choice.” He locates truth in mathematics “which he says is ‘the only thing that is totally logical.’” In contrast eleven-year-old Amy sees “in the dilemma not a math problem with humans but a narrative of relationships that extends over time.”5 In part the girls collaborate by trying to change the rules of the game to suit the human beings caught up in it. One of Gilligan’s examples is this: The girl said, “Let’s play next-door neighbors.” “I want to play pirates,” the boy replied. “Okay,” said the girl, “then you can be the pirate who lives next door.”6 We certainly need to change games more often than we need optimum strategies.

“The condition of man,” wrote Hobbes, “is a condition of war of everyone against everyone.” From this false premise he deduced that we require a sovereign authority, a veritable leviathan, to keep us in order. It is a remarkable achievement of Axelrod to show that from Hobbes’s assumption about “man” we could still evolve cooperation without leviathan. We could do it by processes we could share with bacteria, whose lives are even more nasty, more brutish, and shorter than man’s. It is instructive that from a Hobbesian assumption Axelrod can deduce the following maxims as “advice for participants and reformers”: “Don’t be envious”; “Don’t be the first to defect”; “Reciprocate both cooperation and defection”; “Don’t be too clever.” That is the right advice, if you are forced to play out a prisoners’ dilemma game many times. But we should also turn our thoughts to being more rational than bacteria. Perhaps some ten-year-old girl can help us out of the game. I have done my small bit. At least I have changed the name of the game. It has hitherto been known as the prisoner’s dilemma. I have been calling it the prisoners’ dilemma. The two prisoners are in it together.

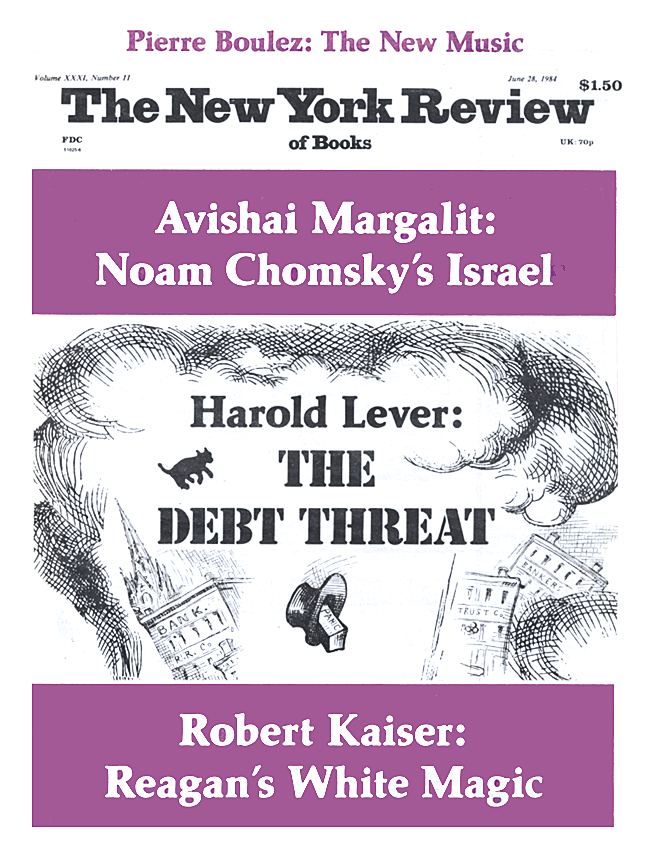

This Issue

June 28, 1984

1. Thomas Schelling, “What is Game Theory,” in Choice and Consequence (Harvard University Press, 1984), pp. 213–242.

2. Tony Ashworth, Trench Warfare 1914–1918: The Live and Let Live System (Holmes and Meier, 1980).

3. Anatol Rapoport and Albert M. Chammah, Prisoner’s Dilemma: A Study in Conflict and Cooperation (University of Michigan Press, 1965).

4. John Maynard Smith, Evolution and the Theory of Games (Cambridge University Press, 1982), p. vii.

5. Carol Gilligan, In a Different Voice: Psychological Theory and Women’s Development (Harvard University Press, 1983), pp. 26, 28.

6. Carol Gilligan, “Remapping Development: the Power of Divergent Data,” in Value Presuppositions in Theories of Human Development, edited by L. Cirollo and S. Wapner, to be published by Erlbaum Associates, Hillsdale, New Jersey.