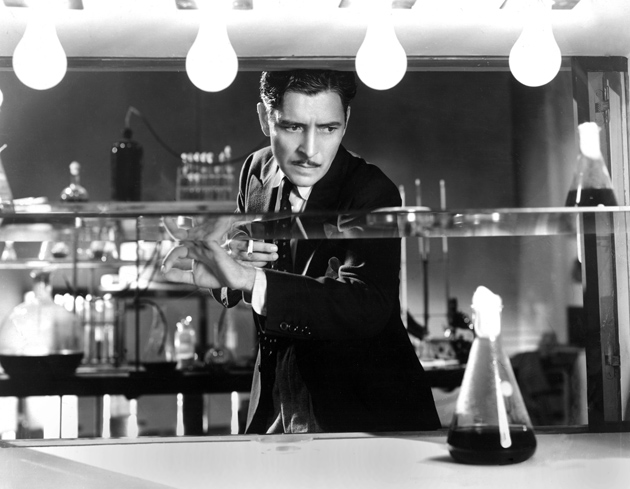

Everett Collection

Ronald Colman as a doctor torn between conflicting goals in medicine and scientific research in the 1931 film Arrowsmith, adapted from Sinclair Lewis’s 1925 novel. According to Steven Shapin in The Scientific Life, ‘Generations of American scientists traced their conceptions of scientific research and their vocation for science to their youthful reading of Arrowsmith.’

Since science is the defining intellectual enterprise of our age, it would seem worth understanding who the scientist is. This is the task Steven Shapin takes on in his latest book, The Scientific Life. Shapin’s book represents something of a departure from his previous efforts. The Franklin L. Ford Professor of the History of Science at Harvard University, Shapin is perhaps best known for two works on seventeenth-century science, A Social History of Truth (1994) and The Scientific Revolution (1996). He is also coauthor, with Simon Schaffer, of Leviathan and the Air-Pump (1985), a fascinating account of debates between Robert Boyle and Thomas Hobbes over the legitimacy and proper interpretation of experimental manipulation in science. In his new book, Shapin ventures beyond the strict boundaries of the history of science. While he spends some time on the evolution of the scientific vocation, he’s also concerned with how scientists live and work now.

The Scientific Life considers a diffuse set of big questions. Who are scientists? In what kinds of institutions do they work and how do those institutions shape their work? What’s the relationship, if any, between the authority of science and the moral status of scientists? In particular, are scientists priests of nature, endowed with exceptional moral competence, or ordinary people who have acquired esoteric technical knowledge? And to what extent do personal virtues matter in the practice of science?

Shapin focuses almost entirely on twentieth-century “technoscience” in America—technoscience being the various scientific and high-tech enterprises that engage in research and so shape our future. He is interested not in gentlemen amateurs like Darwin, who typified Victorian science, but in professional (and often obscure) researchers. Shapin chooses to examine science during what he calls “late modernity”—roughly 1900 to now—partly because he believes that too many humanists and social scientists have lost touch with the realities of technoscience. Consequently, he claims, these thinkers subscribe to a number of mistaken, or at least dubious, views about science and scientists. To correct these misapprehensions, Shapin seeks to sketch the worlds of university professors, industrial researchers, and Internet entrepreneurs. As he emphasizes repeatedly, his goal is neither to celebrate nor to criticize modern science, merely to describe it. The result is a kind of natural history of the American scientist.

The Scientific Life confirms Shapin’s reputation for erudition. The book is packed with facts culled from wide reading that ranges from the pages of long-extinct trade journals like Industrial Laboratories, through congressional testimony, to the memoirs of now-forgotten scientists. Shapin also draws on formal interviews he conducted with twenty-five or so scientists, as well as on his informal observations of meetings among high-tech entrepreneurs and venture capitalists and other investors. While extended chapters on the management of research in chemical companies may sound less than enthralling, Shapin’s treatment is lively and full of surprises. In the end, his claims are contrarian. He hopes to convince you that many of your ideas about science and scientists could be wrong. (Scientists working in industry, for example, might be as creative as those working in universities.) And though his case isn’t entirely convincing, it’s remarkably rich in detail and revelation.

The Scientific Life tells two parallel tales, one about the evolution of academic and commercial science and the other about the role of personal virtue in the practice of science. While Shapin weaves these stories together throughout his book, it’s worth considering them separately.

1.

What it means to be a scientist at the beginning of the twenty-first century differs profoundly from what it meant at the beginning of the twentieth. A hundred years ago, an American scientist was almost certainly an academic. He toiled in a small university laboratory and, likely as not, performed experiments using his own hands. He acted as mentor for a few students, undergraduate or graduate, who worked odd hours and probably pursued their own research projects. Collaborations among laboratories were rare. Science was small.

In the early decades of the twentieth century, a new kind of science emerged. This new science, performed in industrial laboratories at large corporations like Bell Telephone, General Electric, or Eastman Kodak, did not, of course, displace academic science. It appeared alongside it. As a result, a young scientist faced a choice: the academy or industry.

The rise of industrial science involved something of a clash of stereotypes. The traditional picture of the scientist romanticized him as fiercely independent. It wasn’t easy to see how such a character could fit into the stereotypic business world of company men who worried over short-term profits, not eternal truths. Not surprisingly, many academics held that any scientist foolish enough to enter industry was doomed to misery. He’d spend his days struggling against an overregimented hierarchy, taking orders from managers, and hiding from accountants. Indeed the only imaginable motive for such a move was the vulgar one of money.

One of Shapin’s principal claims is that there is little historical support for this picture. Surprisingly, he shows that industrial research, at least at some companies, was not invariably more regimented than that in academia. He identifies two main reasons why the stereotype fails. First, to a greater extent than most appreciate, industry sometimes offered scientists intellectual freedom. Many companies, like Eastman Kodak, understood that the results of scientific investigation were by their nature uncertain and that profits, if any, would take many years to materialize. Scientists were thus granted considerable breathing room to work out their ideas and to follow them in unexpected directions. Some companies, including American Cyanamid, Dow Chemical, and Eastman Kodak, went so far as to provide senior scientists free time to pursue their own research interests, whatever they might be. Consequently, scientists in industry generally found their work satisfying. And while the money was good, it doesn’t appear to have been the main attraction. Instead, industry was frequently seen as the place to do cutting-edge research.

Unlike the academy, industry provided world-class facilities, well-trained support staff, and freedom from teaching. Many scientists also discovered that industrial research demanded a degree of flexibility and teamwork rarely required in a university. A chemist trained in one specialty might, the next year, find himself working in another, if the team’s efforts led in that direction. The results of industrial research were often impressive: the invention of the transistor and the discovery of the cosmic microwave background radiation, to name but two.1

Shapin spends a good deal of time characterizing the management styles of research directors who nurtured a culture of freedom in industry. C.E. Kenneth Mees, for example, who led research at Eastman Kodak in the early decades of the twentieth century, was a passionate defender of creative disorganization in applied research. (Shapin quotes several of Mees’s aphorisms, including “When I am asked how to plan, my answer is ‘Don’t.’”) Shapin concludes that effective directors led not so much by a rulebook as by a kind of charisma. They walked the halls, projected confidence, ensured that scientists talked with one another, and subtly inspired them.

The academy versus industry stereotype may also fail on the academic side. Throughout the twentieth century, academic science grew somewhat less free. One reason was money. Modern research is expensive and universities rarely, if ever, fund it fully. Scientists are thus obliged to seek outside support, usually from federal agencies. This situation is a recent one. Before World War II, the federal government sponsored little science. But on the heels of the Manhattan Project to build the atomic bomb, the government recognized that research was important and spun off a host of agencies to fund it, including the National Science Foundation, the Atomic Energy Commission (later incorporated into the Department of Energy), and the National Institutes of Health.

While these developments surely helped propel scientific progress—American science is, after all, the envy of the world—such an arrangement entailed some intellectual risks. President Eisenhower, who led Columbia University for five years, clearly perceived some of them. In his presidential farewell address of 1961, he warned:

The free university, historically the fountainhead of free ideas and scientific discovery, has experienced a revolution in the conduct of research. Partly because of the huge costs involved, a government contract becomes virtually a substitute for intellectual curiosity. For every old blackboard there are now hundreds of new electronic computers. The prospect of domination of the nation’s scholars by Federal employment, project allocations, and the power of money is ever present—and is gravely to be regarded.

While he was perhaps a bit alarmist, there was surely something to Eisenhower’s concerns. In practice, a scientist seeking research funds must satisfy a panel of peers that his newest idea is worth pursuing. And because the chance that a given proposal gets funded can be small, the grant system can encourage a kind of safe science. (Many believe that, in the game of grants, the way to win is not to lose, i.e., to make the fewest mistakes.) Such a system hardly seems likely to encourage the most daring science imaginable. The academy’s principled claim to intellectual freedom is thus compromised by the realities of external funding, especially as tenure for young scientists often depends implicitly on a record of successfully obtaining research funds.

The traditional tension between academic and industrial science has, in recent years, been complicated by the rise of a third species of science: that of the entrepreneur. Shapin devotes the last third of his book to this development. In some disciplines, a scientist with a new idea needn’t persuade either a federal agency or the management hierarchy of a large corporation to pursue it. Instead, he can turn to venture capitalists eager to launch potentially profitable start-up firms. Because these firms are usually small and, initially at least, focus on one or a few projects, entrepreneurial science offers both risks and rewards that are atypical of traditional industrial science. On the one hand, many small biotech or Internet firms have half-lives measured in months, but on the other, those that do survive can leave their founding scientists remarkably rich. This is the world in which J. Craig Venter sequenced the complete human genome (while competing against a federally funded project) and in which Kary Mullis invented PCR, a molecular technique that allows any DNA sequence to be copied billions of times.2 Shapin gives a great deal of space to entrepreneurial science not because conventional industrial research has disappeared—major corporations like Intel, IBM, and Merck still, of course, support enormous amounts of research—but because entrepreneurial science represents the latest major transformation of research in America.

One consequence of the rise of entrepreneurial science is that the line between academic and commercial research has been blurred. A scientist might work simultaneously in both the academy and business or slip adroitly between the two. This situation has raised a number of ethical questions that have been widely discussed. Are university scientists obliged to freely exchange all their ideas and data? Do private interests set the direction of academic research? The ascendance of entrepreneurial science has also raised a number of sociological questions which have been less widely discussed. To what extent are scientific entrepreneurs motivated by money versus, say, altruism? Couldn’t a scientist, after all, conclude that the most promising path to treatment of a disease involves launching a biotech firm? Shapin again suggests that we should not presume we know the answers to such questions. They should, he maintains, be the subject of objective inquiry, an inquiry that has only begun.

There can be little doubt that, at least in some areas of science, particularly biology and information technology, entrepreneurial science will grow in size and, possibly, significance. Indeed Shapin interviews a surprising number of young scientists who are turned off by the prospect of the academy and are eager to join the supposedly more freewheeling world of small biotech or Internet firms. Whatever one thinks of these developments, there can be no question that the practice of American science has been transformed radically.

2.

As the role of the scientist evolved, so too did the public’s perception of the scientist’s moral status. Early scientists, in the popular view, were not so much salaried members of a professional class as priests of nature. These priests, unlike the more familiar variety, studied the book of nature, not scripture, but both books were understood to have the same author. Not surprisingly, premodern scientists often suggested that the study of natural phenomena was ennobling. As Joseph Priestley, the eighteenth-century chemist and theologian who discovered oxygen, put it, the “contemplation of the works of God should give a sublimity to [the scientist’s] virtue, should expand his benevolence, extinguish every thing mean, base and selfish in [his] nature.” It followed easily that the scientist had a special moral, and perhaps even political, competence. Science was a calling and those who answered the call were different—and better.

This idea now seems quixotic. Indeed by World War II it was waning. A number of writers, as different as Robert K. Merton and H.L. Mencken, began to suggest that scientists were, morally, no better or worse than anyone else.3 This idea of moral equivalence between scientists and nonscientists ultimately became the new orthodoxy. As Shapin puts it, it appeared to many that

there were no just grounds in the nature of science—properly understood—or in the make-up of the scientist—properly understood—to expect expertise in the natural order to translate into virtue in the moral order.

One of Shapin’s more interesting tasks is to trace the reasons for the rise of this view of moral equivalence of scientists and other people (whether or not that view is correct). Perhaps the most obvious cause was World War II itself and, in particular, the scientists’ role in bringing the war to a close at Hiroshima and Nagasaki. Whatever one thought of the legitimacy of the use of atomic weapons, there could be no doubt that scientists appeared considerably less priestly afterward. (Ironically, the same event that ultimately led to the injection of vast quantities of cash into American science simultaneously helped rob scientists of their exalted moral status.)

More surprisingly, Shapin shows that the idea that scientists are like everyone else resulted partly from a deliberate propaganda campaign. During the cold war, American policymakers grew concerned about a shortage of scientists needed to combat communism. Unfortunately, several studies revealed that the public viewed the scientist as a creature apart. He was a paragon, an eccentric; he was contemplative and far too austere. Recognizing that this image jeopardized the recruitment of young people into science, a kind of marketing blitz began. As Shapin explains:

Leading spokespersons of government, industry, and the universities took it upon themselves to specify the ordinariness of the scientist and, therefore, the attractiveness of the scientific career to those who felt themselves to be neither geniuses nor morally special.

Time and Life magazines also chimed in, running pieces in the late 1950s and early 1960s that emphasized the unremarkable character of the scientist.

Shapin himself seems to have some doubts about the moral equivalence thesis (mightn’t scientists be morally special after all?). But this could reflect his relative neglect of one of the more obvious reasons for the rise of that thesis. During the twentieth century, it became painfully clear that scientists were capable of behavior that ranged from the dishonorable to the heinous. Many scientists were, for instance, complicit in promoting false theories or in performing unethical and sometimes barbarous science under Nazi and Soviet rule.4 While one might argue that Shapin isn’t concerned with research under dictatorships or that the scientists involved sometimes labored at the point of a gun, the picture in Western democracies wasn’t entirely rosy either. There, success with physical phenomena fed hubris among some scientists about their ability to rethink social and political arrangements. The result was a proliferation of utopian schemes from both the left and right, featuring everything from eugenics to fascism to scientifically planned economies.

The thinking public could hardly fail to notice that scientists backed some bad ideas. To pick only on evolutionary genetics, my own specialty, Sir Ronald Fisher participated in the Eugenics Society, which, in the early 1930s, fought for eugenic sterilization in the United Kingdom. And J.B.S. Haldane, who announced in 1938 that Lenin was the “greatest man of his time,” would, as late as 1962, consider Stalin “a very great man who did a very good job.”

More recently, we have heard seemingly endless tales of unscrupulous behavior by scientists working at major corporations. As I write, the newspapers report that pharmaceutical company–sponsored studies of new drugs often go unpublished if they reveal the drugs to be ineffective or to have serious side effects, leaving a highly biased record of research. However widespread such problems may be—and far worse clearly occurs—the phrase “priests of nature” doesn’t spring to mind when most of us think of Big Pharma.5

Taking all this into account, the public’s demotion of scientists’ moral status was unsurprising and perhaps even charitable.

3.

Shapin sees the morality of scientists as part of a larger issue: the extent to which the personal qualities of scientists matter in the practice of science. His concern here derives from the claims about modern society of Max Weber and his disciples. These men maintained that one mark of the modern was a decrease in the significance of the personal and familiar and an increase in the significance of the impersonal and bureaucratic. But when it comes to that most characteristic modern activity—science—Shapin isn’t so sure. Instead, he concludes that personal qualities like virtue, trust, reliability, and familiarity continue to matter in science, perhaps more than ever.

While the Weberian account faced some difficulties with industrial science, Shapin seems to think it’s most clearly contradicted by contemporary entrepreneurial science. As Shapin watched Internet and biotech entrepreneurs pitching their cases to venture capitalists, a pattern emerged. More than anything, venture capitalists weigh the personal qualities of the scientists who appear before them: their reliability, honesty, and creativity. As Shapin puts it, “judgment in these worlds of leading-edge technoscience and finance often implicates knowledge of the virtues of familiar people. People and their virtues matter.” Shapin connects this reliance on personal virtue to the “radical uncertainty” of scientific research. The outcome of research is unknowable a priori and thus all one can sensibly do is back the best person, not a particular project.

This all seems right to me, if somewhat unsurprising. Of course science isn’t a faceless bureaucracy. Any working scientist will, for example, believe new and surprising results far more readily when they derive from a colleague known to be reliable, or cautious, or immune to the seductions of sudden fame. This doesn’t mean that this colleague is necessarily admirable in his or her private life; he or she may even treat their spouse shamefully. It means only that scientists weigh relevant (and known) personal qualities when they weigh scientific claims.6

Though generally sound, Shapin’s discussion is in some ways unsatisfying. For one thing, he draws his main evidence for the role of the personal from the interaction of venture capitalists with entrepreneurs, an interaction that has more to do with investing than with science. I doubt many have argued that investors should disregard the personal attributes of those to whom they hand large sums of money. Also, Shapin’s discussion of industrial science mostly breaks off around the middle of the twentieth century. Is there any reason to believe that personal qualities still matter much in such large bureaucracies, the locus of much scientific research in America? Is research at Pfizer really shaped by the personal virtues of its scientists?

More important, Shapin sometimes blurs his discussion of the personal versus bureaucratic with his discussion of moral equivalence versus moral superiority. He writes, for example, that it’s now commonly believed that “scientists are morally no different from anyone else, and, more generally, that the ‘personal equation’ has been eliminated from the scenes in which powerful technoscientific knowledge is produced.” But these are, at least in principle, separate matters. It can both be true that the personal qualities of scientists, including their moral attributes, matter in many ways and situations and also that scientists, on average, are no better or worse than anyone else. Indeed, in my experience, both are true. Shapin doesn’t deny this but he doesn’t emphasize it either.

Finally, one other problem runs through The Scientific Life. While Shapin correctly sees that science has grown far more heterogeneous over the last century (it’s now thoroughly academic, industrial, and entrepreneurial), he at times seems so taken with this heterogeneity that he loses sight of any coherent entity that can be sensibly called science or even technoscience. Many of the firms initially funded by venture capital, for instance, are engaged in science in only the most superficial way. (Shapin at one point mentions Facebook and eBay.7) Unfortunately, this problem may lead Shapin to mislocate the center of gravity of science. Matters here depend partly on how one assesses the significance of scientific research. Does it depend on the number of jobs in the academy as opposed to the number in industry, or on the amount of dollars invested, or on the importance of the scientific findings themselves?

Many more thousands of scientists may work in corporate than in academic settings; and yet, in many disciplines, most fundamental insights might still derive from the academy. Internet firms may employ thousands of researchers and make billions of dollars, but the key idea of a universal computing machine—the idea that made all such enterprises possible—originated with Alan Turing, working at King’s College, Cambridge. Similarly, a thousand biotech firms may produce dozens of useful drugs but one of the key breakthroughs that allowed at least some of these ventures, the unraveling of the structure of DNA, came from James Watson and Francis Crick, working at Cambridge. (And many innovations marketed by drug companies originally were the products of university laboratories.) While there can be no doubt that the figure of the independent academic scientist has been overly romanticized, when it comes to truly transformational science, it’s at least possible that the lone wolf mythology isn’t entirely mythological.

But it would be unfair to end on this note. Shapin’s book is a major contribution to a fascinating topic. And like many major contributions, its significance lies partly in the new questions it asks—when and why, for example, did people start thinking that scientists are like everyone else?—and in the new places it looks for answers—in the laboratories of chemical companies. Shapin may not be doing a conventional history of the “scientific life,” but what he has done is both novel and provocative.

-

1

The transistor was invented at AT&T’s Bell Labs in the late 1940s by William Shockley, John Bardeen, and Walter Brattain. The cosmic microwave background radiation was discovered in 1965 by Arno Penzias and Robert Wilson of Bell Telephone Laboratories. The discovery of the background radiation, believed to be a remnant of the Big Bang, is recounted in Steven Weinberg’s The First Three Minutes: A Modern View of the Origin of the Universe (Basic Books, 1977). ↩

-

2

PCR, short for “polymerase chain reaction,” is a technique that makes billions of copies of any short DNA sequence, no matter how small the original sample of hereditary material. The method has altered radically the practice of molecular biology and is used nearly every day in virtually every genetics and molecular biological laboratory. (PCR is also what allows the police to determine a suspect’s DNA sequence, in certain genomic regions, from a single hair, or from semen, etc.) Kary Mullis invented the technique in 1983 while working for the Cetus Corporation, an early biotech firm. ↩

-

3

Shapin doesn’t mention it, but Mencken spoke from experience. He was a friend of Raymond Pearl, a biologist at Johns Hopkins University and an important early student of the biology of aging. Mencken and Pearl were members of the Saturday Night Club, an informal group that enjoyed a reputation for drunken excess. ↩

-

4

If we consider only the Nazis, the monstrous behavior of many German geneticists, anthropologists, and physicians is documented in essays collected in Racial Hygiene: Medicine Under the Nazis, edited by Robert Proctor (Harvard University Press, 1988), and Deadly Medicine: Creating the Master Race, edited by Susan D. Bachrach and Dieter Kuntz (United States Holocaust Memorial Museum/University of North Carolina Press, 2004). Daniel J. Kevles’s In the Name of Eugenics (Knopf, 1985) provides the classic history of the rise of eugenic thought generally, including its reception and development in Nazi Germany. ↩

-

5

Until several months ago, the federal government required registration of commercial drug trials only at the beginning of a trial; their outcomes needn’t be reported and many were not. This almost certainly led to a skewed publication record in which positive drug effects were reported but unwelcome side effects were not (see Robert Lee Hotz, “What You Don’t Know About a Drug Can Hurt You,” The Wall Street Journal, December 12, 2008). For more on the often-alarming behavior of pharmaceutical companies and the scientists they employ, see Marcia Angell, “The Truth About the Drug Companies,” The New York Review, July 15, 2004; Arnold S. Relman and Marcia Angell, “America’s Other Drug Problem,” The New Republic, December 16, 2002; and Marcia Angell’s recent review, “Drug Companies and Doctors: A Story of Corruption,” The New York Review, January 15, 2009. ↩

-

6

As Shapin notes, this role of the personal in research in no way undermines the objectivity of science. That certain scientists hit a target more reliably than others do cannot be taken to justify postmodernist doubts about the existence of targets. ↩

-

7

Shapin is aware of, and wrestles with, this problem. (This is also, of course, why he prefers the more inclusive term “technoscience.”) And I certainly agree that what we mean by science or technoscience shouldn’t be too narrowly or dogmatically construed. But Shapin’s story is about research. And while the mid-twentieth-century industrial firms he discusses were clearly in the business of research, some of the venture capital–funded firms he mentions do not appear to be. ↩