The digital universe came into existence, physically speaking, late in 1950, in Princeton, New Jersey, at the end of Olden Lane. That was when and where the first genuine computer—a high-speed, stored-program, all-purpose digital-reckoning device—stirred into action. It had been wired together, largely out of military surplus components, in a one-story cement-block building that the Institute for Advanced Study had constructed for the purpose. The new machine was dubbed MANIAC, an acronym of “mathematical and numerical integrator and computer.”

And what was MANIAC used for, once it was up and running? Its first job was to do the calculations necessary to engineer the prototype of the hydrogen bomb. Those calculations were successful. On the morning of November 1, 1952, the bomb they made possible, nicknamed “Ivy Mike,” was secretly detonated over a South Pacific island called Elugelab. The blast vaporized the entire island, along with 80 million tons of coral. One of the air force planes sent in to sample the mushroom cloud—reported to be “like the inside of a red-hot furnace”—spun out of control and crashed into the sea; the pilot’s body was never found. A marine biologist on the scene recalled that a week after the H-bomb test he was still finding terns with their feathers blackened and scorched, and fish whose “skin was missing from a side as if they had been dropped in a hot pan.”

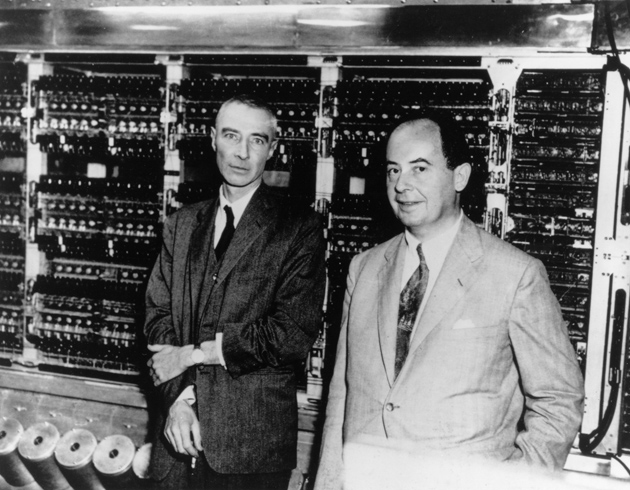

The computer, one might well conclude, was conceived in sin. Its birth helped ratchet up, by several orders of magnitude, the destructive force available to the superpowers during the cold war. And the man most responsible for the creation of that first computer, John von Neumann, was himself among the most ardent of the cold warriors, an advocate of a preemptive military attack on the Soviet Union, and one of the models for the film character Dr. Strangelove. As George Dyson writes in his superb new history, Turing’s Cathedral, “The digital universe and the hydrogen bomb were brought into existence at the same time.” Von Neumann had seemingly made a deal with the devil: “The scientists would get the computers, and the military would get the bombs.”

The story of the first computer project and how it begat today’s digital universe has been told before, but no one has told it with such precision and narrative sweep as Dyson. The author, a noted historian of science, came to the task with two great advantages. First, as a visiting scholar at the Institute for Advanced Study in 2002–2003, he got access to records of the computer project that in some cases had not seen the light of day since the late 1940s. This makes for a wealth of quasi-novelistic detail in his book, ranging from the institute’s cafeteria menu one evening in 1946 (“Creamed Halibut with Eggs on Potatoes,” 25 cents) to the consequences of Princeton’s sultry summer weather for the nascent computing device (“IBM machine putting a tar-like substance on cards,” one log entry reads; the next entry explains, “Tar is tar from roof”). The author’s second advantage is that his parents, the physicist Freeman Dyson and the logician Verena Huber-Dyson, both arrived at the institute in 1948, five years before he was born. He thus grew up around the computer project, and many of the surviving eyewitnesses were glad to talk to him—one of whom recalled writing “STOP THE BOMB” in the dust on von Neumann’s car.

It was not just the military impetus behind the project that evoked opposition at the institute. Many felt that such a number-crunching behemoth, whatever its purpose, had no place in what was intended to be a sort of platonic heaven for pure scholarship. The Institute for Advanced Study was founded in 1930 by the brothers Abraham and Simon Flexner, philanthropists and educational reformers. The money came from Louis Bamberger and his sister Caroline Bamberger Fuld, who sold their interest in the Bamberger’s department store chain to Macy’s in 1929, just weeks before the stock market crash. Of the $11 million of the proceeds they took in cash, the Bambergers committed $5 million (equivalent to $60 million today) to establishing what Abraham Flexner envisaged as “a paradise for scholars who, like poets and musicians, have won the right to do as they please.” The setting was to be Olden Farm in Princeton, the site of a skirmish during the Revolutionary War.

Although some thought was given to making the new institute a center for economics, the founders decided to start with mathematics, because of both its universal relevance and its minimal material requirements: “a few rooms, books, blackboard chalk, paper, and pencils,” as one of the founders put it. The first appointee was Oswald Veblen (Thorstein Veblen’s nephew) in 1932, followed in 1933 by Albert Einstein—who, on his arrival, found Princeton to be “a quaint and ceremonious little village of puny demigods on stilts” (or so at least he told the Queen of Belgium). That same year, the institute hired John von Neumann, a Hungarian-born mathematician who had just turned twenty-nine.

Among twentieth-century geniuses, von Neumann ranks very close to Einstein. Yet the styles of the two men were quite different. Whereas Einstein’s greatness lay in his ability to come up with a novel insight and elaborate it into a beautiful (and true) theory, von Neumann was more of a synthesizer. He would seize on the fuzzy notions of others and, by dint of his prodigious mental powers, leap five blocks ahead of the pack. “You would tell him something garbled, and he’d say, ‘Oh, you mean the following,’ and it would come back beautifully stated,” said his onetime protégé, the Harvard mathematician Raoul Bott.

Von Neumann may have missed Budapest’s café culture in provincial Princeton, but he felt very much at home in his adopted country. Having been brought up a Hungarian Jew in the late Hapsburg Empire, he had experienced the short-lived Communist regime of Béla Kun after World War I, which made him, in his words, “violently anti-Communist.” After returning to Europe in the late 1930s to court his second wife, Klári, he finally quit the continent with (Dyson writes) “an unforgiving hatred for the Nazis, a growing distrust of the Russians, and a determination never again to let the free world fall into a position of military weakness that would force the compromises that had been made with Hitler.” His passion for America’s open frontiers extended to a taste for large, fast cars; he bought a new Cadillac every year (“whether he had wrecked the previous one or not”) and loved speeding across the country on Route 66. He dressed like a banker, gave lavish cocktail parties, and slept only three or four hours a night. Along with his prodigious intellect went (according to Klári) an “almost primitive lack of ability to handle his emotions.”

It was toward the end of World War II that von Neumann conceived the ambition of building a computer. He spent the latter part of the war working on the atomic bomb project at Los Alamos, where he had been recruited because of his expertise at the (horribly complicated) mathematics of shock waves. His calculations led to the development of the “implosion lens” responsible for the atomic bomb’s explosive chain reaction. In doing them, he availed himself of some mechanical tabulating machines that had been requisitioned from IBM. As he familiarized himself with the nitty-gritty of punch cards and plug-board wiring, the once pure mathematician became engrossed by the potential power of such machines. “There already existed fast, automatic special purpose machines, but they could only play one tune…like a music box,” said his wife Klári, who had come to Los Alamos to help with the calculations; “in contrast, the ‘all purpose machine’ is like a musical instrument.”

As it happened, a project to build such an “all purpose machine” had already been secretly launched during the war. It was instigated by the army, which desperately needed a rapid means of calculating artillery firing tables. (Such tables tell gunners how to aim their weapons so that the shells land in the desired place.) The result was a machine called ENIAC (for “electronic numerical integrator and computer”), built at the University of Pennsylvania. The coinventors of ENIAC, John Presper Eckert and John Mauchly, cobbled together a monstrous contraption that, despite the unreliability of its tens of thousands of vacuum tubes, succeeded at least fitfully in doing the computations asked of it. ENIAC was an engineering marvel. But its control logic—as von Neumann soon saw when he was given clearance to examine it—was hopelessly unwieldy. “Programming” the machine involved technicians spending tedious days reconnecting cables and resetting switches by hand. It thus fell short of the modern computer, which stores its instructions in the form of coded numbers, or “software.”

Von Neumann aspired to create a truly universal machine, one that (as Dyson aptly puts it) “broke the distinction between numbers that mean things and numbers that do things.” A report sketching the architecture for such a machine—still known as the “von Neumann architecture”—was drawn up and circulated toward the end of the war. Although the report contained design ideas from the ENIAC inventors, von Neumann was listed as the sole author, which occasioned some grumbling among the uncredited. And the report had another curious omission. It failed to mention the man who, as von Neumann well knew, had originally worked out the possibility of a universal computer: Alan Turing.

An Englishman nearly a decade younger than von Neumann, Alan Turing came to Princeton in 1936 to earn a Ph.D. in mathematics (crossing the Atlantic in steerage and getting swindled by a New York taxi driver en route). Earlier that year, at the age of twenty-three, he had resolved a deep problem in logic called the “decision problem.” The problem traces its origins to the seventeenth-century philosopher Leibniz, who dreamed of “a universal symbolistic in which all truths of reason would be reduced to a kind of calculus.” Could reasoning be reduced to computation, as Leibniz imagined? More specifically, is there some automatic procedure that will decide whether a given conclusion logically follows from a given set of premises? That was the decision problem. And Turing answered it in the negative: he gave a mathematical demonstration that no such automatic procedure could exist. In doing so, he came up with an idealized machine that defined the limits of computability: what is now known as a “Turing machine.”

The genius of Turing’s imaginary machine, as Dyson makes clear, lay in its stunning simplicity. (“Let us praise the uncluttered mind,” exulted one of Turing’s colleagues.) It consisted of a scanner that moved back and forth over an infinite tape reading and writing 0s and 1s according to a certain set of instructions—0s and 1s being capable of expressing all letters and numerals. A Turing machine designed for some special purpose—like adding two numbers together—could itself be described by a single number that coded its action. The code number of one special-purpose Turing machine could even be fed as an input onto the tape of another Turing machine.

The boldest of Turing’s ideas was that of a universal machine: one that, if fed the code number of any special-purpose Turing machine, would perfectly mimic its behavior. For instance, if a universal Turing machine were fed the code number of the Turing machine that performed addition, the universal machine would temporarily turn into an adding machine. In effect, the “hardware” of a special-purpose machine could be translated into “software” (the machine’s code number) and then entered like data into the universal machine, where it would run as a program. That is exactly what happens when your laptop, which is a physical embodiment of Turing’s universal machine, runs a word-processing program, or when your smartphone runs an app.
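The idea can be made concrete with a short sketch in Python. Everything named here is an illustrative assumption rather than anything drawn from Turing’s paper or Dyson’s book: the `ADDER` table, the state names, and the `run` and `add_unary` functions are hypothetical. The special-purpose adding machine is written down as a table of plain data (standing in for the single “code number” described above), and one general-purpose routine then mimics any machine whose table it is handed.

```python
from collections import defaultdict

# Hypothetical "adding machine", written as data rather than hardware.
# Each entry maps (state, symbol read) -> (symbol to write, head move, next state).
# Numbers are in unary: 3 + 2 is the tape 1 1 1 0 1 1, with 0 also serving as blank.
ADDER = {
    ("scan", 1): (1, +1, "scan"),      # skip over the first number
    ("scan", 0): (1, +1, "to_end"),    # fill the gap between the two numbers
    ("to_end", 1): (1, +1, "to_end"),  # skip over the second number
    ("to_end", 0): (0, -1, "erase"),   # passed the end, step back one cell
    ("erase", 1): (0, -1, "halt"),     # remove the one surplus 1
}


def run(table, tape_cells, state="scan", max_steps=10_000):
    """Mimic whatever machine `table` describes; return the final tape."""
    tape = defaultdict(int, enumerate(tape_cells))  # unwritten cells read as 0
    head = 0
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("machine did not halt within max_steps")
        write, move, state = table[(state, tape[head])]
        tape[head] = write
        head += move
        steps += 1
    return [tape[i] for i in range(min(tape), max(tape) + 1)]


def add_unary(a, b):
    """Add two non-negative integers by running the ADDER table."""
    final_tape = run(ADDER, [1] * a + [0] + [1] * b)
    return sum(final_tape)  # the number of 1s left on the tape


print(add_unary(3, 2))
```

Nothing in `run` knows about addition; handing it a different table would make the same routine behave as a different machine, which is the sense in which a special-purpose machine’s “hardware” becomes “software.” The step limit is a practical safeguard added for this sketch, since a table need not describe a machine that ever halts.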

As a byproduct of solving a problem in pure logic, Turing had originated the idea of the stored-program computer. When he subsequently arrived at Princeton as a graduate student, von Neumann made his acquaintance. “He knew all about Turing’s work,” said a codirector of the computer project. “The whole relation of the serial computer, tape and all that sort of thing, I think was very clear—that was Turing.” Von Neumann and Turing were virtual opposites in character and appearance: the older man a portly, well-attired, and clubbable sybarite who relished wielding power and influence; the younger one a shy, slovenly, dreamy ascetic (and homosexual), fond of intellectual puzzles, mechanical tinkering, and long-distance running. Yet the two shared a knack for getting to the logical essence of things. After Turing completed his Ph.D. in 1938, von Neumann offered him a salaried job as his assistant at the institute; but with war seemingly imminent, Turing decided to return to England instead.

“The history of digital computing,” Dyson writes, “can be divided into an Old Testament whose prophets, led by Leibniz, supplied the logic, and a New Testament whose prophets, led by von Neumann, built the machines. Alan Turing arrived in between.” It was from Turing that von Neumann drew the insight that a computer is essentially a logic machine—an insight that enabled him to see how to overcome the limitations of ENIAC and realize the ideal of a universal computer. With the war over, von Neumann was free to build such a machine. And the leadership of the Institute for Advanced Study, fearful of losing von Neumann to Harvard or IBM, obliged him with the authorization and preliminary funding.

There was widespread horror among the institute’s fellows at the prospect of such a machine taking shape in their midst. The pure mathematicians tended to frown on tools other than blackboard and chalk, and the humanists saw the project as mathematical imperialism at their expense. “Mathematicians in our wing? Over my dead body! and yours?” a paleographer cabled the institute’s director. (It didn’t help that fellows already had to share their crowded space with remnants of the old League of Nations that had been given refuge at the institute during the war.) The subsequent influx of engineers raised hackles among both the mathematicians and the humanists. “We were doing things with our hands and building dirty old equipment. That wasn’t the Institute,” one engineer on the computer project recalled.

Dyson’s account of the long struggle to bring MANIAC to life is full of fascinating and sometimes comical detail, involving stolen tea-service sugar, imploding tubes, and a computer cooling system that was farcically prone to ice over in the Princeton humidity. The author is unfailingly lucid in explaining just how the computer’s most basic function—translating bits of information from structure (memory) to sequence (code)—was given electronic embodiment. Von Neumann himself had little interest in the minutiae of the computer’s physical implementation; “he would have made a lousy engineer,” said one of his collaborators. But he recruited a resourceful team, led by chief engineer Julian Bigelow, and he proved a shrewd manager. “Von Neumann had one piece of advice for us,” Bigelow recalled: “not to originate anything.” By limiting the engineers to what was strictly needed to realize his logical architecture, von Neumann saw to it that MANIAC would be available in time to do the calculations critical for the hydrogen bomb.

The possibility of such a “Super bomb”—one that would, in effect, bring a small sun into existence without the gravity that keeps the sun from flying apart—had been foreseen as early as 1942. If a hydrogen bomb could be made to work, it would be a thousand times as powerful as the bombs that destroyed Hiroshima and Nagasaki. Robert Oppenheimer, who had led the Los Alamos project that produced those bombs, initially opposed the development of a hydrogen bomb on the grounds that its “psychological effect” would be “adverse to our interest.” Other physicists, like Enrico Fermi and Isidor Rabi, were more categorical in their opposition, calling the bomb “necessarily an evil thing considered in any light.” But von Neumann, who feared that another world war was imminent, was enamored of the hydrogen bomb. “I think that there should never have been any hesitation,” he wrote in 1950, after President Truman decided to proceed with its development.

Perhaps the fiercest advocate of the hydrogen bomb was the Hungarian-born physicist Edward Teller, who, backed by von Neumann and the military, came up with an initial design. But Teller’s calculations were faulty; his prototype would have been a dud. This was first noticed by Stanislaw Ulam, a brilliant Polish-born mathematician (elder brother to the Sovietologist Adam Ulam) and one of the most appealing characters in Dyson’s book. Having shown that the Teller scheme was a nonstarter, Ulam produced, in his typically absent-minded fashion, a workable alternative. “I found him at home at noon staring intensely out of a window with a very strange expression on his face,” Ulam’s wife recalled. “I can never forget his faraway look as peering unseeing in the garden, he said in a thin voice—I can still hear it—‘I found a way to make it work.’”

Now Oppenheimer—who had been named director of the Institute for Advanced Study after leaving Los Alamos—was won over. What became known as the Teller-Ulam design for the H-bomb was, Oppenheimer said, “technically so sweet” that “one had to at least make the thing.” And so, despite strong opposition on humanitarian grounds among many at the institute (who suspected what was going on from the armed guards stationed by a safe near Oppenheimer’s office), the newly operational computer was pressed into service. The thermonuclear calculations kept it busy for sixty straight days, around the clock, in the summer of 1951. MANIAC did its job perfectly. Late the next year, “Ivy Mike” exploded in the South Pacific, and Elugelab island was removed from the map.

Shortly afterward, von Neumann had a rendezvous with Ulam on a bench in Central Park, where he probably informed Ulam firsthand of the secret detonation. But then (judging from subsequent letters) their chat turned from the destruction of life to its creation, in the form of digitally engineered self-reproducing organisms. Five months later, the discovery of the structure of DNA was announced by Francis Crick and James Watson, and the digital basis of heredity became apparent. Soon MANIAC was being given over to problems in mathematical biology and the evolution of stars. Having delivered its thermonuclear calculations, it became an instrument for the acquisition of pure scientific knowledge, in keeping with the purpose of the institute where it was created.

But in 1954 President Eisenhower named von Neumann to the Atomic Energy Commission, and with his departure the institute’s computer culture went into decline. Two years later, the fifty-two-year-old von Neumann lay dying of bone cancer in Walter Reed Army Hospital, disconcerting his family by converting to Catholicism near the end. (His daughter believed that von Neumann, an inventor of game theory, must have had Pascal’s wager in mind.) “When von Neumann tragically died, the snobs took their revenge and got rid of the computing project root and branch,” the author’s father, Freeman Dyson, later commented, adding that “the demise of our computer group was a disaster not only for Princeton but for science as a whole.” At exactly midnight on July 15, 1958, MANIAC was shut down for the last time. Its corpse now reposes in the Smithsonian Institution in Washington.

Was the computer conceived in sin? The deal von Neumann made with the devil proved less diabolical than expected, the author observes: “It was the computers that exploded, not the bombs.” Dyson’s telling of the subsequent evolution of the digital universe is brisk and occasionally hair-raising, as when he visits Google headquarters in California and is told by an engineer there that the point of Google’s book-scanning project is to allow smart machines to read the books, not people.

What is most interesting, though, is how von Neumann’s vision of the digital future has been superseded by Turing’s. Instead of a few large machines handling the world’s demand for high-speed computing, as von Neumann envisaged, a seeming infinity of much smaller devices, including the billions of microprocessors in cell phones, have coalesced into what Dyson calls “a collective, metazoan organism whose physical manifestation changes from one instant to the next.” And the progenitor of this virtual computing organism is Turing’s universal machine.

So it is fitting that Dyson titled his book Turing’s Cathedral. The true dawn of the digital universe came not in the 1950s, when von Neumann’s machine started running thermonuclear calculations. Rather, it was in 1936, when the young Turing, lying down in a meadow during one of his habitual long-distance runs, conceived of his abstract machine as a means of solving a problem in pure logic. Like von Neumann, Turing was to play an important behind-the-scenes role in World War II. Working as a code-breaker for his nation at Bletchley Park, he deployed his computational ideas to crack the Nazi “Enigma” code, an achievement that helped save Britain from defeat in 1941 and reversed the tide of the war.

But Turing’s wartime heroism remained a state secret well beyond his suicide in 1954, two years after he had been convicted of “gross indecency” for a consensual homosexual affair and sentenced to chemical castration. In 2009, British Prime Minister Gordon Brown issued a formal apology, on behalf of “all those who live freely thanks to Alan’s work,” for the “inhumane” treatment Turing received. “We’re sorry, you deserved so much better,” he said. Turing’s imaginary machine did more against tyranny than von Neumann’s real one ever did.