1.

The most important problem in the biological sciences is one that until quite recently many scientists did not regard as a suitable subject for scientific investigation at all. It is this: How exactly do neurobiological processes in the brain cause consciousness? The enormous variety of stimuli that affect us—for example, when we taste wine, look at the sky, smell a rose, listen to a concert—trigger sequences of neuro-biological processes that eventually cause unified, well-ordered, coherent, inner, subjective states of awareness or sentience. Now what exactly happens between the assault of the stimuli on our receptors and the experience of consciousness, and how exactly do the intermediate processes cause the conscious states?

The problem, moreover, is not just about the perceptual cases I have mentioned, but includes the experiences of voluntary actions, as well as such inner processes as worrying about income taxes or trying to remember your mother-in-law’s phone number. It is an amazing fact that everything in our conscious life, from feeling pains, tickles, and itches to—pick your favorite—feeling the angst of postindustrial man under late capitalism or experiencing the ecstasy of skiing in deep powder—is caused by brain processes. As far as we know the relevant processes take place at the micro levels of synapses, neurons, neuron columns, and cell assemblies. All of our conscious life is caused by these lower-level processes, but we have only the foggiest idea of how it all works.

Well, you might ask, why don’t the relevant specialists get on with it and figure out how it works? Why should it be any harder than finding out the causes of cancer? But there are a number of special features that make the problems raised by brain sciences even harder to solve. Some of the difficulties are practical: by current estimate, the human brain has over 100 billion neurons, and each neuron has synaptic connections with other neurons ranging in number from a few hundred to many tens of thousands. All of this enormously complex structure is massed together in a space smaller than a soccer ball. Furthermore, it is hard to work on the micro elements in the brain without damaging them or killing the organism. In addition to the practical difficulties there are several philosophical and theoretical obstacles and confusions that make it hard to pose and answer the right questions. For example, the common-sense way in which I have just posed the question “How do brain processes cause consciousness?” is already philosophically loaded. Many philosophers and even some scientists think that the relation cannot be causal, because a causal relation between brain and consciousness seems to them to imply some version of dualism of brain and consciousness, which they want to reject on other grounds.

From the time of the ancient Greeks up to the latest computational models of cognition, the entire subject of consciousness, and of its relation to the brain, has been something of a mess, and at least some of the mistakes in the history of the subject are repeated in the books under review here. Before discussing the latest work, I want to set the stage by clarifying some of the issues and correcting some of what seem to me the worst historical mistakes.

One issue can be dealt with swiftly. There is a problem that is supposed to be difficult but does not seem very serious to me, and that is the problem of defining “consciousness.” It is supposed to be frightfully difficult to define the term. But if we distinguish between analytic definitions, which aim to analyze the underlying essence of a phenomenon, and common-sense definitions, which just identify what we are talking about, it does not seem to me at all difficult to give a common-sense definition of the term: “Consciousness” refers to those states of sentience and awareness that typically begin when we awake from a dreamless sleep and continue until we go to sleep again, or fall into a coma or die or otherwise become “unconscious.” Dreams are a form of consciousness, though of course quite different from full waking states. Consciousness so defined switches off and on. By this definition a system is either conscious or it isn’t, but within the field of consciousness there is a range of states of intensity ranging from drowsiness to full awareness. Consciousness so defined is an inner, first-person, qualitative phenomenon. Human beings and higher animals are obviously conscious, but we do not know how far down the phylogenetic scale consciousness extends. Are fleas conscious, for example? At the present state of neurobiological knowledge, it is probably not useful to worry about such questions. We do not know enough biology to know where the cutoff point is.

The first serious problem derives from intellectual history. In the seventeenth century Descartes and Galileo made a sharp distinction between the physical reality described by science and the mental reality of the soul, which they considered to be outside the scope of scientific research. This dualism of conscious mind and unconscious matter was useful in the scientific research of the time, both because it helped to get the religious authorities off scientists’ backs and because the physical world was mathematically treatable in a way that the mind did not seem to be. But this dualism has become an obstacle in the twentieth century, because it seems to place consciousness and other mental phenomena outside the ordinary physical world and thus outside the realm of natural science. In my view we have to abandon dualism and start with the assumption that consciousness is an ordinary biological phenomenon comparable with growth, digestion, or the secretion of bile. But many people working in the sciences remain dualists and do not believe we can give a causal account of consciousness that shows it to be part of ordinary biological reality. Perhaps the most famous of these is the Nobel laureate neurobiologist Sir John Eccles, who believes that God attaches the soul to the unborn fetus at the age of about three weeks.

Of the authors reviewed here, Roger Penrose is a dualist in the sense that he thinks we do not live in one unified world but rather that there is a separate mental world that is “grounded” in the physical world. Actually, he thinks we live in three worlds, a physical world, a mental world, and a world of abstract objects such as numbers and other mathematical entities. I will say more about this later.

But even if we treat consciousness as a biological phenomenon and thus as part of the ordinary physical world, as I urge we should, there are still many other mistakes to avoid. One I just mentioned: if brain processes cause consciousness, then it seems to many people that there must be two different things, brain processes as causes, and conscious states as effects, and this seems to imply dualism. This second mistake derives in part from a flawed conception of causation. In our official theories of causation we typically suppose that all causal relations must be between discrete events ordered sequentially in time. For example, the shooting caused the death of the victim.

Certainly, many cause and effect relations are like that, but by no means all. Look around you at the objects in your vicinity and think of the causal explanation of the fact that the table exerts pressure on the rug. This is explained by the force of gravity, but gravity is not an event. Or think of the solidity of the table. It is explained causally by the behavior of the molecules of which the table is composed. But the solidity of the table is not an extra event, it is just a feature of the table. Such examples of non-event causation give us appropriate models for understanding the relation between my present state of consciousness and the underlying neurobiological processes that cause it. Lower-level processes in the brain cause my present state of consciousness, but that state is not a separate entity from my brain; rather it is just a feature of my brain at the present time. By the way, this analysis (that brain processes cause consciousness but that consciousness is itself a feature of the brain) provides us with a solution to the traditional mind-body problem, a solution which avoids both dualism and materialism, at least as these are traditionally conceived.

A third difficulty in our present intellectual situation is that we don’t have anything like a clear idea of how brain processes, which are publicly observable, objective phenomena, could cause anything as peculiar as inner qualitative states of awareness or sentience, states which are in some sense “private” to the possessor of the state. My pain has a certain qualitative feel and is accessible to me in a way that it is not accessible to you. Now how could these private, subjective, qualitative phenomena be caused by ordinary physical processes such as electrochemical neuron firings at the synapses of neurons? There is a special qualitative feel to each type of conscious state, and we are not in agreement about how to fit these subjective feelings into our overall view of the world as consisting of objective reality. Such states and events are sometimes called qualia and the problem of accounting for them within our overall world view is called the problem of qualia. Among the interesting differences in the accounts of consciousness given by the writers under review are their various divergent ways of coming to terms—or sometimes failing to come to terms—with the problem of qualia.

A fourth difficulty is peculiar to our intellectual climate right now, and that is the urge to take the computer metaphor of the mind too literally. Many people still think that the brain is a digital computer and that the conscious mind is a computer program, though mercifully this view is much less widespread than it was a decade ago. On this view, the mind is to the brain as the software is to the hardware. There are different versions of the computational theory of the mind. The strongest is the one I have just stated: the mind is just a computer program. There is nothing else there. This view I call Strong Artificial Intelligence (Strong AI, for short) to distinguish it from the view that the computer is a useful tool in doing simulations of the mind, as it is useful in doing simulations of just about anything we can describe precisely, such as weather patterns or the flow of money in the economy. This more cautious view I call Weak AI.

Strong AI can be refuted swiftly, and indeed I did so in these pages and elsewhere over a decade ago.1 A computer is by definition a device that manipulates formal symbols. These are usually described as 0’s and 1’s, though any old symbol will do just as well. Computation, so defined, is a purely syntactical set of operations. But we know from our own experience that the mind has something more going on in it than the manipulation of formal symbols; minds have contents. For example when we are thinking in English, the English words going through our minds are not just uninterpreted formal symbols; rather, we know what they mean. For us the words have a meaning, or semantics. The mind could not be just a computer program because the formal symbols of the computer program by themselves are not sufficient to guarantee the presence of the semantic content that occurs in actual minds.

I have illustrated this point with a simple thought experiment. Imagine that you carry out the steps in a program for answering questions in a language you do not understand. I do not understand Chinese, so I imagine that I am locked in a room with a lot of boxes of Chinese symbols (the data base), I get small bunches of Chinese symbols passed to me (questions in Chinese), and I look up in a rule book (the program) what I am supposed to do. I perform certain operations on the symbols in accordance with the rules (that is, I carry out the steps in the program) and give back small bunches of symbols to those outside the room (answers to the questions). I am the computer implementing a program for answering questions in Chinese, but all the same I do not understand a word of Chinese. And this is the point: If I do not understand Chinese solely on the basis of implementing a computer program for understanding Chinese, then neither does any other digital computer solely on that basis, because no digital computer has anything I do not have.
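As an illustrative aside, the setup of the thought experiment can be sketched as a tiny program. This is only a toy under stated assumptions: the name `RULE_BOOK`, the fallback reply, and the placeholder tokens are all invented for the illustration and stand in for the rule book and the bunches of symbols. The point it makes visible is that the lookup matches shapes only; nothing in the code represents what any symbol means.

```python
# Hypothetical rule book: maps incoming bunches of symbols to outgoing ones.
# The tokens are arbitrary placeholders, not real Chinese.
RULE_BOOK = {
    ("squiggle", "squoggle"): ("blip", "blop"),
    ("squoggle", "squiggle"): ("blop",),
}

# Assumed default reply for any bunch of symbols the rule book does not list.
FALLBACK = ("blip",)


def answer(question):
    """Return the reply the rule book dictates for a sequence of symbols.

    The lookup compares the symbols' identities only; no meaning is
    stored or consulted anywhere in this function.
    """
    return RULE_BOOK.get(tuple(question), FALLBACK)


if __name__ == "__main__":
    print(answer(["squiggle", "squoggle"]))
```

Whoever or whatever executes `answer` gives the "right" replies while having access to nothing but the formal symbols, which is the situation of the person in the room.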

This is such a simple and decisive argument that I am embarrassed to have to repeat it, but in the intervening years since I first published it there must have been over a hundred published attacks on it, including some in the book by Daniel Dennett reviewed here. The Chinese Room Argument—as it has come to be called—has a simple three-step structure.

- Programs are syntactical.

- Minds have a semantics.

- Syntax is not the same as, nor by itself sufficient for, semantics.

Therefore programs are not minds. QED.

In order to refute the argument you would have to show that one of those premises is false, and that is not a likely prospect.

It now seems to me that the argument if anything concedes too much to Strong AI in that it concedes that the theory was at least false. I now think it is incoherent, and here is why. Ask yourself what fact about the machine I am now writing this on makes its operations syntactical or symbolic. As far as its physics is concerned it is just a very complex electronic circuit. The fact that makes these electrical pulses symbolic is the same sort of fact that makes the ink marks on the pages of a book into symbols: we have designed, programmed, printed, and manufactured these systems so that we can treat and use these things as symbols. Syntax, in short, is not intrinsic to the physics of the system but is in the eye of the beholder. Except for the few cases of conscious agents actually going through a computation, adding 2+2 to get 4 for example, computation is not an intrinsic process in nature like digestion or photosynthesis but exists only relative to some agent who gives a computational interpretation to the physics. The upshot is that computation is not intrinsic to nature but is relative to the observer or user.

The consequence for our present discussion is that the question “Is the brain a digital computer?” lacks a clear sense. If it asks, “Is the brain intrinsically a digital computer?” the answer is trivially no, because nothing is intrinsically a digital computer; something is a computer only relative to the assignment of a computational interpretation. If it asks, “Can you assign a computational interpretation to the brain?” the answer is trivially yes, because you can assign a computational interpretation to anything. For example, the window in front of me is a very simple computer. Window open=1, window closed=0. You could never discover computational processes in nature independently of human interpretation because any physical process you might find is computational only relative to some interpretation. This is an obvious point and I should have seen it long ago.
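The window example can be made concrete with a short sketch. It assumes a made-up record of window positions and adds a second, rival assignment of symbols that is not in the text; the function names are likewise invented for the illustration. The same physical sequence yields different "computations" depending solely on which interpretation is applied.

```python
# A hypothetical record of a window's physical states over time.
window_states = ["open", "closed", "open", "open"]

# Two equally arbitrary assignments of symbols to the same physical states.
interpretation_a = {"open": 1, "closed": 0}  # the assignment used in the text
interpretation_b = {"open": 0, "closed": 1}  # an assumed rival assignment


def read_bits(states, interpretation):
    """Translate physical states into bits under a chosen interpretation."""
    return [interpretation[state] for state in states]


def as_number(bits):
    """Read a list of bits as an unsigned binary number, most significant bit first."""
    value = 0
    for bit in bits:
        value = value * 2 + bit
    return value


if __name__ == "__main__":
    print(as_number(read_bits(window_states, interpretation_a)))  # prints 11
    print(as_number(read_bits(window_states, interpretation_b)))  # prints 4
```

Nothing about the window differs between the two readings; only the assigned mapping does.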

The upshot is that Strong AI, which prides itself on its “materialism” and on the view that the brain is a machine, is not nearly materialistic enough. The brain is indeed a machine, an organic machine; and its processes, such as neuron firings, are organic machine processes. But computation is not a machine process like neuron firing or internal combustion; rather, computation is an abstract mathematical process that exists only relative to conscious observers and interpreters. Such observers as ourselves have found ways to implement computation on silicon-based electrical machines, but that does not make computation into something electrical or chemical.

This is a different argument from the Chinese Room, but it is deeper. The Chinese Room Argument showed that semantics is not intrinsic to syntax; this shows that syntax is not intrinsic to physics.

I reject Strong AI but accept Weak AI. Of the authors under review, Dennett holds a version of Strong AI; Roger Penrose rejects even Weak AI. He thinks the mind cannot even be simulated on a computer. Gerald Edelman accepts the Chinese Room Argument against Strong AI and presents some other arguments of his own, but he accepts Weak AI; indeed he makes powerful use of computer models in his research on the brain, as we will see.

To summarize the general position, then, of how brain research can proceed in answering the questions that bother us: the brain is an organ like any other; it is an organic machine. Consciousness is caused by lower-level neuronal processes in the brain and is itself a feature of the brain. Because it is a feature that emerges from certain neuronal activities, we can think of it as an “emergent property” of the brain. Computers play the same role in studying the brain that they play in any other discipline. They are immensely useful devices for simulating brain processes. But the simulation of mental states is no more a mental state than the simulation of an explosion is itself an explosion.

2.

I said that until recently there was a reluctance among scientists to tackle the problem of consciousness. Now all that has changed, and there has been a spate of books on the subject by biologists, mathematicians, and physicists as well as philosophers. Of the books under review, the one that attempts to give the simplest and most direct account of what we know about how the brain works is Crick’s The Astonishing Hypothesis: The Scientific Search for the Soul. The astonishing hypothesis, on which the book is based, is

that “You,” your joys and your sorrows, your memories and your ambitions, your sense of personal identity and free will, are in fact no more than the behavior of a vast assembly of nerve cells and their associated molecules.

I have seen reviews of Crick’s book which complained that it is not at all astonishing in this day and age to be told that what goes on in our skulls is responsible for the whole of our mental life, and that anyone with a modicum of scientific education will accept Crick’s hypothesis as something of a platitude. I do not think this is a fair complaint. There are two parts to Crick’s astonishment. The first is that all of our mental life has a material existence in the brain—and that is indeed not very astonishing—but, more interestingly, the specific mechanisms in the brain that are responsible for our mental life are neurons and their associated molecules, such as neurotransmitter molecules. I, for one, am always amazed by the specificity of biological systems, and in the case of the brain, the specificity takes a form you could not have predicted just from knowing what it does. If you were designing an organic machine to pump blood you might come up with something like a heart, but if you were designing a machine to produce consciousness, who would think of a hundred billion neurons?

Crick is not clear about distinguishing causal explanations of consciousness from reductionist eliminations of consciousness. The passage I quoted above makes it look as if he is denying that we have conscious experiences in addition to having neuron firings. But I think that a careful reading of the book shows that what he means is something like the claim that I advanced earlier: all of our conscious experiences are explained by the behavior of neurons, and are themselves emergent properties of the system of neurons. (An emergent property of a system is a property that is explained by the behavior of the elements of the system; but it is not a property of any individual elements and it cannot be explained simply as a summation of the properties of those elements. The liquidity of water is a good example: the behavior of the H2O molecules explains liquidity but the individual molecules are not liquid.)

The fact that the explanatory part of Crick’s claim is standard neurobiological orthodoxy today, and will astonish few of the people who are likely to read the book, should not prevent us from agreeing that it is amazing how the brain does so much with such a limited mechanism. Furthermore, not everyone who works in this field agrees that the neuron is the essential functional element. Penrose believes neurons are already too big, and he wants to account for consciousness at a level of much smaller quantum mechanical phenomena. Edelman thinks neurons are too small for most functions and regards the functional elements as “neuronal groups.”

Crick takes visual perception as his wedge for trying to break into the problem of consciousness. I think this is a good choice, if only because so much work in the brain sciences has already been done on the anatomy and physiology of seeing. But one problem with it is that the operation of the visual system is astonishingly complicated. The simple act of recognizing a friend’s face in a crowd requires far more by way of processing than we are able to understand at present. My guess is—and it is just a guess—that when we finally break through to understanding how neuron firings cause consciousness or sentience, it is likely to come from understanding a simple system. However, this does not detract from the interest, indeed fascination, with which we are led by Crick through the layers of cells in the retina to the lateral geniculate nucleus and back to the visual cortex and then through the different areas of the cortex.

How does the neuron work? A neuron is a cell like any other, with a cell membrane and a central nucleus. Neurons differ, however, in certain remarkable ways, both anatomically and physiologically, from other sorts of cells. There are many different types of neuron, but the typical garden-variety neuron has on it a longish, thread-like protuberance called an axon growing out of one side, and a bunch of spiny, somewhat shorter, spiky threads called dendrites on the other side. Each neuron receives signals through its dendrites, processes them in its cell body or soma, and then fires a signal through its axon to the next neurons in line. The neuron fires by sending an electrical impulse down the axon. The axon of one neuron, however, is not directly connected to the dendrites of other neurons, but rather the point at which the signal is transmitted from one cell to the next is a small gap called a synapse. A synapse typically consists of a bump on the axon, called a “bouton,” which sticks out in roughly the shape of a mushroom, and typically fits next to a spine-like protrusion on the surface of the dendrite. The area between the bouton and the postsynaptic dendritic surface is called the synaptic cleft, and when the neuron fires the signal is transmitted across it.

The signal is transmitted not by a direct electrical connection between bouton and dendritic surface but by the release of small amounts of fluids called neurotransmitters. When the electrical signal arrives from the cell body down the axon to the end of a bouton, it causes the release of neurotransmitter fluids into the synaptic cleft. These make contact with receptors on the postsynaptic dendritic side. This causes gates to open, and charged ions flow in or out of the dendritic side, thus altering the electrical potential of the dendrite. The pattern then is this: there is an electrical signal on the axon side, followed by chemical transmission in the synaptic cleft, followed by an electrical signal on the dendrite side. The cell gets a whole bunch of signals from its dendrites, it does a summation of them in its soma, and on the basis of the summation adjusts its rate of firing to the next cells in line.

Neurons receive both excitatory signals, that is signals which tend to increase their rate of firing, and inhibitory signals, signals which tend to decrease their rate of firing. Oddly enough, though each neuron receives both excitatory and inhibitory signals, each neuron sends out only one or the other type of signal. As far as we know, with few exceptions, a neuron is either an excitatory or an inhibitory neuron.
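The summation just described can be sketched as a toy rate model. This is a deliberately crude illustration, not a physiological model: the linear rule, the `gain` and `max_rate` parameters, the arbitrary units, and the names `Neuron` and `updated_rate` are all assumptions introduced here. It captures only the two points in the text: each sending neuron is of one type, and the receiving cell adjusts its firing rate on the basis of the summed input.

```python
from dataclasses import dataclass


@dataclass
class Neuron:
    rate: float  # current firing rate, in arbitrary units
    sign: int    # +1 if excitatory, -1 if inhibitory; one type per neuron


def updated_rate(baseline, presynaptic, gain=1.0, max_rate=100.0):
    """Return a receiving cell's new firing rate from its summed inputs.

    Excitatory inputs push the rate up, inhibitory inputs push it down.
    The result is clamped to the assumed range [0, max_rate].
    """
    net_drive = sum(n.sign * n.rate for n in presynaptic)
    return min(max(baseline + gain * net_drive, 0.0), max_rate)


if __name__ == "__main__":
    inputs = [Neuron(rate=5.0, sign=+1), Neuron(rate=3.0, sign=-1)]
    print(updated_rate(10.0, inputs))  # prints 12.0
```

With one excitatory input at 5 and one inhibitory input at 3, a cell with a baseline of 10 moves to 12; a strong enough inhibitory input drives the rate to the floor of zero.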

Now what is astonishing is this: as far as our mental life is concerned, that story I just recounted about neurons is the entire causal basis of our conscious life. I left out various details about ion channels, receptors, and different types of neurotransmitters, but our entire mental life is caused by the behavior of neurons and all they do is increase or decrease their rates of firing. When, for example, we store memories it seems we must store them somehow in the synaptic connections between neurons. Crick is remarkably good not only at summarizing the simple account of brain functioning but also at trying to integrate it with work in many related fields, including psychology, cognitive science, and the use of computers for neuronal net modeling, among others.

Crick is generally hostile to philosophers and philosophy, but the price of having contempt for philosophy is that you make philosophical mistakes. Most of these do not seriously damage his main argument, but they are annoying and unnecessary. I will mention three philosophical errors that I think are really serious.

First, he misunderstands the problem of qualia. He thinks it is primarily a problem about the way one person has knowledge of another person’s qualia. “The problem springs from the fact that the redness of red that I perceive so vividly cannot be precisely communicated to another human being.” But that is not the problem, or rather it is only one small part of the problem. Even for a system of whose qualia I have near perfect knowledge, myself for example, the problem of qualia is serious. It is this: How is it possible for physical, objective, quantitatively describable neuron firings to cause qualitative, private, subjective experiences? How, to put it naively, does the brain get us over the hump from electrochemistry to feeling? That is the hard part of the mind-body problem that is left over after we see that consciousness must be caused by brain processes and is itself a feature of the brain.

Furthermore, the problem of qualia is not just an aspect of the problem of consciousness, it is the problem of consciousness. You can talk about various other features of consciousness (for example, the powers that the visual system has to discriminate colors), but to the extent that you are talking about conscious discrimination you are talking about qualia. For this reason I think that the term “qualia” is misleading because it suggests that the qualia of a state of consciousness might be carved off from the rest of consciousness and set on one side, as if you could talk about the rest of the problem of consciousness while ignoring the subjective, qualitative feel of consciousness. But you can’t set qualia on one side, because if you do there is no consciousness left over.

Second, Crick is inconsistent in his account of the reduction of consciousness to neuron firings. He talks reductionist talk, but the account he gives is not at all reductionist in nature. Or, rather, there are at least two senses of “reduction” that he fails to distinguish. In one sense, reduction is eliminative. We get rid of the reduced phenomenon by showing it is really something else. Sunsets are a good example. The sun does not really set over Mount Tamalpais; rather the appearance of the setting sun is an illusion entirely explained by the rotation of the earth on its axis relative to the sun. But in another sense of reduction we explain a phenomenon but do not get rid of it. Thus the solidity of an object is entirely explained by the behavior of molecules, but this does not show that no object is really solid or that there is no distinction between, say, solidity and liquidity. Now Crick talks as if he wants an eliminative reduction of consciousness but in fact the direction in his book is toward a causal explanation. He does not attempt to show that consciousness does not really exist. He gives the game away when he says, entirely correctly in my view, that consciousness is an emergent property of the brain. Complex sensations, he says, are emergent properties that “arise in the brain from the interactions of its many parts.”

The puzzle is that Crick preaches eliminative reductionism when he practices causal emergentism. The standard argument in philosophy against an eliminative reduction of consciousness is that even if we had a perfect science of neurobiology there would still be two distinct features, the neurobiological pattern of neuron firings and the feeling of pain, for example. The neuron firings cause the feeling, but they are not the same thing as the feeling. In different versions this argument is advanced, among other philosophers, by Thomas Nagel, Saul Kripke, Frank Jackson, and the present author. Crick mistakenly endorses an argument against this anti-reductionism, one made by Paul and Patricia Churchland. Their argument is a bad argument and I might as well say why I think so. They think the standard philosophical account is based on the following obviously flawed argument:

- Sam knows his feeling is a pain.

- Sam does not know that his feeling is a pattern of neuron firings.

- Therefore Sam’s feeling is not a pattern of neuron firings.2

The Churchlands point out that this argument is fallacious, but they are mistaken in thinking that it is the argument used by their opponents. The argument they are attacking is “epistemic”; it is about knowledge. But the argument for the irreducibility of consciousness is not epistemic; it is about how things are in the world. It is about ontology. There are different ways of spelling it out but the fundamental point of each is the same: the sheer qualitative feel of pain is a very different feature of the brain from the pattern of neuron firings that cause the pain. So you can get a causal reduction of pain to neuron firings but not an ontological reduction. That is, you can give a complete causal account of why we feel pain, but that does not show that pains do not really exist.

Third, Crick is unclear about the logical structure of the explanation he is offering, and even on the most sympathetic reading it seems to be inconsistent. I have so far been interpreting him as seeking a causal explanation of visual consciousness, and his talk of “mechanism” and “explanation” supports that interpretation. But he never says clearly that he is offering a causal explanation of how brain processes cause visual awareness. His preferred way of speaking is to say that he is looking for the “neural correlates” of consciousness. But on his own terms “neural correlates” cannot be the right expression. First, a correlation is a relation between two different things, but a relation between two things is inconsistent with the eliminative reductionist line that Crick thinks he is espousing. On the eliminative reductionist view there should be only one thing, neuron firings. Second, and more important, even if we strip away the reductionist mistake, correlations by themselves would not explain anything. Think of the sight of lightning and the sound of thunder. The sight and sound are perfectly correlated, but without a causal theory, you do not have an explanation.

Furthermore, Crick is unclear about the relation between the visual experiences and the objects in the world that they are experiences of. Sometimes he says the visual experience is a “symbolic description” or “symbolic interpretation” of the world. Sometimes he says the neuronal processes “represent” objects in the world. He is even led to deny that we have direct perceptual awareness of objects in the world, and for that conclusion he uses a bad argument right out of seventeenth-century philosophy. He says that since our interpretations can occasionally be wrong, we have no direct knowledge of objects in the world. This argument is in both Descartes and Hume, but it is a fallacy. From the fact that our perceptual experiences are always mediated by brain processes (how else could we have them?) and the fact that they are typically subject to illusions of various kinds, it does not follow that we never see the real world, but only “symbolic descriptions” or “symbolic interpretations” of the world. In the standard case, such as when I look at my watch, I really see the real watch. I do not see a “description” or an “interpretation” of the watch.

I believe that Crick has been badly advised philosophically, but fortunately you can strip away the philosophical confusions and still find an excellent book. What I think in the end he wants, and what in any case I want, is a causal explanation of consciousness, whether visual or otherwise. Photons reflected off objects attack the photoreceptor cells of the retina and this sets up a series of neuronal processes (the retina being a part of the brain), which eventually result, if all goes well, in a visual experience that is a perception of the very object that originally reflected the photons. That is the right way to think of it, as I hope he would agree.

What, then, is Crick’s solution to the problem of consciousness? One of the most appealing features of Crick’s book is his willingness, indeed eagerness, to admit how little we know. But given what we do know he makes some speculations. To explain his speculations about consciousness, I need to say something about what neurobiologists call “the binding problem.” We know that the visual system has cells and indeed regions that are specially responsive to particular features of objects such as color, shape, movement, lines, angles, etc. But when we see an object we have a unified experience of a single object. How does the brain bind all of these different stimuli into a single, unified experience of an object? The problem extends across the different modes of perception. All of my experiences at present are part of one big unified conscious experience (Kant, with his usual gift for catchy phrases, called this “the transcendental unity of apperception”).

Crick says the binding problem is “the problem of how these neurons temporarily become active as a unit.” But that is not the binding problem; rather it is one possible approach to solving the binding problem. For example, a possible approach to solving the binding problem for vision has been proposed by various researchers, especially Wolf Singer and his colleagues in Frankfurt. They think that the solution to the binding problem might consist in the synchronization of firing of spatially separated neurons that correspond to the different features of an object. Neurons responsive to shape, color, and movement, for example, fire in synchrony in the general range of forty firings per second (forty hertz). Crick and his colleague Christof Koch take this hypothesis a step further and suggest that maybe synchronized neuron firing in this range (roughly forty hertz, but as low as thirty-five and as high as seventy-five) might be the “brain correlate” of visual consciousness.

Furthermore, the thalamus appears to play a central role in consciousness, and in particular consciousness appears to depend on the circuits connecting the thalamus and the cortex, especially cortical layers four and six. So Crick speculates that perhaps synchronized firing in the range of forty hertz in the networks connecting the thalamus and the cortex might be the key to solving the problem of consciousness.

I admire Crick’s willingness to speculate, but the nature of the speculation reveals how far we have to go. Suppose it turned out that consciousness is invariably correlated with neuron firing rates of forty hertz in neuronal circuits connecting the thalamus to the cortex. Would that be an explanation of consciousness? No. We would never accept it, as it stands, as an explanation. We would regard it as a tremendous step forward, but we would still want to know, how does it work? It would be like knowing that car movements are “correlated with” the oxidization of hydrocarbons under the hood. You still need to know the mechanisms by which the oxidization of hydrocarbons produces, i.e., causes, the movement of the wheels. Even if Crick’s speculations turn out to be 100 percent correct, we still need to know the mechanisms whereby the neural correlates cause the conscious feelings, and we are a long way from even knowing the form such an explanation might take.

Crick has written a good and useful book. He knows a lot, and he explains it clearly. Most of my objections are to his philosophical claims and presuppositions, but when you read the book you can ignore the philosophical parts and just learn about the psychology of vision, and brain science. The limitations of neurobiological parts of the book are the limitations of the subject right now: we do not know how the psychology of vision and neurophysiology hang together, and we do not know how brain processes cause consciousness, whether visual consciousness or otherwise.

3.

Of the books under review perhaps the most ambitious is Penrose’s Shadows of the Mind. This is a sequel to his earlier The Emperor’s New Mind and many of the same points are made, with further developments and answers to objections to his earlier book. The book divides into two equal parts. In the first he uses a variation of Gödel’s famous proof of the incompleteness of mathematical systems to try to prove that we are not computers and cannot even be simulated on computers. Not only is Strong AI false, Weak AI is too. In the second half he provides a lengthy explanation of quantum mechanics with some speculations on how a quantum mechanical theory of the brain might explain consciousness in a way that he thinks classical physics cannot possibly explain it. This, by the way, is the only book I know where you can find lengthy and clear explanations of two of the most important discoveries of the twentieth century, Gödel’s incompleteness theorem and quantum mechanics.

I admire Penrose and his books enormously. He is brash, enthusiastic, courageous, and often original. Also, I agree with him that the computational model of the mind is misguided, but for reasons I briefly explained earlier, and not for his reasons. My main disagreement is that his use of Gödel’s proof does not seem to me to succeed, and I want to say why it does not.

The first version of this argument goes back to an article by John Lucas, an Oxford philosopher, published in the early Sixties. Lucas argued that Gödel had shown that there are statements in mathematical systems that cannot be proven within those systems, but which we can see to be true. It follows, according to Lucas, that our understanding exceeds that of any computer. A computer uses only algorithms, that is, sets of precise rules that specify a sequence of actions to be taken in order to solve a problem or prove a proposition. But no algorithm can prove what we can see to be true. It follows that our knowledge of those truths cannot be algorithmic. But since computers use only algorithms (a program is an algorithm), it follows that we are not computers.

There were many objections to Lucas’s argument, but an obvious one is this: from the fact that our knowledge of these truths does not come by way of a theorem-proving algorithm it does not follow that we use no algorithms at all in arriving at these conclusions. Not all algorithms are theorem-proving algorithms. It is possible that we are using some computational procedure that is not a theorem-proving procedure.

Penrose revives the argument with a beautiful version of Gödel’s argument, one that was first made by Alan Turing and is usually called “the proof of the unsolvability of the halting problem.” Since even I, a nonmathematician, think I can understand this argument, and since we are not going to be able to assess its philosophical significance if we don’t try to understand it, I summarize it in the box at the end of this article for those willing to try to follow it.

What are we to make of the argument that we do not make use of algorithms to ascertain what we know? As it stands it appears to contain a fallacy, and we can answer it with a variant of the argument we used against Lucas. From the fact that no “knowably sound” algorithm can account for our mathematical abilities it does not follow that there might not be an algorithm which we did not and perhaps even could not know to be correct which accounted for those abilities. Suppose that we are following a program unconsciously and that it is so long and complicated that we could never grasp the whole thing at once. We can understand each step, but there are so many thousands of steps that we could never grasp the whole program at once. That is, Penrose’s argument rests on what we can know and understand, but it is not a requirement of computational cognitive science that people be able to understand the programs they are supposed to be using to solve cognitive problems.

This objection was made by Hilary Putnam in his recent review of Penrose in The New York Times, and Penrose responded with an indignant letter.3 Penrose has long been aware of this objection and discusses it at some length in his book. In fact, he considers and answers in detail some twenty different objections to his argument. He says the idea that we are using algorithms that we don’t or can’t know or understand is “not at all plausible,” and he gives various reasons for this claim. For the sake of my discussion, I will assume Penrose is right that it is implausible to assume we are using unknown algorithms when we arrive at mathematical conclusions. But even assuming that it is implausible and that we become quite convinced that we are not using some unknown algorithms when we think, this technical objection prepares the way for two more serious philosophical objections. First, what does he take the aim of computational models of the mind to be? He seems to think that when it comes to mathematical reasoning the aim is to get programs that can do what human beings can do by way of establishing mathematical truths—making “truth judgments” as he calls it. He talks as if there were some kind of competition between human beings and computers, and he is eager to prove that no computer is ever going to beat us at our game of mathematical reasoning.

Well, in the early days of computational cognitive science a lot of people did think that the job of that science was to design programs that could prove theorems, discover scientific hypotheses, etc. But nowadays that is not the main project of computational cognitive science; it is only one part of the project. Computational cognitive scientists typically assume that there is a level of description of the brain which is between the level of conscious thought processes and the level of brute neurophysiological processes; and they try to get their computers to simulate what goes on in cognition at that level. For example, computational theories of vision typically postulate that the visual system can be simulated by various unconscious computational rules in order to figure out the shape of an object from the limited sensory input that the system gets. I think these theories are mistaken in postulating an intermediate level between consciousness and neurophysiology, but the point for the present argument is that their project is immune to Penrose’s objection.

Penrose’s discussion is always about the conscious thought processes of mathematicians when they are proving mathematical results, and he wants to know whether theorem-proving algorithms can account for all of their successes. They can’t; but that is not the question at issue in computational cognitive science, at least not as far as Weak AI is concerned. The question is, can we simulate on computers the cognitive processes of humans, whether mathematicians or otherwise, in the same way that we simulate hurricanes, the flow of money in the Brazilian economy, or the processes of internal combustion in a car engine? The aim is not to get an algorithm that people are trying to follow, but rather one which accurately describes what is going on inside them. In this project, simulating failures is just as interesting as simulating successes. That is why, for example, in computational studies of vision, the models of how the brain produces optical illusions are just as important as those of how the brain produces accurate vision. We are trying to figure out how it works, not to compete with it. Nothing whatever in Penrose’s arguments militates against a computational model of the brain, so construed.

But, says Penrose, such computational models don’t guarantee truth judgments. They do not guarantee that the system will come up with the right answers. He is right about that, but it is beside the point. For every time a mathematician comes up with the right answer there will be some possible simulation of his cognitive processes that models what he did, and every time he comes up with the wrong answer there will also be a possible simulation. But the simulations know nothing of getting it right or getting it wrong or making “truth judgments” or any of the rest of it, any more than the simulations of the Brazilian economy are about how to get rich in Brazil.

The aspect of the process which is simulated has to do with unconscious information processing; the aspect which cannot be simulated, according to Penrose’s argument, has to do with conscious theorem proving. The fact that you cannot simulate the conscious theorem-proving efforts with a theorem-proving algorithm is just irrelevant to the question of whether the other aspects of the very same processes can be simulated. The simulations are simply efforts to make models of what actually happens. So the real fallacy in Penrose’s argument is more radical than the technical objection I made above: from the fact that there is no theorem-proving algorithm that establishes the mathematical truths that we know, it simply does not follow that there is no computational simulation of what happens in our brains when we arrive at mathematical results. And that is all that computational cognitive science, in its Weak AI version, requires.

To summarize this point: Penrose fails to distinguish algorithms that mathematicians are consciously (or unconsciously, for that matter) following, in the sense of trying to carry out the steps in the algorithm, from algorithms that they are not following but which accurately simulate or model what is happening in their brains when they think. Nothing in his argument shows that the second kind of algorithm is impossible. The real objection to the second kind of algorithm is not one he makes. It is that such computer models don’t really explain anything, because the algorithms play no causal role in the behavior of the brain. They simply provide simulations or models of what is happening.

The second objection to Penrose is that his introduction of such epistemic notions as “known” and “sound” into Gödel’s original proof is not as innocent as he seems to think. In presenting the proof we had to use the assumption that A is “known” and “sound.” But that assumption can’t itself be part of A, so the proof rests on an assumption that is not part of the proof, and we can’t put that assumption into the proof, because if we do, we get another larger A, call it “A*.” But to use A* we would have to assume that it in turn is known and sound and that would require yet another assumption, which would give us A**, and so on ad infinitum. The problem is that “knowability” is an essential part of his argument, but it is not clear what it can mean in this context.

In the second part of the book, Penrose summarizes the current state of our knowledge of quantum mechanics and tries to apply its lessons to the problem of consciousness. Much of this is difficult to read, but among the relatively nontechnical accounts of quantum mechanics that I have seen this seems to me the clearest. If you have ever wanted to know about superposition, the collapse of the wave function, the paradox of Schroedinger’s cat, and the Einstein-Podolsky-Rosen phenomena, this may be the best place to find out. But what has all this to do with consciousness? Penrose’s speculation is that the computable world of classical physics is unable to account for the noncomputational character of the mind, that is, the features of the mind which he thinks cannot even be simulated on a computer. Even a quantum mechanical computer with its random features would not be able to account for the essentially noncomputational aspects of human consciousness. But Penrose hopes that when the physics of quantum mechanics is eventually completed, when we have a satisfactory theory of quantum gravity, it “would lead us to something genuinely non-computable.”

And how is this supposed to work in the brain? According to Penrose, we cannot find the answer to the problem of consciousness at the level of neurons because they are too big; they are already objects explainable by classical physics. We must look to the inner structure of the neuron, and there we find a structure called a “cytoskeleton,” which is the structure that holds the cell together and is the control system for the operation of the cell. The cytoskeleton contains small tubelike structures called “microtubules,” and these, according to Penrose, have a crucial role in the functioning of synapses. Here is the hypothesis he presents:

On the view that I am tentatively putting forward, consciousness would be some manifestation of this quantum-entangled internal cytoskeletal state and of its involvement in the interplay…between quantum and classical levels of activity.

In other words, the cytoskeletal stuff is all mixed up with quantum mechanical phenomena, and when this micro level gets involved with the macro level, consciousness emerges. Neurons are not on the right level to explain consciousness. They might be just a “magnifying device” for the real action, which is at the cytoskeletal level. The neuronal level of description might be “a mere shadow” of the deeper level where we have to seek the physical basis of the mind.

What are we to make of this? I do not object to its speculative character, because at this point any account of consciousness is bound to contain speculative elements. The problem with these speculations is that they do not adequately speculate on how we might solve the problem of consciousness. They are of the form: If we had a better theory of quantum mechanics and if that theory were noncomputable, then maybe we could account for consciousness in a noncomputational way. But how? It is not enough to say that the mystery of consciousness might be solved if we had a quantum mechanics even more mysterious than the present one. How is it supposed to work? What are the causal mechanisms supposed to be?

There are several attempts besides Penrose’s to give a quantum mechanical account of consciousness. The standard complaint is that these accounts, in effect, want to substitute two mysteries for one. Penrose is unique in that he wants to add a third. To the mysteries of consciousness and quantum mechanics he wants to add a third mysterious element, a yet-to-be-discovered noncomputable quantum mechanics.

The apparent motivation for this line of speculation is based on a fallacy. Supposing he were right that there is no level of description at which consciousness can be simulated, he thinks that the explanation of consciousness must for that reason be given in terms of entities that cannot be simulated. But logically speaking this conclusion does not follow. From the proposition that consciousness cannot be simulated on a computer it does not follow that the entities which cause consciousness cannot be simulated on a computer. Furthermore, there is a persistent confusion in his argument over the notion of computability. He thinks that because the behavior of neurons can be simulated and is therefore computable this makes each neuron somehow into a little computer, the “neural computer” as he calls it. But this is another fallacy. The trajectory of a baseball is computable and can be simulated on a computer. But this does not make baseballs into baseball computers. To summarize, there are really two fallacies at the core of his argument for a noncomputational quantum mechanical explanation of consciousness. The first is his supposition that if something cannot be simulated on a computer then its behavior cannot be explained by anything that can be simulated, and the second is his tacit supposition that anything you can simulate on a computer thereby intrinsically becomes a digital computer.

I have not explored the deeper metaphysical presuppositions behind his entire argument. He comes armed with the credentials of science and mathematics, but in fact he is a classical metaphysician, a self-declared Platonist. He believes we live in three worlds, the physical, the mental, and the mathematical. He wants to show how each world grounds the next in an endless circle. I believe this enterprise is incoherent but I will not attempt to show that here. I will simply assert the following without argument: we live in one world, not two or three or twenty-seven. The main task of a philosophy and science of consciousness right now is to show how consciousness is a biological part of that world, along with digestion, photosynthesis, and all the rest of it. Admiring Penrose and his work enormously, I conclude that the chief value of Shadows of the Mind is that from it you can learn a lot about Gödel’s theorem and about quantum mechanics. You will not learn much about consciousness.

In the next issue I will take up three other accounts of consciousness, those presented by Gerald Edelman, Daniel Dennett, and Israel Rosenfield.

GÖDEL’S PROOF & COMPUTERS

This is my own version of Penrose’s version of Turing’s version of Gödel’s incompleteness proof, which Penrose uses to try to prove Lucas’s claim. It is usually called “the proof of the unsolvability of the halting problem”:

Penrose does not provide a rigorous proof, but gives a summary, and the following is a summary of his summary. Some problems with his account are that he does not distinguish computational procedures from computational results, but assumes without argument that they are equivalent; he leaves out quantifiers and he introduces epistemic notions of “known” and “knowable,” which are not part of the original proofs. In what follows, for greater clarity I have tried to remove some of the ambiguities in his original and I have put my own comments in parentheses.

Step 1. Some computational procedures stop (or halt). For example, if we tell our computer to start with the numbers 1, 2, etc., and search for a number larger than 8 it will stop on 9. But some do not stop. For example, if we tell our computer to search for an odd number that is the sum of two even numbers it will never stop, because there is no such number.

Step 2. We can generalize this point. For any number, n, we can think of the computational procedures C1, C2, C3, etc., on n as dividing into two kinds, those that stop and those that do not stop. Thus the procedure consisting of a search for a number bigger than n stops at n+1 because the search is then over. The procedure consisting of a search for an odd number that is the sum of a series of even numbers never stops, because no procedure can ever find any such number.

Step 3. Now how can we find out which procedures never stop? Well, suppose we had another procedure (or finite set of procedures) A which, when it stops, would tell us that the computation C(n) does not stop. Think of A as the sum total of all the known and sound methods for deciding when computational procedures stop.

So if A stops then C(n) does not stop.

(Penrose asks us just to assume as a premise that there are such procedures and that we know them. This is where epistemology gets in. We are to think of these procedures as known to us and as “sound.” It is a nonstandard use of the notion of soundness to apply it to computational procedures. What he means is that such a procedure always gives true or correct results.)

Step 4. Now think of a series of computations numbered C1(n), C2(n), C3(n), etc… These are all the computations that can be performed on n—all the possible computations. And we are to think of them as numbered in some systematic way.

Step 5. But since we have now numbered all the possible computations on n, we can think of A as a computational procedure which, given any two numbers q and n, tries to determine whether Cq(n) never stops. Suppose, for example, that q=17 and n=8. Then the job for A is to figure out whether the 17th computation on 8 stops. Thus, if A(q,n) stops, then Cq(n) does not stop. (Notice that A operates on ordered pairs of numbers q and n, but C1, C2, etc., are computations on single numbers. We get around this difference in the next step.)

Step 6. Now consider cases where q=n. In such cases, for all n,

if A(n,n) stops, then Cn(n) does not stop.

Step 7. In such cases, A has only one number n to worry about, the nth computation on the number n, and not two different numbers. But we said back in step 4 that the series C1(n), C2(n), etc., included all the computations on n, so for any n, A(n,n) has to be one member of the series Cn(n). Well, suppose that A(n,n) is the kth computation on n, that is, suppose

A(n,n)=Ck(n)

Step 8. Now examine the case of n=k. In such a case,

A(k,k)=Ck(k)

Step 9. From step 6 it follows that if A(k,k) stops, then Ck(k) does not stop.

Step 10. But substituting the identity stated in step 8 we get

if Ck(k) stops, then Ck(k) does not stop.

From which it follows that

Ck(k) does not stop.

Step 11. It follows immediately that A(k,k) does not stop either, because it is the same computation as Ck(k). And that has the result that our known, sound procedures are insufficient to tell us that Ck(k) does not stop, even though in fact it does not stop, and we know that it does not stop.

But then A can’t tell us what we know, namely

Ck(k) does not stop.

Step 12. Thus from the knowledge that A is sound we can show that there are some nonstopping computational procedures, such as Ck(k), that cannot be shown to be nonstopping by A. So we know something that A cannot tell us, so A is not sufficient to express our understanding.

Step 13. But A included all the knowably sound algorithms we had.

(Penrose says not just “known and sound” but “knowably sound.” The argument so far doesn’t justify that move, but I think he would claim that the argument works not just for anything we in fact know but for anything we could know. So I have left it as he says it.)

Thus no knowably sound set of computational rules such as A can ever be sufficient to determine that computations do not stop, because there are some, such as Ck(k), that they cannot capture. So we are not using a knowably sound algorithm to figure out what we know.

Step 14. So we are not computers.
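
For readers who find it easier to follow a construction than a summary, the diagonal move in steps 1 through 11 can be sketched in a few lines of Python. This is only a toy under loud assumptions, not Penrose's or Turing's formulation: the table `C` holds three computations rather than all of them, the procedure `A` is a deliberately feeble stand-in for "all the known and sound methods," and the helper `stops_within` and the set `KNOWN_NONSTOPPING` are names invented here for illustration. A step budget can show that a computation stops; it can never show that one does not, so a result of `False` means only "has not stopped yet."

```python
from itertools import count

# A "computation" is a generator function of one number n.
# Each `yield` is one step; returning normally means the computation stops.

def search_larger_than(n):
    """Step 1: search 1, 2, 3, ... for a number larger than n (stops at n + 1)."""
    for candidate in count(1):
        if candidate > n:
            return candidate
        yield

def search_odd_sum_of_evens(n):
    """Step 1: search for an odd number that is the sum of two even numbers."""
    for candidate in count(1, 2):               # odd numbers 1, 3, 5, ...
        for a in range(0, candidate + 1, 2):    # even first summand
            if (candidate - a) % 2 == 0:        # never true: odd minus even is odd
                return candidate
            yield

# Toy assumption: the only thing A "knows" is that computation number 1 never stops.
KNOWN_NONSTOPPING = {1}

def A(q, n):
    """Step 5: stops only when it can certify that C[q](n) does not stop."""
    if q in KNOWN_NONSTOPPING:
        return          # A stops, which certifies that C[q](n) does not stop
    while True:         # otherwise A has nothing to say and runs forever
        yield

def diagonal(n):
    """Steps 6 and 7: the one-number computation that simply runs A(n, n)."""
    yield from A(n, n)

# Step 4: a numbered table of computations (hypothetical and far from complete).
C = [search_larger_than, search_odd_sum_of_evens, diagonal]
K = C.index(diagonal)   # Step 7: A(n, n) is the K-th computation on n

def stops_within(computation, budget):
    """True if the running computation stops within `budget` steps."""
    for _ in range(budget):
        try:
            next(computation)
        except StopIteration:
            return True
    return False
```

With this table, `stops_within(C[0](8), 100)` is true, because the search for a number larger than 8 stops on 9, and `stops_within(A(1, 8), 10)` is true, because `A` certifies that computation 1 never stops. But `A(K, K)` and `C[K](K)` are the same computation, so if `A(K, K)` stopped it would be certifying that it itself does not stop. A sound `A` therefore has to stay silent about `C[K](K)`, which is the situation described in step 11.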

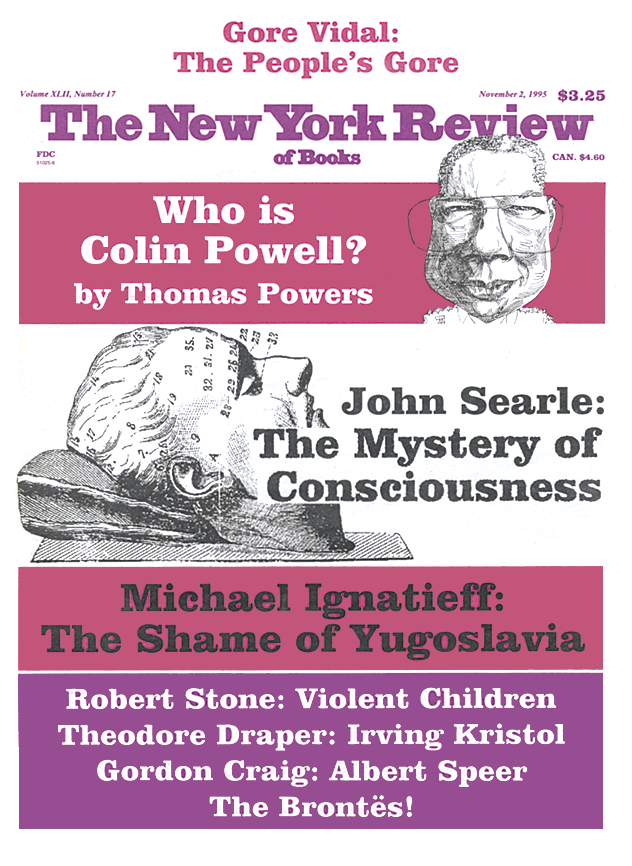

This Issue

November 2, 1995

1. “The Myth of the Computer,” The New York Review of Books, April 29, 1982, and “Minds, Brains and Programs,” Behavioral and Brain Sciences, 1980, Vol. 3, pp. 417–457.

2. They have advanced this argument in a number of places, most recently in Paul M. Churchland’s The Engine of Reason, the Seat of the Soul (MIT Press, 1995), pp. 195–208.

3. New York Times Book Review, November 20, 1994.