1.

W.B. Yeats wrote in the margin of his copy of The Genealogy of Morals, “But why does Nietzsche think the night has no stars, nothing but bats and owls and the insane moon?” Nietzsche outlined his skepticism of humanity and presented his chilling vision of the future just before the beginning of the last century (he died in 1900). The events of the century that followed, including world wars, holocausts, genocides, and other atrocities that occurred with systematic brutality, give us reason enough to worry whether Nietzsche’s skeptical view of humanity may not have been right.

Jonathan Glover, an Oxford philosopher, argues in his recent and enormously interesting “moral history of the twentieth century” that we not only must reflect on what has happened in the last century, but also “need to look hard and clearly at some monsters inside us” and to consider ways and means of “caging and taming them.”1 The end of a century—and of a millennium—is certainly a good moment to engage in critical examinations of this kind. Indeed, as the first millennium of the Islamic Hijri calendar came to an end in 1591-1592 (a thousand lunar years—shorter than solar years—after Mohammed’s epic journey from Mecca to Medina in 622 AD), Akbar, the Mughal emperor of India, engaged in just such a far-reaching scrutiny. He paid particular attention to the relations among religious communities and to the need for peaceful coexistence in the already multicultural India.

Taking note of the denominational diversity of Indians (including Hindus, Muslims, Christians, Jains, Sikhs, Parsees, Jews, and others), he laid the foundations of the secularism and religious neutrality of the state, which he insisted must ensure that “no man should be interfered with on account of religion, and anyone is to be allowed to go over to a religion that pleases him.”2 Akbar’s thesis that “the pursuit of reason” rather than “reliance on tradition” is the way to address difficult social problems is a view that has become all the more important for the world today.3

It is striking how little critical assessment of the experience of the millennium took place during its recent worldwide celebration.4 As the century and the second Gregorian millennium came to an end, the memory of the dreadful events that Glover describes with devastating effect did not seem to stir people much; nor was there much detectable interest in the challenging questions that Glover asks. The lights of celebratory glory drowned not only the stars but also the bats and the owls and the insane moon.

Nietzsche’s skepticism about ethical reasoning and his anticipation of difficulties to come were combined with an ambiguous approval of the annihilation of moral authority—“the most terrible, the most questionable, and perhaps also the most hopeful of all spectacles,” he wrote. Glover argues that we must respond to “Nietzsche’s challenge”: “The problem is how to accept [Nietzsche’s] scepticism about a religious authority for morality while escaping from his appalling conclusions.” This issue is related to Akbar’s thesis that morality can be guided by critical reasoning; in making moral judgments, Akbar argued, we must not make reasoning subordinate to religious command, or rely on “the marshy land of tradition.”

Interest in such questions was particularly strong during the European Enlightenment, which was optimistic about the reach of reason. The Enlightenment perspective has come under severe attack in recent years, and Glover adds his own powerful voice to this reproach.5 He argues that “the Enlightenment view of human psychology” has increasingly looked “thin and mechanical,” and “Enlightenment hopes of social progress through the spread of humanitarianism and the scientific outlook” now appear rather “naive.” Following an increasingly common tendency, Glover goes on to attribute many of the horrors of the twentieth century to the influence of the Enlightenment. He links modern tyranny with that perspective, noting not only that “Stalin and his heirs were in thrall to the Enlightenment,” but also that Pol Pot “was indirectly influenced by it.” But since Glover does not wish to seek solutions through the authority of religion or of tradition (in this respect, he notes, “we cannot escape the Enlightenment”), he concentrates his fire on other targets, such as reliance on strongly held beliefs. “The crudity of Stalinism,” he argues, “had its origins in the beliefs [Stalin held].” This claim is plausible enough, as is Glover’s reference to “the role of ideology in Stalinism.”

However, why is this a criticism of the Enlightenment perspective? It seems a little unfair to put the blame for the blind beliefs of dictators on the Enlightenment tradition, since so many writers associated with the Enlightenment insisted that reasoned choice was superior to any reliance on blind belief. Surely “the crudity of Stalinism” could be opposed, as it indeed was, through a reasoned demonstration of the huge gap between promise and practice, and by showing its brutality—a brutality that the authorities had to conceal through strict censorship. Indeed, one of the main points in favor of reason is that it helps us to transcend ideology and blind belief. Reason was not, in fact, Pol Pot’s main ally. He and his gang of followers were driven by frenzy and badly reasoned belief and did not allow any questioning or scrutiny of their actions. Given the cogency of Glover’s other arguments, there is something deeply puzzling about his willingness to join the fashionable chorus of attacks on the Enlightenment.

There is, however, an important question that emerges from Glover’s discussion on this subject, too. Are we not better advised to rely on our instincts when we are not able to reason clearly because of some hard-to-remove impediments to our critical thinking? The question is well illustrated by Glover’s remarks on a less harsh figure than Stalin or Pol Pot, namely Nikolai Bukharin, who, Glover notes, was not at all inclined to “turn into wood.” Glover writes that Bukharin “had to live with the tension between his human instincts and the hard beliefs he defended.” Bukharin was repelled by the actions of the regime, but the surrounding political climate, combined with his own formulaic thinking, prevented him from reasoning clearly enough about them. This, Glover writes, left him dithering between his “human instincts” and his “hard beliefs,” with no “clear victory for either side.” Glover is attracted by the idea—plausible enough in this case—that Bukharin would have done better to be guided by his instincts. Whether or not we see this as the basis of a general rule, Glover here poses an interesting argument about the need to take account of the situation in which reasoning takes place—and that argument deserves attention (no matter what we make of the alleged criminal tendencies of the Enlightenment).

2.

The possibility of reasoning is a strong source of hope and confidence in a world darkened by horrible deeds. It is easy to understand why this is so. Even when we find something immediately upsetting, or annoying, we are free to question that response and ask whether it is an appropriate reaction and whether we should really be guided by it. We can reason about the right way of perceiving and treating other people, other cultures, other claims, and examine different grounds for respect and tolerance. We can also reason about our own mistakes and try to learn not to repeat them. For example, the Japanese novelist and visionary social theorist Kenzaburo Oë argues powerfully that the Japanese nation, aided by an understanding of its own “history of territorial invasion,” has reason enough to remain committed to “the idea of democracy and the determination never to wage a war again.”6

Intellectual inquiry, moreover, is needed to identify actions and policies that are not evidently injurious but which have that effect. For example, famines can remain unchecked on the mistaken presumption that they cannot be averted through immediate public policy. Starvation in famines results primarily from a severe reduction in the food-buying ability of a section of the population that has become destitute through unemployment, diminished markets, disruption of agricultural activities, or other economic calamities. The economic victims are forced into starvation whether or not there is also a diminution of the total supply of food. The unequal deprivation of such people can be immediately countered by providing employment at relatively low wages through emergency public programs, which can help them to share the national food supply with others in the community.

Famine, like the Devil, takes the hindmost (rarely more than 5 percent of the population is affected—almost never more than 10 percent), and reducing the relative deprivation of destitute people by augmenting their incomes can rapidly and dramatically reduce their absolute deprivation in the amount of food obtained by them. By encouraging critical public discussion of these issues, democracy and a free press can be extremely important in preventing famine. Otherwise, unreasoned pessimism, masquerading as composure based on realism and common sense, can serve to “justify” disastrous inaction and an abdication of public responsibility.7

Similarly, environmental deterioration frequently arises not from any desire to damage the world but from thoughtlessness and lack of reasoned action—separate or joint—and this can end up producing dreadful results.8 To prevent catastrophes caused by human negligence or obtuseness or callous obduracy, we need practical reason as well as sympathy and commitment.

Attacks on ethics based on reason have come recently from several different directions. Apart from the claim that “the Enlightenment view of human psychology” neglects many human responses (as Glover argues), we also hear the claim that to rely primarily on reasoning in the ethics of human behavior involves a neglect of culture-specific influences on values and conduct. People’s thoughts and identities are fairly comprehensively determined, according to this claim, by the tradition and culture in which they are reared rather than by analytical reasoning, which is sometimes seen as a “Western” practice. We must examine whether the reach of reasoning is really compromised either by (1) the undoubtedly powerful effects of human psychology, or (2) the pervasive influence of cultural diversity. Our hopes for the future and the ways and means of living in a decent world may greatly depend on how we assess these criticisms.

Jonathan Glover’s arguments for the need for a “new human psychology” take account of the ways that politics and psychology affect each other. People can indeed be expected to resist political barbarism if they instinctively react against atrocities. We have to be able to react spontaneously and resist inhumanity whenever it occurs. If this is to happen, the individual and social opportunities for developing and exercising moral imagination have to be expanded. We do have moral resources, including, as Glover writes, “our sense of our own moral identity.” But to “function as a restraint against atrocity, the sense of moral identity most of all needs to be rooted in the human responses.” Two responses, Glover argues, are particularly important: “the tendency to respond to people with certain kinds of respect” and “sympathy: caring about the miseries and the happiness of others.” Hope for the future lies in cultivating such responses, and this line of reasoning leads Glover to conclude: “It is to psychology that we should now turn.”

Indeed, the importance of instinctive psychology and sympathetic response should be adequately recognized, and Glover is also right in believing that our hope for the future must, to a considerable extent, depend on the sympathy and respect with which we respond to things happening to others. For Glover it is therefore critically important to replace “the thin, mechanical psychology of the Enlightenment with something more complex, something closer to reality.”

While applauding the constructive features of this approach, we must also ask whether Glover is being quite fair to the Enlightenment (even without Pol Pot and assorted criminals blocking our vision). Glover does not refer to Adam Smith, but the author of The Theory of Moral Sentiments would, in fact, have greatly welcomed Glover’s diagnosis of the central importance of emotions and psychological response. While it has become fashionable in modern economics to attribute to Smith a view of human behavior that is devoid of all concerns except cool calculation of a narrowly defined personal interest, those who read his basic works know that this was not his position.9 Indeed, many issues in human psychology that Glover discusses (as part of the demands of “humanity”) were discussed by Smith as well. But Smith—no less than Diderot or Condorcet or Kant—was very much an “Enlightenment author,” whose arguments and analyses deeply influenced the thinking of his contemporaries.10

Smith may not have gone as far as another leader of the Enlightenment, David Hume, did in asserting that “reason and sentiment concur in almost all moral determinations and conclusions,”11 but both saw reasoning and feeling as deeply interrelated activities. In fact, Hume (to whom Glover also does not refer) is often seen as having precisely the opposite bias by giving precedence to passion over reason. Indeed, as Thomas Nagel puts it in his strongly argued defense of reason,

Hume famously believed that because a “passion” immune to rational assessment must underlie every motive, there can be no such thing as specifically practical reason, nor specifically moral reason either.12

The crucial issue is not whether sentiments and attitudes are seen as important (they were clearly so recognized by most of the writers whom we tend to think of as part of the Enlightenment), but whether—and to what extent—these sentiments and attitudes can be influenced and cultivated through reasoning.13 Adam Smith argued that our “first perceptions” of right and wrong “cannot be the object of reason, but of immediate sense and feeling.” But even these instinctive reactions to particular conduct must, he argued, rely—if only implicitly—on our reasoned understanding of causal connections between conduct and consequences in “a vast variety of instances.” Furthermore, our first perceptions may also change in response to critical examination, for example on the basis of empirical investigation that may show that a certain “object is the means of obtaining some other.”14

Two pillars of Enlightenment thinking are sometimes wrongly merged and jointly criticized: (1) the power of reasoning, and (2) the perfectibility of human nature. Though closely linked in the writings of many Enlightenment authors, they are, in fact, quite distinct claims, and undermining one does not disestablish the other. For example, it might be argued that perfectibility is possible, but not primarily through reasoning. Or, alternatively, it can be the case that insofar as anything works, reasoning does, and yet there may be no hope of getting anywhere near what perfectibility demands. Glover, who gives a richly characterized account of human nature, does not argue for human perfectibility; but his own constructive hopes clearly draw on reasoning as an influence on psychology through “the social and personal cultivation of the moral imagination.” Glover has more in common with at least some parts of the Enlightenment literature—Adam Smith in particular—than would be guessed from his stinging criticisms of the Enlightenment.

3.

What of the skeptical view that the scope of reasoning is limited by cultural differences? Two particular difficulties—related but separate—have been emphasized recently. There is, first, the view that reliance on reasoning and rationality is a particularly “Western” way of approaching social issues. Members of non-Western civilizations do not, the argument runs, share some of the values, including liberty or tolerance, that are central to Western society and are the foundations of ideas of justice as developed by Western philosophers from Immanuel Kant to John Rawls. That centrality is not in dispute; indeed the long-awaited publication of Rawls’s collected papers allows us to see, in a wonderfully integrated way, just how significant and pivotal “the principles of toleration and liberty of conscience” are in the ethical and political analyses of the foremost moral philosopher of our own time.15 Since it has been claimed that many non-Western societies have values that place little emphasis on liberty or tolerance (the recently championed “Asian values” have been so described), this issue has to be addressed. Values such as tolerance, liberty, and reciprocal respect have been described as “culture-specific” and basically confined to Western civilization. I shall call this the claim of “cultural boundary.”

The second difficulty concerns the possibility that people reared in different cultures may systematically lack basic sympathy and respect for one another. They may not even be able to understand one another, and could not possibly reason together. This could be called the claim of “cultural disharmony.” Since atrocities and genocide are typically imposed by members of one community on members of another, the significance of understanding among communities can hardly be overstated. And yet such understanding might be difficult to achieve if cultures are fundamentally different from one another and are prone to conflict. Can Serbs and Albanians overcome their “cultural animosities”? Can Hutus and Tutsis, or Hindus and Muslims, or Israeli Jews and Arabs? Even to ask these pessimistic questions may appear to be skeptical of the nature of humanity and the reach of human understanding; but we cannot ignore such doubts, since recent writings on cultural specificity (whether in the self-proclaimed “realism” of the popular press or in the academic criticism of the folly of “universalism”) have given them such serious standing.

The issue of cultural disharmony is very much alive in many cultural and political investigations, which often sound as if they are reports from battle fronts, written by war correspondents with divergent loyalties: we hear of the “clash of civilizations,” the need to “fight” Western cultural imperialism, the irresistible victory of “Asian values,” the challenge to Western civilization posed by the militancy of other cultures, and so on. The global confrontations have their reflections within the national frontiers as well, since most societies now have diverse cultures, which can appear to some to be very threatening. “The preservation of the United States and the West requires,” Samuel Huntington argues, “the renewal of Western identity.”16

4.

The subject of “the reach of reason” is related to another theme, which has been important in the anthropological literature. I refer to what Clifford Geertz has called “culture war,” well illustrated by the much-discussed differences over the interpretation of Captain Cook’s sad death in 1779 at the hands of club-wielding and knife-brandishing Hawaiians.17 In his article Geertz contrasts the theories of two leading anthropologists: Marshall Sahlins, he writes, is “a thoroughgoing advocate of the view that there are distinct cultures, each with a ‘total cultural system of human action,’ and they are to be understood along structuralist lines.” The other anthropologist, Gananath Obeyesekere, is “a thoroughgoing advocate of the view that people’s actions and beliefs have particular, practical functions in their lives and that those functions and beliefs should be understood along psychological lines.”

Whatever view we find persuasive, however, the question still should be asked whether the people involved must remain inescapably confined to their traditional modes of thought and behavior (as cultural determinists argue). Neither Sahlins’s nor Obeyesekere’s approach rules out communication between cultures, even though this may be a more arduous task if we follow Sahlins’s interpretation. But we have to ask what kind of reasoning the members of each culture can use to arrive at better understanding and perhaps even sympathy and respect. Indeed, this is one of the questions Glover poses when he advocates moral imagination as a solution to the brutality and ruthlessness with which groups treat one another. Moral imagination, he hopes, can be cultivated through mutual respect, tolerance, and sympathy.

The central issue here is not how dissimilar the distinct societies may be from one another, but what ability and opportunity the members of one society have—or can develop—to appreciate and understand how others function. This may not, of course, be an immediate way of resolving such conflicts. The killers of Captain Cook could not instantly revise their culture-bound view of him, nor could Cook acquire at once the comprehension or acumen needed to hold his pistol rather than fire it. Rather, the hope is that the reasoned cultivation of understanding and knowledge would eventually overcome such impulsive action.

The question that has to be faced here is whether such exercises of reasoning may require values that are not available in some cultures. This is where the “cultural boundary” becomes a central issue. There have, for example, been frequent declarations that non-Western civilizations typically lack a tradition of analytical and skeptical reasoning, and are thus distant from what is sometimes called “Western rationality.” Similar comments have been made about “Western liberalism,” “Western ideas of right and justice,” and generally about “Western values.” Indeed, there are many supporters of the claim (articulated by Gertrude Himmelfarb with admirable explicitness) that ideas of “justice,” “right,” “reason,” and “love of humanity” are “predominantly, perhaps even uniquely, Western values.”18

This and similar beliefs figure implicitly in many discussions, even when the exponents shy away from stating them with such clarity. If the reasoning and values that can help in the cultivation of imagination, respect, and sympathy needed for better understanding and appreciation of other people and other societies are fundamentally “Western,” then there would indeed be ground enough for pessimism. But are they?

It is, in fact, very difficult to investigate such questions without seeing the dominance of contemporary Western culture over our perceptions and readings. The force of that dominance is well illustrated by the recent millennial celebrations. The entire globe was transfixed by the end of the Gregorian millennium as if that were the only authentic calendar in the world, even though there are many flourishing calendars in the non-Western world (in China, India, Iran, Egypt, and elsewhere) that are considerably older than the Gregorian calendar.19 It is, of course, extremely useful for the technical, commercial, and even cultural interrelations in the world that we can share a common calendar. But if that visible dominance reflects a tacit assumption that the Gregorian is the only “internationally usable” calendar, then that dominance becomes the source of a significant misunderstanding, since several of the other calendars could be used in much the same way if they were jointly adopted in the way the Gregorian has been.

Western dominance has similar effects also on the understanding of other aspects of non-Western civilizations. Consider, for example, the idea of “individual liberty,” which is often seen as an integral part of “Western liberalism.” Modern Europe and America, including the European Enlightenment, have certainly had a decisive part in the evolution of the concept of liberty and the many forms it has taken. These ideas have disseminated from one country to another within the West and also to countries elsewhere, in ways that are somewhat similar to the spread of industrial organization and modern technology. To see libertarian ideas as “Western” in this limited and proximate sense does not, of course, threaten their being adopted in other regions. For example, to recognize that the form of Indian democracy is based on the British model does not undermine it in any way. In contrast, to take the view that there is something quintessentially “Western” about these ideas and values, related specifically to the history of Europe, can have a dampening effect on their use elsewhere.

But is the historical claim correct? Is it indeed true (as claimed, for example, by Samuel Huntington) that “the West was the West long before it was modern”?20 The evidence for such claims is far from clear. When civilizations are categorized today, individual liberty is often used as a classificatory device and is seen as a part of the ancient heritage of the Western world, not to be found elsewhere. It is, of course, easy to find the advocacy of particular aspects of individual liberty in Western classical writings. For example, freedom and tolerance both get support from Aristotle (even though only for free men—not women and slaves). However, we can find championing of tolerance and freedom in non-Western authors as well. A good example is the emperor Ashoka in India, who during the third century BC covered the country with inscriptions on stone tablets about good behavior and wise governance, including a demand for basic freedoms for all—indeed he did not exclude women and slaves as Aristotle did; he even insisted that these rights must be enjoyed also by “the forest people” living in preagricultural communities distant from Indian cities.21 Ashoka’s championing of tolerance and freedom may not be at all well known in the contemporary world, but that is not dissimilar to the global unfamiliarity with calendars other than the Gregorian.

There are, to be sure, other Indian classical authors who emphasized discipline and order rather than tolerance and liberty, for example Kautilya in the fourth century BC (in his book Arthashastra—translatable as “Economics”). But Western classical writers such as Plato and Saint Augustine also gave priority to social disciplines. In view of the diversity within each country, it may be sensible, when it comes to liberty and tolerance, to classify Aristotle and Ashoka on one side, and, on the other, Plato, Augustine, and Kautilya. Such classifications based on the substance of ideas are, of course, radically different from those based on culture or region.

Even when beliefs and attitudes that are seen as “Western” are largely a reflection of present-day circumstances in Europe and North America, there is a tendency—often implicit—to interpret them as age-old features of the “Western tradition” or of “Western civilization.” One consequence of Western dominance of the world today is that other cultures and traditions are often identified and defined by their contrasts with contemporary Western culture.

Different cultures are thus interpreted in ways that reinforce the political conviction that Western civilization is somehow the main, perhaps the only, source of rationalistic and liberal ideas—among them analytical scrutiny, open debate, political tolerance, and agreement to differ. The West is seen, in effect, as having exclusive access to the values that lie at the foundation of rationality and reasoning, science and evidence, liberty and tolerance, and of course rights and justice.

Once established, this view of the West, seen in confrontation with the rest, tends to vindicate itself. Since each civilization contains diverse elements, a non-Western civilization can then be characterized by referring to those tendencies that are most distant from the identified “Western” traditions and values. These selected elements are then taken to be more “authentic” or more “genuinely indigenous” than the elements that are relatively similar to what can be found also in the West.

For example, Indian religious literature such as the Bhagavad-Gita or the Tantrik texts, which are identified as differing from secular writings seen as “Western,” elicits much greater interest in the West than do other Indian writings, including India’s long history of heterodoxy. Sanskrit and Pali have a larger atheistic and agnostic literature than exists in any other classical tradition. There is a similar neglect of Indian writings on nonreligious subjects, from mathematics, epistemology, and natural science to economics and linguistics. (The exception, I suppose, is the Kama Sutra, in which Western readers have managed to cultivate an interest.) Through selective emphases that point up differences from the West, other civilizations can, in this way, be redefined in alien terms, which can be exotic and charming, or else bizarre and terrifying, or simply strange and engaging. When identity is thus “defined by contrast,” divergence from the West becomes central.

Take, for example, the case of “Asian values,” often contrasted with “Western values.” Since many different value systems and many different styles of reasoning have flourished in Asia, it is possible to characterize “Asian values” in many different ways, each with plentiful citations. By selective citations of Confucius, and by selective neglect of many other Asian authors, the view that Asian values emphasize discipline and order—rather than liberty and autonomy, as in the West—has been given apparent plausibility. This contrast, as I have discussed elsewhere, is hard to sustain when one actually compares the respective literatures.22

There is an interesting dialectic here. By concentrating on the authoritarian parts of Asia’s multitude of traditions, many Western writers have been able to construct a seemingly neat picture of an Asian contrast with “Western liberalism.” Rather than dispute the West’s unique claim to liberal values, some Asians have responded with a pride in distance: “Yes, we are very different, and a good thing too!” The practice of conferring identity by contrast has thus flourished, driven both by the West’s attempts to establish its exclusiveness and by the Asian counterattempt to establish its own contrary exclusiveness. Showing how other parts of the world differ from the West can be very effective and can shore up artificial distinctions. We may be left wondering why Gautama Buddha, or Lao-tzu, or Ashoka (or Gandhi or Sun Yat-sen) was not really an Asian.

Similarly, under this identity by contrast, the Western detractors of Islam as well as the new champions of Islamic heritage have little to say about Islam’s tradition of tolerance, which has been at least as important historically as its record of intolerance. We are left wondering what could have led Maimonides, as he fled the persecution of Jews in Spain in the twelfth century, to seek shelter in Emperor Saladin’s Egypt. And why did Maimonides, in fact, get support as well as an honored position at the court of the Muslim emperor who fought valiantly for Islam in the Crusades?

Despite the recent outbursts of intolerance in Africa, we can recall that in 1526, in an exchange of discourtesies between the kings of Congo and Portugal, it was the former, not the latter, who argued that slavery was intolerable. King Nzinga Mbemba wrote to the Portuguese king that the slave trade must stop, “because it is our will that in these kingdoms of Kongo there should not be any trade in slaves nor any market for slaves.”23

Of course, it is not being claimed here that all the different ideas relevant to the use of reasoning for social harmony and humanity have flourished equally in all civilizations of the world. That would not only be untrue; it would also be a stupid claim of mechanical uniformity. But once we recognize that many ideas that are taken to be quintessentially Western have also flourished in other civilizations, we also see that these ideas are not as culture-specific as is sometimes claimed. We need not begin with pessimism, at least on this ground, about the prospects of reasoned humanism in the world.

5.

It is worth recalling that in Akbar’s pronouncements of four hundred years ago on the need for religious neutrality on the part of the state, we can identify the foundations of a nondenominational, secular state which was yet to be born in India or for that matter anywhere else. Thus, Akbar’s reasoned conclusions, codified during 1591 and 1592, had universal implications. Europe had just as much reason to listen to that message as India had. The Inquisitions were still in force, and just when Akbar was writing on religious tolerance in Agra in 1592, Giordano Bruno was arrested for heresy, and ultimately, in 1600, burned at the stake in the Campo dei Fiori in Rome.

For India in particular, the tradition of secularism can be traced to the trend of tolerant and pluralist thinking that had begun to take root well before Akbar, for example, in the writings of Amir Khusrau in the fourteenth century as well as in the nonsectarian devotional poetry of Kabir, Nanak, Chaitanya, and others. But that tradition got its firmest official backing from Emperor Akbar himself. He also practiced as he preached—abolishing discriminatory taxes imposed earlier on non-Muslims, inviting many Hindu intellectuals and artists into his court (including the great musician Tansen), and even trusting a Hindu general, Man Singh, to command his armed forces.

In some ways, Akbar was precisely codifying and consolidating the need for religious neutrality of the state that had been enunciated, in a general form, nearly two millennia before him by the Indian emperor Ashoka, whose ideas I have referred to earlier. While Ashoka ruled a long time ago, in the case of Akbar there is a continuity of legal scholarship and public memory linking his ideas and codifications with present-day India.

Indian secularism, which was strongly championed in the twentieth century by Gandhi, Nehru, Tagore, and others, is often taken to be something of a reflection of Western ideas (despite the fact that Britain is a somewhat unlikely choice as a spearhead of secularism). In contrast, there are good reasons to link this aspect of modern India, including its constitutional secularism and judicially guaranteed multiculturalism (in contrast with, say, the privileged status of Islam in the constitution of the Islamic Republic of Pakistan), to earlier Indian writings and particularly to the ideas of this Muslim emperor of four hundred years ago.

Perhaps the most important point that Akbar made in his defense of a tolerant multiculturalism concerns the role of reasoning. Reason had to be supreme, since even in disputing the validity of reason we have to give reasons. Attacked by traditionalists who argued in favor of instinctive faith in the Islamic tradition, Akbar told his friend and trusted lieutenant Abul Fazl (a formidable scholar in Sanskrit as well as Arabic and Persian):

The pursuit of reason and rejection of traditionalism are so brilliantly patent as to be above the need of argument. If traditionalism were proper, the prophets would merely have followed their own elders (and not come with new messages).24

Convinced that he had to take a serious interest in the religions and cultures of non-Muslims in India, Akbar arranged for discussions to take place involving not only mainstream Hindu and Muslim philosophers (Shia and Sunni as well as Sufi), but also involving Christians, Jews, Parsees, Jains, and, according to Abul Fazl, even the followers of “Charvaka”—one of the Indian schools of atheistic thinking dating from around the sixth century BC.25 Instead of taking an all-or-nothing view of a faith, Akbar liked to reason about particular components of each multifaceted religion. For example, arguing with Jains, Akbar would remain skeptical of their rituals, and yet become convinced by their argument for vegetarianism and end up deploring the eating of all flesh.

All this caused irritation among those who preferred to base religious belief on faith rather than reasoning. There were several revolts against Akbar by orthodox Muslims, on one occasion joined by his eldest son, Prince Salim, with whom he later reconciled. But he stuck to what he called “the path of reason” (rahi aql), and insisted on the need for open dialogue and free choice. At one stage, Akbar even tried, not very successfully, to launch a new religion, Din Ilahi (God’s religion), combining what he took to be the good qualities of different faiths. When he died in 1605, the Islamic theologian Abdul Haq concluded with some satisfaction that despite his “innovations,” Akbar had remained a good Muslim.26 This was indeed so, but Akbar would have also added that his religious beliefs came from his own reason and choice, not from “blind faith,” or from “the marshy land of tradition.”

6.

Akbar’s ideas remain relevant—and not just in the subcontinent. They have a bearing on many current debates in the West as well. They suggest the need for scrutiny of the fear of multiculturalism (for example, of Huntington’s argument that “multiculturalism at home threatens the United States and the West”). Similarly, in dealing with controversies in US universities about confining core readings to the “great books” of the Western world, Akbar’s line of reasoning would suggest that the crucial weakness of this proposal is not so much that students from other backgrounds (say, African-American or Chinese) should not have to read Western classics, as that confining one’s reading only to the books of one civilization reduces one’s freedom to learn about and choose ideas from different cultures in the world.27 And the counter-demand that the great Western books be banished from the reading list for students from other backgrounds would also be faulty, since that too would reduce the freedom to learn, reason, and choose.

There are implications also for the “communitarian” position, which argues that one’s identity is a matter of “discovery,” not choice. As Michael Sandel presents this conception of community (one of several alternative conceptions he outlines): “Community describes not just what they have as fellow citizens but also what they are, not a relationship they choose (as in a voluntary association) but an attachment they discover, not merely an attribute but a constituent of their identity.”28 This view—that a person’s identity is something he or she detects rather than determines—would have been resisted by Akbar on the ground that we do have a choice about our beliefs, associations, and attitudes, and must take responsibility for what we actually choose (if only implicitly).

The notion that we “discover” our identity is not only epistemologically limiting (we certainly can try to find out what choices—possibly extensive—we actually have), but it may also have disastrous implications for how we act and behave (well illustrated by Jonathan Glover’s account of the role of unquestioning loyalty and belief in precipitating atrocities and horrors). Many of us still have vivid memories of what happened in the pre-Partition riots in India just preceding independence in 1947, when the broadly tolerant subcontinentals of January rapidly and unquestioningly became the ruthless Hindus or the fierce Muslims of June.29 The carnage that followed had much to do with the alleged “discovery” of one’s “true” identity, unhampered by reasoned humanity.

Akbar’s analyses of social problems illustrate the power of open reasoning and choice even in a clearly pre-modern society. Shirin Moosvi’s wonderfully informative book Episodes in the Life of Akbar: Contemporary Records and Reminiscences gives interesting accounts of how Akbar arrived at social decisions—many of them defiant of tradition—through the use of reasoning.30

Akbar was, for example, opposed to child marriage, then a quite conventional custom. He argued that “the object that is intended” in marriage “is still remote, and there is immediate possibility of injury.” He went on to remark that “in a religion that forbids the remarriage of the widow [Hinduism], the hardship is much greater.” On property division, he noted that “in the Muslim religion, a smaller share of inheritance is allowed to the daughter, though owing to her weakness, she deserves to be given a larger share.” When his second son, Murad, who knew that his father was opposed to all religious rituals, asked him whether these rituals should be banned, Akbar immediately protested, on the ground that “preventing that insensitive simpleton, who considers body exercise to be divine worship, would amount to preventing him from remembering God [at all].” Addressing a question on the motivation for doing a good deed (a question that still gets asked often enough), Akbar criticizes “the Indian sages” for the suggestion that “good works” be done to achieve a favorable outcome after death: “To me it seems that in the pursuit of virtue, the idea of death should not be thought of, so that without any hope or fear, one should practice virtue simply because it is good.” In 1582 he resolved to release “all the Imperial slaves,” since “it is beyond the realm of justice and good conduct” to benefit from “force.”

Incidentally, the fact that reason may not be infallible, especially in the presence of uncertainty, is well illustrated by Akbar’s reflections on the newly arrived practice of smoking tobacco. His doctor, Hakim Ali, argued against its use: “It is not necessary for us to follow the Europeans, and adopt a custom, which is not sanctioned by our own wise men, without experiment or trial.” Akbar overruled this argument on the ground that “we must not reject a thing that has been adopted by people of the world, merely because we cannot find it in our books; or how shall we progress?” Armed with that argument, Akbar tried smoking, but happily for him he took an instant dislike to it, and never smoked again. Here instinct worked better than reason (in circumstances rather different from the case of Bukharin described by Glover). But reason worked often enough.

7.

There was good sense in Akbar’s insistence that a millennial occasion is not only for fun and festivities (of which there were plenty in Delhi and Agra as the first Hijri millennium was completed in 1591-1592), but also for serious reflection on the joys and horrors and challenges of the world in which we live. Akbar’s emphasis on reason and scrutiny serves as a reminder that “cultural boundaries” are not as limiting as is sometimes alleged (as, for example, in the view, discussed earlier, that “justice,” “right,” “reason,” and “love of humanity” are “predominantly, perhaps even uniquely, Western values”). Indeed, many features of the European Enlightenment can be linked with questions that were raised earlier—not just in Europe but widely across the world.

As the second Gregorian millennium began, India was visited by an intellectual tourist in the form of Alberuni, an Iranian who was born in Central Asia in 973 AD and who wrote in Arabic. As a mathematician, Alberuni’s primary interest was in Indian mathematics (he produced, among other writings, an improved Arabic translation of Brahmagupta’s sixth-century Sanskrit treatise on astronomy and mathematics—first translated into Arabic in the eighth century). But he also studied Indian writings on science, philosophy, literature, linguistics, religion, and other subjects, and wrote a highly informative book about India, called Ta’rikh al-hind (“The History of India”). In explaining why he wrote it, Alberuni argued that it is very important for people in one country to know how others elsewhere live, and how and what they think. Evil behavior (of which Alberuni had seen plenty in the barbarity of his former patron, Sultan Mahmud of Ghazni, who had savagely raided India several times) can arise from a lack of understanding of—and familiarity with—other people:

…In all manners and usages [the Indians] differ from us to such a degree as to frighten their children with us, with our dress, and our ways and customs, and as to declare us to be devil’s breed, and our doings as the very opposite of all that is good and proper. By the bye, we must confess, in order to be just, that a similar depreciation of foreigners not only prevails among us and the Indians, but is common to all nations towards each other.31

That insight from the beginning of the last millennium has remained pertinent a thousand years later.

In trying to go beyond what Adam Smith called our “first perceptions,” we need to transcend what Akbar saw as the “marshy land” of unquestioned tradition and unreflected response. Reason has its reach—compromised neither by the importance of instinctive psychology nor by the presence of cultural diversity in the world. It has an especially important role to play in the cultivation of moral imagination. We need it in particular to face the bats and the owls and the insane moon.32

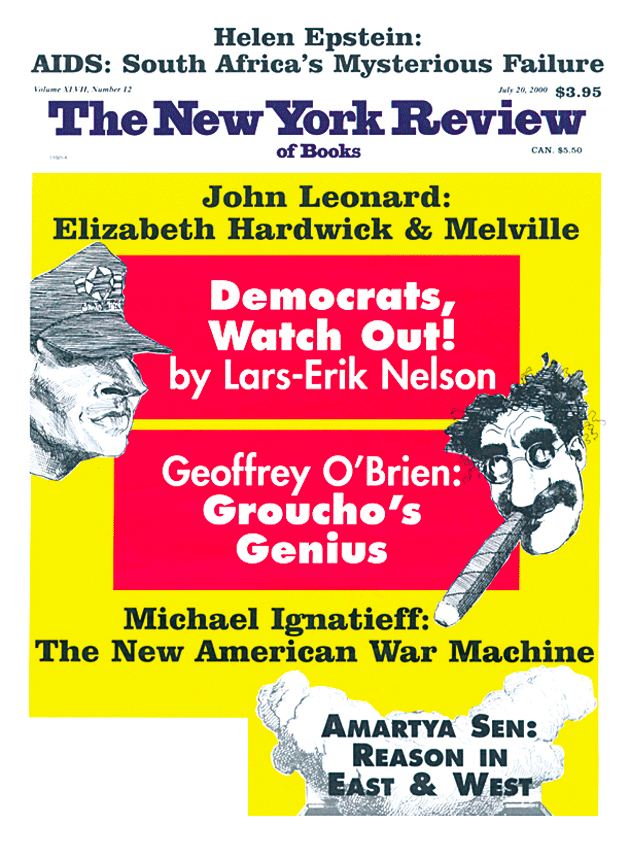

This Issue

July 20, 2000

1. Jonathan Glover, Humanity: A Moral History of the Twentieth Century (London: Jonathan Cape, 1999; forthcoming from Yale University Press, Fall 2000), p. 7. Glover, a leading light in Oxford philosophy for many decades, is also the author of Responsibility (Humanities Press, 1970) and Causing Death and Saving Lives (Penguin, 1977), among other works of note. He is now the Director of Medical Law and Ethics at King’s College, London.

2. Translation in Vincent Smith, Akbar: the Great Mogul (Oxford University Press/Clarendon Press, 1917), p. 257.

3. See Irfan Habib, editor, Akbar and His India (Delhi: Oxford University Press, 1997) for a set of fine essays investigating the beliefs and policies of Akbar as well as the intellectual influences that led him to his heterodox position.

4. The last century, however, was subjected to a searching scrutiny by Eric Hobsbawm, a few years before the century and the millennium came to an end, in The Age of Extremes: A History of the World, 1914-1991 (Vintage, 1994). See also Garry Wills, “A Reader’s Guide to the Century,” The New York Review, July 15, 1999.

5. See, for example, John Gray, Enlightenment’s Wake: Politics and Culture at the Close of the Modern Age (Routledge, 1995). See also the perceptive review of this work by Charles Griswold, Political Theory, Vol. 27 (1999), pp. 274-281.

6. Kenzaburo Oë, Japan, the Ambiguous, and Myself (Kodansha, 1995), pp. 118-119.

7. I have tried to discuss the causes of famines and the policy requirements for famine prevention in Poverty and Famines: An Essay on Entitlement and Deprivation (Oxford University Press, 1981) and, jointly with Jean Drèze, in Hunger and Public Action (Clarendon Press/Oxford University Press, 1989). Famine prevention requires diverse policies, among which income creation is immediately and crucially important (for example, through emergency employment in public works programs); but, especially for the long term, they also include expansion of production in general and food production in particular.

8. An important collection of perspectives on this is presented in Rajaram Krishnan, Jonathan M. Harris, and Neva R. Goodwin, editors, A Survey of Ecological Economics (Island Press, 1995). A far-reaching critique of the relationship between institutions and reasoned behavior can be found in Andreas Papandreou, Externality and Institutions (Oxford University Press, 1994).

9. I have discussed this question in On Ethics and Economics (Blackwell, 1987), Chapter 1.

10. On this, see Emma Rothschild, Economic Sentiments (Harvard University Press, forthcoming).

11. David Hume, Enquiries concerning the Human Understanding and concerning the Principles of Morals, edited by L.E. Selby-Bigge (Oxford University Press, 1962), p. 172.

12. Thomas Nagel, The Last Word (Oxford University Press, 1997), p. 102.

13. On the role of reasoning in the development of attitudes and feelings, see particularly T.M. Scanlon, What We Owe to Each Other (Belknap Press/Harvard University Press, 1999).

14. Adam Smith, The Theory of Moral Sentiments (London: T. Cadell, 1790; republished by Oxford University Press, 1976), pp. 319-320.

15. John Rawls, Collected Papers, edited by Samuel Freeman (Harvard University Press, 1999).

16. Samuel P. Huntington, The Clash of Civilizations and the Remaking of World Order (Simon and Schuster, 1996), p. 318.

17. Clifford Geertz, “Culture War,” The New York Review, November 30, 1995. This is a review of Marshall Sahlins, How ‘Natives’ Think, About Captain Cook, for Example (University of Chicago Press, 1995), and Gananath Obeyesekere, The Apotheosis of Captain Cook: European Mythmaking in the Pacific (Princeton University Press, 1992).

18. Gertrude Himmelfarb, “The Illusions of Cosmopolitanism,” in Martha Nussbaum with Respondents, For Love of Country (Beacon Press, 1996), pp. 74-75.

19. The different Indian calendars are discussed (both on their own and as ways of interpreting India’s history and traditions) in my essay “India Through Its Calendars,” The Little Magazine, Vol. 1, No. 1 (New Delhi, 2000).

20. See Huntington, The Clash of Civilizations, p. 69.

21. On this and related issues, see my Development as Freedom (Knopf, 1999), Chapter 10, and the references cited there.

22. See my Human Rights and Asian Values (Carnegie Council on Ethics and International Affairs, 1997); a shortened version also came out in The New Republic, July 14 and 21, 1997.

23. See Basil Davidson, F.K. Buah, and J.F. Ade Ajayi, A History of West Africa 1000-1800 (Harlow, England: Longman, new revised edition, 1977), pp. 286-287.

24. See M. Athar Ali, “The Perception of India in Akbar and Abu’l Fazl,” in Habib, Akbar and His India, p. 220.

25. See Pushpa Prasad, “Akbar and the Jains,” in Habib, Akbar and His India, pp. 97-98. The one missing group seems to be the Buddhists (though one of the early translations included them in the account by misrendering the name of a Jain sect as that of Buddhist monks). Perhaps by then Buddhists were hard to find around Delhi or Agra.

26. See Iqtidar Alam Khan, “Akbar’s Personality Traits and World Outlook: A Critical Reappraisal,” in Habib, Akbar and His India, p. 96.

27. See also Martha Nussbaum, Cultivating Humanity: A Classical Defense of Reform in Liberal Education (Harvard University Press, 1997).

28. Michael Sandel, Liberalism and the Limits of Justice (Cambridge University Press, 2nd edition, 1998), p. 150.

29. I discuss this issue in Reason Before Identity: The Romanes Lecture for 1998 (Oxford University Press, 1999).

30. New Delhi: National Book Trust, 1994.

31. Alberuni’s India, translated by E.C. Sachau, edited by A.T. Embree (Norton, 1971), Part I, Chapter I, p. 20. The Arabic word then used for Hindu or Indian was the same, and I have replaced Sachau’s choice of the English word “Hindu” in this passage by “Indian,” since the reference is to the inhabitants of the country.

32. For helpful suggestions, I am most grateful to Sissela Bok, Muzaffar Qizilbash, Emma Rothschild, and Thomas Scanlon.