1.

As a species, human beings have always been distressingly assiduous in devising ways to kill each other. Until recently, however, their best efforts have not equaled the random operations of disease. Disease has seldom been thought of as part of the human arsenal of destruction, probably because it once lay beyond effective human control and may still. But in most of the wars in modern memory it has been a bigger killer than battle. There is no obvious connection between the two, but the mere assemblage of men for fighting has generally been more deadly than the weapons they deploy.

The statistics alone are appalling, as a few examples will attest. In the War of the Austrian Succession, 1740–1748, four thousand Americans participated under British direction in the siege of Cartagena. Though there were few pitched battles, only three hundred survived. The historian of the British army, after narrating the military operations at length, concluded that this phase of the war came to an end through “the gradual annihilation of the contending armies by yellow fever.”1 In the North American theater of the same war New Englanders sent four thousand men to capture the French fortress of Louisburg on Cape Breton Isle. After arriving at the scene, only about half the troops were fit for action. About one hundred died in the assault on the fortress, but of those left as a garrison 890 died.

In the most spectacular battle of the French and Indian War, 1754–1763, in which both commanders famously died on the Plains of Abraham outside Quebec, the British-American losses were 664, the French 640. The garrison of seven thousand that occupied the city was reduced by typhus, typhoid, dysentery, and scurvy to four thousand effective men in the winter that followed. The comparative toll in modern wars has been lowered only a little. In the First World War the losses to combat and disease in the American Expeditionary Force were about equal, but the war was accompanied by a catastrophic epidemic of flu and pneumonia that killed a total of 675,000 Americans, civilian and military, in the ten months from September 1, 1918, to June 30, 1919. This was more than the total battle deaths in the United States armed forces in the First and Second World Wars, the Korean War, and Vietnam combined. Worldwide the pandemic killed somewhere between 20 and 40 million people. In the Second World War the official figures of the Army Medical Corps in the South and Southwest Pacific for 1942 and 1943 show 9,698 men hospitalized for wounds and 539,866 for disease.2

The numbers could be multiplied for other places and other centuries, but they do not figure largely in military histories, or in historical assessments of the contests in which they occurred. Elizabeth A. Fenn’s book on the smallpox epidemic that accompanied the American War for Independence is a brilliant exception.3 Smallpox, along with anthrax and a host of other killers, has suddenly become once again a household word, but it may be worth repeating some of the grim facts about the world’s oldest identifiable disease with the deadliest history of any. It is a strictly human disease: it cannot be contracted or conveyed by any other animal or any insect. It kills about 30 percent of those infected. What enables the other 70 percent to survive is not clear, but nearly everyone exposed to it comes down with it, and exposure is hard to avoid if you come anywhere near it. Variola, the name of the smallpox virus, is so easily airborne that a single invalid can infect people in widely separated rooms of the same building. It can live for several days in the unsterilized clothing, bedding, or belongings of its last victim, long enough to find a new host. It is not contagious until the symptomatic fever, rash, and boils begin to appear ten or twelve days after exposure, but one person in the early stages can unwittingly infect dozens, even hundreds, of others. The potential for exposure of men assembled in army camps or any other close quarters is obvious.

In the eighteenth century, before anyone had heard of the germ theory of disease, these were all well-known facts. As Fenn explains, the people of European countries had lived with smallpox throughout their lives. It was one of the many deadly fevers that people feared but expected. Those who survived smallpox were immune from further attacks, which were therefore limited mostly to children in brief cyclical epidemics. In pre-Columbian America the disease, along with many others, was unknown, and “virgin-soil” epidemics carried away most of the dense native populations of Mexico and Central America after the arrival of the Spanish. North of Mexico, fishermen from Europe carried similar epidemics to the Indians of the East Coast before European settlers arrived; but the comparatively isolated peoples west of the Mississippi and in the far north were still at risk in 1775. And in the English colonies, with population more widely dispersed than in Europe, children could often grow up without exposure, and epidemics were therefore less frequent and more deadly.

Early in the eighteenth century, a relatively benign mode of contracting smallpox was discovered, probably first in Africa or Asia, and then introduced in Europe and America. Known as inoculation or variolation, it consisted of taking matter from the pustule (pox) of an infected person and inserting it by incision in the skin of a healthy one. For reasons still not known or understood, the chances of dying from the disease thus transmitted were statistically reduced from 30 percent to one percent. The only disadvantage, apart from the remaining chance of death, was that you could still transmit the disease in full vigor to anyone not yet immune to it. Ideally, inoculated persons were quarantined for three or four weeks in remotely located houses or hospitals. Generally it was only the well educated and well-to-do in America who opted for it or could afford a month or more out of work. Many regarded the procedure as a deliberate and wicked spreading of a feared disease. Hospitals were sometimes burned by mobs. Americans therefore retained their high vulnerability and their deadly epidemics.

When the fighting in the Revolutionary War began in 1775, it coincided with the start of yet another epidemic. Perhaps because smallpox was so commonplace a matter in the eighteenth century, the existence of this epidemic and its extent have scarcely been noticed by historians of the Revolution. Fenn’s study shows how crucial the management of exposure to it became in the winning of American independence and how it spread out to transform relationships among the other Americans who occupied the interior of the continent and the Pacific Coast.

George Washington had survived a bout with the disease in 1752 and was thus himself immune. When he took command of the troops besieging Boston in 1775, most of the British forces occupying the town bore the scars of former infection. In November 1775, the officer commanding, General Sir William Howe, had the rest inoculated. Washington, with most of his troops at risk, could not afford to immobilize them by inoculation and had to isolate any victims from the rest of the army, and the rest of the army, so far as possible, from contact with the enemy and from the inhabitants of Boston. Success in fending off smallpox then and later depended on making the right choice at the right time between isolation and inoculation. When the British abandoned Boston in March 1776, the inhabitants indulged in a frenzy of mass inoculation. “Boston,” wrote one resident, “is become a hospital with the small-pox.” Washington hastily departed in pursuit of Howe, the bulk of his army uninfected and intact.

Isolation worked for Washington this time. But when he sent uninoculated troops north in an effort to bring Canada into the Revolution, Variola was waiting for them. Futile attempts to contain outbreaks among men already exhausted from the long march simply disrupted discipline and organization. The infection spread until the army was obliged to retreat in total disarray, more men felled by the disease than by the defending British.

In the South the situation was reversed when the British enlisted the assistance of the most likely loyalists. The royal governor of Virginia, Lord Dunmore, offered freedom to slaves who would join his colors. Between eight hundred and one thousand took the offer. Crowded aboard British warships they quickly came down with the disease, infecting everyone at risk. Again there were attempts at isolation in a camp ashore, but probably most of the slaves who sought freedom lost their lives and Dunmore lost hundreds of his loyalists, black and white.

For Washington the opportunity to inoculate instead of isolate came when he took up headquarters in Morristown, New Jersey, early in 1777, after completing his foray across the Delaware. He hesitated for a time, because inoculation would put three quarters of his army out of action, but on February 5, 1777, he made the decision for mass inoculation and followed it up for new recruits the next year at Valley Forge. By the time his men went into action in pursuit of the British from Philadelphia to New York in June 1778, they had gained an immunity from smallpox to match the former British advantage. In the final campaigns in the South, despite the continued susceptibility of short-term American militia, the Continental Army was not again threatened by Variola. Alive and well, its troops could take advantage of the opportunity to bottle up the British at Yorktown. “In view of this,” Fenn observes, “Washington’s unheralded and little-recognized resolution to inoculate the Continental forces must surely rank among his most important decisions of the war.”

The Revolutionary War, like all wars, moved a lot of people around, and Variola moved with them. Fenn traces the epidemic to New Orleans and Mexico City and from there as far north as Hudson’s Bay and as far west as Alaska. Trade among the various peoples of the North American continent guaranteed the extension of Variola among them sooner or later, and it seems to have been more lethal to Native Americans, of whatever tribe or clan, than to people of European descent. Tribes that congregated in villages contracted the disease early and fell prey to their more nomadic enemies, who then succumbed to it themselves. The acquisition of horses and guns multiplied contacts and conflicts, especially among the Plains Indians. When the virus reached the Pacific Coast, it hit virgin territory. Fenn estimates over 25,000 deaths there before the virus ran out of victims. Because of Washington’s precautions after the disastrous Canadian expedition, the battle casualties in his army defied the usual pattern and exceeded those from disease, or at least from smallpox. But the epidemic had killed more than 130,000 throughout the continent by the time it ended in uncanny conjunction with the war.

The appearance of Fenn’s account in depth of an epidemic that has hitherto scarcely been noticed is perhaps symptomatic of what has happened to American attitudes toward disease in the last three quarters of a century. Serious epidemics have always been major events in the lives of everyone who experienced them and in time of war they have had a significant effect on military operations. But their larger effects have generally gone unnoticed because the epidemics themselves have left scarcely a trace on public memory. The influenza epidemic of 1918–1919 killed more people in a shorter time than any other disaster of any kind on record. Like the lesser smallpox epidemic described by Fenn, it had far-reaching effects on the conduct of the war that went with it and on the lives of everyone who survived it. But it received scant coverage from the press of the time and disappeared from public memory almost instantly. Paul Fussell found no occasion to mention it in The Great War and Modern Memory.4

It did not get serious examination by historians until 1976, when Alfred Crosby assessed its lethal extent. Crosby, in a searching afterword to his study, noted the attention that the epidemic received in memoirs and autobiographies and its almost total absence from twentieth-century literature and from the standard textbook histories of the United States. “The average college graduate born since 1918,” he observed, “literally knows more about the Black Death of the fourteenth century than the World War I pandemic, although it is undoubtedly true that several of his or her older friends or relatives lived through it and, if asked, could describe the experience in some detail.”5

2.

Death from disease, even half a million deaths in less than a year, had some of the same familiarity in the 1920s that it had had in the 1720s or the 1820s. But by the 1990s a few cases of West Nile virus could galvanize the public and the media, as a few cases of anthrax can do today. The difference is not simply the novelty of the threat but the expectation of a public response to it. The years since 1918 have seen a revolution in medicine that has made the management of disease as much a matter of public policy as making war has always been. The two have joined in a more intimate combination than their previous fortuitous conjunction, so that the unexplained appearance of any disease, new or old, can have sinister implications. Man-made epidemics are now possible, and prevention of any and all epidemics has necessarily become a political and scientific objective.

The politics can be strangely more complicated than the science. Virtually everyone would agree that preventing disease is a good thing and propagating it a bad one. But when science makes prevention feasible, obstacles can grow like dragon’s teeth. The history of smallpox after the epidemic that Fenn describes is an object lesson. It has been more than two centuries since Edward Jenner, an English country doctor, did the science. Jenner discovered a simple way to destroy smallpox as a disease throughout the whole world, but it took until 1980 to get it done. What Jenner discovered in 1796 was vaccination, derived from cowpox, that exposed no one to Variola, either in its manufacture or in its application. Unlike inoculation the immunity it conferred was not lifelong, but it lasted from seven to ten years, long enough to eliminate the disease from an entire community by stages, and from one community after another until there were no more human hosts to pass it on. How it was belatedly done, how Variola was put out of existence except in laboratory vials, is the enthralling story, with a frightening aftermath, that Jonathan Tucker tells in Scourge.

From the beginning the commanders of armies had no trouble recognizing the importance of vaccination. In 1805 Napoleon ordered it for all his troops. Some governments were equally farsighted. By 1821 it was compulsory by law in Bavaria, Denmark, Norway, Bohemia, Russia, and Sweden. But in the rest of the world progress was sporadic, impeded not only by inertia after the odds of infection were lowered but also by the organized opposition of people who did not “believe in” vaccination or considered it a violation of civil rights. By 1889 more than a hundred anti-vaccination societies in Europe and the US were protesting against it. Although hundreds of thousands had themselves vaccinated voluntarily, the disease remained endemic nearly everywhere. In the United States by the 1930s only nine states required vaccination. As usual, wars brought epidemics with them. The Franco-Prussian War of 1870–1871 produced half a million deaths from smallpox, partly because of a mistaken belief in France that vaccination granted permanent immunity. At the outbreak of the Second World War smallpox was still endemic in sixty-nine countries. At its conclusion the number had risen to eighty-seven.

That the number was zero by 1980 has to be counted as a triumph for the World Health Organization, created by the United Nations in 1948. More particularly it was a triumph for D.A. Henderson, a doctor from Ohio, who led the struggle within and outside the WHO against every obstacle placed in his path, including civil wars, mass migrations, and lying public officials. If Henderson had had his way, Variola would be gone not only from any human body but from the two laboratories where it is known to remain in refrigerated vials, one in Russia and one in the United States.

Its continued existence, there and perhaps in hidden laboratories in other countries, is one result of the new opportunities opened in the twentieth century to propagate disease and put its superior killing power to use in warfare. The use of smallpox as a weapon of war was already considered in the eighteenth century and occasionally attempted, as in General Jeffery Amherst’s infamous gift in 1763 to his Indian enemies of blankets loaded with Variola from recent victims. But biological warfare did not come into its own until the discovery of DNA and of the possibilities it opened for controlling and altering the microorganisms of disease. The same understanding of the structure of an organism that enabled medical scientists to devise protections against it enabled them to create designer diseases to frustrate protection. Many disease organisms, like the flu virus, are prone to mutate continually. Smallpox does so only occasionally, but it and all the others can be manipulated by gene splicing into something new and more deadly than the original.

The United States had already begun experimenting with biological weapons in World War II and continued until 1969, when President Nixon halted the program by executive order. The Soviet Union had a similar, much larger, program, but claimed to abandon it after signing the Biological Weapons Convention in 1972. Seemingly the ongoing destruction of smallpox throughout the world was accompanied by a larger renunciation of biological warfare. But in 1992, the defection of a leading Russian scientist revealed not only that the Soviet program had continued at an accelerated pace but also that Russia was still at work splicing Variola with other viruses to produce a doomsday weapon. Throughout the 1990s both countries obligingly promised total destruction of their stocks of Variola at a specified date, but they both kept postponing the date, ostensibly to give scientists more time to work on a cure (vaccination offered no protection once the virus took hold). The last date promised for such destruction was December 31, 2002. It has now been postponed indefinitely.

It seems unlikely that the laboratories of Russia or the United States will find a cure anytime soon. But the success of the campaign to wipe out smallpox by vaccination has left the whole world ready for a virgin-soil pandemic. Compulsory vaccination has been abandoned everywhere, and the vaccinations performed in childhood on the adult population have long since lost their effect. It would probably take a good deal more than a year now to manufacture enough vaccine to protect everyone in a country the size, say, of the United States. And if the virus becomes available in the meantime, the capacity for martyrdom exhibited on September 11 could find expression in the suicidal release of Variola through a handful of disease-bearing volunteers. No one can be sure that the laboratories of Russia and the United States are the only remaining repositories. No one can be sure that one of them has not created a designer smallpox with a kill rate much greater than 30 percent.

Doomsday prophecies have been the standard fare of many religions and still are. Hiroshima made the prophecies more believable, and the power of viruses and bacteria now enhances their appeal. In Japan, the Aum Shinrikyo sect had a try at wiping out unbelievers in a do-it-yourself rehearsal of doomsday. September 11 was a more dramatic and more successful try. Any fanatics who get their hands on Variola, or worse, an enhanced form of it, could bring on now the apocalypse so many have yearned for. Tucker reports a role-playing discussion conducted by D.A. Henderson at Johns Hopkins University in February 1999, at a meeting attended by 950 public health experts. The result was a prediction that a single case in a northeast United States city in April would result, despite the efforts of hospitals, doctors, and health officials, in the reestablishment of endemic smallpox in fifteen countries by year’s end, with a full accompaniment of social and political chaos. In June 2001, another simulation in Oklahoma City predicted three million cases and a million deaths nationwide within sixty-eight days.

Disease still outranks other modes of dealing death, and among diseases smallpox can still kill more people faster than any other that has yet had the chance. In September 2000, the Centers for Disease Control in Atlanta contracted with a company in Cambridge, Massachusetts, to produce 168 million doses of vaccine over a twenty-year period. “Delivery of the first lots,” Tucker assures us, “is expected in mid-2004.” We are, at this writing, told that a combination of drug companies will make delivery by next fall. Possibly. Until then, if one of your neighbors breaks out in a rash and boils, you might try inoculation. It worked for Washington’s army.

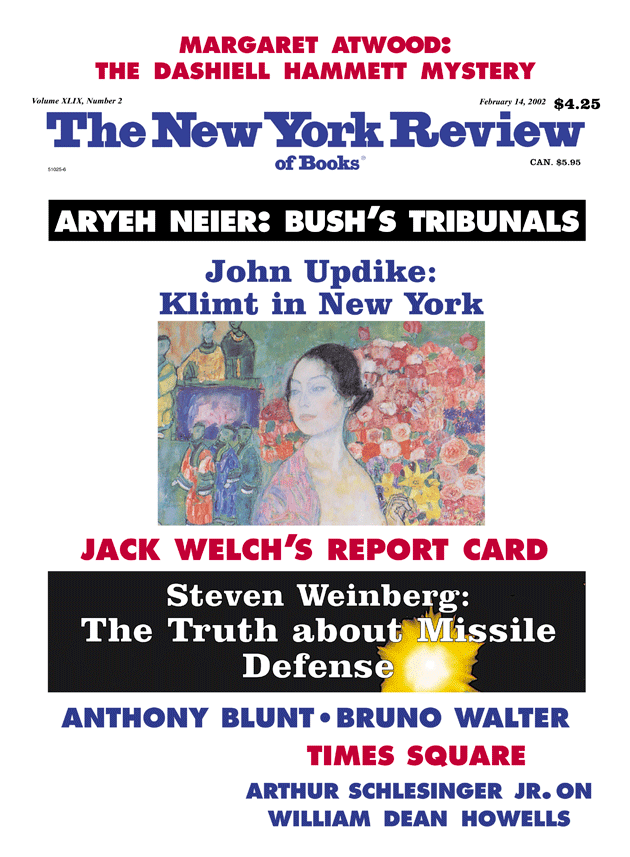

This Issue

February 14, 2002

1 J.W. Fortescue, A History of the British Army (Macmillan, 1910), Vol. 2, p. 76.

2 Numbers from Francis Parkman, A Half Century of Conflict (Little, Brown, 1924), Vol. 2, pp. 133, 150n., 317; Montcalm and Wolfe (Little, Brown, 1925), Vol. 2, p. 317; Alfred W. Crosby Jr., Epidemic and Peace, 1918 (Greenwood, 1976), pp. 206–207; Eric Bergerud, Touched with Fire: The Land War in the South Pacific (Viking, 1996), p. 454.

3 I don’t use the word “brilliant” lightly, but the reader should know that the author is a friend and allowed me to read the manuscript before publication.

4 Oxford University Press, 1975.

5 Crosby, Epidemic and Peace, pp. 314–315.