1.

In December 1993, a slum landlord in Baltimore named Lawrence Polakoff rented an apartment to a twenty-one-year-old single mother and her three-year-old son, Max.1 A few days after they moved in, Max’s mother was invited to participate in a research study comparing how well different home renovation methods protected children from lead poisoning, which is still a major problem endangering the health of millions of American children, many of them poor.

Congress had banned the sale of interior lead paint in 1978, but it remained on the walls of millions of homes nationwide, and there was no adequate federal program to deal with it. In Baltimore, most slum housing contained at least some lead paint, and nearly half of the children who lived in these houses had levels of lead in their blood well above that considered safe by the Centers for Disease Control. Max’s blood lead was low when he moved into Polakoff’s apartment, but Polakoff had been cited at least ten times in the past for violating Baltimore’s lead paint regulations, and several former tenants would later sue him for poisoning their children, so the boy was now in great danger.

The research study in which Max and his mother participated was run by two scientists affiliated with Baltimore’s Johns Hopkins University with support from the US Environmental Protection Agency. The scientists had formed a partnership with a local contractor, who identified slum landlords like Polakoff and urged them to rent preferentially to families with children aged six months to four years, just when they start crawling around the house and when lead exposure is most dangerous to the developing brain. If the parents agreed, their home would receive one of three different types of lead removal and their children—all of whom were healthy and normal and had low blood lead when they joined the study—would be given regular blood tests to see if their lead levels rose or fell.2

The three lead removal methods varied in cost and thoroughness. In twenty-five of the homes, areas with peeling paint were scraped and repainted and a doormat was placed by the main entrance. This was called “level I abatement” and the cost was not to exceed $1,650. Another twenty-five homes received more extensive “level II abatement” in which chipping paint was scraped and repaired, doormats were placed at all entrances, an easy-to-clean floor covering was installed, and collapsing walls were covered with plasterboard. The cost of this was not to exceed $3,500. In a third set of twenty-five dwellings, all of the above was done, but in addition, all windows were replaced. The cost of this “level III abatement” was not to exceed $7,000. Two control groups of twenty-five families each were also recruited into the study. One group lived in houses that had been built after the interior lead paint ban in 1978, and the other lived in older houses that were supposed to have been fully renovated in the past.

Max’s apartment received level II abatement. While carrying out the work, the contractor noticed some “hot spots”—areas of lead paint that could shed dangerous dust. He pointed them out to Polakoff, and also recorded their location on forms that were sent to the researchers, but no one told Max’s mother. Because of the cost limits for level II abatement, the hot spots were not repaired. When Max was tested six months later, his blood lead had nearly quadrupled, to a level known to cause permanent brain damage.

In 1990, Leslie Hanes, another young black single woman, moved into an apartment that was supposed to have been fully stripped of lead paint years earlier. In 1992, she gave birth to a daughter, Denisa, and in the spring of the following year, she too joined the toddler lead study.3 The day before Hanes signed the consent form, the contractor found that her apartment was not in fact lead-free. The remaining lead paint was removed, but by the following September Denisa’s blood lead level had more than tripled and was now six times higher than that currently considered safe by the Centers for Disease Control.

Denisa’s mother was not informed of the blood test result for another three months, by which time it was nearly Christmas. The research assistant who told her about it wished her happy holidays and advised her to wash her front steps more carefully and to keep eighteen-month-old Denisa from putting her hands in her mouth. When Denisa eventually entered school, she had trouble keeping up and had to repeat second grade. This came as a surprise to her mother, a former high school honors student. As Hanes told The Washington Post’s Manuel Roig-Franzia in 2001, sometimes Denisa came home crying because she thought she was stupid. “No, baby, you’re not stupid,” Leslie told her. “We just have to work harder.”

The link between lead poisoning and low IQ is based on the findings of epidemiological studies of large groups of children, so there’s no way of knowing for certain whether Denisa’s problems—or those of any particular child—were caused by lead poisoning. Some children have a low IQ because they were born that way or for some other reason, but because Denisa’s blood lead level was so high, it’s very likely that in her case, lead poisoning was the cause.

Why was such an unethical experiment ever allowed to proceed? In Lead Wars, CUNY’s Gerald Markowitz and Columbia University’s David Rosner convincingly show that the Baltimore toddler study emerged from a century of policymaking in which the US government, faced at various times with a choice between protecting children from lead poisoning and protecting the businesses that produced and marketed lead paint, almost invariably chose the latter. In the process, some of the scientific research on lead poisoning became corrupted.

Long before the Baltimore toddler study was even conceived, millions of children had their growth and intelligence stunted by lead-contaminated consumer products—and some five million preschool children are still at risk today. One expert even estimated that America’s failure to address the lead paint problem early on may well have cost the American population, on average, five IQ points—enough to double the number of retarded children and halve the number of gifted children in the country. Not only would our nation have been more intelligent had its leaders banned lead paint early on, it might have been safer too, since lead is known to cause impulsivity and aggression. Blood lead levels in adolescent criminals tend to be several times higher than those of noncriminal adolescents, and there is a strong geographical correlation between crime rates and lead exposure in US cities.4

In 2000, the two mothers sued the Johns Hopkins–affiliated Kennedy Krieger Institute, which employed the scientists. The mothers’ cases were thrown out by a lower court, but after an appeals court remanded the case to be heard, the mothers reached an undisclosed settlement with the institute. The ninety-six-page appeals court judgment compared the Baltimore lead study to the notorious Tuskegee experiment, in which hundreds of black men with syphilis were denied treatment with penicillin for decades so that US Public Health Service researchers could study the course of the disease.

In September 2011, twenty-five other parents involved in the toddler study filed a class action suit against the Kennedy Krieger Institute, accusing it of negligence, fraud, battery, and violating Maryland’s consumer protection act. Because the children’s medical records are confidential, only their parents and the researchers know for certain which—if any—of them had been poisoned, but all of the plaintiffs claim their children had been endangered. A decision has yet to be handed down.

Surprisingly, many public health experts and professional ethicists defended the Baltimore toddler lead study. Like all US medical research, it had been reviewed in advance by an ethics committee, in this case one based at Johns Hopkins. According to Markowitz and Rosner, the committee’s report questioned only whether the control group children, who lived in supposedly lead-free housing, would receive any benefit from the study, but said nothing about the potential harm to the children in the experimental groups. In a series of medical journal commentaries following the appeals court decision, many public health experts and professional bioethicists claimed that the decision was a disaster for their profession. The president of Johns Hopkins even predicted (incorrectly) that it would drive millions of dollars of research funding from the state. Others argued that research like the toddler study was necessary if affordable solutions to the problems of the poor were to be found.5

Of course researchers should try to find lower-cost solutions to serious public health problems like lead poisoning. However, three aspects of this experiment seem particularly outrageous. First, the landlords recruited into the study were encouraged to rent preferentially to families with small children, but didn’t inform the parents in advance that they were being considered for a research experiment or that they might be better off looking for lead-free housing. Second, the parents were not informed immediately when lead “hot spots” were found in the apartments, or when their children’s lead levels rose. If they had been, they might have taken steps to repair their houses on their own, or even moved out—a nuisance for the researchers, perhaps, but potentially of life-altering benefit to the children.

The third and most important point is that the researchers almost certainly knew in advance that level I and level II abatement, the two cheaper of the three methods used, would not protect children from being poisoned. Markowitz and Rosner don’t make this clear in Lead Wars, and so many readers might not realize just how problematic the toddler study really was.6 In the decade before the study commenced, the scientists conducted two other studies in which the homes of children with relatively high lead levels received treatments very similar to level I and level II abatement. Some of these homes also received bimonthly visits from a professional “dust control team,” something not offered to the families in the toddler study. After one year, lead levels in some of the children in these earlier studies had risen so high that the children had to be hospitalized.7 The most likely reason was that these cheaper abatement methods didn’t involve the replacement of lead-paint-trimmed windows, which can produce plumes of lead dust every time they are opened or closed.8

As one of the scientists wrote in a 1984 letter to The New England Journal of Medicine, such partial methods should not be used for protecting the general population: “more permanent changes,…such as replacement of a deteriorated window casement, may be a more effective long-term solution.”9 In subsequent studies, the scientists showed that window replacement was indeed crucial for the reduction of lead dust in contaminated houses,10 and that the amount of lead dust remaining in the toddler study homes after level I and level II abatement was similar to, and in some cases higher than, that found in scores of homes in which children had been poisoned in the 1980s.11 Why did the scientists then proceed to test two ineffective lead abatement methods on healthy children?

The researchers themselves seem to have been decent men. The senior researcher, J. Julian Chisolm, conducted a door-to-door survey of Baltimore slum children in the 1950s and found that on average, their lead levels were six times higher than among workers employed in the lead industry itself. He then helped develop a treatment known as chelation, in which lead-poisoned children are given injections of chemicals that bind to lead and draw it out of the tissues so that it can be excreted. The injections are painful, must be administered over several weeks, and don’t prevent brain damage, but they do prevent death.

Mark Farfel, Chisolm’s younger colleague, told The Baltimore Sun that it had always bothered him that children who were already sick received state-of-the-art hospital treatment, but so little was being done to prevent them from being poisoned in the first place. Farfel refused to speak to Markowitz and Rosner, and Chisolm was no longer alive when they began writing their book. But from the history they relate in Lead Wars, it’s possible to imagine how these men could not effectively resist the momentum of government indifference to the poor, pervasive racial prejudice, and careless decision-making that influenced government policymaking throughout the lead-poisoning crisis.

2.

The problem began in the early twentieth century when a spate of lead-poisoning cases in children occurred across the United States. The symptoms—vomiting, convulsions, bleeding gums, palsied limbs, and muscle pain so severe “as not to permit of the weight of bed-clothing,” as one doctor described it—were recognizable at once because they resembled the symptoms of factory workers poisoned in the course of enameling bathtubs or preparing paint and gasoline additives. One Dupont factory was even nicknamed “the House of the Butterflies” because so many workers had hallucinations of insects flying around. Many victims had to be taken away in straitjackets; some died.

By the 1920s, it was known that one common cause of childhood lead poisoning was the consumption of lead paint chips. Lead paint was popular in American homes because its brightness appealed to the national passion for hygiene and modernism, but the chips taste sweet, and it could be difficult to keep small children away from them. Because of its well-known dangers, many other countries banned interior lead paint during the 1920s and 1930s, including Belgium, France, Austria, Tunisia, Greece, Czechoslovakia, Poland, Sweden, Spain, and Yugoslavia.

In 1922, the League of Nations proposed a worldwide lead paint ban, but at the time, the US was the largest lead producer in the world, and consumed 170,000 tons of white lead paint each year. The Lead Industries Association had grown into a powerful political force, and the pro-business, America-first Harding administration vetoed the ban. Products containing lead continued to be marketed to American families well into the 1970s, and by midcentury lead was everywhere: in plumbing and lighting fixtures, painted toys and cribs, the foil on candy wrappers, and even cake decorations. Because most cars ran on leaded gasoline, its concentration in the air was also increasing, especially in cities.

Lead paint was the most insidious danger of all because it can cause brain damage even if it isn’t peeling. Lead dust drifts off walls, year after year, even if you paint over it. It’s also almost impossible to get rid of. Removal of lead paint with electric sanders and torches creates clouds of dust that may rain down on the floor for months afterward, and many children have been poisoned during the process of lead paint removal itself. Even cleaning lead-painted walls with a rag can create enough dust to poison a child. Gut renovating the entire house solves the problem, but this too may contaminate the air around the house for months.

Only in the early 1950s did companies start removing lead from most domestic products. But lead house paint remained in use until the congressional ban in the late 1970s, and it’s still on the walls of some 30 million American homes today.

Minuscule amounts of lead can poison a child. The signs of severe lead poisoning—convulsions, pain, coma, etc.—are typically seen when the concentration of blood lead exceeds sixty micrograms per deciliter (a tenth of a liter) of blood. This corresponds to the ingestion of a total amount of lead weighing about the same as six grains of table salt. According to the Centers for Disease Control, parents should be concerned if their children’s blood lead concentration exceeds five micrograms per deciliter, but studies have found that even infinitesimally low levels—down to one or two micrograms per deciliter—can reduce a child’s IQ and impair her self-control and ability to organize thoughts.

There is no way of knowing how many children were harmed over the past century by America’s decision not to ban lead from consumer products early on, but the number is somewhere in the millions. The most accurate national survey of lead poisoning was probably the 1976–1980 National Health and Nutrition Examination Survey, which found that 4 percent of all children under six—roughly 780,000—had blood lead concentrations exceeding thirty micrograms per deciliter, which was then thought to be the limit of safety.

Black children, the survey found, were six times more likely to have elevated lead than whites. The number of children with lead levels over five micrograms per deciliter—or for that matter over one or two—was obviously much higher, but there’s no way of knowing how high it was. The 1985 leaded gasoline ban and the gradual renovation of slum housing have since reduced the number of poisoned children, so that today, the CDC estimates that some 500,000 children who are between one and five years old have lead levels over five micrograms per deciliter.

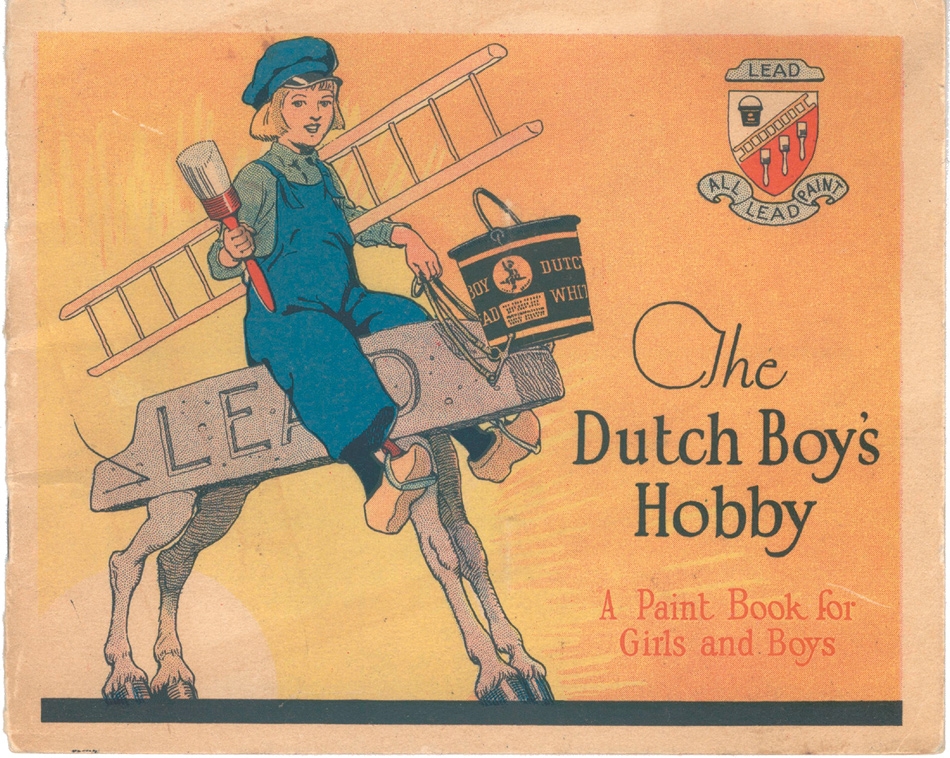

As the scale and horror of the lead paint problem came to light, the lead companies played down the bad news. When popular magazines like Ladies’ Home Journal began publicizing the dangers of lead poisoning in the 1930s and 1940s, lead and paint manufacturers placed cartoons in National Geographic and The Saturday Evening Post celebrating the joy that lead paint brought into children’s lives. Advertisements for Dutch Boy paint—which contained enough lead in one coat of a two-by-two-inch square to kill a child—depicted their tow-headed mascot painting toys with Father Christmas smiling over his shoulder.

Rather than banning lead from consumer products, government-sponsored public health campaigns characterized lead poisoning as a behavioral problem of the poor that they called “Pica”—a disorder in which people consume inedible substances—and advised parents to control their children. The lead companies also paid scientists who produced flawed studies casting doubt on the link between lead exposure and child health problems. When University of Pittsburgh professor Herbert Needleman first showed that even children with relatively modest lead levels tended to have lower intelligence and more behavioral problems than their lead-free peers, some of these industry-backed researchers claimed that his methods were sloppy and accused him of scientific misconduct (he has since been exonerated).

The companies also hired a public relations firm to influence stories in The Wall Street Journal and other conservative news outlets, which characterized Needleman as part of a leftist plot to increase government spending on housing and other social programs. So, just as the tobacco industry deliberately obfuscated the dangers of cigarettes until skyrocketing smoking-related Medicaid costs finally led state governments to sue the companies,12 and just as oil company–backed scientists now downplay the dangers of greenhouse gases, the lead industry also lied to Americans for decades, and the government did nothing to stop it.

During the 1980s, government officials finally agreed that the lead paint crisis was real, but they were conflicted about how to deal with it. In 1990, the Department of Health and Human Services developed a plan to remove lead from the nation’s homes over fifteen years at a cost of $33 billion—a large sum, but half the estimated cost of doing nothing, which would incur a greater need for special education programs, Medicaid and welfare payments for brain-damaged and disabled lead-poisoning victims, and other expenses. But the plan was opposed by the lead industry, realtors, landlords, insurance companies, and even some private pediatricians who objected to the extra bother of screening children. The plan was soon shelved, and instead, the EPA, looking for a cheaper way around the problem, commissioned the Baltimore toddler study.

Since then, the US government has spent less than $2 billion on lead abatement. This money has supported a number of exemplary state and nonprofit programs that work in inner cities, but it’s a tiny fraction of what’s needed, and about twenty times less than US spending on the global AIDS crisis since 2004 alone. It’s worth asking why both Republican and Democratic administrations appear to have cared so little about this threat to America’s children.

3.

Many people think the administration of public health programs is a bureaucratic business, like running a railroad or corporation. Researchers are supposed to identify programs to reduce hazards and governments are supposed to spend money on them. But as Markowitz and Rosner remind us, public health is inseparable from politics, and as history shows, governments are often reluctant to protect their populations without either activist pressure or the threat of social unrest.

It’s hard to imagine the squalor of eighteenth-century European cities. Just walking through certain Parisian neighborhoods in the 1780s could cause throat ulcers. By then, according to public health historian George Rosen, advances in science, medicine, and statistics had created the basic understandings and methods of public health, but they were being implemented only on a private, desultory basis. National programs, run by states, would have to await the political impulses of the French Revolution and the early nineteenth century. After the Revolution, Napoleon made public health a priority. He commissioned sewers, attempted to clean up the water supply, created more sanitary slaughterhouses and markets, and implemented the first government-funded, universal smallpox inoculation campaign.

Across the Channel, this lesson was not lost on the English. The sanitary reforms that commenced in London during the 1830s and 1840s were similarly motivated by fears that cholera epidemics and other diseases were not only reducing the productivity of workers, but also fostering revolutionary ideas. In the US, both activist pressure and fears of incipient unrest have accelerated some of our most important government public health programs, from the Progressive era when reformers pressured the government to ban child labor, improve working conditions in factories, reduce infant mortality, and create legal standards for food safety, sanitation, and housing; to the 1960s when the Sierra Club and other environmental groups put pressure on the government to impose regulations on pesticides and reduce air pollution, and underground networks of doctors and lawyers joined with the women’s movement to pressure the government to legalize abortion. In the 1980s gay groups like Act Up pressured the otherwise indifferent Reagan administration to invest in AIDS treatment programs.

Lead-poisoning prevention once had its partisans too, but they were marginal and rapidly stifled. During the 1960s, the Black Panthers and the Puerto Rican activist group the Young Lords set up community health clinics and carried out screening programs for tuberculosis and sickle cell anemia as well as lead poisoning. The historian Alondra Nelson’s excellent Body and Soul: The Black Panther Party and the Fight Against Medical Discrimination (2011) describes how these groups maintained that new civil rights laws and Great Society programs alone would never meet the needs of the poor unless the poor themselves had a voice in shaping them.13 The Panthers espoused violence and called for a separate black country. They certainly weren’t right about everything, but when it came to lead poisoning, they probably were.

By the early 1980s, the movements to achieve social justice led by Martin Luther King Jr., Malcolm X, and the Black Panthers had largely subsided, and with them, grassroots advocacy for the health of poor black children. Some scientists continued to raise the alarm about lead poisoning, including Herbert Needleman, Jane Lin-Fu of the US Children’s Bureau, Philip Landrigan of Mount Sinai Hospital in New York, and Ellen Silbergeld, the editor of the journal Environmental Research, but they lacked a strong social movement to take up their findings and fight for children at risk. Although there were some desultory campaigns against lead poisoning, neither the powerful women’s health movement nor environmental groups took up the issue in a sustained manner. The Obama administration has invested no more in this problem than George W. Bush’s did. Lead poisoning isn’t even on the CDC’s priority list of “winnable public health battles.”

Faced with a government aiming to spend as little as possible on a public health catastrophe, Chisolm and Farfel may well have felt they had no choice but to try to figure out how many corners they could cut. However, it’s also possible to imagine that these researchers might have worked with poor communities to use their research findings more creatively. They might have tried to mobilize public opinion in support of the original fifteen-year $33 billion lead removal plan. They could have tried to work with black politicians, religious leaders, civil rights groups, and parents’ organizations. If lead poisoning had been seen as a problem affecting middle-class children, this might well have happened. Instead, as Markowitz and Rosner put it, Chisolm and Farfel’s response was to “do another study.”

This Issue

March 21, 2013

1. The child’s name has been changed.

2. Some families were recruited in situ; in these cases, the apartments they were already living in were subjected to either type 1 or type 2 abatement.

3. The mother’s and daughter’s names have been changed.

4. See Shankar Vedantam, “Research Links Lead Exposure, Criminal Activity,” The Washington Post, July 8, 2007.

5. See, for example, Robert M. Nelson, “Nontherapeutic Research, Minimal Risk, and the Kennedy Krieger Lead Abatement Study,” IRB: Ethics and Human Research, Vol. 23, No. 6 (November–December 2001); Anna C. Mastroianni and Jeffrey P. Kahn, “Risk and Responsibility: Ethics, Grimes v Kennedy Krieger, and Public Health Research Involving Children,” American Journal of Public Health, Vol. 92, No. 7 (July 2002); B.P. Lanphear, “Editorial: The Conquest of Lead Poisoning: A Pyrrhic Victory,” Environmental Health Perspectives, Vol. 115, No. 10 (October 2007).

6. In their grant proposal for the toddler study, the researchers claimed that they intended to test “a new approach” to lead abatement, a statement quoted uncritically in Lead Wars. In fact, many of the children in the study were being placed in homes treated with methods that had been shown to fail.

7. J.J. Chisolm Jr., E.D. Mellits, and S.A. Quaskey, “The Relationship Between the Level of Lead Absorption in Children and the Age, Type, and Condition of Housing,” Environmental Research, Vol. 38, No. 1 (October 1985), pp. 31–45; E. Charney, B. Kessler, M. Farfel, and D. Jackson, “Childhood Lead Poisoning: A Controlled Trial of the Effect of Dust-Control Measures on Blood Lead Levels,” The New England Journal of Medicine, Vol. 309, No. 18 (November 3, 1983), pp. 1089–1093.

8. M.R. Farfel, J.J. Chisolm Jr., and C.A. Rohde, “The Longer-Term Effectiveness of Residential Lead Paint Abatement,” Environmental Research, Vol. 66, No. 2 (August 1994), pp. 217–221; M.R. Farfel and J.J. Chisolm Jr., “Health and Environmental Outcomes of Traditional and Modified Practices for Abatement of Residential Lead-Based Paint,” American Journal of Public Health, Vol. 80, No. 10 (October 1990), pp. 1240–1245.

9. Evan Charney et al., “Effect of Dust Control on Blood Lead,” The New England Journal of Medicine, Vol. 310, No. 14 (April 5, 1984), pp. 924–925.

10. Farfel et al., “The Longer-Term Effectiveness of Residential Lead Paint Abatement.”

11. Compare Table ES-2 in “Lead-Based Paint Abatement and Repair and Maintenance Study in Baltimore: Findings Based on Two Years of Follow-Up” (Environmental Protection Agency, 747-R-97-005 December 1997), to Table 4 in Mark R. Farfel and J. Julian Chisolm Jr., “An Evaluation of Experimental Practices for Abatement of Residential Lead-Based Paint: Report on a Pilot Project,” Environmental Research, Vol. 55, No. 2 (1991), pp. 199–212. It is necessary to convert micrograms per square foot into milligrams per square meter.

12. See Helen Epstein, “Getting Away with Murder,” The New York Review, July 19, 2007.

13. University of Minnesota Press, 2011.