D. John Barnett/Princeton University Press

The Trolley Problem, described as follows by David Edmonds in Would You Kill the Fat Man?: ‘You’re standing by the side of a track when you see a runaway train hurtling toward you: clearly the brakes have failed. Ahead are five people tied to the track. If you do nothing, the five will be run over and killed. Luckily you are next to a signal switch: turning this switch will send the out-of-control train down a side track, a spur, just ahead of you. Alas, there’s a snag: on the spur you spot one person tied to the track: changing the direction will inevitably result in this person being killed. What should you do?’

Are certain actions intrinsically wrong, or are they wrong only because of their consequences? Suppose that by torturing someone, you could save a human life, or ten human lives, or a hundred. If so, would torture be morally permissible or perhaps even obligatory? Or imagine that capital punishment actually deters murder, so that with every execution, we can save two innocent lives, or three, or a dozen. If so, would capital punishment be morally permissible or perhaps even mandatory? And how, exactly, should we go about answering such questions?

On one view, the best method, and perhaps the only possible one, begins by examining our intuitions. Some people have a firm conviction that it is wrong for the government to torture or execute people, even if doing so would deter murder. Some people think that it is plain that a nation should not bomb a foreign city, and thus kill thousands of civilians, even if the bombing would ultimately save more lives than it would cost. If we are inclined to agree with these conclusions, we might test them by consulting a wide range of actual and hypothetical cases. That process could help us to refine our intuitions, eventually bringing them into accord with one another, and also with general principles that seem to explain them, and that they in turn help to justify.

Many philosophers are inclined to this view. To test our moral intuitions and to see what morality requires, they have been especially taken with a series of moral conundrums that sometimes go under the name of “trolleyology.” Here are two of the most important of these conundrums.

- The Trolley Problem. You are standing by the side of a railway track, and you see a runaway train coming toward you. It turns out that the brakes have failed. Five people are tied to the track. They will be killed unless you do something. As it happens, you are standing next to a switch. If you pull it, the train will be diverted onto a side track. The problem is that there is a person tied to the side track, and if you pull the switch, that person will be killed. Should you pull the switch?

- The Footbridge Problem. You are standing on a footbridge overlooking a railway track, and you see a runaway train coming toward you. It turns out that the brakes have failed. Five people are tied to the track. They will be killed unless you do something. A fat man is near you on the footbridge, leaning over the railway, watching the train. If you push him off the footbridge, he will fall down and smash onto the track. Because he is so obese, he will bring the train to a stop and thus save the five people—but he will be killed in the process. Should you push him?

Most people have pretty clear intuitions about the two problems: in the Trolley Problem, you should pull the switch, but in the Footbridge Problem, you should not push the fat man. The question is: What is the difference between the two cases? Once we identify the answer, perhaps we will be able to say a great deal about what is right and wrong, not only about trolleys and footbridges but also about the foundations of ethics and the limits of utilitarian ways of thinking. What we say may bear in turn on a wide range of real-world questions, involving not only torture, capital punishment, and just wars, but also the legitimate uses of coercion, our duties to strangers, and the place of cost-benefit analysis in assessing issues of health and safety.

In an elegant, lucid, and frequently funny book, Would You Kill the Fat Man?, David Edmonds, a senior research associate at Oxford, explores the Trolley and Footbridge Problems, and what intelligent commentators have said about them. Philippa Foot, who taught philosophy at Oxford from the late 1940s until the mid-1970s, was the founding mother of trolleyology (and also the granddaughter of Grover Cleveland).

Though Oxford was dominated by men in this period, three of its leading philosophers were women: Foot, Elizabeth Anscombe (for whose appointment Foot was responsible), and Iris Murdoch. Edmonds explains that their mutual entanglements, philosophical and otherwise, were not without complications. Murdoch’s many discarded lovers included M.R.D. Foot, who became Philippa’s husband. The marriage badly strained the relationship between the two women (“Losing you & losing you in that way was one of the worst things that ever happened to me,” Murdoch wrote to Philippa). After M.R.D. Foot left his wife, she and Murdoch again became friends (and had a brief love affair themselves).

Anscombe once said that Foot was the only Oxford moral philosopher worth heeding, but the two had a terrible falling out over contraception and abortion, which Foot believed to be morally permissible. Anscombe vehemently disagreed, using the term “murderer” to describe “almost any woman who chose to have an abortion.” Foot thought that Anscombe was “more rigorously Catholic than the Pope.”

The Trolley Problem grew directly out of these debates. Haunted by World War II and its horrors, Foot rejected the view, widespread in Oxford circles at the time, that ethical judgments are merely statements of personal preference, and also the claim that the best way to analyze those judgments is to see how the relevant words are used in ordinary language. Foot believed that ethical judgments could be defended as a matter of principle. In 1967, she published an article, “The Problem of Abortion and the Doctrine of the Double Effect,” which introduced the Trolley Problem.

The Doctrine of Double Effect is well known in Catholic thinking. It distinguishes sharply between intended harms, which are impermissible, and merely foreseen harms, which may be permissible. According to Catholic theology, a woman is permitted to have a hysterectomy if necessary to remove a life-threatening tumor, even if a fetus in her womb will die as a result. The reason is that her intention is to save her own life, not to kill the fetus. To explore the distinction between intended and foreseen effects, Foot introduced a number of hypothetical dilemmas, including the Trolley Problem and the Transplant Case, which asks whether a surgeon should kill a young man in order to farm out his organs to save five people who are now at risk. Foot thought it plain that the surgeon is not permitted to kill the young man, even if lives would be saved on balance. (Interestingly, Foot did not reach a conclusion on the question of whether a woman may have an abortion when her life and health are not in danger.)

Perhaps the Doctrine of Double Effect can explain why it is right to pull the switch in the Trolley Problem (where you don’t intend to kill anyone) but wrong in the Transplant Case to kill the young man (whose death is intended). But in an intricate argument, Foot ultimately concluded that the best way to explain our conflicting intuitions in such cases is to distinguish not between intended and foreseen effects, but between negative duties (such as the duty not to kill people) and positive ones (such as the duty to save people). In a later article, Foot emphasized that in the Trolley Problem, the question is whether to redirect an existing threat, which might be morally acceptable, unlike the creation of a new threat (as in the Transplant Case).

The Footbridge Problem was devised and made famous by Judith Jarvis Thomson, a philosopher at the Massachusetts Institute of Technology. In asserting a moral distinction between the Trolley Problem and the Footbridge Problem, Thomson drew attention to people’s rights. In Thomson’s view, the fat man has a right not to be killed, but the same is not true of the person with the misfortune to be tied up on the side track in the Trolley Problem. “It is not morally required of us that we let a burden descend out of the blue onto five [persons] when we can make it instead descend onto one.” A bystander cannot push someone to his death, but he can legitimately seek to minimize “the number of deaths which get caused by something that already threatens people.”

Edmonds observes that in her work on these questions, Thomson was speaking as a follower of Immanuel Kant, who believed that people should not be treated merely as means to other people’s ends. Foot herself had referred to the “existence of a morality which refuses to sanction the automatic sacrifice of the one for the good of the many…[and] secures to each individual a kind of moral space, a space which others are not allowed to invade.” Many people believe that when we insist that it is morally unacceptable to push the fat man (or to steal people’s organs, or to torture or execute innocent people), we are responding to deeply felt Kantian intuitions, and rightly so.

Of course it is true that utilitarians reject those intuitions and insist that “the good of the many” is what matters. On utilitarian grounds, the Trolley Problem and the Footbridge Problem seem identical and easy to resolve. You should pull the switch and push the fat man, because it is better to save five people than to save one. But in a discussion that bears directly on the Footbridge Problem, and that might be enlisted on the fat man’s behalf, John Stuart Mill emphasized that from the utilitarian point of view, it might be best to adopt clear rules that would increase general “utility” in the sense of overall well-being or social welfare, even if they would lead to reductions of utility in some individual cases. In Edmonds’s words:

It would be disastrous if, each time we had to act, we had to reflect on the consequences of our action. For one thing, this would take far too much time; for another, it would generate public unease. Far better to have a set of rules to guide us.

In principle, utilitarian judges should be willing to convict an innocent person, and even to execute him, if the consequence would be to maximize overall well-being. But a utilitarian might acknowledge that if our legal system were prepared to disregard the question of guilt or innocence, it might collapse. In many situations, we live by simple rules that perform well as far as the aggregate is concerned and that make life a lot easier than case-by-case judgments would. Edmonds writes:

It would be unsettling to have to worry that any time you visited a sick relative in hospital, it might be you who ended up under the scalpel with surgeons cutting out your organs. So, we should adhere to the rough-and-ready rules.

Even if we accept this conclusion, utilitarians should be prepared to admit that it would be acceptable and indeed obligatory to push the fat man under imaginable circumstances—if, for example, social cohesion would not be at risk, and no one would ever learn what happened. In response, Edmonds invokes a famous argument by Bernard Williams, who contended that in problems of this kind, utilitarianism points in the wrong direction. Williams devised a now-famous case, closely akin to the Footbridge Problem, in which a character named Jim finds himself in a South American town in which armed men are getting ready to shoot twenty innocent people. The leader of the men tells Jim that if he shoots one of the twenty, the other nineteen will be freed. Should Jim shoot?

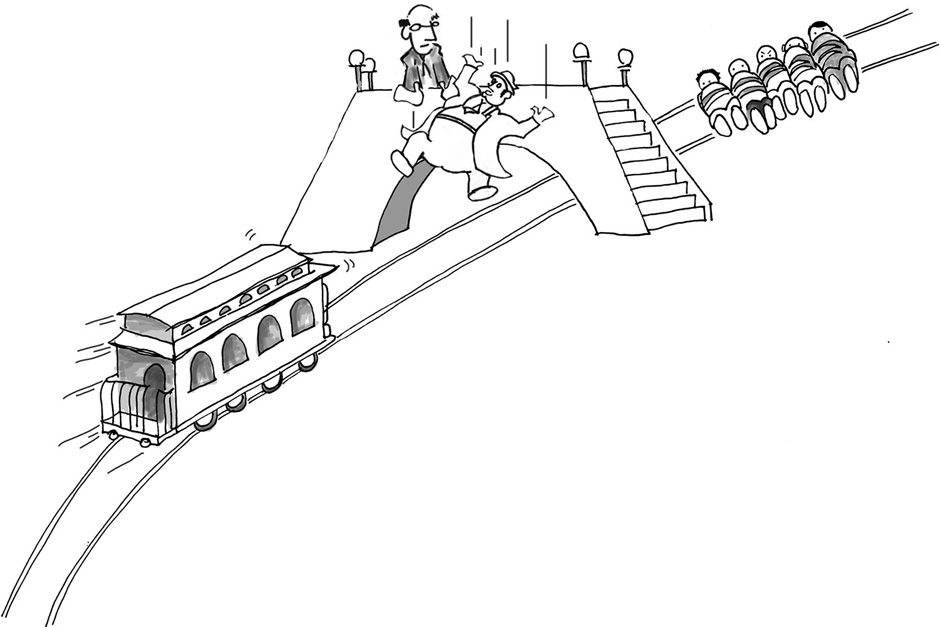

D. John Barnett/Princeton University Press

The Footbridge Problem, in which, Edmonds writes, ‘You’re on a footbridge overlooking the railway track. You see the trolley hurtling along the track and, ahead of it, five people tied to the rails…. There’s a very fat man leaning over the railing watching the trolley. If you were to push him over the footbridge, he would tumble down and smash on to the track below. He’s so obese that his bulk would bring the trolley to a shuddering halt. Sadly, the process would kill the fat man. But it would save the other five. Should you push the fat man?’

Williams contends that a person’s integrity matters, and hence that utilitarians are wrong to believe that because twenty is more than one, Jim’s choice is an easy one. In Edmonds’s words, the problem is that “all the utilitarian cares about is what produces the best result, not who produces this result or how this result is brought about.” Many philosophers believe that Williams is on the right track and that he has indeed identified a serious objection to utilitarianism, one that helps rescue our intuitions with respect to the Footbridge Problem.

In a related discussion, Williams explores the case of a man who, faced with a situation in which he can save only one of two people in peril, chooses to save his wife. Williams memorably notes that if the man pauses to think about whom he ought to save and whether he ought to be impartial, he is having “one thought too many.” Some people might say the same kind of thing about the Footbridge Problem. From the moral point of view, the correct answer might be a clear and simple refusal to push an innocent person to his death.

Edmonds is aware that psychologists and behavioral economists have raised serious questions about intuitive thinking, and that these questions might create problems for such philosophers as Foot, Thomson, and Williams.1 In their pioneering work, Daniel Kahneman and Amos Tversky have shown that our intuitions are highly vulnerable to “framing effects.” If people are told that of those who have an operation, 90 percent are alive after five years, they tend to think it’s a good idea to have that operation. But if they’re told that of those who have an operation, 10 percent are dead after five years, they tend to think it’s a bad idea to have the same operation. The setting matters as well. When people are placed in a good mood—for example, by good weather or by reading happy stories—their intuitions might be different from what they are when they are angry or sad.

Behavioral scientists (starting with Kahneman and Tversky) have also shown that people rely on heuristics, or simple rules of thumb, that generally work well, but that can produce systematic errors. When people depend on heuristics, they substitute an easy question for a hard one. Kahneman associates heuristics with what he calls “fast thinking,” found in the more intuitive forms of cognition that psychologists have described as System 1. “Slow thinking,” avoiding heuristics in favor of more deliberative approaches, is a product of System 2.2

Consider the representativeness heuristic, in accordance with which our intuitive judgments about probability are influenced by our assessments of resemblance (the extent to which A “looks like” B). The representativeness heuristic is famously exemplified by people’s answers to questions about the likely career of a hypothetical woman named Linda, described as follows:

Linda is 31 years old, single, outspoken, and very bright. She majored in philosophy. As a student, she was deeply concerned with issues of discrimination and social justice, and also participated in anti-nuclear demonstrations.

People were asked to rank, in order of probability, eight possible futures for Linda. Several of these were fillers (such as psychiatric social worker and elementary school teacher); the two crucial ones were “bank teller” and “bank teller and active in the feminist movement.”

Most people say that Linda is less likely to be a bank teller than a bank teller who is also active in the feminist movement. This is an obvious mistake (called a conjunction error), in which characteristics A and B together are thought to be more likely than characteristic A alone.3 As a matter of logic, a single outcome has to be at least as likely as one that includes both that outcome and another. The error stems from the representativeness heuristic. Linda’s description seems, as a matter of quick judgment, to match “bank teller and active in the feminist movement” a lot better than “bank teller.” Reflecting on the example, Stephen Jay Gould observed that “I know [the right answer], yet a little homunculus in my head continues to jump up and down, shouting at me—‘but she can’t just be a bank teller; read the description.’”4
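The rule of logic at work here can be written out in one line. As a sketch, let T stand for “Linda is a bank teller” and F for “Linda is active in the feminist movement”; these labels are introduced here for illustration and are not taken from the original study, and the sketch assumes that P(T) is greater than zero so that the conditional probability is defined. By the definition of conditional probability, and because no probability can exceed 1:

```latex
\[
P(T \cap F) \;=\; P(T)\,P(F \mid T) \;\le\; P(T)
\]
```

The two sides are equal only if a bank teller fitting Linda’s description is certain to be active in the feminist movement. In every other case the conjunction is strictly less probable than “bank teller” alone, however well it seems to match the description.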

With respect to trolleyology, people’s intuitions are affected by framing and setting as well. When people are first asked about the Footbridge Problem, and then turn to the Trolley Problem, they’re much less likely to want to pull the switch in the latter case. If people watch a comedy before being asked about the Footbridge Problem, they’re more likely to want to push the fat man than if they watch a tedious documentary instead. If the fat man is called Tyrone Payton, liberals are less likely to push him off the footbridge than if his name is Chips Ellsworth III (as it turns out, conservatives are unaffected by such names). And if the question involves not the fat man but a fat monkey (who must be pushed to save five monkeys), people get a lot more utilitarian, and are more willing to push.

In recent years, neuroscientists have been investigating people’s intuitions as well. How does the human brain respond to the Trolley and Footbridge Problems? Joshua Greene, a psychologist at Harvard, has done influential work on this question, trying to match responses to actual changes in brain states.5 His basic claim is that far more than the Trolley Problem, the Footbridge Problem engages the brain’s emotional sectors. Greene and his coauthors find “that there are systematic variations in the engagement of emotions in moral judgment,” and that brain areas associated with emotion are far more active in contemplating the Footbridge Problem than in contemplating the Trolley Problem.6 A striking claim here is that the emotional parts of the brain, which evolved earlier and operate more quickly and automatically, are more likely to be associated with certain feelings of moral obligation—leading to approaches that philosophers call “deontological.”

Greene and various coauthors have engaged in a series of studies to support this conclusion. For example, people with the kind of brain damage that interferes with regions generally associated with emotion rather than cognition are far more likely to push the fat man than those without any such damage.7 So too, people who have a visual rather than verbal “cognitive style”—in the sense that they perform better on tests of visual accuracy than on tests of verbal accuracy—are more likely to favor deontological approaches (and thus to refuse to push the fat man). In the authors’ words, “visual imagery plays an important role in triggering the automatic emotional responses that support deontological judgments.”8

It might seem tempting to take these findings as a point for utilitarianism, but the temptation should be resisted. Suppose that emotional sectors of the brain do produce immediate deontological intuitions. It does not follow that those intuitions are wrong. To know whether they are, we need a moral argument, not a brain scanner.

Alert to this point, Edmonds ends by briefly offering his own view, which is that the Doctrine of Double Effect provides the right solution, and that Foot and Thomson led philosophers in the wrong direction by failing to embrace it. Recall that this doctrine distinguishes between intended harms, which are morally impermissible, and merely foreseen harms—as when an operation for a life-threatening tumor may cause an abortion. Edmonds insists that the doctrine “has powerful intuitive resonance,” and that there is a real difference between “intending and merely foreseeing.” For Edmonds, the solution to the Trolley and Footbridge Problems lies in accepting that difference, which helps to account for our intuitions. He thinks that solution has “many virtues: it is simple and economic, it doesn’t seem arbitrary, and it has intuitive appeal across a broad range of cases. It is the reason why the fat man would be safe from me at least.”

Edmonds has written an entertaining, clear-headed, and fair-minded book. But his own discussion raises doubts about a widespread method of resolving ethical issues, to which he seems committed, and of which trolleyology can be counted as an extreme case. The method uses exotic moral dilemmas, usually foreign to ordinary experience, and asks people to identify their intuitions about the acceptable solution. When the method is used carefully,9 the intuitions are not necessarily treated as authoritative, but they are given a great deal of respect, at least if they are firm. Hence Edmonds’s claim, in defense of the Doctrine of Double Effect, that it “has intuitive appeal across a broad range of cases,” and hence the emphasis—by Foot, Thomson, and Williams—on our intuitive reactions to particular cases that seem to confound utilitarian thinking. But should we really give a lot of weight to those reactions?

If we put Mill’s points about the virtues of clear rules together with a little psychology, we might approach that question in the following way. Many of our intuitive judgments reflect moral heuristics, or moral rules of thumb, which generally work well. You shouldn’t cheat or steal; you shouldn’t torture people; you certainly should not push people to their deaths. It is usually good, and even important, that people follow such rules. One reason is that if you make a case-by-case judgment about whether you ought to cheat, steal, torture, or push people to their deaths, you may well end up doing those things far too often. Perhaps your judgments will be impulsive; perhaps they will be self-serving. Clear moral proscriptions do a lot of good.

For this reason, Bernard Williams’s reference to “one thought too many” is helpful, but perhaps not for the reason he thought. It should not be taken as a convincing objection to utilitarianism, but instead as an elegant way of capturing the automatic nature of well-functioning moral heuristics (including those that lead us to give priority to the people we love). If this view is correct, then it is fortunate indeed that people are intuitively opposed to pushing the fat man. We do not want to live in a society in which people are comfortable with the idea of pushing people to their deaths—even if the morally correct answer, in the Footbridge Problem, is indeed to push the fat man.

On this view, Foot, Thomson, and Edmonds go wrong by treating our moral intuitions about exotic dilemmas not as questionable byproducts of a generally desirable moral rule, but as carrying independent authority and as worthy of independent respect. And on this view, the enterprise of doing philosophy by reference to such dilemmas is inadvertently replicating the early work of Kahneman and Tversky, by uncovering unfamiliar situations in which our intuitions, normally quite sensible, turn out to misfire. The irony is that where Kahneman and Tversky meant to devise problems that would demonstrate the misfiring, some philosophers have developed their cases with the conviction that the intuitions are entitled to a great deal of weight, and should inform our judgments about what morality requires. A legitimate question is whether an appreciation of the work of Kahneman, Tversky, and their successors might lead people to reconsider their intuitions, even in the moral domain.

Nothing I have said amounts to a demonstration that it would be acceptable to push the fat man. Kahneman and Tversky investigated heuristics in the domain of risk and uncertainty, where they could prove that people make logical errors. The same is not possible in the domain of morality. But those who would allow five people to die might consider the possibility that their intuitions reflect the views of something like Gould’s homunculus, jumping up and down and shouting, “Don’t kill an innocent person!” True, the homunculus might be right. But perhaps we should be listening to other voices.

1. In Experiments in Ethics (Harvard University Press, 2008), Kwame Anthony Appiah emphasizes the difficulties raised by behavioral science for philosophers’ use of moral intuitions.

2. See Daniel Kahneman, Thinking, Fast and Slow (Farrar, Straus and Giroux, 2011).

3. On some of the complexities in the example, which has spawned a large literature, see Barbara Mellers, Ralph Hertwig, and Daniel Kahneman, “Do Frequency Representations Eliminate Conjunction Effects?,” Psychological Science, Vol. 12, No. 4 (July 2001).

4. Stephen Jay Gould, Bully for Brontosaurus: Reflections in Natural History (Norton, 1991), p. 469.

5. Greene develops his arguments in detail in Moral Tribes: Emotion, Reason, and the Gap Between Us and Them (Penguin, 2013).

6. Joshua D. Greene et al., “An fMRI Investigation of Emotional Engagement in Moral Judgment,” Science, Vol. 293, No. 5537 (September 14, 2001).

7. See Michael Koenigs et al., “Damage to the Prefrontal Cortex Increases Utilitarian Moral Judgements,” Nature, Vol. 446 (April 19, 2007).

8. Elinor Amit and Joshua D. Greene, “You See, the Ends Don’t Justify the Means: Visual Imagery and Moral Judgment,” Psychological Science, Vol. 23, No. 8 (August 2012).

9. Frances Kamm, a student of Thomson’s, may well be its most careful and ingenious current expositor, and she has written detailed defenses of the method. See Frances Kamm, Morality, Mortality: Vol. 1 (Oxford University Press, 1993); and Intricate Ethics (Oxford University Press, 2007). Note her suggestion that “my approach is generally to stick with our common moral judgements, which I share and take seriously,” and also her arresting claim that “I don’t really have a considered judgement about a case until I have a visual experience of it,” producing “the intuitive judgement of what you really should do.” See Alex Voorhoeve, “In Search of the Deep Structure of Morality: Frances Kamm Interviewed,” Imprints, No. 9 (2006).