At medical school I was taught by Dr. Nimmo, an elderly Edinburgh physician who wore a pinstripe suit, carried a heavy gold watch, and kept his combed white hair in place with pomade. If he realized that a patient was hard of hearing he’d take his stethoscope from his jacket pocket and ceremoniously place the earpieces into their ears. Taking the other end in his hands, he’d speak slowly and clearly into it as if into a microphone. The focus and amplification of the stethoscope meant that he didn’t have to shout, and the privacy of the patient on the open ward was preserved. For the most part his patients found the role reversal hilarious; occasionally the medical students did too. I remember him turning to one student who was sniggering a little too conspicuously. “There’s nothing funny about trying to communicate properly,” he said. “Use whatever means you can to understand and be understood.”

In my clinical practice I still make use of his advice when meeting patients who are hard of hearing. Most of those who try on my stethoscope appreciate it, and yes—most of them find it funny. It’s less funny overcoming communication difficulties with those who are profoundly deaf. If a Sign interpreter is not available, and the patient’s lip-reading skills are not excellent, we cover pages of paper with written questions and answers. Even so, much of the breadth and subtlety of the consultation is lost.

One of my profoundly deaf patients is Miss Black, a woman in her early fifties whose lip-reading skills are good. But our appointments still take much longer than if she were hearing. Often we don’t have the luxury of discussing her emotional well-being, but focus on her physical complaint, diagnosis, and a plan for treatment. She lives alone on welfare benefits with little involvement in what the deaf sometimes call the “hearing world,” though she has many friends among the Edinburgh deaf community. Recently I needed to talk to her without the time pressure of the clinic, so I pedaled down from the office to visit her at home.

Beside a button on her front door was a sign: “Press Hard—Don’t Knock.” The wire was connected to a flashing light inside rather than a bell. The walls of her small apartment were covered with pictures, and the floors with toys for her cats. Among her small collection of books she had a medical encyclopedia; she told me she combs it for clues before our appointments. After years of encounters with doctors she’s learned that it’s quicker to propose her own ideas about diagnosis, and suggest her own treatments.

Outside there were workmen digging up the road. I was irritated by the pneumatic drills but of course they made no difference to her. With the luxury of time she began telling me about her earliest memories: they were of frustration and despair, being treated as if she was stupid (in her file I’d seen letters that referred to her as “retarded”). As a little girl she didn’t understand why people were so often angry with her, and why they were moving their mouths. At age four, it having been realized that she was deaf, she was sent to a special school to learn Sign. “And when did you start learning to lip-read?” I asked her. She held up eight fingers. “Lip-reading hard,” she said with the distinctive, hard-won voice many deaf people have. She held her hand low, at about the height of a four-year-old, and put two bunched fists to her eyes to symbolize weeping. Then she shifted her hand higher, to about the height of an eight-year-old, and began to nod and smile. “At eight can understand.”

Experts in language acquisition say that the first three years of a child’s life are the most crucial in developing the conceptual frame to build fluent language. By the time Miss Black began to learn language (and Sign language is as delicate, sensitive, and complete a means of expression as any spoken language) that critical period was past. She didn’t say as much, but she’d been living with the consequences of that delay ever since. It was there in her lack of involvement with the hearing world, in her inability to find satisfying work, in the clumsy handwriting with which she communicated.

In I Can Hear You Whisper, Lydia Denworth makes what she calls “an intimate journey through the science of sound and language.” That critical window of language acquisition, missed to such detrimental effect in Miss Black’s case, was of immense significance to Denworth: when her son was about a year old she learned that he was very hard of hearing and might soon be deaf. She had noticed something was amiss because the development of his language was much slower than it had been for her other two sons.

Children born deaf of deaf parents have no language delay at all: being exposed to Sign from birth enables a baby to develop as full a vocabulary as the hearing, not just to describe the world, but to manipulate abstract concepts. As Homo sapiens—the “thinking” or “knowing” man—it’s through language that we flourish, because language has provided us a tool kit for abstract thought. Children born deaf of hearing parents are at a disadvantage in this respect: either they must learn to lip-read (which is slow and difficult, as Miss Black pointed out) or they must be exposed to Sign from as young an age as possible (Sign is actually easier to acquire than speech: children can be fully fluent by three).

Over the last twenty-five years there has been an eruption of interest in a third possibility: the insertion of cochlear implants. It’s now common in countries with well-funded health care systems for deaf children to be fitted with one or two of these devices (in Australia, 80 percent of deaf children have them). Having one on each side improves comprehension in a noisy environment, as well as sound localization. However, 15 to 20 percent of recipients gain little benefit from them and, contrary to some claims, they don’t reproduce normal hearing. Instead they transmit a simplified, broken-down representation of the acoustic world (one of the technology’s pioneers, Michael Merzenich, likens the signal from implants to “playing Chopin with your fist”). But despite these limitations, they have the potential to magnificently enhance the understanding and acquisition of spoken language.

In Europe and the United States, the eighteenth and early nineteenth centuries saw the rapid spread of schools for the deaf, each teaching the various national languages of Sign. Many deaf individuals of that period wrote eloquently about the liberation they’d experienced through Sign. But during the late nineteenth century the emphasis in education shifted from learning Sign to the acquisition of spoken language through lip-reading and speech.

That shift, perhaps begun with the best of intentions,¹ was a disaster for the deaf community. Speaking and lip-reading take years of concentrated work that squeezes out education in most other areas of human proficiency and knowledge. By the mid-twentieth century educational attainment among the deaf had plummeted, literacy skills of deaf adults were poor, and the deaf were significantly more marginalized than they had been a century earlier. Miss Black was fortunate to learn Sign when she did.

Andrew Solomon’s 1994 article in The New York Times Magazine, “Defiantly Deaf,” illuminated just how damaging the emphasis on oral skills could be. He quotes Jackie Roth, a woman educated in an oralist environment:

We felt retarded…. Everything depended on one completely boring skill, and we were all bad at it…. We spent two weeks learning to say “guillotine” and that was what we learned about the French Revolution. Then you go out and say “guillotine” to someone with your deaf voice, and they haven’t the slightest idea what you’re talking about.

If the purpose of deaf education was to enable those of us who can’t hear to take a meaningful part in national and cultural life, it was failing.

During the 1980s deaf individuals began to assert their rights as members of a distinct and valuable community. Books like When the Mind Hears² and Deaf in America³ framed a debate about how the hearing appreciated (or more poignantly, didn’t appreciate) those who couldn’t hear, and called on deaf individuals to take pride in their uniquely visual culture. Political rights and accommodations were won, and the capitalized “D” in “Deaf” was adopted as a marker of pride in a cultural identity.

Cochlear implants, which became available in experimental form just as Deaf identity was gathering confidence, were for many years seen as an insult—in some quarters they still are. If deafness is a difference, rather than an impairment, why implant wires into the brains of children (though the wire goes into the cochlea—a sense organ—not the brain)? Why, for that matter, consider deafness a “health care” issue at all? At the shrill end of the debate, parents who wanted implants for their children were branded child abusers, while at the other end, cochlear implants were hailed as a miracle of biblical proportions.

We’re in highly emotive territory here, sacred to some, debating cultural identity, disability and enablement, and how a society accommodates diversity. We have to proceed with care. But that can be difficult when a baby or toddler is thrown into the debate, her clock for language acquisition ticking, and her parents asked to make a decision that would test the wisdom of Solomon: to implant, or not to implant. “Deafness as such is not the affliction,” Oliver Sacks has pointed out, “affliction enters with the breakdown of communication and language.” How then to safeguard your child’s best chance of acquiring language and enabling communication? Denworth’s book explores the science surrounding that question. It is a personal memoir as well as a journalist’s inquiry by a mother who urgently needed to find her own answer.

Through cataract surgery, the blind had been made to see; could the deaf be made to hear? If the deaf could be made to hear, so the majority argument went, then the debate over the relative merits of oralism and Sign would become redundant. The earliest physicians had tried knocking deaf people on the ear with a stone, and since that didn’t work, later ones for the most part gave up on trying to “fix” deafness. That changed during the 1950s in Paris, when a surgeon called Charles Eyriès implanted an induction coil into the auditory nerve of Monsieur G., who’d been rendered deaf through surgery to remove tumors near his ears. When the coils were electrified G. heard a sound like cloth ripping, but little else. A Los Angeles ear surgeon, Bill House, heard of Eyriès’s experiment and through working on cadavers in the morgue figured out a more refined system: feeding a fine electrode wire into the snail-shaped cochlea of the ear. His initial trials, in 1961, were promising.

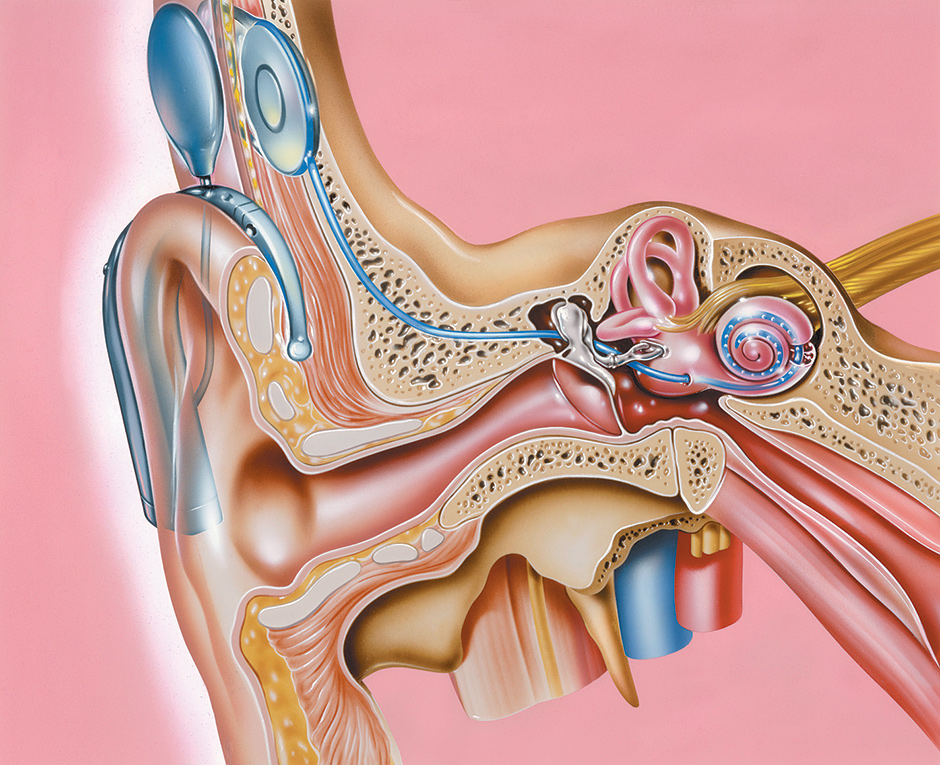

Science Photo Library/Corbis

A section of the outer and inner ear showing a cochlear implant, a prosthetic hearing device. Sound from an external microphone (behind the external ear, top left) is transmitted by wires to electrodes that are implanted into the cochlea (the coil at right). The electrodes transmit sound as electrical impulses to the auditory nerve and into the brain, allowing the person to hear.

The origins of our inner ear lie hundreds of millions of years back in evolution, when primitive fish began to develop hollows in the skin that were sensitive to waves of pressure from water around them, as well as to water’s movement as they pitched and rolled. With time the nerves became more refined, the hollows became tubes of seawater, and those tubes eventually closed off and buried themselves in the head. Further on in evolution, bones that were originally related to the jaw migrated and miniaturized, becoming the amplifying bones of the ear. The tubes dedicated to sensing rotational movement became our semicircular canals (balance), and it’s theorized that parts involved in sensing the pressure waves became our cochleas (hearing). In the composition of their salts, the fluids of our inner ear still carry the memory of that primordial ocean.

Within the human cochlea is a thin sensitive membrane, around thirty to thirty-five millimeters long, wound into a spiral and bathed in this salty fluid. This membrane resonates with sound, sensing from high to low frequency along its length, while associated nerve cells convey that resonance to the brain. To describe it simplistically, a cochlear implant is a series of very fine electrodes that lie along the length of the membrane. Sound is coded in a receiver behind the ear (often held on by magnets) and transmitted to electrodes, which then stimulate the nerve cells along the membrane in a way broadly analogous to sound. After William House’s first device in the early 1960s, groups in California, Melbourne, Vienna, and Utah spent the next three decades working almost independently on the research problem of how to optimize this ostensibly simple, but devilishly complicated, idea.

As an accomplished investigative reporter as well as a mother anxious to choose the best for her son, Denworth has interviewed most of the pioneers of this technology. Her inquiry takes her into a comprehensive examination of auditory physiology, the science of phonation, or how speech is produced, and the electronic coding of speech. A healthy cochlea can distinguish thousands of tones, but modern cochlear implants have just twenty-two channels. What’s astounding is that these devices, which reduce the complexity and plurality of our acoustic world to just twenty-two stimuli, are so successful in making speech comprehension possible. More astounding still is that each pioneering group developed a different coding system for sound, but had broadly comparable success. It seems you can send widely divergent signal patterns down a wire and the brain still makes sense of them. To understand more about why that might be, as well as what drives the urgency about whether to implant, we have to move from the cochlea down the acoustic nerve and into the brain.

Once they pass the cochlear nuclei—the first relay station for auditory information within the brainstem—nerve impulses from each side are dovetailed and compared; they move in parallel tracks up to the midbrain and then the cerebral cortex. It’s the midbrain connections that make us startle at a bang—a primitive, preconscious level of reaction; but it is the cerebral cortex that integrates those impulses into our experiential world. The cortex “learns” surprisingly quickly how to make sense of a new sound—if that sound is repeated and has meaning. This learning can occur at any age, but it’s in children that learning occurs most efficiently, and children that urgently need language to conceptualize the world.

In the mid-1990s, when I took a degree in neuroscience, the neuronal plasticity responsible for learning was thought to occur at the level of the synapse. As technology improved so did the resolution of neuroscience’s discoveries: it’s now suspected that learning occurs at the level of dendritic spines—tiny portions on the receiver tendrils on each neuron. My contemporaries were limited to MR (magnetic resonance) and PET (positron emission tomography) if the location of brain activity was to be examined, and EEG (electroencephalography) if the timing of brain activity was under question. It wasn’t possible then to get information on both location and timing simultaneously; but neuroscientists now have MEG (magnetoencephalography) scanners that resolve this problem.

In the sixteenth century it was believed that magnets had souls, that swallowing them would give you eloquence, and that their property of “action at a distance” was related to the attraction of love. Now we know that eloquence and love, as they are expressed in the brain, do create magnetic fields—each neuron, as it fires, generates a minuscule magnetic field around the axis of its impulse. Imagine these fields as the ripples on a pond after throwing a stone, then imagine the undulating surface sheen of the water stripped off and wrapped around your head. Haloes of magnetic fields ripple from our brains as we talk, think, experience the world.

MEG scanners pinpoint these almost impossibly small magnetic fields with amazing accuracy. They have shown us that only fifteen milliseconds after hearing a sound an impulse has reached the brainstem, and only a few milliseconds later it’s in the cortex. But the computation involved in understanding sounds is much slower: it takes sixty to one hundred milliseconds before large assemblies in the auditory cortex are activated, essentially “looking up” each sound. If the sound is familiar this process is quicker and generates less of a ripple, because the brain does less work to comprehend it. At around two hundred milliseconds conscious awareness begins, but the sound isn’t lexically understood until more like three or four hundred milliseconds have passed. When we consider languages worldwide, we find that syllables last between 150 and 200 milliseconds, a constant that appears related to the physiology of the brain. It takes us almost six hundred milliseconds (over half a second) to recognize unexpected words or discordant tones in music, or to make the looping connections necessary to make sense of discourse. These revelations emphasize just how much our brains do not passively sense the world; they weave it from threads of sensory information, moment to moment.

Applying the revelations from MEG scanners to the question of how children learn and acquire language has shown an intimate link not just between sound and learning to speak, but sound and literacy. Repetition, rhyme, and rhythm are all crucial in this process; the genius of Dr. Seuss has a basis in neurobiology. Like the beating down of paths through a garden of briars, some pathways through the tangle of neuronal connections are strengthened by repetition, while others, which are less used, become obsolete.

Late language acquisition is much clumsier than in childhood because many potential connections have already been lost, so adults must involve higher and slower brain centers. Training your ear, and improving the focus of your attention, actually speeds up the process of silent reading. Another astonishing finding is that acquiring Sign from babyhood improves later reading speed in English. Lydia Denworth decided to get a cochlear implant for her son because, since she was not a native Signer, it gave him the best chance of acquiring language quickly. That he is now thriving in mainstream schooling is, from her perspective, ample vindication of her and her husband’s decision.

Early in her inquiry she meets hearing children of deaf adults, and sees how uniquely placed they are to pass between the worlds of the deaf and hearing, “but they absorb some of the pain of their parents with each border crossing.” Toward the end she meets Sam Swiller, a deaf man who had an implant successfully inserted as an adult—forcing me to consider asking some of my own patients, such as Miss Black, if they’d like to be put forward for consideration of one. “The wall between the hearing world and the deaf world is getting shorter,” Swiller says. “It’s a more porous border.” When Denworth asked him why he went for an implant, despite being fully involved in the Deaf community and by then fluent in Sign, he answered, “Life is difficult and you need every type of weapon in your quiver, every resource possible.”

I Can Hear You Whisper is a triptych of reportage, popular science, and memoir. As reportage into the controversy surrounding cochlear implants it’s both timely and rigorous, though Denworth admits her own pro-implant bias. As popular science it’s enthralling, offering a window onto the latest research into perception, language, and the weaving of conscious awareness. As a memoir it is tender and involving: accompanying Denworth and her son on their journey, and imagining making the same journey with my own children, I was often deeply moved. To implant or not to implant is the question embedded in the fabric of this book, and there are no easy answers. But I remember the wisdom of my old teacher at medical school, as he placed his stethoscope gently into hard-of-hearing patients’ ears: “Use whatever means you can to understand and be understood.”

1. Some historians of Deaf culture can be less generous: for Harlan Lane and others the preference of speech over Sign was led by fear of difference rather than a desire to promote the greater involvement of the deaf in society.

2. Harlan L. Lane, When the Mind Hears: A History of the Deaf (Random House, 1984); reviewed by Oliver Sacks in these pages, March 27, 1986.

3. Carol Padden and Tom Humphries, Deaf in America: Voices from a Culture (Harvard University Press, 1988).