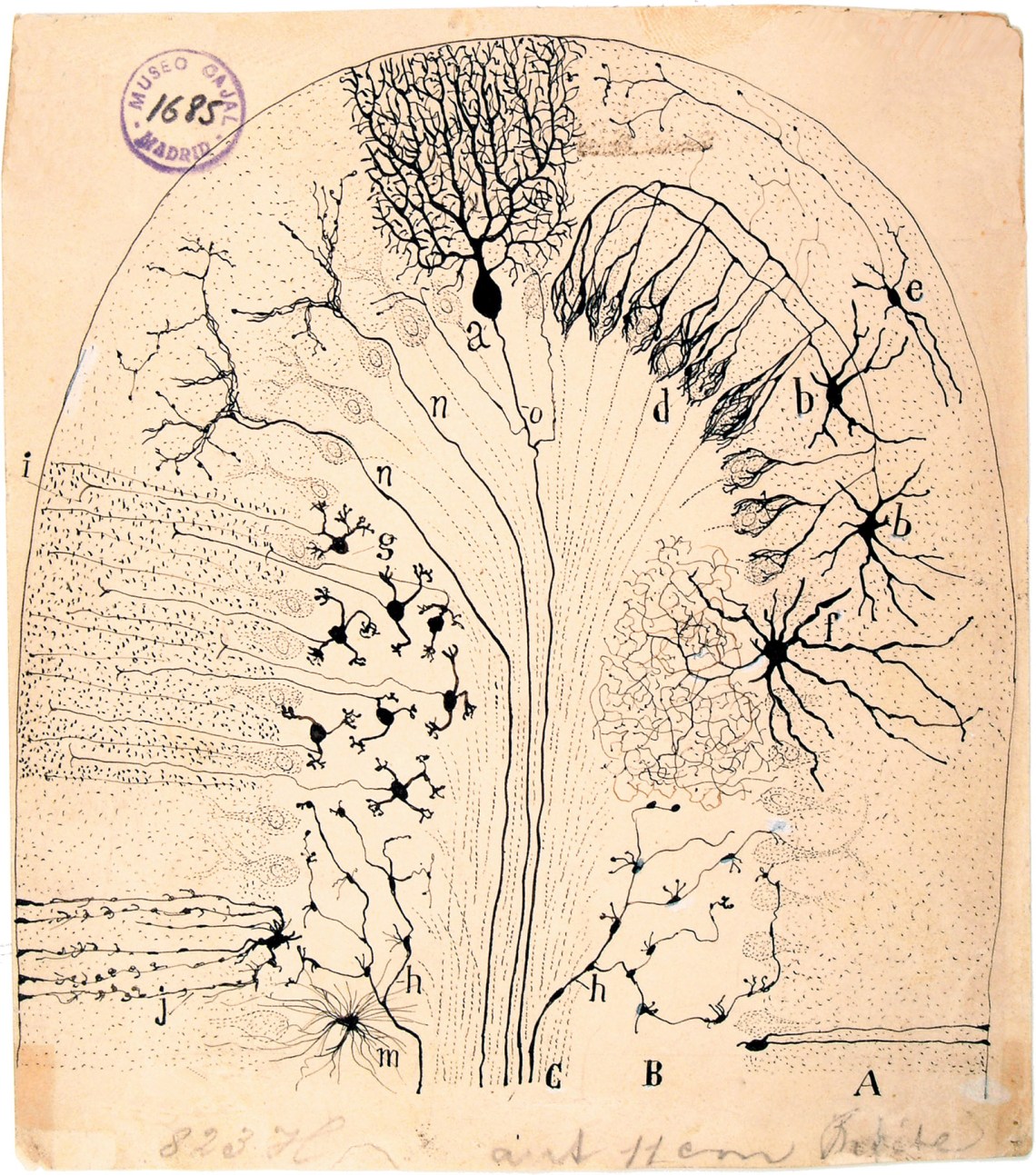

Instituto de Neurobiología Ramón y Cajal, Madrid

Diagram of the cerebellum by Santiago Ramón y Cajal, 1894. Cajal’s drawings are collected in The Beautiful Brain, edited by Eric A. Newman, Alfonso Araque, and Janet M. Dubinsky and published by Abrams in 2017. For more on Cajal, see Gavin Francis’s essay ‘In the Flower Garden of the Brain’ at nybooks.com/cajal.

Twenty-five years ago, at the end of my two-month rotation in psychiatry, Edinburgh Medical School delivered the results of our student assessments by posting three lists of names on a departmental notice board. It was a nerve-racking experience for all of us, since we would learn whether we had passed or failed in full view of our peers.

A crowd gathered around the board, and one by one my classmates found their names on the pass list (or, even better, on the shorter list of those who passed “with distinction”), then cheered and went off to celebrate at the bar. But as I strained toward the lists I felt a ball of tension in my gut; I was a good student, and had excelled in a few specialties so far, but I couldn’t see my name. Then, there it was, unmistakably, on the dreaded third list of those who had failed. I felt a tap on my shoulder; someone was pointing up at the list of distinguished students, and there it was again. The test had combined a written exam and an appraisal of clinical competence; it seemed one of my assessing psychiatrists had deemed me a star student, the other a failure. I reported upstairs for what turned out to be an awkward interview, the outcome of which was that both assessments had been wrong: I was an average student after all, neither struggling nor distinguished. I was left wondering if I’d make a good psychiatrist, an abysmal one, or both.

That experience was unique in my medical training: in no other specialty was there such confusion over what separates success from failure. If the psychiatrists couldn’t agree on the assessment of student performance, I wondered how much they’d agree on their assessments of patients. A thorny subject, perennially controversial, because mental health diagnoses have such power—to save lives, or ruin them. It’s a difficulty that Anne Harrington, a professor of the history of science at Harvard, tackles masterfully in Mind Fixers: Psychiatry’s Troubled Search for the Biology of Mental Illness, which she divides into a history of how psychiatry has approached, characterized, treated, and maltreated mental illness since the 1850s (“Doctors’ Stories”); a section on depression, schizophrenia, and manic depression as the three most illustrative disorders (“Disease Stories”); and finally suggestions for the future of psychiatry, with an argument that a fundamental reappraisal is needed (“Unfinished Stories”).

I work now as a primary care physician in Edinburgh; approximately a third of my consultations concern mental health, and every day brings first-hand examples of just how variable the manifestations of mental illness can be, and how mutable its diagnostic labels. There are patients of mine who’ve had four or five different diagnoses since their career in the care of psychiatric services began, even though their core symptoms (and distress) have hardly changed. Most people who have lived a few decades with severe mental illness have seen their own label evolve, simply because the way their symptoms are characterized by psychiatrists has evolved. This is true as much for “major” psychiatric conditions, such as paranoid psychosis and psychotic depression, as it is for conditions habitually thought of as existing along a spectrum that reaches normality (whatever that is), such as autism or attention-deficit disorder.

Psychiatrists save lives. For some of my patients, diagnoses are received with gratitude, either because they give substance to a long-held sense of difference, or because they offer access to a supportive community of others with similar experiences. Sometimes they are welcomed as keys to unlock educational support. But for other patients, the labels are abhorred as stigmatizing, symbolic of the medical profession’s intolerance of human diversity. As a physician, my job is the relief of human suffering (patient means sufferer in Latin); in psychiatry, that mandate rarely feels straightforward.

The first attempt in the US to systematize categories of mental illness came in 1918, with the Statistical Manual for the Use of Institutions for the Insane, which was designed to help hospitals and asylums keep records and file censuses for government statistics. The categories were crude and often pejorative, so when the American Psychiatric Association published its first Diagnostic and Statistical Manual (DSM) in 1952, the more balanced, clinical language constituted a step forward. The DSM divided mental illness into conditions arising as a consequence of brain damage (“impairment of brain tissue”) and those considered “psychogenic” in nature. Freudian perspectives on mental illness were then in ascendance, and psychiatric symptoms (such as delusions, hallucinations, depressed or elevated mood) were not considered important as manifestations of a particular diagnosis. What was important were the unconscious conflicts and psychodynamic processes behind each person’s symptoms. The DSM-II, published in 1968, elaborated on this view of mental illness without questioning its fundamentals, adding a section on child and adolescent conditions, and allowing for the possibility of a patient receiving more than one diagnosis (“co-morbidities”).


The DSM-III, released in 1980, aimed to tackle what many doctors had come to see as the fuzzy inutility of psychiatric diagnosis by introducing a checklist codification of mental symptoms. The authors of the manual were optimistic that the decade ahead would transform psychiatry and that, through the use of the diagnostic grids, doctors would be able to discern reliable biological markers of psychiatric disorder. The hope was that if patients could be grouped more easily into categories, like with like, then each group could be studied for physical similarities. This became known as the “biological revolution” in psychiatry. Diagnostic reliability through checklists would lead to radical improvements in the understanding of different conditions and how to treat them.

Ten years later, however, not a great deal of progress had been made. Harrington describes a 1990 psychiatric conference held in Worcester, Massachusetts, at which a speaker said he was going to reveal, with his next slide, a list of established facts about one of the most life-changing diagnoses: schizophrenia. “The screen then went blank,” writes Harrington, “prompting wry laughter to ripple through the auditorium.”

The DSM of 1952 recognized 106 diagnoses; 1968’s DSM-II had 182. The shift to checklists in 1980 swelled that figure to 285, and with the DSM-IV, published in 1994, it edged a little higher, to 307. “Were all these new categories really novel discoveries driven by rigorous scientific inquiry?” Harrington asks. “Were there really so many different ways to be mentally ill?” One of the champions of the new era of which DSM-III was emblematic was the neuropsychiatrist Nancy Andreasen; by 2007 her enthusiasm for a strictly biological approach to psychiatry had cooled, and she was lamenting a decline in “careful clinical evaluation” and the “death of phenomenology in the United States”—an assessment echoed by Oliver Sacks, who wrote of the DSM:

Richness and detail and phenomenological openness have disappeared, and one finds instead meager notes that give no real picture of the patient or his world but reduce him and his disease to a list of “major” and “minor” diagnostic criteria.

By attempting to rationalize diagnosis, Harrington explains, psychiatry inadvertently impoverished and sidelined itself. By the time of DSM-5’s launch in 2013 (there was a conscious switch to Arabic numerals), the then director of the National Institute of Mental Health, Thomas Insel, had distanced himself from the manual and realigned the agency’s research away from its categories. “Biology,” he told The New York Times that spring, “never read that book.” More than three decades after the attempt to put psychiatry on a firm biological foundation, Insel complained that its diagnostic categories were still based solely on “consensus about clusters of clinical symptoms, not any objective laboratory measure.” This was a crude, embarrassing approach, long outdated in the rest of medicine, “equivalent to creating diagnostic systems based on the nature of chest pain or the quality of fever.”

With the first part of her book, “Doctors’ Stories,” Harrington evaluates some of the many ways psychiatrists sought, through the nineteenth and twentieth centuries, to portray themselves as engaged in a biological science. The keepers of asylums too often found their patients more interesting dead than alive: only as cadavers could their brains be removed for study.

The German physician Emil Kraepelin, who is often cited as a founder of modern psychiatry, wanted to move his nascent discipline away from prison-asylums, where physicians were resigned to containing their patients rather than curing them, and toward a more compassionate, nuanced appreciation of each patient’s inner world. He identified two kinds of psychosis (defined broadly as mental illness in which someone loses touch with reality, often suffering delusions, beliefs that are demonstrably untrue, or hallucinations): dementia praecox (“precocious mind-loss”) and manic-depressive insanity (what we’d now call bipolar disorder). “In the acute phase of both diseases, patients manifested states of agitated delirium that were sometimes virtually indistinguishable from each other,” writes Harrington. “The most important difference between the two disorders lay in their different prognoses”: it was presumed that those with dementia praecox would never get better, while those with manic depression might improve.

The prevailing ideas were changing: an emphasis on finding organic lesions was already ceding to a more psychological approach. In 1892 the Association of Medical Superintendents of American Institutions for the Insane changed its name to the American Medico-Psychological Association; members began to call themselves not “superintendents” but “alienists.” In 1921 the organization renamed itself the American Psychiatric Association.


In Switzerland in 1874 a seventeen-year-old named Eugen Bleuler was disgusted by the dismissive attitude physicians took to his sister’s catatonic psychosis, and he decided to become a psychiatrist; in 1898 he was appointed the director of the Burghölzli hospital, near Zurich. A senior colleague of Carl Jung, Bleuler “adopted an unusually pastoral attitude toward his patients, spending a great deal of time on the wards talking with them and becoming familiar with their delusions and idiosyncrasies,” Harrington writes. Bleuler was an admirer of Freud’s work, and in 1911 he proposed the term “schizophrenia,” meaning “split mind,” to supersede Kraepelin’s “dementia praecox.”

He didn’t mean a split personality; he wanted to characterize the illness as a splitting of reliable associations among thoughts, feelings, and perceptions. The word caught on: by the 1920s dementia praecox was considered archaic. But an echo of the old orthodoxy, that mental illness arose from organic pathology, persisted, bolstered by the discovery that “general paralysis of the insane” (GPI) had an organic cause: syphilis. In 1914 the Harvard pathologist Elmer Southard wrote of the two evolving camps within psychiatry: the “brain spot men” staring down their microscopes versus the “mind twist men” who saw mental illness as purely experiential.

In 1924 a new Virginia law made it legal to sterilize people deemed mentally retarded, but the courts still had the final say; it wasn’t until 1927, after the US Supreme Court upheld the law in Buck v. Bell, that a Virginian woman named Carrie Buck became the first person sterilized under it. Over 65,000 men and women nationwide would eventually be sterilized under such laws. In 1933 the German government would cite Buck v. Bell in defending its own Law for the Prevention of Hereditarily Diseased Offspring. The American Journal of Psychiatry published two opinion pieces side by side in 1942, arguing for and against the euthanasia of “feebleminded” children. Dr. Foster Kennedy argued in favor, while Dr. Leo Kanner (later famous for his pioneering description of autism, and for a theory, which he eventually retracted, that cold-hearted “refrigerator mothers” were to blame for it) defended the social utility of a “mentally deficient” man who had collected garbage in his district for many years and who led a happy, productive life. “Shall we psychiatrists take our cue from the Nazi Gestapo?” Kanner asked. Although he defended the sterilization of those “unfit to rear children,” he considered euthanasia a step too far.

Harrington shows just how close the United States came to implementing the euthanasia programs of fascist Germany: in an unsigned editorial in July 1942 the journal editors came down on the side of promoting euthanasia in some cases, and implied that parents who expressed resistance to the idea must be suffering from a morbid state with origins in “obligation or guilt.” For Harrington, these editors saw one of the tasks of psychiatry as helping parents “realize that they did not truly love their severely disabled children after all.”

The 1920s and 1930s had brought the inception of a series of “shock” treatments, particularly for schizophrenia: patients were given malaria in the hope that recurrent, exhausting fevers would wring madness from their minds, and the discovery of insulin meant that patients could be “shocked” with hypoglycemic comas. (Harrington explains that it was the care and attention these patients received, not the coma, that caused some of them to improve.) In Hungary, Dr. Ladislas Meduna began inducing cataclysmic epileptic seizures with the drug Metrazol, and his method spread: in 1942 a paper in the American Journal of Psychiatry reported that forty-six of two hundred Metrazol-treated patients had suffered spinal fractures as a result of intense seizures. In Mussolini’s Italy a neurologist named Ugo Cerletti switched to electricity after observing how, in an abattoir, the electrical stunning of pigs often induced convulsions.

After being initially lauded as a treatment for psychosis, electroconvulsive therapy (ECT) came to be recommended mainly for depression—the energy of the seizure was presumed to release some “vitalizing” substance in the body that would help lift the spirits. Perhaps the most extreme manifestation of the 1930s biological approach to psychiatry was lobotomy, an incision through the prefrontal lobes of the brain, invented by António Egas Moniz, a Portuguese neurologist, and popularized across the United States by Walter Freeman, a man with negligible surgical training who notoriously used ice-picks to lobotomize his patients through their eye sockets.

Freud’s psychoanalytic approach was still gaining adherents. Its insights came to dominate Western psychiatry, as Europe and North America began to address the aftermath of war. Freud’s method prioritized the patient’s childhood experiences, unconscious conflicts, and a tripartite schema of the psyche (composed of id, ego, and superego) over a psychiatry more concerned with the patient’s direct contemporary experience of mental symptoms. In 1943 William Menninger, a psychoanalyst, was appointed director of psychiatry for the US Army.

After the war, analysts like Anna Freud (Sigmund’s daughter) and Donald Winnicott, caring for evacuee children and orphans, noticed the frequency with which psychopathology was rooted in “maternal deprivation.” A group of female psychoanalysts including Frieda Fromm-Reichmann and Marguerite Sechehaye advocated a radical re-mothering of their adult patients to redress this presumed deprivation: sitting with patients in their urine, accepting gifts of feces, even (in the case of Sechehaye) offering food while holding the patient closely, “like a mother feeding her baby,” in Sechehaye’s words.

By the 1950s, mothers more generally were being blamed for mental illness; Harrington writes poignantly of the many ways they were held culpable for their children’s conditions. If too “seductive and smothering,” they would render their sons homosexual (homosexuality was a “psychopathic personality disorder” and then a “sexual deviance,” according to successive iterations of the DSM); if too cold and distant, they would cause autism. Yet another kind of mother, if excessively permissive, induced delinquency. Single African-American mothers were accused of dominating their households in a way that emasculated their sons so that they couldn’t, as Harrington summarizes, “develop the masculine confidence they needed to survive in a world where the odds were stacked against them.”

By the mid-1960s this era of mother-blaming was passing. Lobotomy was going out of fashion too, in part because broadening recognition of Nazi atrocities encouraged restraint, in part because new drugs did the same job with less finality or brutality. The “major tranquilizer” chlorpromazine (Thorazine), once known as the “chemical lobotomy,” was approved in 1954. It was derived from a class of antihistamines used in the dye industry (they’re bright blue in water), and it was initially tested as a possible preventative for surgical shock—patients given the drug before operations became sluggish and indifferent.

It passed swiftly from the surgical to the psychiatric wards. Psychoanalysts accepted the drug into their own schemas of mental illness by describing it as dampening “affective drive” and leveling “psychic energy.” Equanil, Miltown, and Valium (the “minor” tranquilizers) quickly followed, aggressively marketed first as drugs for “overworked” businessmen, then for stressed, anxious housewives. “The neurotic mother and wife who in the 1950s had been blamed for causing mental illness in her children had, by the 1960s, herself become the patient,” Harrington observes.

New drugs also made it easier to get patients out of long-stay hospitals. Litigation accelerated deinstitutionalization: patients and their families became aware of their right to receive “adequate treatment,” which hospitals were blatantly incapable of providing. “Hospitals would have to comply or face huge fines,” writes Harrington. “Unfortunately, virtually all hospitals lacked sufficient funds to comply, so what they did instead was release more patients.” Against this backdrop, the antipsychiatry movement surged. In 1961 the sociologist Erving Goffman compared psychiatric hospitals to concentration camps; at the same time rebel psychiatrists like R.D. Laing and Thomas Szasz insisted that mental illness itself was a social construction with no empirical reality.

For the psychoanalysts, diagnosis was less important than the psychological processes underpinning each patient’s unique set of symptoms. Harrington reports studies from 1949 and 1962 (by P. Ash and A. Beck, respectively) that found only about 30 percent concordance between the diagnosis reached by two psychiatrists assessing the same patient. That may not have mattered to the doctors, but about halfway through her book Harrington cites a study that should matter to every medical student and every practicing physician who has anything to do with mental illness (i.e., all of them): David Rosenhan’s “On Being Sane in Insane Places,” published in Science in 1973. The paper has had its detractors, but in an experiment of stunning elegance Rosenhan, a Stanford psychologist, exploded notions of the reliability and utility of psychiatric diagnosis, and ignited a controversy that still burns fiercely today.

Eight researchers, including Rosenhan, presented themselves at twelve psychiatric institutions, complaining that they were hearing indistinct voices saying “empty,” “thud,” or “hollow,” words chosen because they had not previously been reported in the literature. All said that they were undistressed by their “symptoms,” but all were admitted. “Immediately upon admission to the psychiatric ward,” reported Rosenhan, “the pseudopatient ceased simulating any symptoms of abnormality.” Eleven of the twelve admissions resulted in a diagnosis of schizophrenia; the one exception, at an expensive private hospital, produced what was then considered a more upmarket diagnosis: manic-depressive psychosis. All asked to be discharged as soon as they arrived on the ward, professing their symptoms gone, but their inpatient stays ranged from seven to fifty-two days (nineteen days on average). When eventually discharged, the supposedly “schizophrenic” patients were told that their diagnoses were confirmed but that they were now “in remission.” (Aware of how sticky, consequential, and pejorative these labels can be, all had used pseudonyms.)

Rosenhan’s pseudopatients took notes throughout their hospital stays, recording clinician and attendant behavior, and clocking the time staff spent with patients. No clinicians asked to see these notes, or expressed any interest in them. Among the many scorching insights of the study was that the more elevated a clinician was within the hospital hierarchy, the less time he or she spent with patients. Abuse of patients in full view of other patients was routine, but stopped “abruptly” in the presence of other staff. (“Staff are credible witnesses,” Rosenhan wrote. “Patients are not.”) Rosenhan also concluded that fellow patients were better judges of sanity than clinicians. (“You’re not crazy,” he quotes one fellow patient as saying. “You’re a journalist or a professor. You’re checking up on the hospital.”)

When Rosenhan’s findings became public, one teaching hospital “doubted that such an error could occur” and asked Rosenhan to send at least one pseudopatient for assessment at a time of his choosing over the following three months. During that period forty-one (of 193 total) admissions “were alleged, with high confidence, to be pseudopatients by at least one member of the staff.” In fact Rosenhan sent no one at all.

“The view has grown,” Rosenhan wrote in the introduction to his paper,

that psychological categorization of mental illness is useless at best and downright harmful, misleading, and pejorative at worst. Psychiatric diagnoses, in this view, are in the minds of observers and are not valid summaries of characteristics displayed by the observed.

His damning conclusion was that “it is clear that we cannot distinguish the sane from the insane in psychiatric hospitals.”

It’s been thirty years since the conference in Worcester at which a speaker showed a blank slide to reflect the established facts about schizophrenia, and the science of what schizophrenia is—what a medic might call its pathophysiology—hasn’t budged an inch. An elegant and valuable recent contribution to this discussion is This Book Will Change Your Mind About Mental Health by Nathan Filer, a former psychiatric nurse in the southwest of England. Filer writes about a series of people and families touched by schizophrenia diagnoses. He uses their stories to explore the nature of delusions and hallucinations, review the latest research into their causes, and describe how the diagnosis is reached. Like Harrington, he recounts the haphazard manner in which treatments have been discovered, perpetrated, and discarded, but he explores more deeply than Harrington the unfair stigma that people with such a diagnosis still experience. Along the way he questions the assumptions made by the psychiatric establishments of both the US and UK, and asks whether “schizophrenia” as a concept needs an overhaul.

Filer’s interest is in the fine details, the humanity and the specificity of each individual’s slide into a unique psychosis. “We’ve seen that the most distressing personal experiences—those that lead to the diagnosis of mental disorders—are as likely to be shaped by our relationships and the pressures of our environment as they are by any abnormalities in our biology,” he concludes. “Sometimes what needs ‘fixing’ mightn’t reside within the individual at all.”

Schizophrenia involves a pervasive, enduring disturbance in the reliability of perceptions; any influence it has on mood is usually considered secondary. Harrington devotes a chapter each to biological psychiatry’s approach to the two principal categories of mood disorder, depression and manic-depression. “Depression” today is arguably “melancholia” by another name; “melancholics” were once presumed to have an excess of black bile that depressed their spirits, but now genetics, neurotransmitters, and a constitutional inability to meet life’s challenges are all invoked—explanations theoretically open to being proved or disproved empirically, but which have been difficult to substantiate with hard evidence. The first mass-market antidepressant, amitriptyline (Elavil), was promoted by Merck as a safe alternative to ECT; like the formidably lucrative chlorpromazine, it was derived from a group of antihistamines. Within twenty years, the efficacy of its class of tricyclic antidepressants was taken so much for granted that physicians could be sued for not prescribing them.

Tricyclics had side effects and were dangerous in overdose. In the early 1970s Astra Pharmaceuticals, in Sweden, developed a new antidepressant designed to boost serotonin in the brain: zimelidine, sold as Zelmid. It was approved for use in Europe in 1981. But recipients began to report unpleasant, flu-like side effects, and in 1983 it was withdrawn.

Between 1972 and 1975 Eli Lilly had also been developing a drug of this kind (the class would later be called “selective serotonin reuptake inhibitors,” or SSRIs), which it named fluoxetine. It was only after Zelmid’s withdrawal that fluoxetine was released for use, in 1987, under the name Prozac. One of Prozac’s advantages over the older tricyclic drugs was simply that patients seemed more likely to lose weight than to gain it. Sales soared. In 1993 Peter Kramer’s Listening to Prozac spent three months on the best-seller list. Four years later, in 1997, the US Food and Drug Administration eased its rules on broadcast advertising of prescription drugs, and direct advertising to patients encouraged the unsubstantiated idea that low mood is simply a correctable chemical imbalance (a position not too different from the medieval idea of humors). The age of “cosmetic psychopharmacology” (Kramer’s coinage) had arrived.

The checklist approach to diagnosis introduced by the DSM-III, and the increasing use of questionnaires like the Hamilton Depression Scale, tended to promote a diagnosis of depression; since 1997 that tendency has only ballooned. Harrington offers a cogent summary of the ways pharmaceutical companies publicize a diagnosis first, then market an old drug as a new cure for it. Examples include Eli Lilly’s “Welcome Back” magazine campaign for Prozac, and Pfizer’s TV campaign for Zoloft, which characterized the brain as a kind of cake that needed the right balance of chemicals in order to be successful.

Kraepelin had wondered as early as 1899 if swings from mania to melancholy (“circular insanity” or “insanity of double form”) were manifestations of a “single morbid process.” If so, it was unusually resistant to treatment: shock treatments and tranquilizers made no difference to either the severity or the frequency of the swings. In 1949 John Cade, a psychiatrist in Melbourne, wondered if mania might have a detectable chemical signature. He tried injecting salts from the urine of manic patients into guinea pigs: they died of convulsions. He was advised to combine patients’ uric acid with lithium, to make it soluble. “The normally skittish animals became lethargic and placid,” Harrington writes. “When placed on their backs, instead of frantically trying to right themselves, they just lay there, apparently content.” Lithium itself was not new to medicine: it was used as a tonic for nerves in the nineteenth century, as a constituent in Vichy and Perrier mineral waters, and as an additive in 7 Up as late as 1950.

In 1954 a drug trial in Denmark randomly allocated patients to lithium or a placebo and found strong evidence for its benefits. Lithium in overdose can permanently damage kidney and thyroid function, and its use requires close monitoring: when the study followed up with participants to check for ill effects, a startling eight of the thirty-eight patients were discovered to be sourcing their own lithium against medical advice. They said it helped not just in the acute phase of manic psychosis but also in preventing relapse into mania. A study in 1967 by the same Danish team followed almost ninety Danish women with manic depression. Untreated, they averaged a relapse every eight months; on lithium, relapses occurred “only once every 60–85 months and were of notably shorter duration.” As a simple salt of a naturally occurring element, lithium couldn’t, of course, be patented; Harrington is withering in her discussion of how slow the US market was to take up such an unprofitable drug.

The DSM-IV divided recurrent swings of mania and depression into either Bipolar I or Bipolar II: the former manifesting itself as more florid psychoses, and the latter as milder episodes of alternating depression and elevated mood (“bipolar lite”). “But if bipolar disorder could have two subtypes, then why not more—maybe even a lot more?” Harrington asks. Since the 1970s Hagop Akiskal, a psychiatrist in Tennessee, has been insisting that bipolar disorder is not one condition but many, across a broad spectrum of both severity and character.

This Book Will Change Your Mind About Mental Health is explicit about how devastating severe psychotic illness can be, and honest about how little is known of its neurobiology. Genetics are important, as is fetal neurovulnerability, but adverse childhood experiences and a lack of social support seem just as fundamental. I’ve seen in my own practice how the social restrictions of the Covid-19 pandemic seem to have set off, or revealed, paranoid psychosis in people who’ve never suffered mental illness before, and case reports describing the same phenomenon are beginning to be published in Spain and the US. Among her conclusions to Mind Fixers, Harrington quotes the social science finding that many psychiatric patients have a better response to being provided with “their own apartment and/or access to supportive communities, than being given a [prescription] for a new or stronger antipsychotic.”

Some might consider acting on this finding radical (dependent as it is on excellent insurance or a robust welfare state), but in my own family practice I look after two such “sheltered housing” complexes for people with chronic mental illness. The arrangement works beautifully: when given social support and primary health care, these patients rarely need the attention of psychiatrists at all. I see the patients more or less frequently, depending on their symptoms, and for those in a spiral of worsening distress, a psychiatrist is always available by phone.

Between us, the psychiatrist and I prescribe the same old drugs (major and minor tranquilizers, occasional antidepressants) to deal with agitation, hallucinations, paranoia, depression, and mania. We pay close attention to the patients and to what the staff at the supportive housing complexes are saying, and zero attention to DSM categories. (Those categories don’t help in the alleviation of my patients’ mental distress; medication and supportive care do.) Once you have a health care system that offers care based on distress and need rather than diagnostic category, and from which oppressive insurance requirements have been removed, it’s my experience that both patient and physician benefit, and the therapeutic relationship flourishes.

Harrington knows that an approach like this challenges a strictly biological interpretation of severe mental illness (no one expects your liver cancer or osteonecrosis to improve if someone gives you a job or a house), but mental disorders are different, centered on perceptual and social worlds. To treat them as purely physical is to misunderstand their nature. The final pages of Harrington’s book summarize the story so far: asylums gave way to neuroanatomy, which ceded to somatic shock therapies, psychoanalysis, and the “biological revolution” in turn. Psychiatry is again in crisis, but “times of crisis are times of opportunity.”

As a medical student I was puzzled by how my psychiatry tutors could struggle to distinguish a good student from a failing one, but I see that ambiguity now as magnanimous in its way, demonstrating a certain liberation from orthodoxy. In any discipline an admission of uncertainty sanctions new ways of thinking. With creativity and humanity, the next generation of psychiatrists should be free to build new models of mental distress in all its manifestations, and new approaches to treatment.