From 1946 until 1964 the conservative politicians who dominated Congress thought that the federal government might be capable of transforming American society, but they saw this as a danger to be avoided at almost any cost. For the following twelve years the liberals who dominated Congress thought that the federal government should try to cure almost every ill Americans were heir to. After 1976 the political climate in Congress changed again. The idea that government action could solve—or even ameliorate—social problems became unfashionable, and federal spending was increasingly seen as waste. As a result, federal social-welfare spending, which had grown from 5 percent of the nation’s gross national product in 1964 to 11 percent in 1976, has remained stuck at 11 percent since 1976.

Conservative politicians and writers are now trying to shift the prevailing view again, by arguing that federal programs are not just ineffective but positively harmful. The “problem,” in this emerging view, is not only that federal programs cost a great deal of money that the citizenry would rather spend on video recorders and Caribbean vacations, but that such programs hurt the very people they are intended to help.

Losing Ground, by Charles Murray, is the most persuasive statement so far of this new variation on Social Darwinism. Murray is a former journalist who has also done contract research for the government and is now associated with the Manhattan Institute, which raises corporate money to support conservative authors such as George Gilder and Thomas Sowell. His name has been invoked repeatedly in Washington’s current debates over the budget—not because he has provided new evidence on the effects of particular government programs, but because he is widely presumed to have proven that federal social policy as a whole made the poor worse off over the past twenty years. Murray’s popularity is easy to understand. He writes clearly and eloquently. He cites many statistics, and he makes his statistics seem easy to understand. Most important of all, his argument provides moral legitimacy for budget cuts that many politicians want to make in order to reduce the federal deficit.

Murray summarizes this argument as follows:

The complex story we shall unravel comes down to this:

Basic indicators of well-being took a turn for the worse in the 1960s, most consistently and most drastically for the poor. In some cases, earlier progress slowed; in other cases mild deterioration accelerated; in a few instances advance turned into retreat. The trendlines on many of the indicators are—literally—unbelievable to people who do not make a profession of following them.

The question is why….

The easy hypotheses—the economy, changes in demographics, the effects of Vietnam or Watergate or racism—fail as explanations. As often as not, taking them into account only increases the mystery.

Nor does the explanation lie in idiosyncratic failures of craft. It is not just that we sometimes administered good programs improperly, or that sound concepts sometimes were converted to operations incorrectly. It is not that a specific program, or a specific court ruling or act of Congress, was especially destructive. The error was strategic….

The most compelling explanation for the marked shift in the fortunes of the poor is that they continued to respond, as they always had, to the world as they found it, but that we—meaning the not-poor and undisadvantaged—had changed the rules of their world. Not of our world, just of theirs. The first effect of the new rules was to make it profitable for the poor to behave in the short term in ways that were destructive in the long term. Their second effect was to mask these long-term losses—to subsidize irretrievable mistakes. We tried to provide more for the poor and produced more poor instead. We tried to remove the barriers to escape from poverty, and inadvertently built a trap.

In appraising this argument, we must, I believe, draw a sharp distinction between the material condition of the poor and their social, cultural, and moral condition. If we look at material conditions we find that, Murray notwithstanding, the position of poor people showed marked improvement after 1965, which is the year Murray selects as his “turning point.” If we look at social, cultural, and moral indicators, the picture is far less encouraging. But since most federal programs are aimed at improving the material conditions of life, it is best to start with them.

1.

In making his case that “basic social indicators took a turn for the worse in the 1960s,” Murray begins with the official poverty rate. The income level, or “threshold,” that officially qualifies a family as poor varies according to the number and age of its members and rises every year with the Consumer Price Index, so in theory it represents the same level of material comfort year after year.1 If a family’s total money income is below its poverty threshold, all its members are counted as poor. The official definition of the poverty level is to a large extent arbitrary. When the Gallup survey asked how much money a couple with two children needed to “get along in this community,” for example, the typical respondent said $15,000 in 1983. The “poverty” threshold for such a family was only $10,000 in 1983. But few would say that people with incomes below the poverty threshold were not poor.


Table 1 shows that the official poverty rate fell from 30 to 22 percent of the population during the 1950s and from 22 to 13 percent during the 1960s.

This hardly seems to fit Murray’s argument that social indicators took a turn for the worse in the 1960s. The official rate was still 13 percent in 1980, but even this was not exactly a “turn for the worse.” Furthermore, the official poverty statistics underestimate actual progress since 1965. To begin with, the Consumer Price Index (CPI), which the Census Bureau uses to correct the poverty thresholds for inflation, exaggerated the amount of inflation between 1965 and 1980 by about 13 percent, because of a flaw in the way it measured housing costs. The official poverty line therefore represented a higher standard of living in 1980 than in 1965. If we use the Personal Consumption Expenditure (PCE) deflator from the National Income Accounts to adjust the poverty line for inflation, Table 1 shows that poverty fell from 19 percent in 1965 to 13 percent in 1980.

A more fundamental problem with the official poverty statistics is that they do not take account of changes in families’ need for money. They make no adjustment for the fact that Medicare and Medicaid now provide many families with low-cost medical care, for example, or for the fact that food stamps have reduced families’ need for cash, or for the fact that more families now live in government-subsidized housing.

Experts on poverty have devised a number of different methods for estimating the value of noncash benefits. Most conservatives prefer the “market value” approach, which values noncash benefits at what it would cost to buy them on the open market and adds this amount to recipients’ incomes. To see what this implies, consider Mrs. Smith, an elderly widow living alone in Indiana, who is covered by both Medicare and Medicaid. Private insurance comparable to Medicare–Medicaid would have cost Mrs. Smith $4000 in 1979.2 To get Mrs. Smith’s “true” income, advocates of the “market value” approach simply add $4000 to her money income. Since, by the official standard, Mrs. Smith’s poverty threshold was only $3472 in 1979, the “market value” approach put her above the poverty line even if she had no cash income whatever. This is plainly absurd. Mrs. Smith cannot eat her Medicaid card, or trade it for a place to live, or even use it for transportation to her doctor’s office.

If we want a more realistic picture of how Medicare and Medicaid have affected Mrs. Smith’s life, we must answer two distinct questions: how they affected her ability to obtain medical care and whether they cut her medical bills.

When the Census Bureau values noncash benefits according to what they save the recipient, it finds that they lowered the 1980 poverty rate from 13 to 10 percent.3 The Census has not made comparable estimates for the 1950s or 1960s, but we can make informed guesses about 1950 and 1965. In 1965, Medicare and Medicaid did not exist, food stamps reached fewer than 2 percent of the poor, and there were 600,000 public housing units for 33 million poor people. In 1950 food stamps did not exist at all and there were 200,000 public housing units for 45 million poor people. Taken together, these programs could hardly have cut the poverty rate by more than one point in either year. On this assumption Table 1 estimates the “net” poverty rate at 10 percent in 1980, 18 percent in 1965, and 29 percent in 1950.4

It should go without saying that since the original poverty threshold was arbitrary, these statistics do not prove that only 10 percent of the population was “really” poor in 1980. The figure could be either higher or lower, depending on how you define poverty. The figures do, however, tell us that the proportion of the population living below our arbitrary threshold was almost twice as high in 1965 as in 1980, and almost three times as high in 1950 as in 1980. At least in economic terms, therefore, Murray is wrong: the poor made a lot of progress after 1965.

Furthermore, even these “net” poverty statistics underestimate the improvement in poor people’s material circumstances. Mrs. Smith’s $4000 Medicaid card may not lift her out of poverty, but it has dramatically improved her access to doctors and hospitals. In 1964, before Medicare and Medicaid, the middle classes typically saw doctors five times a year, whereas the poor saw doctors four times a year. By 1981, the middle classes were seeing doctors only four times a year, while the poor were seeing them almost six times a year. Since the poor still spent twice as many days in bed as the middle classes, and were three times as likely to describe their health as “fair” or “poor,” this redistribution of medical care still fell short of what one would expect if access depended solely on “need.”5 But it was a big step in the right direction.


Increased access to medical care seems to have improved poor people’s health. The most widely cited health measure is infant mortality. The United States does not collect statistics on infant mortality by parental income, but it does collect these statistics by race, and it seems reasonable to assume that differences between whites and blacks parallel those between rich and poor. Table 1 shows that the gap between blacks and whites, which had widened during the 1950s and narrowed only trivially during the early 1960s, narrowed very rapidly after 1965. Table 1 tells a similar story about overall life expectancy. Life expectancy rose more from 1965 to 1980 than it had from 1950 to 1965, and the disparity between whites and nonwhites narrowed faster after 1965 than before. Nobody knows how much Medicare and Medicaid contributed to these changes, but notwithstanding all the defects in the American medical care system, it is hard to believe they were not important.6

Despite all the improvements since 1965, however, Murray is right that, apart from health, the material condition of the poor improved faster from 1950 to 1965 than from 1965 to 1980. The most obvious explanation is that the economy turned sour after 1970. Inflation was rampant, output per worker increased very little, and unemployment began to edge upward. The real income of the median American family, which had risen by an average of 2.9 percent a year between 1950 and 1965, rose only 1.7 percent a year between 1965 and 1980. From 1950 to 1965 it took a 4.0 percent increase in median family income to lower net poverty by one percentage point. From 1965 to 1980, because of expanding social welfare spending, a 4.0 percent increase in median income lowered net poverty by 1.2 percentage points. Nonetheless, median income grew so much more slowly after 1965 that the decline in net poverty also slowed.7
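The growth figures above can be checked against the net poverty rates in Table 1 with a little arithmetic. The sketch below is a back-of-the-envelope illustration, not the author's own calculation: it assumes that each successive 4.0 percent rise in median income removes a fixed number of points of net poverty (1.0 before 1965, and 1.2 after 1965, reading that figure as percentage points), and that income growth compounds annually over each fifteen-year period. The function name `implied_poverty_drop` is hypothetical.

```python
import math

# Figures taken from the text; the compounding model is an assumption of this sketch.
GROWTH_1950_65 = 0.029          # median family income growth per year, 1950-65
GROWTH_1965_80 = 0.017          # median family income growth per year, 1965-80
YEARS = 15
STEP = 0.04                     # a 4.0 percent rise in median income...
POINTS_PER_STEP_1950_65 = 1.0   # ...cut net poverty by 1.0 point before 1965
POINTS_PER_STEP_1965_80 = 1.2   # ...and by 1.2 points after 1965 (assumed to mean points)


def implied_poverty_drop(annual_growth, years, points_per_step, step=STEP):
    """Percentage-point fall in net poverty implied by compounding income growth.

    Counts how many successive 4 percent income increases fit into the
    cumulative growth, then multiplies by the points each one removes.
    """
    cumulative = (1 + annual_growth) ** years
    steps = math.log(cumulative) / math.log(1 + step)
    return steps * points_per_step


drop_before = implied_poverty_drop(GROWTH_1950_65, YEARS, POINTS_PER_STEP_1950_65)
drop_after = implied_poverty_drop(GROWTH_1965_80, YEARS, POINTS_PER_STEP_1965_80)

print(f"1950-65: about {drop_before:.1f} points (Table 1: 29 -> 18, i.e. 11)")
print(f"1965-80: about {drop_after:.1f} points (Table 1: 18 -> 10, i.e. 8)")
```

Under these assumptions the implied declines (roughly 10.9 and 7.7 points) come close to the 11- and 8-point falls in Table 1's net poverty estimates, which is consistent with the claim that slower income growth, rather than weaker programs, slowed the decline after 1965.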

Murray rejects this argument. In his version of economic history the nation as a whole continued to prosper during the 1970s. The only problem, he claims, was that “the benefits of economic growth stopped trickling down to the poor.” He supports this version of economic history with statistics showing that gross national product grew by 3.2 percent a year during the 1970s compared to 2.7 percent a year between 1953 and 1959. This is true, but irrelevant. The economy grew during the 1950s because output per worker was growing. It grew during the 1970s because the labor force was growing. The growth of the labor force reflected a rapid rise in the number of families dividing up the nation’s economic output. GNP per household hardly grew at all after 1970 (see Table 1).8

But a question remains. As Table 2 shows, total government spending on “social welfare” programs grew from 11.2 to 18.7 percent of GNP between 1965 and 1980.

If all this money had been spent on the poor, poverty should have fallen to virtually zero. But “social welfare” spending is not mostly for the poor. It includes programs aimed primarily at the poor, like Medicaid and food stamps, but it also includes programs aimed primarily at the middle classes, like college loans and military pensions, and programs aimed at almost everybody, like medical research, public schools, and Social Security. In 1980, only a fifth of all “social welfare” spending was explicitly aimed at low-income families, and only a tenth was for programs providing cash, food, or housing to such families.9 Table 2 shows that cash, food, and housing for the poor grew from 1.0 percent of GNP in 1965 to 2.0 percent in 1980.10 This was a large increase in absolute terms. But redistributing an extra 1.0 percent of GNP could hardly be expected to reduce poverty to zero.
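The shares quoted in this paragraph can be cross-checked against one another. The sketch below simply applies the text's rough fractions ("a fifth" and "a tenth") to the 18.7 percent of GNP that Table 2 reports for total social welfare spending in 1980; the variable names are illustrative only, and the fractions are the author's approximations, so only rough agreement should be expected.

```python
# Figures as given in the text for 1980 (percent of GNP and rough shares).
SOCIAL_WELFARE_PCT_OF_GNP = 18.7
SHARE_FOR_LOW_INCOME = 1 / 5           # "only a fifth"
SHARE_FOR_CASH_FOOD_HOUSING = 1 / 10   # "only a tenth"

low_income_pct = SOCIAL_WELFARE_PCT_OF_GNP * SHARE_FOR_LOW_INCOME
cash_food_housing_pct = SOCIAL_WELFARE_PCT_OF_GNP * SHARE_FOR_CASH_FOOD_HOUSING

print(f"Aimed at low-income families: about {low_income_pct:.1f}% of GNP")
print(f"Cash, food, housing for the poor: about {cash_food_housing_pct:.1f}% of GNP")
```

The second figure, about 1.9 percent, sits close to the 2.0 percent of GNP that Table 2 reports for cash, food, and housing in 1980; the small gap reflects the rounding in "a tenth."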

A realistic assessment of what social policy accomplished between 1965 and 1980 must also take account of the fact that if all else had remained equal, demographic changes would have pushed the poverty rate up during these years, not down. Table 2 shows that both the number of people over sixty-five and the number living in families headed by women grew steadily from 1950 to 1980. We do not have poverty rates for these groups in 1950, but in 1960 the official rates were roughly 33 percent for the elderly and 45 percent for families headed by women. Since neither group includes many jobholders, economic growth does not move either group out of poverty very fast. From 1960 to 1965, for example, economic growth lowered official poverty from 22 to 17 percent for the nation as a whole, but only lowered it from 33 to 31 percent among the elderly and from 45 to 42 percent among households headed by women.
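The 1960 to 1965 figures in this paragraph make the point more sharply when expressed as the share of each group's starting poverty rate that growth eliminated. The sketch below uses only the official rates quoted in the text; `relative_decline` is a hypothetical helper name.

```python
# Official poverty rates (percent) quoted in the text for 1960 and 1965.
RATES = {
    "all persons": (22, 17),
    "elderly": (33, 31),
    "households headed by women": (45, 42),
}


def relative_decline(before, after):
    """Share of the starting poverty rate eliminated, as a fraction."""
    return (before - after) / before


for group, (r1960, r1965) in RATES.items():
    points = r1960 - r1965
    share = relative_decline(r1960, r1965)
    print(f"{group}: -{points} points, {share:.0%} of the 1960 rate")
```

Growth removed roughly 23 percent of poverty in the population as a whole, but only about 6 percent among the elderly and 7 percent among households headed by women, which is why a rising share of these two groups pushed against the overall decline.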

When poverty became a major social issue during the mid-1960s, government assistance to both these groups was quite modest. In 1965 the typical retired person got only $184 a month from Social Security in 1980 dollars, and a large minority got nothing whatever. Only about a quarter of all families headed by women got benefits from Aid to Families with Dependent Children (AFDC), and benefits for a family of four averaged only $388 a month in 1980 dollars (see Table 2).

From 1965 to 1970 the AFDC system changed drastically. Welfare offices had to drop a wide range of restrictive regulations that had kept many women and children off the rolls. It became much easier to combine AFDC with employment, and benefit levels rose appreciably. As a result of these changes something like half of all persons in families headed by women appear to have been receiving AFDC by 1970.11

But as the economy floundered in the 1970s, legislators began to draw an increasingly sharp distinction between the “deserving” and the “undeserving” poor. The “deserving” poor were those whom legislators judged incapable of working, namely the elderly and the disabled. Despite their growing numbers, they got more and more help. By 1980 the average Social Security retirement check bought 50 percent more than it had in 1970, and official poverty among the elderly had fallen from 25 to 16 percent (see Table 2). Taking noncash benefits into account, the net poverty rate was lower for those over sixty-five than for those under sixty-five in 1980.12

We have less precise data on the disabled, but we know their monthly benefits grew at the same rate as benefits for the elderly, and the percentage of the population receiving disability benefits also grew rapidly during the 1970s. Since we have no reason to suppose that the percentage of workers actually suffering from serious disabilities grew, it seems reasonable to suppose that a larger fraction of the disabled were getting benefits, and that poverty among the disabled fell as a result.

While legislators were increasingly generous to the “deserving” poor during the 1970s, they showed no such concern for the “undeserving” poor. The undeserving poor were those who “ought” to work but were not doing so. They were mainly single mothers and marginally employable men whose unemployment benefits had run out—or who had never been eligible in the first place. Men whose unemployment benefits have run out usually get no federal benefits. Most states offer them token “general assistance,” but it is seldom enough to live on. Data on this group is scanty.

Single mothers do better than unemployable men, because legislators are reluctant to let the children starve and cannot find a way of cutting benefits for mothers without cutting them for children as well. Nonetheless, as Table 2 shows, the purchasing power (in 1980 dollars) of AFDC benefits for a family of four rose from $388 a month in 1965 to $435 in 1970. In addition, Congress made food stamps available to all low-income families after 1971. These were worth another $150 to a typical family of four.13 By 1972, the AFDC–food stamp “package” for a family of four was worth about $577 a month. Benefits did not keep up with inflation after 1972, however, and by 1980 the AFDC–food stamp package was worth only $495 a month.14 As a result, the welfare rolls grew no faster than the population after 1975, though the number of families headed by women continued to increase.15

According to Murray, keeping women off the welfare rolls should have raised their incomes in the long run, since it should have pushed them into jobs where they would acquire the skills they need to better themselves. This did not happen. The official poverty rate in households headed by women remained essentially constant throughout the 1970s, at around 37 percent. Since the group at risk was growing, families headed by women accounted for a rising fraction of the poor (see Table 2).

Taken together, Tables 1 and 2 tell a story very different from the one Murray tells in Losing Ground. First, contrary to what Murray claims, “net” poverty declined almost as fast after 1965 as it had before. Second, the decline in poverty after 1965, unlike the decline before 1965, occurred despite unfavorable economic conditions, and depended to a great extent on government efforts to help the poor. Third, the groups that benefited from this “generous revolution,” as Murray rightly calls it, were precisely the groups that legislators hoped would benefit, notably the aged and the disabled. The groups that did not benefit were the ones that legislators did not especially want to help. Fourth, these improvements took place despite demographic changes that would ordinarily have made things worse. Given the difficulties, legislators should, I think, look back on their efforts to improve the material conditions of poor people’s lives with some pride.

2.

Up to this point I have treated demographic change as if it were entirely beyond human control, like the weather. According to Murray, however, what I have labeled “demographic change” was a predictable byproduct of government policy. Murray does not, it is true, address the role of government in keeping old people alive longer. But he does argue that changes in social policy, particularly the welfare system, were responsible for the increase in families headed by women after 1965. Since this argument recurs in all conservative attacks on the welfare system, and since scholarly research supports it in certain respects, it deserves a fair hearing.

Murray illustrates his argument with an imaginary Pennsylvania couple named Harold and Phyllis. They are young, poorly educated, and unmarried. Phyllis is also pregnant. The question is whether she should marry Harold. Murray first examines her situation in 1960. If Phyllis does not marry Harold, she can get the equivalent of about $70 a week in 1984 money from AFDC. She cannot supplement her welfare benefits by working, and on $70 a week she cannot live by herself. Nor can she live with Harold, since the welfare agency checks up on her living arrangements, and if she is living with a man she is no longer eligible for AFDC. Thus if Phyllis doesn’t marry Harold she will have to live with her parents or put her baby up for adoption. If Phyllis does marry Harold, and he gets a minimum-wage job, they will have the equivalent of $124 a week today. This isn’t much, but it is more than $70. Furthermore, if Phyllis is not on AFDC she may be able to work herself, particularly if her mother will help look after her baby. Unless Harold is a complete loser, Phyllis is likely to marry Harold—if he asks.

Now the scene shifts to 1970. The Supreme Court has struck down the “man in the house” rule, so Phyllis no longer has to choose between Harold and AFDC. She can have both. According to Murray, if Phyllis does not marry Harold and he does not acknowledge that he is the father of their child, Harold’s income will not “count” when the local welfare department decides whether Phyllis is eligible for AFDC, food stamps, and Medicaid. This means she can get paid to stay home with her child while Harold goes out to work, but only so long as she doesn’t marry Harold. Furthermore, the value of her welfare “package” is now roughly the same as what Harold or she could earn at a minimum-wage job. Remaining eligible for welfare is thus more important than it was in 1960, as well as being easier. From Phyllis’s viewpoint marrying Harold is now quite costly.

While the story of Harold and Phyllis makes persuasive reading, it is misleading in several respects. First, it is not quite true, as Murray claims, that “any money that Harold makes is added to their income without affecting her benefits as long as they remain unmarried.” If Phyllis is living with Harold, and Harold is helping to support her and her child, the law requires her to report Harold’s contributions when she fills out her “need assessment” form. What has changed since 1960 is not Phyllis’s legal obligation to report Harold’s contribution but the likelihood that she will be caught if she lies. Federal guidelines issued in 1965 now prohibit “midnight raids” to determine whether Phyllis is living with Harold. Furthermore, even if Phyllis concedes that she lives with Harold, she can deny that he pays the bills and the welfare department must then prove her a liar. Still, Phyllis must perjure herself, and there is always some chance she will be caught.

The second problem with the Harold and Phyllis story is that Murray’s account of Harold’s motives is not plausible. In 1960, according to Murray, Harold marries Phyllis and takes a job paying the minimum wage because he “has no choice.” But the Harolds of this world have always had a choice. Harold can announce that Phyllis is a slut and that the baby is not his. He can tell Phyllis to get an illegal abortion. He can join the army. Harold’s parents may insist that he do his duty by Phyllis, but then again they may blame her for leading him astray. If Harold cared only about improving his standard of living, as Murray suggests, he would not have married Phyllis in 1960.

According to Murray, Harold is less likely to marry Phyllis in 1970 than in 1960 because, with the demise of the “man in the house” rule and with higher benefits, Harold can get Phyllis to support him. But unless Harold works, Phyllis has no incentive either to marry him or to let him live off her meager check, even if she shares her bed with him occasionally. If Harold does work, and all he cares about is having money in his pocket, he is better off on his own than he is sharing his check with Phyllis and their baby. From an economic viewpoint, in short, Harold’s calculations are much the same in 1970 as in 1960. Marrying Phyllis will still lower his standard of living. The main thing that has changed since 1960 is that Harold’s friends and relatives are less likely to think he “ought” to marry Phyllis.

This brings us to the central difficulty in Murray’s story. Since Harold is unlikely to want to support Phyllis and their child, and since Phyllis is equally unlikely to want to support Harold, the usual outcome is that they go their separate ways. At this point Phyllis has three choices: get rid of the baby (through adoption or abortion), keep the baby and continue to live with her parents, or keep the baby and set up housekeeping on her own. If she keeps the baby she usually decides to stay with her parents. In 1975 three-quarters of all first-time unwed mothers lived with their parents during the first year after the birth of their baby. (No room for Harold here.) Indeed, half of all unmarried mothers under twenty-four lived with their parents in 1975—and this included divorced and separated mothers as well as those who had never been married.16

If Phyllis expects to go on living with her parents, she is not likely to worry much about how big her AFDC check will be. Phyllis has never had a child and she has never had any money. She is used to her mother’s paying the rent and putting food on the table. Like most children she is likely to assume that this arrangement can continue until she finds an arrangement she prefers. In the short run, having a child will allow her to leave school (if she has not done so already) without having to work. It will also mean changing a lot of diapers, but Phyllis may well expect her mother to help with that. Indeed, from Phyllis’s viewpoint having a child may look rather like having another little brother or sister. If it brings in some money, so much the better, but if she expects to live with her parents money is likely to be far less important to her than her parents’ attitude toward illegitimacy. That is the main thing that changed for her between 1960 and 1970.

Systematic efforts at assessing the impact of AFDC benefits on illegitimacy rates support my version of the Harold and Phyllis story rather than Murray’s. The level of a state’s AFDC benefits has no measurable effect on its rate of illegitimacy. In 1984, AFDC benefits for a family of four ranged from $120 a month in Mississippi to $676 a month in New York. David Ellwood and Mary Jo Bane recently completed a meticulous analysis of the way such variation affects illegitimate births.17 In general, states with high benefits have less illegitimacy than states with low ones, even after we adjust for differences in race, region, education, income, urbanization, and the like. This may be because high illegitimacy rates make legislators reluctant to raise welfare benefits.

To get around this difficulty, Ellwood and Bane asked whether a change in a state’s AFDC benefits led to a change in its illegitimacy rate. They found no consistent effect. Nor did high benefits widen the disparity in illegitimate births between women with a high probability of getting AFDC (teen-agers, nonwhites, high school dropouts) and women with a low probability of getting AFDC.

What about the fact that Phyllis can now live with Harold (or at least sleep with him) without losing her benefits? Doesn’t this discourage marriage and thus increase illegitimacy? Perhaps. But Table 2 shows that illegitimacy has risen at a steadily accelerating rate since 1950. There is no special “blip” in the late 1960s, when midnight raids stopped and the “man in the house” rule passed into history. Nor is there consistent evidence that illegitimacy increased faster among probable AFDC recipients than among women in general.

Murray’s explanation of the rise in illegitimacy thus seems to have at least three flaws. First, most mothers of illegitimate children initially live with their parents, not their lovers, so AFDC rules are not very relevant. Second, the trend in illegitimacy is not well correlated with the trend in AFDC benefits or with rule changes. Third, illegitimacy rose among movie stars and college graduates as well as welfare mothers. All this suggests that both the rise of illegitimacy and the liberalization of AFDC reflect broader changes in attitudes toward sex, law, and privacy, and that they had little direct effect on each other.

But while AFDC does not seem to affect the number of unwed mothers, as Murray claims it does, it does affect family arrangements in other ways. Ellwood and Bane found, for example, that benefit levels had a dramatic effect on the living arrangements of single mothers. If benefits are low, single mothers have trouble maintaining a separate household and are likely to live with their relatives—usually their parents. If benefits rise, single mothers are more likely to maintain their own households.

Higher AFDC benefits also appear to increase the divorce rate. Ellwood and Bane’s work suggests, for example, that if the typical state had paid a family of four only $180 a month in 1980 instead of $350, the number of divorced women would have fallen by a tenth. This might be partly because divorced women remarry more hastily in states with very low benefits. But if AFDC pays enough for a woman to live on, she is also more likely to leave her husband. The Seattle–Denver “income maintenance” experiments, which Murray discusses at length, found the same pattern.

The fact that high benefits lead to high divorce rates is obviously embarrassing for liberals, since most people view divorce as undesirable. But it has no bearing on Murray’s basic thesis, which is that changes in social policy after 1965 made it “profitable for the poor to behave in the short term in ways that are destructive in the long term.” If changes in the welfare system were encouraging teen-agers to quit school, have children, and not take steady jobs, as Murray contends, he would clearly be right about the long-term costs. But if changes in the welfare system have merely encouraged women who were unhappy in their marriages to divorce their husbands, or have discouraged divorced mothers from marrying lovers about whom they feel ambivalent, what makes Murray think this is “destructive in the long term”?

Are we to suppose that Phyllis is better off in the long run married to Harold if he drinks, or beats her, or molests their teen-age daughter? Surely Phyllis is a better judge of this than we are. Or are we to suppose that Phyllis’s children will be better off if she sticks with Harold? That depends on how good a father Harold is. The children may do better in a household with two parents, even if the parents are constantly at each other’s throats, but then again they may not. Certainly Murray offers no evidence that unhappy marriages are better for children than divorces, and I know of none.

Shorn of rhetoric, then, the “empirical” case against the welfare system comes to this. First, high AFDC benefits allow single mothers to set up their own households. Second, high AFDC benefits allow mothers to end bad marriages. Third, high benefits may make divorced mothers more cautious about remarrying. All these “costs” strike me as benefits.

Consider Harold and Phyllis again, but this time imagine that they married in 1960 and that it is now 1970. They have three children. Harold still has the dead-end job in a laundry that Murray describes him as having taken in 1960, and he has now taken both to drinking and to beating Phyllis. Harold still has two choices. He can leave Phyllis or he can stay. If he leaves, Phyllis can try to collect child support from him, but her chances of success are low. So Harold can do as he pleases.

Phyllis is not so fortunate. She is not the sort of person who can earn much more than the minimum wage, so she cannot support herself and three children without help. If she is lucky she can go to her parents. Otherwise, if she lives in a state with low benefits, she has two choices: stick with Harold or abandon her children. Since she has been taught to stick with her children, she has to stick with Harold. If she lives in a state with high benefits she has a third choice: she can leave Harold and take her children with her. In a sense, AFDC is the price we pay for Phyllis’s commitment to her children. At 0.6 percent of total US personal income, it does not seem a high price.

Giving Phyllis more choices has obvious political drawbacks. So long as Phyllis lives with Harold, her troubles are her own. We may shake our heads when we hear about them, but we can tell ourselves that all marriages have problems, and that that is the way of the world. If Phyllis leaves Harold—or Harold leaves Phyllis—and she comes to depend on AFDC, her problems become public instead of private. Now if she cannot pay the rent or does not feed her children milk it could be because her monthly check is too small, not because she doesn’t know or care about the benefits of milk or because Harold spends the money on drink. Taking collective responsibility for Phyllis’s problems is not a trivial price to pay for liberating her from Harold. Most of her problems will, after all, remain intractable. But our impulse to drive her back into Harold’s arms so that we no longer have to think about her is the kind of impulse decent people should resist.

3.

The idea that Phyllis will be the loser in the long run if society gives her more choices exemplifies a habit of mind that seems as common among conservatives as among liberals. First you figure out what kind of behavior is in society’s interest. Then you define such behavior as “good.” Then you argue that good behavior, while perhaps disagreeable in the short run, is in the long-run interest of those who engage in it. Every parent will recognize this ploy: my son should take out the garbage because it is in his long-run interest to learn good work habits, not because I don’t want to take it out or don’t want to live with a shirker. The conflict between individual interests and the common interest, between selfishness and unselfishness, is thus transformed into a conflict between short-run and long-run self-interest. Unfortunately, the argument is often false.

Early in Losing Ground, Murray calculates what he calls the “latent” poverty rate, that is, the percentage of people who fall below the poverty line when we ignore transfer payments from the government such as Social Security, AFDC, unemployment compensation, and military pensions. The “latent” poverty rate rose from 18 percent in 1968 to 22 percent in 1980. Murray calls this “the most damning” measure of policy failure, because “economic independence—standing on one’s own abilities and accomplishments—is of paramount importance in determining the quality of a family’s life.” This is a classic instance of wishful thinking. Murray wants people to work (or clip coupons) because such behavior keeps taxes low and maintains a public moral order of which both he and I approve, so he asserts that failure to work will undermine family life. He doesn’t try to prove this empirically; he says it is self-evident. (“Hardly anyone, from whatever part of the political spectrum, will disagree.”) But the claim is not only not self-evident; it is almost certainly wrong.

One major reason latent poverty increased after 1968 was that Social Security, SSI, food stamps, and private pensions allowed more old people to stop working. These programs also made it easier for old people to live on their own instead of moving in with younger relatives. Having come to depend on the government, old people suffer from latent poverty. But is there a shred of evidence that these changes undermined the quality of their family life? If so, why were the elderly so eager to trade their jobs for Social Security, and so reluctant to move in with their daughters-in-law?

Another reason latent poverty increased after 1968 was that more women and children came to depend on AFDC instead of on a man. According to Murray, a woman who depends on the government suffers from latent poverty, while a woman who depends on a man does not. But unless a woman can support herself and her children from her own earnings, she is always dependent on someone (“one man away from welfare”). Murray assumes that AFDC has a worse effect on family life than Harold. But that depends on Harold. Phyllis may not be very smart, but if she chooses AFDC over Harold, surely that is because she expects the choice to improve the quality of her family life, not undermine it. Even if, as Murray imagines, most AFDC recipients are really living in sin with men who help support them, what makes Murray think that the extra money these families get from AFDC makes their family life worse?

Murray’s conviction that getting checks from the government is always bad for people is complemented by his conviction that working is always good for them, at least in the long run. Since many people do not recognize that working is in their long-run interest, Murray assumes such people must be forced to do what is good for them. Harold, for example, would rather loaf than take an exhausting, poorly paid job in a laundry. To prevent Harold from indulging his self-destructive preference for loafing, we must make loafing financially impossible. America did this quite effectively until the 1960s. Then we allegedly made it easier for him to qualify for unemployment compensation, so he was more likely to quit his job whenever he got fed up. We also made it easier for him to live off Phyllis’s AFDC check. Once Harold had tasted the pleasures of indolence, he found them addictive, like smoking, so he never acquired either the skills or the self-discipline he would have needed to hold a decent job and support a family. By trying to help we therefore did him irreparable harm.

While I share Murray’s enthusiasm for work, I cannot see much evidence that changes in government programs significantly affected men’s willingness to work during the 1960s. When we look at the unemployed, for example, we find that about half of all unemployed workers were getting unemployment benefits in 1960. The figure was virtually identical in 1970 and in 1980.18 Thus while the rules governing unemployment compensation did change, the changes did not make joblessness more attractive economically. Murray is quite right that dropping the man-in-the-house rule made it easier for Harold to live off Phyllis’s AFDC check. But there is no evidence that this contributed to rising unemployment. Since black women receive about half of all AFDC money, Murray’s argument implies that as AFDC rules became more liberal and benefits rose in the late 1960s, unemployment should have risen among young black men. By the same logic, such men’s unemployment rate should have fallen in the 1970s, when the purchasing power of AFDC benefits was falling. In fact, Murray’s own data show that their unemployment rates rose.

While Murray is probably wrong to blame government benefits for undermining the work ethic after 1965, he could be right that some groups, especially young blacks, became choosier about the jobs they would accept. Before accepting this popular theory, however, we ought to demand some hard evidence. Murray offers none. His data do show a widening gap between the unemployment rates for young blacks and young whites, and also between the rates for young blacks and older blacks, but there are many possible explanations for this change. To begin with, the ablest young blacks were more likely to be in school, leaving the least employable looking for jobs. Young blacks also faced increasing competition from white high school students, who were working in unprecedented numbers, and from Latin American and Asian immigrants, whom many employers preferred on the grounds that they worked harder for less money.

The kinds of jobs that young blacks had traditionally held may also have disappeared at an especially rapid rate in the 1970s—as they did, for example, when southern agriculture was mechanized in the 1950s and early 1960s. A fascinating book could be written on this whole issue, but Losing Ground is not it. Murray is so intent on blaming unemployment on the government that he discusses alternative explanations only in order to dismiss them.19

4.

While Murray’s claim that helping the poor is really bad for them is indefensible, his criticism of the ways in which government tried to help the poor from 1965 to 1980 still raises a number of issues that defenders of these programs need to face. Any successful social policy must strike a balance between collective compassion and individual responsibility. The social policies of the late 1960s and 1970s did not strike this balance very well. They vacillated unpredictably between the two ideals in ways that neither Americans nor any other people could live with over the long run. This vacillation played a major role in the backlash against government efforts to “do good.” Murray’s rhetoric of individual responsibility and self-sufficiency is not the basis for a social policy that would be politically acceptable over the long run either, but it provides a useful starting point for rethinking where we went wrong.

One chapter of Losing Ground is titled “The Destruction of Status Rewards”—not a happy phrase, but a descriptive one. The message is simple. If we want to promote virtue, we have to reward it. The social policies that prevailed from 1964 to 1980 often seemed to reward vice instead. They did not, of course, reward vice for its own sake. But if you set out to help people who are in trouble, you almost inevitably find that most of them are to some extent responsible for their present troubles. Few victims are completely innocent. Helping those who are not doing their best to help themselves poses extraordinarily difficult moral and political problems.

Phyllis, for example, turns to AFDC after she has left Harold. Her cousin Sharon, whose husband has left her, works forty hours a week in the same laundry where Harold worked before he took to drink. If we help Phyllis very much, she will end up better off than Sharon. This will not do. Almost all of us believe it is “better” for people to work than not to work. This means we also believe those who work should end up “better off” than those who do not work. Standing the established moral order on its head by rewarding Phyllis more than Sharon will undermine the legitimacy of the entire AFDC system. Nor is it enough to ensure that Phyllis is just a little worse off than Sharon. If Phyllis does not work, many—including Sharon—will feel that Phyllis should be substantially worse off, so there will be no ambiguity about the fact that Sharon’s virtue is being rewarded.

The AFDC revolution of the 1960s sometimes left Sharon worse off than Phyllis. In 1970, for example, Sharon’s minimum-wage job paid $275 a month if she worked forty hours every week and was never laid off. Once her employer deducted Social Security and taxes she was unlikely to take home more than $250 a month. Meanwhile, the median state (Oregon) paid Phyllis and her three children $225 a month, and nine states paid her more than $300 a month. This comparison is somewhat misleading in one respect, however. By 1970 Sharon could also get AFDC benefits to supplement her earnings in the laundry. Under the “thirty and a third” rule, adopted in 1967, local welfare agencies had to ignore the first $30 of Sharon’s monthly earnings plus a third of what she earned beyond $30 when they computed her need for AFDC. If, for example, Sharon lived in Oregon, had three children, and took home $250 a month from her job, she could get an additional $78 a month from AFDC, bringing her total monthly income to $328, compared to Phyllis’s $225. But Sharon could only collect her extra $78 a month by becoming a “welfare mother,” with all the humiliations and hassles that implied. Often she decided not to apply. Instead, she nursed a grievance against the government for treating Phyllis better than it treated her.
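The Oregon arithmetic can be reproduced with a short calculation. This is a minimal sketch under stated assumptions, not the actual state formula: it assumes the grant equals Oregon’s $225 payment for a family with no income minus Sharon’s “countable” earnings, and, following the simplification used above, it applies the rule to her $250 take-home pay and ignores the work-expense deductions that real agencies also allowed. The function and parameter names are illustrative only.

```python
def afdc_supplement(no_income_grant, earnings, disregard=30.0, share_disregarded=1 / 3):
    """Monthly AFDC supplement under a simplified 'thirty and a third' rule.

    Assumption: the grant is the payment a family with no income would get,
    minus countable earnings, and never falls below zero.
    """
    # Earnings above the first $30 a month.
    excess = max(earnings - disregard, 0.0)
    # One third of that excess is also ignored; the rest counts against the grant.
    countable = excess * (1 - share_disregarded)
    return max(no_income_grant - countable, 0.0)


# Sharon in Oregon, 1970: three children, $250 a month take-home pay.
supplement = afdc_supplement(225, 250)
print(round(supplement))        # 78
print(round(250 + supplement))  # 328
```

Of the $220 Sharon earns above $30, about $147 counts against the $225 grant, which leaves the $78 supplement and a total income of $328.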

Upsetting the moral order in this way may not have had much effect on people’s behavior. Sharon, for example, usually continued to work even if she could get almost as much on welfare. But this is irrelevant. Even if nobody quit work to go on welfare, a system that provided indolent Phyllis with as much money as diligent Sharon would be universally viewed as unjust. To say that such a system does not increase indolence—or doesn’t increase it much—is beside the point. A criminal justice system that frequently convicts the innocent and acquits the guilty may deter crime as effectively as a system that yields just results, but that does not make it morally or politically acceptable. We care about justice independent of its effects on behavior.

Yet while Murray claims to be concerned about rewarding virtue, he seems interested in doing this only if it does not cost the taxpayer anything. Instead of endorsing the “thirty and a third” rule, for example, on the grounds that it rewarded work, he lumps it with all the other undesirable changes that contributed to the growth of the AFDC rolls during the late 1960s. His rationale for this judgment seems to be that getting money from the government undermines Sharon’s self-respect even if she also holds a full-time job. This may often be true, but when it is, Sharon presumably does not apply for AFDC.

On balance, I prefer the Reagan administration’s argument against the “thirty and a third” rule. The administration persuaded Congress to drop the “thirty and a third” rule in 1981, substituting a dollar-for-dollar reduction in AFDC benefits whenever a recipient worked regularly. As a result, a mother of three is now better off in seven states if she goes on AFDC than if she works at a minimum-wage job. The administration made no pretense that this change was good for AFDC recipients, or that it made the system more just. The administration simply argued that supplementing the wages of the working poor was a luxury the American taxpayer could not afford, or at least did not want to afford. While this appeal to selfishness is certainly not morally persuasive, it offends me less than Murray’s claim that such changes are really in the victims’ best interests.

The difficulty of helping the needy without rewarding indolence or folly recurs when we try to provide “second chances.” America was a “second chance” for many of our ancestors, and it remains more committed to the idea that people can change their ways than any other society I know. But we cannot give too many second chances without undermining people’s motivation to do well the first time around. In most countries, for example, students work hard in secondary school because they must do well on the exams given at the end of secondary school in order to get a desirable job or go on to a university. In America, plenty of colleges accept students who have learned nothing whatever in high school, including those who score near the bottom on the SAT. Is it any wonder that Americans learn less in high school than their counterparts in other industrial countries?

Analogous problems arise in our efforts to deal with criminals. We claim that crime will be punished, but this turns out to be mostly talk. Building prisons is too expensive, and putting people in prisons makes them more likely to commit crimes in the future. So we don’t jail many criminals. Instead, we tell ourselves that probation, suspended sentences, and the like are “really” better. Needless to say, such a policy convinces both the prospective criminal and the public that punishment is a sham and that the criminal justice system has no real moral principles.

Still, it is important not to overgeneralize this argument. Many people apply it to premarital sex, for example, arguing that fear of economic hardship is an important deterrent to illegitimacy and that offering unwed mothers an economic “second chance” makes unmarried women more casual about sex and contraception. In this case, however, the problem turns out to be illusory. Unmarried women do not seem to make much effort to avoid pregnancy even in states like Mississippi, where AFDC pays a pittance. This means that liberal legislators can indulge their impulse to support illegitimate children in a modicum of decency without fearing that generosity will increase the number of children born into this unenviable situation.

The problem of “second chances” is intimately related to the larger problem of maintaining respect for the rules governing rewards and punishments in American society. As Murray rightly emphasizes, no society can survive if it allows people to violate its rules with impunity on the grounds that “the system is at fault.” Murray also argues that the liberal impulse to blame “the system” for blacks’ problems had an important part in the social, cultural, and moral deterioration of black urban communities after 1965. That such deterioration occurred in many cities is beyond doubt. Blacks were far more likely to murder, rape, and rob one another in 1980 than in 1965. Black males were more likely to father children they did not intend to care for or support. Black teen-agers were less likely to be working. More blacks were in school, but despite expanded opportunities for higher education and white-collar employment, black teen-agers were not learning as much.20

All this being conceded, the question remains: were all these ills attributable to people’s willingness to “blame the system,” as Murray claims? Crime, drug use, child abandonment, and academic lassitude were increasing in the prosperous white suburbs of New York and Los Angeles—and, indeed, in London, Prague, and Peking—as well as in Harlem and Watts. Murray is right to emphasize that the problem was worst in black American communities. But recall that his explanation is that “we—meaning the not-poor and the un-disadvantaged—had changed the rules of their world. Not our world, just theirs.” If that is the explanation, why do all the same trends appear everywhere else as well?

Losing Ground does not answer such questions. Indeed, it does not ask them. But it does at least cast debate over social policy in what I believe are the correct terms. First, it does not simply ask how much our social policies cost, or appear to cost, but whether they work. Second, it makes clear that a successful program must not only help those it seeks to help but must do so in such a way as not to reward folly or vice. Third, it reminds us that social policy is about punishment as well as rewards, and that a policy that is never willing to countenance suffering, however deserved, will not long endure. The liberal coalition that dominated Washington from 1964 to 1980 did quite well by the first of these criteria: its major programs, contrary to Murray’s argument, did help the poor. But it did not do as well by the other two criteria: it often rewarded folly and vice and it never had enough confidence in its own norms of behavior to assert that those who violated these norms deserved whatever sorrows followed.

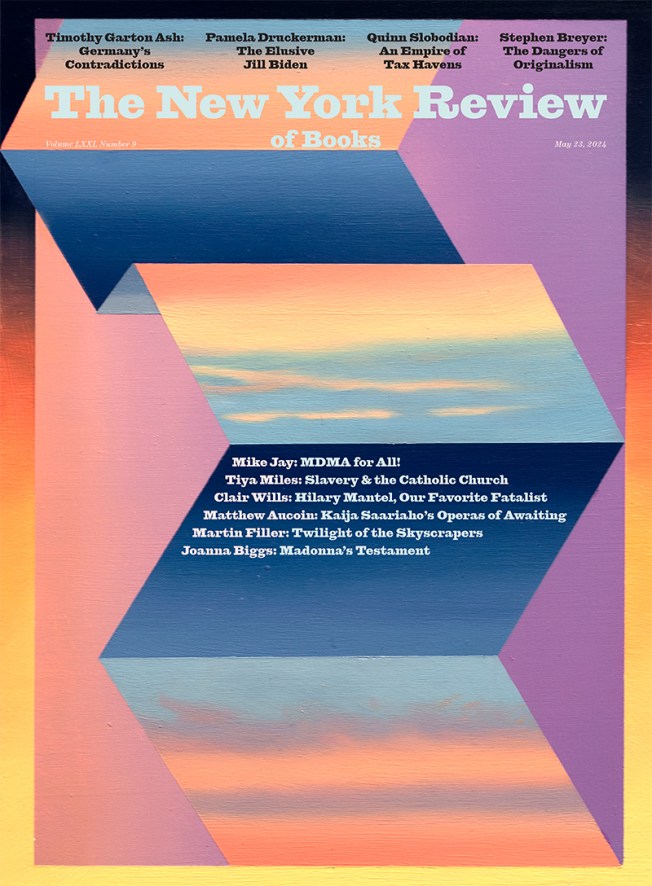

This Issue

May 9, 1985

1. Until 1980 the thresholds were also lower for farm families and for families headed by women. A widow living alone, for example, was supposed to need about 7 percent less than a widower living alone.

2. US Bureau of the Census, “Estimates of Poverty Including the Value of Noncash Benefits: 1979–1982,” Technical Paper 51 (Government Printing Office, 1984).

3. US Bureau of the Census, “Estimates of Poverty Including the Value of Noncash Benefits: 1979–1982,” Technical Paper 51 (Government Printing Office, 1984).

4. Murray presents a different set of estimates for “net” poverty, taken from the work of Timothy Smeeding. Unlike the Census Bureau’s estimates, Smeeding’s estimates are corrected for underreporting of income. Smeeding’s estimates for years prior to 1979 are also corrected for underreporting of noncash benefits. But Smeeding’s 1979 estimate, on which Murray places great emphasis, is not corrected for such underreporting. As a result, Smeeding’s series underestimates the decline in net poverty during the 1970s.

5. Data taken from US Public Health Service, Health, United States (Government Printing Office, 1983), pp. 126, 127, 137.

6. Hope Corman and Michael Grossman examine the effect of Medicaid on infant mortality in “Determinants of Neonatal Mortality Rates in the United States: A Reduced-Form Model,” Working Paper 1387 (National Bureau of Economic Research, 1985).

7. From 1950 to 1980 the correlation between the official poverty rate and the logarithm of real median family income is 0.995.

8. As Murray notes, GNP per person also grew quite rapidly during the 1970s, because the number of workers grew while the number of children fell. But this change did not reduce poverty, because family size did not decline appreciably among those with incomes below $10,000 in 1980 dollars. See US Bureau of the Census, Current Population Reports, Series P-60, no. 80, p. 17 and no. 132, p. 61 (Government Printing Office, 1971 and 1982).

9. US Bureau of the Census, Statistical Abstract of the United States: 1984 (Government Printing Office), pp. 368 and 371.

10. Murray’s figures show even more rapid growth in both “public aid” and “public assistance” after 1965, because he concentrates exclusively on federal spending, ignoring state and local expenditures. I find it hard to see how a writer who sees rising AFDC benefits as a major source of social decline can focus entirely on federal spending. It is the states, after all, that set AFDC benefits.

11. The exact ratio is hard to determine, because prior to 1982 the Census Bureau did not have a satisfactory procedure for identifying mother-child families living with relatives.

12. See US Bureau of the Census, “Estimates of Poverty Including the Value of Noncash Benefits: 1979–1982,” Technical Paper 51 (Government Printing Office, 1984).

13. US Bureau of the Census, Statistical Abstract of the United States: 1984, p. 371.

14. House Committee on Ways and Means, “Background Material and Data on Programs within the Jurisdiction of the Committee on Ways and Means” (Government Printing Office, 1985). The drop is even larger using the conventional inflation adjustment based on the CPI instead of the PCE deflator.

15. The percentage of families on the rolls stabilized after 1975. The percentage of persons on the rolls declined after 1975, because AFDC families got smaller.

16. David Ellwood and Mary Jo Bane, “The Impact of AFDC on Family Structure and Living Arrangements” (Kennedy School of Government, Harvard University, March 1984, offset).

17. Ellwood and Bane, “The Impact of AFDC on Family Structure and Living Arrangements” (Kennedy School of Government, Harvard University, March 1984, offset).

18. Economic Report of the President: 1985 (Government Printing Office, 1985), pp. 269 and 274.

19. In discussing the effects of rising black school enrollment on unemployment rates, for example, Murray completely ignores the fact that those who enrolled in school were abler than those who dropped out. Because of this “creaming” process, the unemployment rate would have risen among black teen-agers who were not in school even if nothing else had changed. Robert Mare and Christopher Winship analyze this and related issues in “The Paradox of Lessening Racial Inequality and Joblessness Among Black Youth,” American Sociological Review (February 1984), pp. 39–55.

20. Barbara Holmes summarizes changes in black academic achievement during the 1970s using data from the National Assessment of Educational Progress in “Reading, Science, and Mathematics Trends: A Closer Look” (Denver: Education Commission of the States, December 1982). Black nine-year-olds and thirteen-year-olds gained ground during the 1970s, but black seventeen-year-olds lost ground in both science and mathematics and showed no change in reading. White seventeen-year-olds also lost ground.