David Levinthal

David Levinthal: Untitled, from his 2008 series ‘I.E.D.,’ about the US wars in Afghanistan and Iraq. Levinthal’s work is on view in ‘David Levinthal: War, Myth, Desire,’ at the George Eastman Museum, Rochester, New York, until January 1, 2019. The accompanying book is published by the museum and Kehrer.

The Reign of George VI, 1900–1925, published anonymously in London in 1763, makes for intriguing reading today. Twentieth-century France still groans under the despotism of the Bourbons. America is still a British colony. “Germany” still means the sprawling commonwealth of the Holy Roman Empire. As the reign of George VI opens, the British go to war with France and Russia and defeat them both. But after a Franco-Russian invasion of Germany, the war reignites in 1917. The British invade and subdue France, deposing the Bourbons. After conquering Mexico and the Philippine Islands, the Duke of Devonshire enters Spain, and a general peace treaty is signed in Paris on November 1, 1920.

The impact of revolution on the international system lies far beyond this author’s mental horizons, and he has no inkling of how technological change will transform modern warfare. In his twentieth century, armies led by dukes and soldier-kings still march around the Continent reenacting the campaigns of Frederick the Great. The Britannia, flagship of the Royal Navy, is feared around the world for the devastating broadsides of its “120 brass guns.” The term “steampunk” comes to mind, except there is no steam. But there are passages that do resonate unsettlingly with the present: English politics is mired in factionalism, Germany’s political leadership is perilously weak, and there are concerns about the “immense sums” Russian Tsar Peter IV has invested in British client networks, with a view to disrupting the democratic process.

Predicting future wars—both who will fight them and how they will be fought—has always been a hit-and-miss affair. In The Coming War with Japan (1991), George Friedman and Meredith Lebard solemnly predicted that the end of the cold war and the collapse of the Soviet Union would usher in an era of heightened geopolitical tension between Japan and the US. In order to secure untrammeled access to vital raw materials, they predicted, Japan would tighten its economic grip on southwest Asia and the Indian Ocean, launch an enormous rearmament program, and begin challenging US hegemony in the Pacific. Countermeasures by Washington would place the two powers on a collision course, and it would merely be a matter of time before a “hot war” broke out.

The rogue variable in the analysis was China. Friedman and Lebard assumed that China would fragment and implode just as the Soviet Union had, leaving Japan and America as rivals in a struggle to secure control over it. It all happened differently: China embarked upon a phase of phenomenal growth and internal consolidation, while Japan entered a long period of economic stagnation. The book was clever, well written, and deftly argued, but it was also wrong. “I’m sure the author had good reasons in 1991 to write this, and he’s a really smart guy,” one reader commented in an Amazon review in 2014 (having failed to notice Meredith Lebard’s co-authorship). “But, here we are, 23 years later, and Japan wouldn’t even make the list of the top 30 nations in the world the US would go to war with.”

This is the difficult thing about the future: it hasn’t happened yet. It can only be imagined as the extrapolation of current or past trends. But forecasting on this basis is extremely difficult. First, the present is marked by a vast array of potentially relevant trends, each waxing and waning, augmenting one another or canceling one another out; this makes extrapolation exceptionally hard. Second, neither for the present nor for the past do experts tend to agree on how the most important events were or are being caused; this, too, bedevils the task of extrapolation, since there always remains a degree of uncertainty about which trends are more and which are less relevant to the future in question.

Finally, major discontinuities and upheavals seem by their nature to be unpredictable. The author of The Reign of George VI failed to predict the American and French Revolutions, whose effects would be profound and lasting. None of the historians or political scientists expert in Central and Eastern European affairs predicted the collapse of the Soviet bloc, the fall of the Berlin Wall, the unification of Germany, or the dissolution of the Soviet Union. And Friedman and Lebard failed to foresee the current economic, political, and military ascendancy of China.

Lawrence Freedman’s wide-ranging The Future of War: A History is aware of these limits of human foresight. It is not really about the future at all, but about how societies in the Anglophone West have imagined it. The book doesn’t advance a single overarching argument; its strength lies rather in the sovereign presentation of a diverse range of subjects situated at various distances from the central theme: the abiding military fantasy of the “decisive battle,” the significance of peace conferences in the history of warfare, the impact of nuclear armaments on strategic thought, the quantitative analysis of wars and their human cost, the place of cruelty in modern warfare, and the changing nature of war in a world of cyberweapons and hybrid strategy.

Advertisement

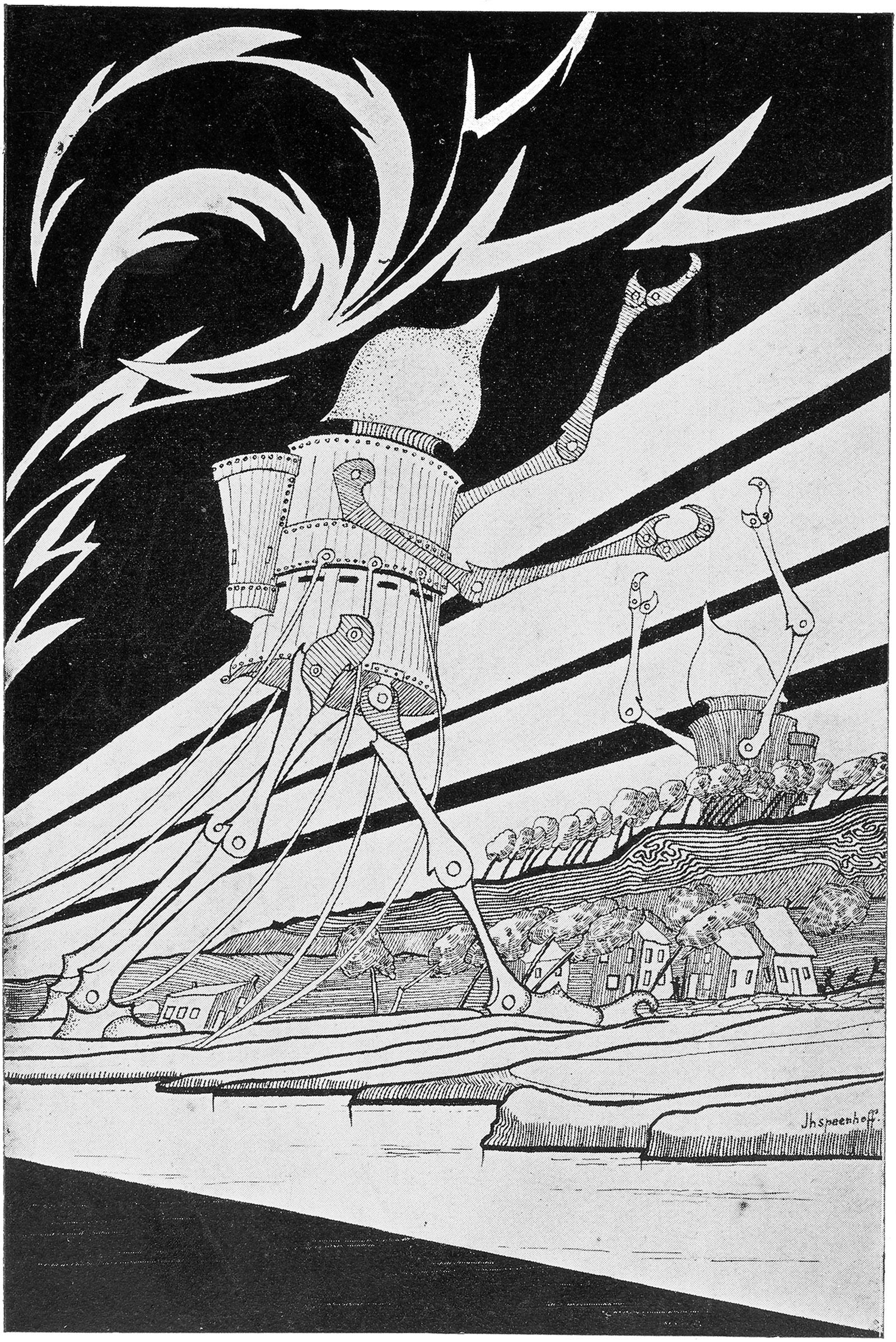

In modern societies, as Freedman shows, imagining wars to come has been done not just by experts and military planners but also by autodidacts and writers of fiction. The most influential early description of a modern society under attack by a ruthless enemy was H.G. Wells’s best seller The War of the Worlds (1897), in which armies of Martians in fast-moving metal tripods poured “Heat-Rays” and poisonous gas into London, clogging the highways with terrified refugees who were subsequently captured and destroyed, their bodily fluids being required for the nourishment of the invaders. The Martians had been launched from their home planet by a “space gun” (borrowed from Jules Verne’s From the Earth to the Moon, 1865), but the underlying inspiration came from the destruction of the indigenous Tasmanians after the British settlement of the island, an early nineteenth-century epic of rapes, beatings, and killings that, together with pathogens carried by the invaders, wiped out virtually the entire black population (a few survived on nearby Flinders Island). The shock of Wells’s fiction derived not so much from the novelty of such destruction, which was already familiar from the European colonial past, as from its unexpected relocation to a white metropolis.

The most accurate forecast of the stalemate on the Western Front in 1914–1918 came not from a professional military strategist but from the Polish financier and peace advocate Ivan Stanislavovich Bloch (1836–1901), whose six-volume study The War of the Future in Its Technical, Economic and Political Relations (1898) argued that not even the boldest and best-trained soldiers would be able to cut through the lethal fire of a well-dug-in adversary. The next war, he predicted, would be “a great war of entrenchments” that would pit not just soldiers but entire populations against one another in a long attritional struggle. Bloch’s meticulously detailed scenario was an argument for the avoidance of war. If this kind of thinking failed to have much effect on official planning, it was because military planners foresaw a different future, one in which determined offensives and shock tactics would still carry the day against defensive positions. Their optimism waned during the early years of World War I but was revived in 1917–1918, with the return to a war of movement marked by huge offensive strikes and breakthroughs into enemy terrain.

The prospect of aerial warfare aroused a similar ambivalence. Wells’s War in the Air (1908) imagined a form of warfare so devastating for all sides that a meaningful victory by any one party was unthinkable. He depicted America under attack from the east by German airships and “Drachenfliegers” and from the west by an “Asiatic air fleet” equipped with swarms of heavily armed “ornithopters” (lightweight one-man flying machines). The book closed with a post-apocalyptic vision of civilizational collapse and the social and political disintegration of all the belligerent states.

But others saw aerial warfare as a means of recapturing the promise of a swift and decisive victory. Giulio Douhet’s The Command of the Air (1921) aimed to show how an aerial attack, if conducted with sufficient resources, could carry war to the nerve centers of the enemy, breaking civilian morale and thereby placing decision-makers under pressure to capitulate. The ambivalence remains. To this day, scholars disagree on the efficacy of aerial bombing in bringing the Allied war against Nazi Germany to an end, and the Vietnam War remains the classic example of a conflict in which overwhelming air superiority failed to secure victory.

The ultimate twentieth-century weapon of shock was the atomic bomb. The five-ton device dropped on Hiroshima by an American bomber on August 6, 1945, flattened four square miles of the city and killed 80,000 people instantly. The second bomb, dropped three days later on Nagasaki, killed a further 40,000. The advent of this new generation of nuclear armaments—and above all the acquisition of them by the Soviet Union—opened up new futures. In 1954, a team at the RAND Corporation led by Albert Wohlstetter warned that if the leadership of one nuclear power came to the conclusion that a preemptive victory over the other was possible, these devastating weapons might be used in a surprise attack. On the other hand, if the destructive forces available to both sides were in broad equilibrium, there was reason to hope that the fear of nuclear holocaust would itself stay the hands of potential belligerents. “Safety,” as Winston Churchill put it in a speech to the British Parliament in March 1955, might prove “the sturdy child of terror, and survival the twin brother of annihilation.”

This line of argument gained ground as the underlying stability of the postwar order became apparent. The “function of nuclear armaments,” the Australian international relations theorist Hedley Bull suggested in 1959, was to “limit the incidence of war.” In a nuclear world, Bull argued, states were not just “unlikely to conclude a general…disarmament agreement,” but would be “behaving rationally in refusing to do so.” In an influential paper of 1981, the political scientist Kenneth Waltz elaborated this line of argument, proposing that the peacekeeping effect of nuclear weapons was such that it might be a good idea to allow more states to acquire them: “more may be better.”*

Most of us will fail to find much comfort in this Strangelovian vision. It is based on two assumptions: that the nuclear sanction will always remain in the hands of state actors and that state actors will always act rationally and abide by the existing arms control regimes. The first still holds, but the second looks fragile. North Korea’s nuclear deterrent is controlled by one of the most opaque personalities in world politics. This past January, Kim Jong-un reminded the world that a nuclear launch button is “always on my table” and that the entire United States was within range of his nuclear arsenal: “This is a reality, not a threat.”

For his part, the president of the United States taunted his Korean opponent, calling him “short and fat,” “a sick puppy,” and “a madman,” warning him that his own “Nuclear Button” was “much bigger & more powerful” and threatening to rain “fire and fury” down on his country. Then came the US–North Korea summit of June 12, 2018, in Singapore. The two leaders strutted before the cameras and Donald Trump spoke excitedly of the “terrific relationship” between them. But the summit was diplomatic fast food. It lacked, to put it mildly, the depth and granularity of the meticulously prepared summits of the 1980s. We are as yet no closer to the denuclearization of the Korean peninsula than we were before.

Meanwhile Russia has installed a new and more potent generation of intermediate-range nuclear missiles aimed at European targets, in breach of the 1987 INF Treaty. The US administration has responded with a Nuclear Posture Review that loosens constraints on the tactical use of nuclear weapons, and has threatened to pull out of the treaty altogether. The entire international arms control regime so laboriously pieced together in the 1980s and 1990s is falling apart. In a climate marked by resentment, aggression, braggadocio, and mutual distrust, the likelihood of a hot nuclear confrontation either through miscalculation or by accident seems greater than at any time since the end of the cold war.

Freedman is unimpressed by Steven Pinker’s claim that the human race is becoming less violent, that the “better angels of our nature” are slowly gaining the upper hand as more and more societies come to accept the view that “war is inherently immoral because of its costs to human well-being.” Pinker’s principal yardstick of progress, the declining number of violent deaths per 100,000 people per year across the world over the span of human history, strikes Freedman as too crude: it fails to take account of regional variations, phases of accelerated killing, and demographic change; it assumes excessively low death estimates for the twentieth century; and it overlooks the fact that deaths are not the only measure of violence in a world that has become much better at keeping the maimed and traumatized alive.

However the numbers stack up, there has clearly been a change in the circumstances and distribution of fatalities. Since 1945, conflicts between states have caused fewer deaths than various forms of civil war, a mode of warfare that has never been prominent in the fictions of future conflict. Two million are estimated to have died under the regime of Pol Pot in Cambodia in the 1970s; 80,000–100,000 of these were actually killed by regime personnel, while the rest perished through starvation or disease. In a remarkable spree of low-tech killing, the Rwandan genocide took the lives of between 500,000 and one million people.

The relationship between military and civilian mortalities has also seen drastic change. In the early twentieth century, according to one rough estimate, the ratio of military to civilian deaths was around 8:1; in the wars of the 1990s, it was 1:8. One important reason for this is the greater resistance of today’s soldiers to disease: whereas 18,000 British and French troops perished of cholera during the Crimean War, in 2002, the total number of British soldiers hospitalized in Afghanistan on account of infectious disease was twenty-nine, of whom not one died. On the other hand, civilians caught up in modern military conflicts, especially in situations where medical services and humanitarian supplies are disrupted, remain highly exposed to disease, thirst, and malnutrition.

A further reason for the disproportionate ballooning of civilian deaths is the tendency of military interventions to morph into chronic insurgencies and civil wars. Counting the dead is extremely difficult in a dysfunctional or destroyed state riven by civil strife, but the broad trends are clear enough. Whereas the total number of Iraqi combat deaths from the air and ground campaigns in the 1991 Gulf War appears to have been between 8,000 and 26,000, the total number of “consequential” Iraqi civilian deaths was around 100,000. Several tens of thousands of Iraqi military personnel were killed in the second Gulf War; the total civilian death toll may have been as high as 460,000 (the Lancet’s estimate of 655,000 is widely regarded as too high). The deaths incurred by the coalition forces in these two conflicts were 292 and 4,809 respectively. The problem is that even the most determined and skillful applications of military force, rather than definitively resolving disputes, inaugurate processes of escalation or disintegration that exact a much higher human toll than the military intervention itself.

Today, the phenomenon of the “battle” in which highly organized state actors are engaged is making way for a decentered form of ambient violence in which states engage “asymmetrically” with nonstate militias or civilians; cyberattacks disrupt elections, infrastructures, or economies; and missile-bearing drones cruise over insurgent suburbs. The resulting deterritorialization of violence in regions marked by decomposing states makes the kind of “decision” Clausewitz associated with battle difficult to achieve or even to imagine. “With the change in the type and tactics of a new and different enemy,” Robert H. Latiff writes in Future War, “we have evolved in the direction of total surveillance, unmanned warfare, stand-off weapons, surgical strikes, cyber operations and clandestine operations by elite forces whose battlefield is global.”

In pithy, flip-chart paragraphs, Latiff, a former US Air Force major general, sketches a vision of a future that resembles the fictional scenarios of William Gibson’s Neuromancer.

In the wars of the future, Latiff suggests, the “metabolically dominant soldier” who enjoys the benefits of immunity to pain, reinforced muscle strength, accelerated healing, and “cognitive enhancement” will enter the battlespace neurally linked not just to his human comrades but also to swarms of semiautonomous bots. “Flimmers,” missiles that can both fly and swim, will menace enemy craft on land and at sea, while undersea drones will seek out submarines and communication cables. Truck-mounted “Active Denial Systems” will deploy “pain rays” that heat the fluid under human skin to boiling point. Enemy missiles and aircraft will buckle and explode in the intense heat of chemical lasers. High-power radio-frequency pulses will fry electrical equipment across wide areas. Hypersonic “boost-glide vehicles” will ride atop rockets before being released to attack their targets at such enormous speeds that shooting them down with conventional missiles will be “next to impossible.” “Black biology” will add to these terrors a phalanx of super-pathogens. Of the more than $600 billion the US spends annually on defense, about $200 billion is allocated to research, development, testing, and procurement of new weapons systems.

Latiff acknowledges some of the ethical issues here, though he has little of substance to say about how they might be addressed. How will the psychology of “human-robot co-operation” work out in practice? Will “metabolically dominant” warriors returning from war be able to settle back comfortably into civilian society? What if robots commit war crimes or children get trapped in the path of “pain rays”? What if radio-frequency pulse weapons shut down hospitals, or engineered pathogens cause epidemics? Will the growing use of drones or AI-driven vehicles diminish the capacity of armed forces personnel to perceive the enemy as fully human? “An arms race using all of the advanced technologies I’ve described,” writes Latiff toward the end of his book, “will not be like anything we’ve seen, and the ethical implications are frightening.”

Frightening indeed. A dark mood overcame me as I read these two books. It’s hard not to be impressed by the inventiveness of the weapons experts in their underground labs, but hard, too, not to despair at the way in which such ingenuity has been uncoupled from larger ethical imperatives. And one can’t help but be struck by the cool, acquiescent prose in which the war studies experts portion out their arguments, as if war is and will always be a human necessity, a feature of our existence as natural as birth or the movement of clouds. I found myself recalling a remark made by the French sociologist Bruno Latour when he visited Cambridge in the spring of 2016. “It is surely a matter of consequence,” he said, surprising the emphatically secular colleagues in the room, “to know whether we as humans are in a condition of redemption or perdition.”

The principled advocacy of peace also has its history, though it receives short shrift from Freedman. The champions of peace will always be vulnerable to the argument that since the enemy, too, is whetting his knife, talk of peace is unrealistic, even dangerous or treacherous. The quest for peace, like the struggle to arrest climate change, requires that we think of ourselves not just as states, tribes, or nations, but as the human inhabitants of a shared space. It demands feats of imagination as concerted and impressive as the sci-fi creativity and wizardry we invest in future wars. It means connecting the intellectual work done in centers of war studies with research conducted in peace institutes, and applying to the task of avoiding war the long-term pragmatic reasoning we associate with “strategy.”

“I don’t think that we need any new values,” Mikhail Gorbachev told an interviewer in 1997. “The most important thing is to try to revive the universally known values from which we have retreated.” And it must surely be true, as Pope Francis remarked in April 2016, that the abolition of war remains “the ultimate and most deeply worthy goal of human beings.” There have been prominent politicians around the world who understood this. Where are they now?

This Issue

November 22, 2018

A Very Grim Forecast


*

See Kenneth N. Waltz, “The Spread of Nuclear Weapons: More May Be Better,” The Adelphi Papers, Vol. 21, No. 171 (1981).