A few weeks before the publication in early February of This Is How They Tell Me the World Ends, Nicole Perlroth’s disquieting account of the global trade in cyberweapons, multiple US government agencies and major corporations learned that they had been hit with one of the biggest cyberattacks in history. By all accounts, the operation—discovered in early December by the security firm FireEye, whose own closely guarded hacking tools were stolen—had been going on for at least nine months. Hackers believed to be agents of the Russian foreign intelligence service, SVR, appear to have embedded malware into a routine software upgrade from SolarWinds, a Texas-based IT company. When hundreds of the 18,000 users of the firm’s Orion network management system downloaded the upgrade, the malware opened those systems to the hackers. Further analysis revealed that about a third of the victims had not been SolarWinds clients, and thus the hackers must have been using other tactics in addition to the “trojanized” Orion software. Another point of entry may have been a backdoor in software developed by a Czech company called JetBrains, run by Russian nationals, that supplies its software testing product, TeamCity, to 300,000 businesses around the world, one of which is SolarWinds.

In fact, as reported by The New York Times, the hackers used multiple strategies to compromise the networks of an estimated 250 companies and federal agencies, including the Commerce Department, the Pentagon, the State Department, and the Department of Justice. According to the Associated Press, they “probably gained access to the vast trove of confidential information hidden in sealed documents, including trade secrets, espionage targets, whistleblower reports and arrest warrants.” Microsoft’s network was also hacked, and the source code to three of its products, including its cloud computing service, Azure, was stolen.

None of the alarms put in place by the government or private companies to detect such intrusions was tripped. In the daily White House press briefing on February 17, Anne Neuberger, the deputy national security adviser for cyber and emerging technology, pointed out that “the intelligence community largely has no visibility into private sector networks. The hackers launched the hack from inside the United States, which further made it difficult for the US government to observe their activity.”

In their analysis of the attack, security researchers at Microsoft found that the hackers’ methods included hijacking authentication credentials and password spraying—testing commonly used passwords on thousands of accounts at a time, hoping that at least one would be the key that turns the lock. Login credentials are for sale on the dark web, as Perlroth, who covers cybersecurity for The New York Times, found out when a hacker she was interviewing accurately relayed to her what she thought was her own clever and secure e-mail password. (She quickly changed it and began using two-factor authentication.)

Hackers can also gain entry to computer systems by phishing, in which they cast a wide net, indiscriminately sending official-looking e-mails to, say, the members of an organization or employees of a company, in an effort to trick at least one of them into sharing login credentials or opening a document spiked with malicious code. Spear-phishing is similar but targeted at a particular individual. These lucrative and disruptive techniques were used in 2016 to gain access to Clinton campaign adviser John Podesta’s e-mails (which were then archived and made searchable on WikiLeaks) and to launch municipal ransomware attacks like the one in Baltimore in 2019 that disabled the city’s computer systems—disrupting work in hospitals, airports, the judicial system, and elsewhere and preventing people from being able to pay their water bills, parking tickets, and property taxes—and ultimately cost the city $18.2 million in repairs and lost revenue. (The $100,000 ransom was not paid.)

Since 2017, when an anonymous group calling itself the Shadow Brokers stole and then released a cache of the NSA’s most coveted hacking tools, hackers working on behalf of nation-state intelligence agencies and militaries all over the world have had access to a sophisticated stockpile of exploits that they’ve used to infiltrate government computer networks on an enormous scale. (An exploit is typically defined as an attack on a computer system that takes advantage of a weakness or error in its code.) Russia’s cyberattack on Ukraine that year, which Perlroth calls “the most destructive and costly cyberattack in world history,” made use of the NSA tools to shut down banks, transportation services, monitors at the site of the former Chernobyl nuclear facility, and government offices, and had ripple effects around the globe, causing more than $10 billion in damages. Cybercriminals and hackers have also been aided by the theft and subsequent release of Vault 7, an arsenal of hacking tools developed by or for the CIA that WikiLeaks conveniently organized and archived.

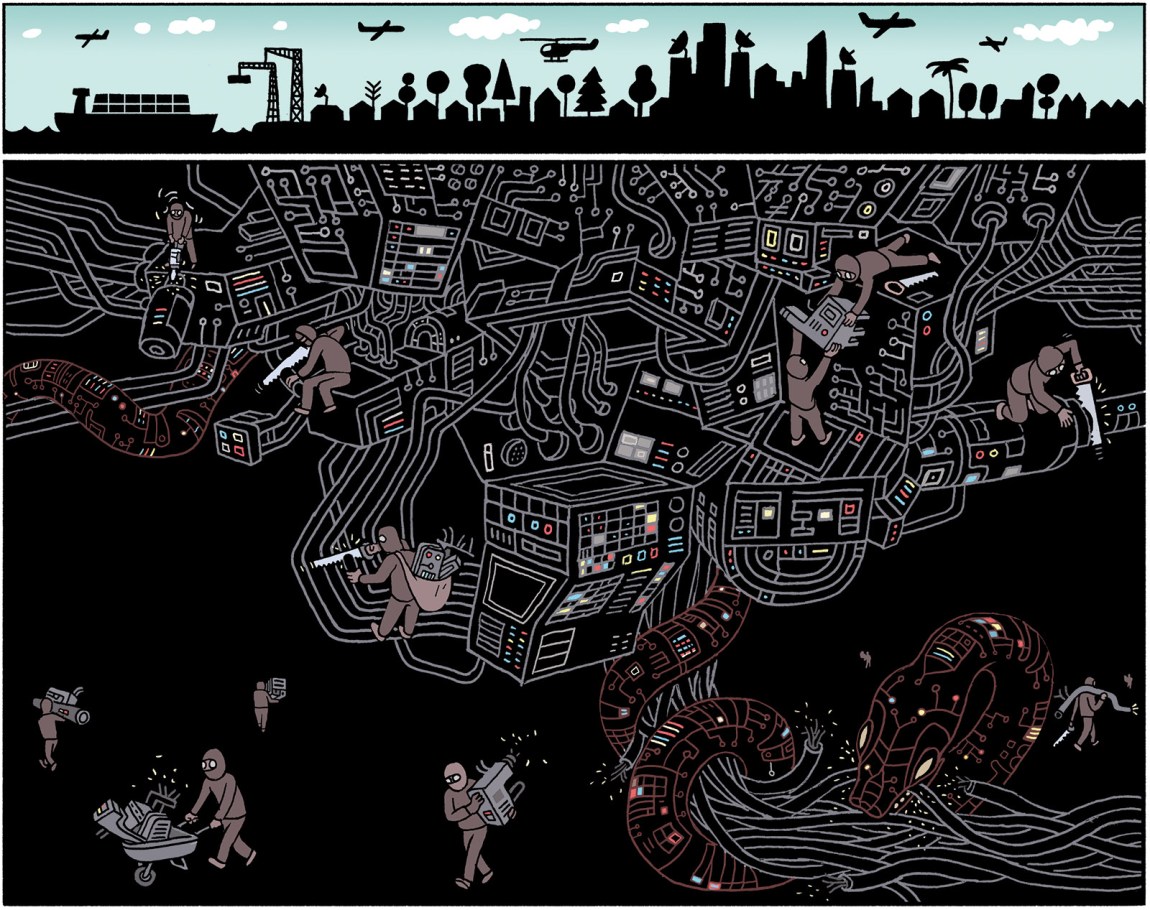

The world’s most expert hackers comb through computer code looking for programming errors that will provide access to computer networks. These software glitches are called “zero-days,” or “0-days” (pronounced “oh-days”), because at the moment a hacker discovers one, the software developer has had zero days to find and fix the flaw. As Perlroth describes it, a zero-day shields spies and cybercriminals with “a cloak of invisibility,” allowing unfettered, undetected access to a computer network:

In that one little snippet of a software update, you might be able to inject code into a web server that causes it to turn over the source code to the voicemail application…. Or you might find the gold mine—a remote code execution bug, the kind of bug that allows a hacker to run code of his choosing on the application from afar.

A recent study by Google’s Project Zero of last year’s zero-day attacks found that, had software vendors properly patched their known vulnerabilities, 25 percent of the attacks could have been prevented.

This Is How They Tell Me the World Ends is a vivid and provocative chronicle of Perlroth’s travels through the netherworld of the global cyberweapons arms trade. If the book’s title sounds overwrought, it’s because these hacking tools can give intruders access to critical infrastructure such as nuclear facilities, the power grid, industrial control systems, air traffic control, and waterworks, and thus have the capacity to become weapons of mass destruction. But they are relatively cheap compared with other weapons of mass destruction, and for sale in a market that is robust, largely out of sight, and welcoming to anyone with piles of cash at their disposal, whatever their motivation. This is not theoretical. Perlroth reports, for example, that Russia is already inside systems that operate US dams, nuclear power facilities, and pipelines, giving it the capacity to “unleash the locks at the dams, trigger an explosion, or shut down power to the grid.”

In 2013, Perlroth recounts, she was summoned by her bosses at The New York Times to be part of the small team of journalists from The Guardian, the Times, and ProPublica to examine and report on the classified documents stolen by the former NSA contractor Edward Snowden. As she went through them, she noticed that the documents “hinted at a lively outsourcing trade [in zero-days] with the NSA’s ‘commercial partners’ and ‘security partners.’” Her curiosity piqued, Perlroth pursued this lead for the next seven years, unfazed by warnings from both Leon Panetta, the former secretary of defense and director of the CIA, and General Michael Hayden, the former director of both the NSA and the CIA, who told her that “getting to the bottom of the zero-day market was a fool’s errand.” Though the questions she was asking were straightforward—who was searching for zero-days, who was weaponizing them, and who was paying for them—the answers, like the hackers themselves, were for the most part elusive.

The trade in zero-days mirrors the expansion of digitization and connectivity that has come to define much of our lives, as well as the proliferation of the code that powers it. In 1993, the year that Mosaic, the first graphical browser, was released, fewer than 15 million people worldwide had access to the Internet from a mainframe or personal computer. Data was typically stored locally, on disks and paper; hard-drive capacity was severely limited. Today there are close to five billion Internet users—well over half of the people on earth—and at least 30 billion Internet-connected devices, from smartphones to pacemakers to tractors to biometric sensors to surveillance systems, with 127 new devices connected to the Web every second. Each one of them is vulnerable to an attack. So are the servers that power cloud computing, where so much of the world’s data is stored.

In the early days of personal computing, hunting for flaws in software was the domain of hobbyists who rooted around software code for fun and bragging rights, not for profit. Typically, in those early days, when hackers alerted software developers to the vulnerabilities in their code, they were ignored. Perlroth argues that Microsoft was so intent on dominating personal computing during the late 1990s that it intentionally overlooked known flaws in its Internet Explorer browser that gave hackers access to Microsoft customers worldwide. This changed in 2001, when a bug in the browser enabled hackers to “brick”—render useless—hundreds of thousands of computers across the globe and later take hundreds of thousands of Microsoft customers offline. The attack’s ultimate target, the White House website, was apparently able to resist it.

Then, a week after September 11, hackers launched what was then the biggest cyberattack in the world. Called Nimda, it infected e-mail, hard drives, and servers, and impeded Internet traffic. “Before 9/11, there were so many holes in Microsoft’s products that the value of a single Microsoft exploit was virtually nothing,” Perlroth writes. “After 9/11, the government could no longer let Microsoft’s security issues slide.” Instead of turning over their discoveries to the software developers to patch, hackers began selling them, some in a black market conducted mainly online and others in a “gray market” of brokers that sell, for the most part, to governments. Suddenly software bugs were worth something, and a new “industry”—pecunia ex machina—was born. Private companies like Microsoft, Amazon, and Apple, as well as some government agencies, now hold “bug bounty” competitions to encourage hackers all over the world to search for coding errors, but the prize money pales in comparison with what can be made on the black and gray markets.

Not surprisingly, Perlroth had a hard time getting anyone in this world to talk to her. To acknowledge their activities is to acknowledge a business that its principals would largely like to keep hidden. Eventually, Perlroth tracked down a man (she gives him the name Jimmy Sabien) who had been a zero-day broker in the early 2000s. He’d pay hackers (the best, he said, were from Israel, and many also came from Eastern Europe) for software glitches that his firm would parlay into exploits that were then sold to US intelligence services, the Pentagon, and law enforcement. A run-of-the-mill bug in Microsoft Windows might go for $50,000, Sabien told her, while something more obscure might net twice that. “A bug that allowed the government’s spies to burrow deep into an adversary’s system, undetected, and stay awhile,” he said, could go for three times as much. (In true cloak-and-dagger style, buyers would show up at a transaction site with duffel bags full of cash.)

Since then, the prices have skyrocketed and the buyers have proliferated. In 2013 Perlroth was told that the market had already surpassed $5 billion. The same year, the NSA added $25 million to its budget, apparently to buy zero-days. More recently, Perlroth points out, foreign governments—especially, she says, oil-rich Middle Eastern countries, Israel, Britain, India, Russia, and Brazil—have been willing to match US prices, especially after the discovery in 2010 of Stuxnet, a malicious computer worm unleashed on Iran’s uranium enrichment facility at Natanz, which caused numerous centrifuges to burn out and demonstrated, in a very public way, the sly destructive potential of weaponized zero-days to wreak havoc in the physical world. (The attack is widely believed to have been a joint Israeli-American operation, though neither country has claimed credit for it.) The consequence has been an inscrutable yet escalating arms race that appears to have no limit.

Because cyberweapons are inexpensive in comparison with traditional ordnance and are available for anyone to discover, they have diminished the security advantage held by countries with outsize defense budgets. Shortly after the Stuxnet attack, for example, Iran mobilized what it claimed was the fourth-largest cyber army in the world, which then unleashed a sustained two-year assault on forty-six American banks and financial companies that shut people out of their accounts and cost those institutions millions of dollars. The hackers also breached the controls of an American dam. While it was the wrong one—the hackers apparently confused a small dam in Westchester County, New York, with a major dam in Oregon—it demonstrated the threat to essential, life-sustaining infrastructure posed by weaponized computer code. Perlroth recalls seeing a young Iranian at a hacking conference in Miami demonstrate how to break into the power grid in five seconds:

With his access to the grid, [he] told us, he could do just about anything he wanted: sabotage data, turn off the lights, blow up a pipeline or chemical plant by manipulating its pressure and temperature gauges. He casually described each step as if he were telling us how to install a spare tire, instead of the world-ending cyberkinetic attack that officials feared imminent.

While the prospect of mutually assured destruction may keep adversaries from launching such an attack on each other—General Mark Milley suggested in July 2019, during his confirmation hearing to become head of the Joint Chiefs of Staff, that if our adversaries “know that we have incredible offensive capability, then that should deter them from conducting attacks on us in cyber”—all bets are off with terrorists and rogue nations. As the Department of Defense wrote in its first “Strategy for Operating in Cyberspace,” in 2011, “The potential for small groups to have an asymmetric impact in cyberspace creates very real incentives for malicious activity.” Earlier this year, for example, a hacker of unknown origin broke into the water supply of a city outside Tampa, Florida, remotely commandeered the controls, and raised the amount of lye—used in small quantities to control the acidity of drinking water—to toxic levels. (Workers watched the attack unfold over three to five minutes and, when it was over, immediately restored the chemical composition of the water.) Industrial control systems, many of which are poorly defended, have become a preferred target of malicious actors aiming to undermine civil society.*

Years ago, when American intelligence agencies added the exploitation of coding errors to their surveillance tool kit, the practice must have seemed like a systems upgrade: instead of having to trail a suspected terrorist, for example, one could simply hack into his phone to acquire a comprehensive account of where he went, who he communicated with, and what they said. Instead of having to get a warrant to tap that phone, they could listen in on the underwater fiber-optic cables that carried Internet traffic. It’s unclear when, precisely, the US merged spying in cyberspace with the deployment of offensive cyberweapons, but in 2009, as the Stuxnet attack was underway, the Defense Department’s Cyber Command and the NSA were put under a single directorate. Four years later, according to documents leaked by Snowden, President Obama directed senior intelligence officers to come up with a list of potential cyberattack targets, including foreign infrastructure and computer systems.

One fundamental difference between traditional weapons and digital weapons is that traditional weapons are tactile objects that exist in the material world. Digital ordnance is obscure—a hieroglyphics of zeros and ones that begins with a coding error that could have been discovered by multiple hackers and be stored in any number of nation-state, criminal, or terrorist arsenals. According to a study by the RAND Corporation, zero-day exploits don’t remain secret for long—about eighteen months.

Perlroth never says how buyers like the NSA can be sure that sellers are not double-dealing, though she does tell the story of an American contracting company that, after being paid millions of dollars to secure the DOD’s computer system, subcontracted with another American firm that then farmed out the labor to coders in Russia, because they worked for cheap. The Russians were able to riddle the Pentagon’s security software with backdoors that opened the system to the foes it was meant to keep out.

And then there was the broker who believed he could tell, magically, if the buyers of his exploits were—true to their word—planning on using them exclusively on bad guys. But when one of his clients, an Italian firm called the Hacking Team, was itself hacked, leaked e-mails and invoices conclusively showed that the Hacking Team was supplying digital weapons to some of the most egregious human rights abusers on the planet, including Turkey and the United Arab Emirates (UAE).

Those countries are also buying the services of former elite NSA hackers, lured by exorbitant salaries and luxurious lifestyles. When a former NSA employee named David Evenden moved to the UAE in 2014 to work for the Abu Dhabi government (under the auspices of an American security contractor called CyberPoint), he was told—and he assumed—he was helping an ally fight the war on terror. Before long he was also tunneling deep into the networks of Qatar and its royals—again under the guise of sniffing out terrorists—which segued into spear-phishing human rights activists and journalists.

At the request of his Emirati bosses, Evenden wrote what he thought was a dummy e-mail inviting a British journalist to a fake human rights conference, just to show them how such an e-mail could be used to collect all sorts of personal information once the recipient opened it. Unbeknownst to him, the e-mail, which was laden with spyware that revealed the journalist’s password, contacts, messages, keystrokes, and GPS location, was actually sent by his bosses. It wasn’t until Evenden found himself, in 2015, inadvertently hacking First Lady Michelle Obama’s e-mails that he quit. Subsequently, Perlroth writes:

CyberPoint’s digital fingerprints were all over the hacks of some four hundred people around the world, including several Emiratis, who were picked up, jailed, and thrown into solitary confinement for…so much as questioning the ruling monarch in their most intimate personal correspondence.

Is it naive to imagine that there could ever be an ethics to this market? Many of the sellers claim they are dealing in “information,” not weapons. Many of them are uninterested in the supply chain that leads from them to governments that use that “information” to spy on dissidents and journalists and to engage in ethnic cleansing. The few efforts in this country to impose limits on what can and can’t be sold, and to whom, have met with resistance, most notably in 2015, when the Commerce Department tried—and failed—to require a license to export cybersecurity products. While black-market zero-day sellers have been criminally prosecuted under the Computer Fraud and Abuse Act, that law exempts gray-market brokers who sell to the government. Therein lies the hitch. As long as the discovery and trade in zero-days can be justified on the grounds of national security, there will be little incentive to regulate it. But as long as the government is committed to stockpiling zero-days and keeping their existence secret, rather than revealing them in order to get them patched, national—as well as international—security is also at risk.

Further complicating efforts to impose constraints on the sale, weaponization, and deployment of zero-days and other cyberweapons is the global reach of digital technology, including the Internet itself. Without international agreements, national regulation alone may lead to perceived or real vulnerabilities. But despite the potential dangers that cyberweapons pose, the nature of those weapons makes them unamenable to conventional arms control treaties. How, for instance, can cyberweapons, whose power derives from their secrecy, be monitored? And then there’s the problem of attribution. Countries have gotten good at plausible deniability. They’ve also taken to impersonating one another, as we saw in 2019 when Russian hackers, disguised as Iranian hackers, whose tools they’d stolen, targeted thirty-five countries.

A more manageable strategy has been to try to get countries to agree not to attack hospitals, the electric grid, and other critical infrastructure, but even that has been fraught. For a brief moment in 2015, during UN-brokered conversations between Obama and Chinese president Xi Jinping (in which they agreed, in principle, to abide by such an accord), a limited but essential brake on the most dangerous uses of cyberweapons seemed possible. Perlroth notes that the Trump administration’s aggressive stance on China, however, erased whatever fellow feeling arose from those conversations.

In 2018, after a two-year slowdown, Chinese hackers—who had already stolen blueprints for the F-35 fighter jet—resumed aggressively infiltrating American computer networks. In early February of this year the FBI discovered that China, too, had hacked SolarWinds software and gained access to data at the Department of Agriculture and, most likely, other government agencies. Then, about a month later, in early March, cybersecurity researchers discovered that since January 6, state-sponsored hackers from China had been using a zero-day in Microsoft’s Exchange Server software to gain access to the e-mail systems of around 30,000 American businesses and organizations. The hackers also installed malware that will allow them to return to those systems in the future. Steven Adair, the president of Volexity, the company that detected the operation, called it “a ticking time bomb.”

It’s too early to know how the Biden administration will address cyberattacks, or if it will attempt to revive discussions with our adversaries to limit the use of cyberweapons. But in December, shortly after the SolarWinds attack was discovered, President-elect Biden pledged to “make cybersecurity a top priority at every level of government.” Commenting on Biden’s first call with Vladimir Putin shortly after taking office, in which he brought up Russia’s likely involvement in the SolarWinds hack, Press Secretary Jen Psaki offered what may be a preview of the president’s approach: “His intention was…to make clear that the United States will act firmly in defense of our national interests in response to malign actions by Russia.”

Though the evolution to cyber offense was probably the inevitable corollary of the global reach of the Internet, This Is How They Tell Me the World Ends gives a persuasive argument that Panetta and Hayden were wrong. The fool’s errand was not to try to get to the bottom of the secretive zero-day market, as they told Nicole Perlroth, but rather to amass a sophisticated cyber arsenal in the belief that its very existence will keep us safe.

—March 10, 2021

* For more on this subject, see my article “The Drums of Cyberwar” in these pages, December 19, 2019.