Partway through Alex Gibney’s earnest documentary Steve Jobs: The Man in the Machine, an early Apple Computer collaborator named Daniel Kottke asks the question that appears to animate Danny Boyle’s recent film about Jobs: “How much of an asshole do you have to be to be successful?” Boyle’s Steve Jobs is a factious, melodramatic fugue that cycles through the themes and variations of Jobs’s life in three acts—the theatrical, stage-managed product launches of the Macintosh computer (1984), the NeXT computer (1988), and the iMac computer (1998). For Boyle (and his screenwriter Aaron Sorkin) the answer appears to be “a really, really big one.”

Gibney, for his part, has assembled a chorus of former friends, lovers, and employees who back up that assessment, and he is perplexed about it. By the time Jobs died in 2011, his cruelty, arrogance, mercurial temper, bullying, and other childish behavior were well known. So, too, were the inhumane conditions in Apple’s production facilities in China—where there had been dozens of suicides—as well as Jobs’s halfhearted response to them. Apple’s various tax avoidance schemes were also widely known. So why, Gibney wonders as his film opens—with thousands of people all over the world leaving flowers and notes “to Steve” outside Apple Stores the day he died, and fans recording weepy, impassioned webcam eulogies, and mourners holding up images of flickering candles on their iPads as they congregate around makeshift shrines—did Jobs’s death engender such planetary regret?

The simple answer is voiced by one of the bereaved, a young boy who looks to be nine or ten, swiveling back and forth in a desk chair in front of his computer: “The thing I’m using now, an iMac, he made,” the boy says. “He made the iMac. He made the Macbook. He made the Macbook Pro. He made the Macbook Air. He made the iPhone. He made the iPod. He’s made the iPod Touch. He’s made everything.”

Yet if the making of popular consumer goods was driving this outpouring of grief, then why hadn’t it happened before? Why didn’t people sob in the streets when George Eastman or Thomas Edison or Alexander Graham Bell died—especially since these men, unlike Steve Jobs, actually invented the cameras, electric lights, and telephones that became the ubiquitous and essential artifacts of modern life?* The difference, suggests the MIT sociologist Sherry Turkle, is that people’s feelings about Steve Jobs had less to do with the man, and less to do with the products themselves, and everything to do with the relationship between those products and their owners, a relationship so immediate and elemental that it elided the boundaries between them. “Jobs was making the computer an extension of yourself,” Turkle tells Gibney. “It wasn’t just for you, it was you.”

In Gibney’s film, Andy Grignon, the iPhone senior manager from 2005 to 2007, observes that

Apple is a business. And we’ve somehow attached this emotion [of love, devotion, and a sense of higher purpose] to a business which is just there to make money for its shareholders. That’s all it is, nothing more. Creating that association is probably one of Steve’s greatest accomplishments.

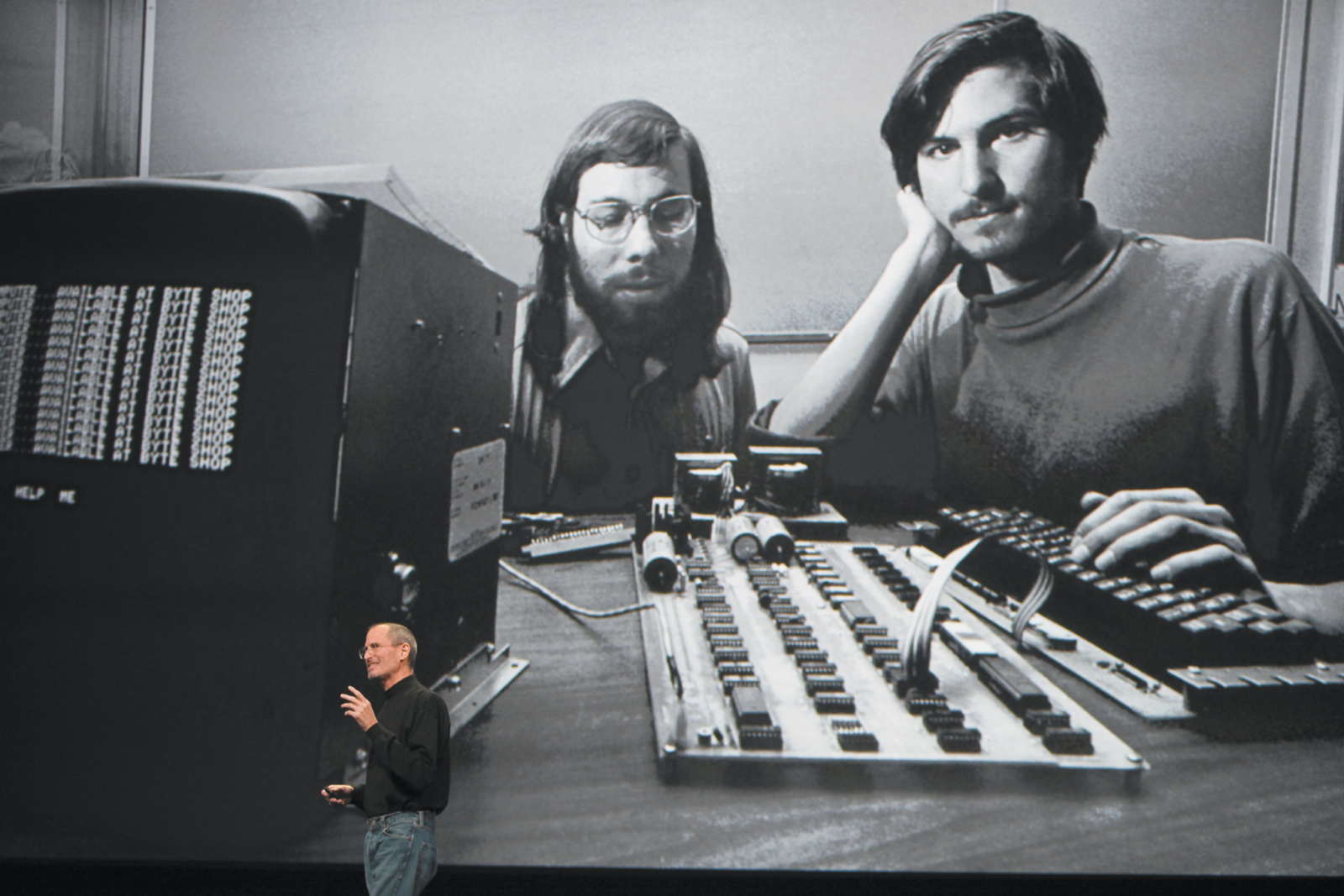

Jobs was a consummate showman. It’s no accident that Sorkin tells his story of Jobs through product launches. These were theatrical events—performances—where Jobs made sure to put himself on display as much as he did whatever new thing he was touting. “Steve was P.T. Barnum incarnate,” says Lee Clow, the advertising executive with whom he collaborated closely. “He loved the ta-da! He was always like, ‘I want you to see the Smallest Man in the World!’ He loved pulling the black velvet cloth off a new product, everything about the showbiz, the marketing, the communications.”

People are drawn to magic. Steve Jobs knew this, and it was one reason why he insisted on secrecy until the moment of unveiling. But Jobs’s obsession with secrecy went beyond his desire to preserve the “a-ha!” moment. Is Steve Jobs “the most successful paranoid in business history?” The Economist asked in 2005, a year that saw Apple sue, among others, a Harvard freshman running a site on the Internet that traded in gossip about Apple and other products that might be in the pipeline. Gibney tells the story of Jason Chen, a Silicon Valley journalist whose home was raided in 2010 by the California Rapid Enforcement Allied Computer Team (REACT), a multi-agency SWAT force, after he published details of an iPhone model then in development. A prototype of the phone had been left in a bar by an Apple employee and then sold to Chen’s employer, the website Gizmodo, for $5,000. Chen had returned the phone to Apple four days before REACT broke down his door and seized computers and other property. Though REACT is a public entity, Apple sits on its steering committee, leaving many wondering if law enforcement was doing Apple’s bidding.

Whether to protect trade secrets, or sustain the magic, or both, Jobs was adamant that Apple products be closed systems that discouraged or prevented tinkering. This was the rationale behind Apple’s lawsuit against people who “jail-broke” their devices in order to use non-Apple, third-party apps—a lawsuit Apple eventually lost. And it can be seen in Jobs’s insistence, from the beginning, on making computers that integrated both software and hardware—unlike, for example, Microsoft, whose software can be found on any number of different kinds of PCs; this has kept Apple computer prices high and clones at bay. An early exchange in Boyle’s movie has Steve Wozniak arguing for a personal computer that could be altered by its owner, against Steve Jobs, who believed passionately in end-to-end control. “Computers aren’t paintings,” Wozniak says, but that is exactly what Jobs considered them to be. The inside of the original Macintosh bears the signatures of its creators.

The magic Jobs was selling went beyond the products his company made: it infused the story he told about himself. Even as a multimillionaire, and then a billionaire, even after selling out friends and collaborators, even after being caught back-dating stock options, even after sending most of Apple’s cash offshore to avoid paying taxes, Jobs sold himself as an outsider, a principled rebel who had taken a stand against the dominant (what he saw as mindless, crass, imperfect) culture. You could, too, he suggested, if you allied yourself with Apple. It was this sleight of hand that allowed consumers to believe that to buy a consumer good was to do good—that it was a way to change the world. “The myths surrounding Apple is for a company that makes phones,” the journalist Joe Nocera tells Gibney. “A phone is not a mythical device. It makes you wonder less about Apple than about us.”

To understand this graphically, one need only view online Eric Pickersgill’s photographic series “Removed,” in which the photographer has excised the phones and other electronic devices that had been in the hands of ordinary people going about their everyday lives, sitting at the kitchen table, cuddling on the couch, and lying in bed, for example. The result is images of people locked in an intimate gaze with the missing device that is so unwavering it shuts out everything else. As Pickersgill explains it:

The work began as I sat in a café one morning. This is what I wrote about my observation:

Family sitting next to me at Illium café in Troy, NY is so disconnected from one another. Not much talking. Father and two daughters have their own phones out. Mom doesn’t have one or chooses to leave it put away. She stares out the window, sad and alone in the company of her closest family. Dad looks up every so often to announce some obscure piece of info he found online. Twice he goes on about a large fish that was caught. No one replies. I am saddened by the use of technology for interaction in exchange for not interacting. This has never happened before and I doubt we have scratched the surface of the social impact of this new experience. Mom has her phone out now.

One assumes that Steve Jobs would have been heartened by these images, for they validate the perception—promoted by, among others, Jobs himself—that he was a visionary, a man who could show people what they wanted and, even more, a man who could show people what they wanted before they even knew what they wanted themselves. As Gibney puts it, “More than a CEO, he positioned himself as an oracle. A man who could tell the future.”

And he could—some of the time. It’s important to remember, though, that when Jobs was forced out of Apple in 1985, the two computer projects into which he had been pouring company resources, the Apple 3 and another computer called the Lisa, were abject failures that nearly shut the place down. A recurring scene in Boyle’s fable is Jobs’s unhappy former partner, the actual inventor of the original Apple computer, Steve Wozniak, begging him to publicly recognize the team that made the Apple 2, the machine that kept the company afloat while Jobs pursued these misadventures, and Jobs scornfully blowing him off.

Jobs’s subsequent venture after he left Apple, a workstation computer aimed at researchers and academics, appropriately called the NeXT, was even more disastrous. The computer was so overpriced and underpowered that few were sold. Boyle shows Jobs obsessing over the precise dimensions of the black plastic cube that housed the NeXT, rather than on the computer’s actual deficiencies, just as Jobs had obsessed over the minute gradations of beige for the Apple 1. Neither story is apocryphal, and both have been used over the years to illustrate, for better and for worse, Jobs’s preternatural attention to detail. (Jobs also spent $100,000 for the NeXT logo.)

Sorkin’s screenplay claims that the failure of the NeXT computer was calculated—that it was designed to catapult Jobs back into the Apple orbit. Fiction allows such inventions, but as the business journalists Brent Schlender and Rick Tetzeli point out in their semipersonal recounting, Becoming Steve Jobs, “There was no hiding NeXT’s failure, and there was no hiding the fact that NeXT’s failure was primarily Steve’s doing.”

Still, Jobs did use the NeXT’s surviving asset, its software division, as the wedge in the door that enabled him to get back inside his old company a decade after he’d been pushed out. NeXT software, which was developed by Avie Tevanian, a loyal stalwart until Jobs tossed him aside in 2006, became the basis for the intuitive, stable, multitasking operating system used by Mac computers to this day. At the time, though, Apple was in free fall, losing $1 billion a year and on the cusp of bankruptcy. The graphical, icon-based operating system undergirding the Macintosh was no longer powerful or flexible enough to keep up with the demands of its users. Apple needed a new operating system, and Steve Jobs just happened to have one. Or, perhaps more accurately, he had a software engineer—Tevanian—who could rejigger NeXT’s operating system and use it for the Mac, which may have been Jobs’s goal all along. Less than a year after Jobs sold the software to Apple for $429 million and a fuzzily defined advisory position at the company, the Apple CEO was gone, and the board of directors was gone, and Jobs was back in charge.

Jobs’s second act at Apple, which began either in 1996 when he returned to the company as an informal adviser to the CEO or in 1997 when he jockeyed to have the CEO ousted and took the reins himself, propelled him to rock-star status. True, a few years earlier, Inc. magazine named him “Entrepreneur of the Decade,” and despite his failures, he still carried the mantle of prophecy. It was Steve Jobs, after all, who looked at the first home computers, assembled by hobbyists like his buddy Steve Wozniak, and understood the appeal that they would have for people with no interest in building their own, thereby sparking the creation of an entire industry. (Bill Gates saw the same computer kits, realized they would need software to become fully functional, and dropped out of Harvard to write it.) But personal computers, as essential as they had become to just about everyone in the ensuing two decades, were, by the time Jobs returned to Apple, utilitarian appliances. They lacked—to use one of Steve Jobs’s favorite words—“magic.”

Back at his old company, Jobs’s first innovation was to offer an alternative to the rectangular beige box that sat on most desks. This new design, unveiled in 1998, was a translucent blue, oddly shaped chassis through which one could see the guts of the computer. (Other colors were introduced the next year.) It had a recessed handle that suggested portability, despite weighing a solid thirty-eight pounds. This Mac was the first Apple product to be preceded by the letter “i,” signaling that it would not be a solitary one-off, but instead, in a nod to the burgeoning World Wide Web, expected to be networked to the Internet.

And it was a success, with close to two million iMacs sold that first year. As Schlender and Tetzeli tell it, the iMac’s colorful shell was not just meant to challenge the prevailing industry aesthetic but also to emphasize and demonstrate that under Steve Jobs’s leadership, an Apple computer would reflect an owner’s individuality. “The i [in iMac] was personal,” they write, “in that this was ‘my’ computer, and even, perhaps, an expression of who ‘I’ am.”

Jobs was just getting started with the “i” motif. (For a while he even called himself the company’s iCEO.) Apple introduced iMovie in 1999, a clever if clunky video-editing program that enabled users to produce their own films. Then, two years later, after buying a company that made digital jukebox software, Apple launched iTunes, its wildly popular music player. iTunes was cool, but what made it even cooler was the portable music player Apple unveiled that same year, the iPod. There had been portable digital music devices before the iPod, but none of them had its capacity, functionality, or, especially, its masterful design. According to Schlender and Tetzeli,

The breakthrough on the iPod user interface is what ultimately made the product seem so magical and unique. There were plenty of other important software innovations, like the software that enables easy synchronization of the device with a user’s iTunes music collection. But if the team had not cracked the usability problem for navigating a pocket library of hundreds or thousands of tracks, the iPod would never have gotten off the ground.

By 2001, then, Apple’s strategy, which had the company moving beyond the personal computer into personal computing, was underway. Jobs convinced—or, most likely, bullied—music industry executives, who had been spooked by the proliferation of peer-to-peer Internet sites that enabled people to download their products for free, into letting Apple sell individual songs for about a dollar each on iTunes. This, Jobs must have known, set the stage for the dramatic upending of the music business itself. Among other consequences, Apple, and its millions of iTunes users, became the new drivers of taste, influence, and popularity.

Apple’s reach into the music business was fortified two years later when the company began offering a version of iTunes for Microsoft’s Windows operating system, making iTunes (and so the iPod) available to anyone and everyone who owned a personal computer. Providing a unique Apple product to Microsoft, a company Jobs did not respect, and that he had accused in court of stealing key elements of the Mac operating system, only happened, Schlender and Tetzeli suggest, because Jobs’s colleagues persuaded him that once Windows users experienced the elegance of Apple’s software and hardware, they’d see the light and come over from the dark side. In view of Apple’s recent $1 trillion valuation, it looks like they were right.

The iPod, as we all know by now, gave way to the iPhone, the iPod Touch, and the iPad. At the same time, Apple continued to make personal computers, machines that reflected Jobs’s clean, simple aesthetic, brought to fruition by Jony Ive, the company’s head designer. Ive was also responsible for the glass and metal minimalism of Apple’s handheld devices, where form is integral to function. Mobile phones existed before Apple entered the market, and there were even “smart” phones that enabled users to send and receive e-mail and surf the Internet. But there was nothing like the iPhone, with its smooth, bright touch screen, its “apps,” and the multiplicity of things those applications let users do in addition to making phone calls, like listen to music, read books, play chess, and (eventually) take and edit photographs and videos.

Steve Jobs’s hunch that people would want a phone that was actually a powerful pocket computer was heir to his hunch thirty years earlier that individuals would want a computer on their desk. Like that hunch, this one was on the money. And like that hunch, it inspired a new industry—there are now scores of smart phone manufacturers all over the world—and that new industry begot one of the first cottage industries of the twenty-first century: app development. Anyone with a knack for computer programming could build an iPhone game or utility, send it to Apple for vetting, and if it passed muster, sell it in Apple’s app store. These days, the average Apple app developer with four applications in the Apple marketplace earns about $21,000 a year. If someone were writing a history of the “gig economy”—making money by doing a series of small freelance tasks—it might start here.

Alex Gibney begins his movie wondering why Steve Jobs was revered despite being, as Boyle’s hero says of himself, “poorly made.” (In the film, he says this to his first child, whose paternity he denied for many years despite a positive paternity test, and whom he refused to support, even as she and her mother were so poor they had to rely on public assistance.) Gibney pursues the answer vigorously, and while the quest is mostly absorbing, it never gets to where it wants to go because the filmmaker has posed an unanswerable question.

And here is another: With one new book and two new movies about Jobs out this season alone, why this apparently enduring fascination with the man? Even if he is the business genius Schlender and Tetzeli credibly make him out to be, the most telling lesson to be learned from Jobs’s example might be summed up by inverting one of his favorite marketing slogans: Think Indifferent. That is, care only about the product, not the myriad producers, whether factory workers in China or staff members in Cupertino, or colleagues like Wozniak, Kottke, and Tevanian, who had been crucial to Apple’s success.

iPhones and their derivative handheld i-devices have turned Apple from a niche computer manufacturer into a global digital impresario. In the first quarter of 2015, for example, iPhone and iPad sales accounted for 81 percent of the company’s revenue, while computers made up a mere 9 percent. They have also made Apple the richest company in the world. The challenge, now, as the phone and computer markets become saturated, is to come up with must-have products that create demand without the enchantments of Steve Jobs.

This past year saw the launch of the much-anticipated Apple Watch, which failed to generate consumer enthusiasm. Sales dropped 90 percent in the first week and continued to disappoint for the rest of the year. The company also released the iPad Pro, a larger, more powerful iPad, and it, too, did not fare well. There have been rumors of an upcoming Apple car—maybe it’s electric, maybe self-driving, maybe built from the ground up, maybe in partnership with Mercedes-Benz, maybe it will be launched in 2019, maybe that’s too soon—but when the current Apple CEO, Tim Cook, was asked about the car by Stephen Colbert on his show, and again in December by Charlie Rose on 60 Minutes, he was less than forthcoming.

Even so, in the years since Jobs’s death, despite failing to introduce a blockbuster product, and despite its recent drop in share price, the company continues to grow. 2015 was Apple’s most profitable year so far, with revenues of $234 billion. According to financial analysts, this either makes Apple stock a bargain or a bear poised to fall from a tree. So far, no one has created an app that can predict the future.

Apple’s release of Siri, the iPhone’s “virtual assistant,” a day after Jobs’s death, is as good a prognosticator as any that artificial intelligence (AI) and machine learning will be central to Apple’s next generation of products, as they will be for the tech industry more generally. (Artificial intelligence is software that enables a computer to take on human tasks such as responding to spoken language requests or translating from one language to another. Machine learning, which is a kind of AI, entails the use of computer algorithms that learn by doing and rewrite themselves to account for what they’ve learned without human intervention.) A device in which these capabilities are much strengthened would be able to achieve, in real time and in multiple domains, the very thing Steve Jobs sought all along: the ability to give people what they want before they even knew they wanted it.

What this might look like was demonstrated earlier this year, not by Apple but by Google, at its annual developer conference, where it unveiled an early prototype of Now on Tap. What Tap does, essentially, is mine the information on one’s phone and make connections between it. For example, an e-mail from a friend suggesting dinner at a particular restaurant might bring up reviews of that restaurant, directions to it, and a check of your calendar to assess if you are free that evening. If this sounds benign, it may be, but these are early days—the appeal to marketers will be enormous.

Google is miles ahead of Apple with respect to AI and machine learning. This stands to reason, in part, because Google’s core business emanates from its search engine, and search engines generate huge amounts of data. But there is another reason, too, and it loops back to Steve Jobs and the culture of secrecy he instilled at Apple, a culture that prevails. As Tim Cook told Charlie Rose during that 60 Minutes interview, “one of the great things about Apple is that [we] probably have more secrecy here than the CIA.”

This institutional ethos appears to have stymied Apple’s artificial intelligence researchers from collaborating or sharing information with others in the field, crimping AI development and discouraging top researchers from working at Apple. “The really strong people don’t want to go into a closed environment where it’s all secret,” Yoshua Bengio, a professor of computer science at the University of Montreal, told Bloomberg Business in October. “The differentiating factors are, ‘Who are you going to be working with?’ ‘Am I going to stay a part of the scientific community?’ ‘How much freedom will I have?’”

Steve Jobs had an abiding belief in freedom—his own. As Gibney’s documentary, Boyle’s film, and even Schlender and Tetzeli’s otherwise friendly assessment make clear, as much as he wanted to be free of the rules that applied to other people, he wanted to make his own rules that allowed him to superintend others. The people around him had a name for this. They called it Jobs’s “reality distortion field.” And so we are left with one more question as Apple goes it alone on artificial intelligence: Will hubris be the final legacy of Steve Jobs?

* When Bell died, every phone exchange in the United States was shut down for a moment of silence. When Edison died, President Hoover turned off the White House lights for a minute and encouraged others to do so as well.