To the Editors:

Bernard Williams’s review of What Computers Can’t Do [NYR, November 13] saddened me, for the familiar reason that I felt he had missed the central point of my book, but even more because he displays the same gullible belief in computer progress, and the same uninformed ridicule of relevant work in both British and Continental philosophy, that I had hoped to dispel.

It is absurd to try to settle fundamental philosophical differences in a few pages, but I think we can at least settle the empirical question about how “clever” current computers are, and are likely to become.

Williams’s grasp of conditions in the flashy field of AI is both disingenuous and provincial. At the time he was writing that “Artificial Intelligence has gone through a sober process of realizing that human beings are cleverer than is supposed,” Herbert Simon, Dean of American Artificial Intelligencia, in one of his periodic press releases, boasted:

I think you can say that the computer is now showing intuition and the ability to think for itself. Some of us don’t see any principle or reason that would prevent machines from becoming more intelligent than man. [Wall Street Journal, June 28, 1973.]

Moreover, while Williams admonished that in the light of recent progress, rehearsing “boasts and disappointments” is of “decreasing relevance,” English AI was fighting for its existence, thanks to the General Survey of Artificial Intelligence released in March, 1972, by Sir James Lighthill. Lighthill, a distinguished British applied mathematician, evaluating AI at the request of the British Research Council, came to a conclusion diametrically opposed to Williams’s, and in line with mine.

I concluded in 1968 that in spite of optimistic predictions, work in AI was stagnating; what looked like successes were solutions of very specific problems with no possibility of generalization, and there was no hope for the foreseeable future. Lighthill’s conclusion shows that in the past five years nothing has changed.

To sum up…work…during the past twenty-five years is to some extent encouraging about programs written to perform in highly specialized problem domains, when the programming takes very full account of the results of human experience and human intelligence within the relevant domain, but is wholly discouraging about general-purpose programs seeking to mimic the problem-solving aspects of human Central Nervous System activity over a rather wide field. Such a general-purpose program, the coveted long-term goal of AI activity, seems as remote as ever.

Lighthill blames many of the failures on the “combinatorial explosion” which results when one tries to deal with problems involving many variables. But generally he limits himself to the inductive conclusion that since all past optimistic predictions have proved disappointing further optimism is unjustified. On this basis he recommends cutting off all British funds for AI research. However, as long as he can give no better account of the failures than exponential growth of combinations, he is subject to the objection that heuristic programs may soon be successful in pruning this growth tree.

In my book I tried to forestall such objections by pointing out a pattern in AI failures, and by giving reasons why these failures could have been expected at just the points at which they occurred. Williams overlooked this central concern because, naïvely, he let himself be convinced that solid progress in AI was at last being made. Thus, instead of an analysis of continuing failures, he assumed, in spite of my explicit denial, that I was offering a proof of impossibility—a proof which, understandably, he could not find.

What, in fact, emerged from my investigation was a pattern of success in solving problems in which the relevant data was restricted to a few clearly specifiable alternatives, and failure when the problem allowed no such restriction. Thus, in games such as checkers, which computers play at master level, only the color and position of the pieces are relevant, never their size or temperature, etc., while in language translation, generally acknowledged to have been a total failure, it turns out that facts we know about sizes of books and pens, for example, are necessary for disambiguating a sentence like “The book is in the pen.” Indeed, everything that human beings know is potentially relevant to disambiguating some sentence or other. Worse, since one might well understand in a James Bond movie that the book was in the fountain pen rather than, say, the play pen, it turns out that the situation determines the significance of the facts as well as their relevance. Indeed, the situation may even determine what is to count as a fact. It seems there are no neutral facts in terms of which to analyze the situation, and it is this difficulty in formalizing or programming the pragmatic context which poses the ultimate problem for AI.

Whether such a formalization is possible is a vital philosophical question. Traditional philosophers since Socrates have assumed that such an analysis must be possible, whereas recent thinkers such as Wittgenstein and Heidegger have introduced important considerations to suggest that this sort of reduction to elements cannot be carried out. When Cambridge philosophers like Williams are jolted out of their insularity and begin to take these problems seriously, they may tire of dismissing Heidegger as a shepherd of Being, and find in his work, once they trouble to read it, a rigorous analysis of the limits of technology.

Once the technological problem of context has been focused and the philosophical assumptions which hide it have been revealed, the latest success in AI cited indirectly by Williams—Winograd’s program for talking to a computer about a display of cubes, pyramids, etc.—can be seen for what it is. Far from showing “greatly increased sensitivity to context” as Williams was led to believe, the restriction to a “block world” cuts language off from its human context, thus turning the problem of natural language communication into a manageable game.

By contrast, the latest striking failure at MIT is Charniak’s attempt to write a program for understanding children’s stories. Charniak thought, quite reasonably, that asking a computer right off to be as intelligent as a grown-up is too tough a task, so he proposed to write a program for understanding just one episode in a children’s story. But whereas the complex but neatly circumscribed block world was stunningly tractable to programming, the children’s story, opening as it does into the human world of owning, giving, wanting, etc., has so far turned out to be impossible to program. This is just the pattern that the distinction between game and real life developed in my book would lead one to expect.

Williams’s rejoinder that one might program a “fragment of human intelligence,” misses just this point. Intelligence is not a piecemeal affair. A computer no matter how clever at answering questions about blocks, but which has no concept of giving, needing, wanting, etc., would be even less intelligent than a calculating idiot. And such psychological notions gear so totally into the rest of human life that it may well be impossible to spell them out in the explicit, context-free way necessary to program them into a digital computer.

Thus, even though merely cataloguing the failures of AI up to the present does not prove the impossibility of intelligent machines, optimists such as Williams must show how the human context, seemingly essential to human intelligence, can either be ignored or programmed, before they can discount my claim that computers can’t be clever.

Hubert L. Dreyfus

University of California at Berkeley

To the Editors:

…I shall confine myself to illustrating how Williams’s [review] still depends on the Platonic assumption, and sketching (hopefully suggestively) what may be wrong with that. He notes (p. 40) that Dreyfus does not argue against the possibility of an intelligent artifact (or artificial organism), but only that such an artifact could not be a digital computer. He goes on to claim, however, that this lets the cat out of the bag: “For if we can gain enough physical knowledge to construct an artificial organism…then we understand it” (emphasis added). From that, and the view that it is “the structure of a system” rather than its material make up which determines the kinds of behavior of which it is capable, Williams concludes that “even if it were an analogue system that we had uncovered, it is unclear why in principle it should be impossible to model it in a digital machine.”

The assumption is betrayed in the words “construct,” “structure” and “system.” What justifies the leap from “produce an artifact” to “construct a system”? The supposition that to understand something is to uncover its systematic structure, is precisely the point at issue.

But what about the nervous system? Isn’t that a structure of neurons, ganglia, synapses, and all the rest? Well, to be sure, there are cell walls and various other demarcations of tissue, but who’s to say whether these represent functional divisions as well as metabolic necessities? It’s far from obvious that individual cells, or any other identifiable lumps of brain, can be regarded as system components with specifiable operations and specifiable interactions from which “complex” human behavior is “built up.” The question is not whether the functioning of the components in the neural structure is analog or digital, but whether the brain is a structure of functionally isolable components at all (cf., Dreyfus, pp. 72-74 and 233-234). Our Platonic, mechanist prejudice is that it must be; but why?

Intuitively, one imagines the (functionally pertinent) states of an analog device to vary continuously over some range, as opposed to those of digital devices, which are distinct and well differentiated. So perhaps the suggestion that the brain has no distinct and well-differentiated (functionally pertinent) components, can be expressed by saying that it is analog in a kind of second order or organizational way, “parts” and “processes” blending into one another in myriad and imperceptible ways.

Now, it may well be the case that second order analog devices are not in general digitally simulable, even in principle. By the same token, they may not lend themselves to a scientific treatment in terms of laws and distinct parameters. Early in the seventeenth century, physics, and thence the whole of science, was revolutionized by a conceptual shift from the qualitative logic of properties to the quantitative mathematics of measurables. Mr. Dreyfus’s deep philosophical point, the one which seems to have escaped Mr. Williams, is that a science of the mind may in turn require a new conceptual revolution. So far, it is a negative and conjectural position; for its positive fulfillment, we await our Galileo.

John Haugeland

Berkeley, California

Bernard Williams replies:

Dreyfus casts me as an “optimist” about AI; but my review expressed no particular optimism about AI, and the question whether it was promising enough to spend scarce research money on, which was Sir James Lighthill’s question, I did not raise at all. At the empirical level, practically all I said was that AI had learned some lessons from old errors which Dreyfus castigates. My concern was with what seems to me the more interesting part of the issue, and of the book: Dreyfus’s general philosophical reasons for being against AI.

I represented Dreyfus as trying to show that the aims of AI were, for philosophical reasons, unattainable. He points out that he explicitly denied any attempt at proof. That is true, and I apologize for not having made it clear. However, granted that, it is very unclear how much he was trying to show; so unclear, in fact, as to leave a doubt whether he remains consistent to his denial. On page 136, for instance, in an admittedly obscure passage, he seems to say that the “only alternative” to something agreed to be impossible is to understand facts “as a product of the situation”—and that is something which he seems to regard (p. 116 and elsewhere) as implying that computer programs cannot be applied.

But even if the role of philosophical reasoning in the book is consistently more modest than I implied, this does not weaken my objections: for my objections were not just that the philosophical arguments failed to be demonstrative, but that they were in good part not of a type to show anything relevant at all.

One thing which, I argued, Dreyfus’s type of reasoning could not show is something which he once more asserts: that there could be no such thing as a fragment of human intelligence. He seems to think that I have missed his point here; on the contrary, I see it very clearly, but I think, and have argued, that he gives no convincing reasons for it. In this area, he once more ignores a relevant issue which I raised, the intelligent activity of simpler organisms. A large proportion of his arguments deal with the supposed unity of the cultural world of humans. In what form do these arguments apply to other intelligent organisms, and hence to the simulation of their intelligence?

Haugeland’s remarks, on the other hand, about a possible new order of complexity in the functioning of nervous systems are presumably not confined to the human case: the possible new type of complexity would apply to most living things. Put in such general terms, it would be a rash person who excluded such a possibility a priori. But Haugeland’s account of it does not seem to help much with the issue I raised against Dreyfus and which Haugeland has taken up: whether, if that possibility obtained, we should be able to make an artificial organism—not “construct,” since he objects to that, but make, as opposed (for instance) to rear or grow. Could we, on Haugeland’s conception of the possibility he mentions, have an understanding of intelligent organisms which enabled us to make them? How much scientific understanding, at what level, of intelligent organisms do Dreyfus’s ideas leave room for? I was left in the dark about that by Dreyfus, and I get no more light on it from Haugeland.

It may be that AI is on the wrong track, and its assumptions absurdly simplistic. But if it is, we shall not come to know it, nor be helped to get clear about these things, through the kind of philosophy Dreyfus is trying to bring to bear. I thought I gave a few reasons for that claim.

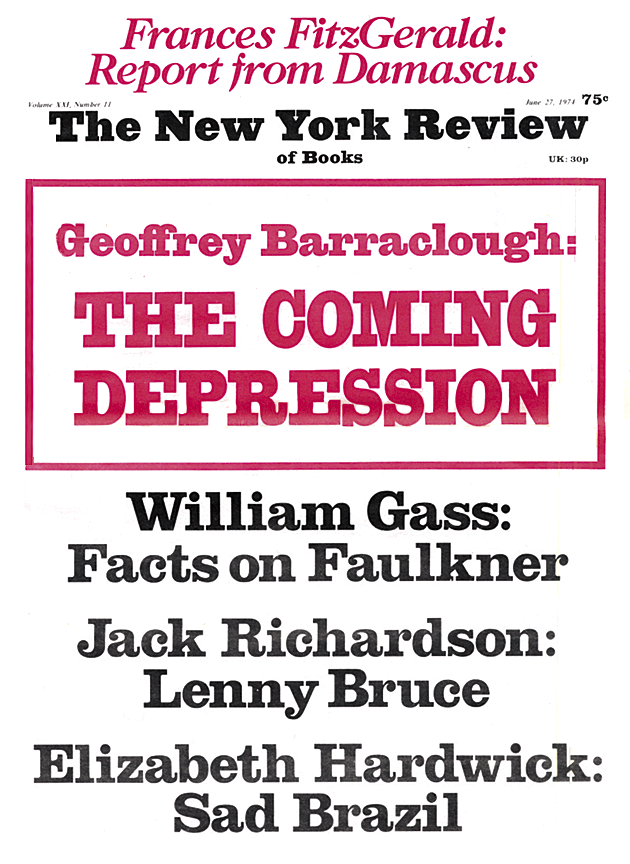

June 27, 1974